    import tensorflow as tf
    import numpy as np
    import matplotlib.pyplot as plt


    def add_layer(inputs, in_size, out_size, activation_function=None):
        # Nested name scopes group the ops into collapsible nodes in the TensorBoard graph.
        with tf.name_scope('layer'):
            with tf.name_scope('Weights'):
                Weights = tf.Variable(tf.random_normal([in_size, out_size]), name='w')
            with tf.name_scope('biases'):
                biases = tf.Variable(tf.zeros([1, out_size]) + 0.1, name='b')
            with tf.name_scope('Wx_plus_b'):
                Wx_plus_b = tf.add(tf.matmul(inputs, Weights), biases)
            if activation_function is None:
                outputs = Wx_plus_b
            else:
                outputs = activation_function(Wx_plus_b)
            return outputs


    # Training data: y = x^2 - 0.5 plus Gaussian noise
    x_data = np.linspace(-1, 1, 300)[:, np.newaxis]
    noise = np.random.normal(0, 0.05, x_data.shape)  # noise
    y_data = np.square(x_data) - 0.5 + noise

    with tf.name_scope('inputs'):
        xs = tf.placeholder(tf.float32, [None, 1], name='x_input')
        ys = tf.placeholder(tf.float32, [None, 1], name='y_input')

    # add hidden layer
    l1 = add_layer(xs, 1, 10, activation_function=tf.nn.relu)
    # add output layer
    prediction = add_layer(l1, 10, 1, activation_function=None)

    with tf.name_scope('loss'):
        loss = tf.reduce_mean(tf.reduce_sum(tf.square(ys - prediction), reduction_indices=[1]))
    with tf.name_scope('train'):
        train_step = tf.train.GradientDescentOptimizer(0.1).minimize(loss)

    init = tf.global_variables_initializer()
    sess = tf.Session()
    merged = tf.summary.merge_all()
    # Keep the log path as simple as possible; with more complex paths the
    # files were sometimes not found (cause unknown).
    writer = tf.summary.FileWriter("D:/logs/", sess.graph)
    sess.run(init)

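After running the script, open a command line.

First change to the directory that contains the logs folder, and only then enter the command below; otherwise TensorBoard will not find the log files.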

Use the same directory that was passed to tf.summary.FileWriter in the script:

    tensorboard --logdir=D:/logs/

Result:

Open a browser and enter the URL that TensorBoard printed on the command line.

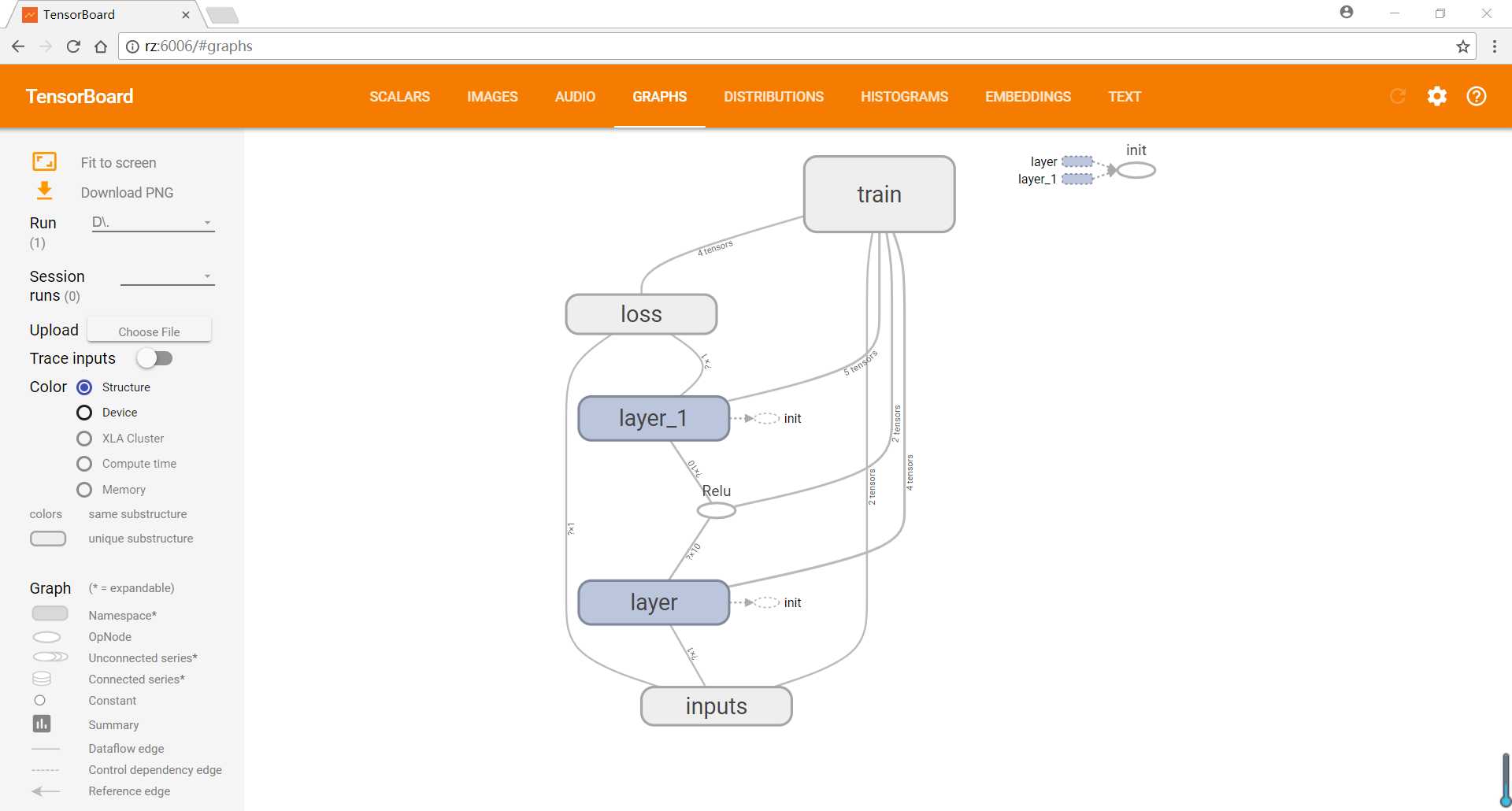
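
If TensorBoard starts but shows no graph, one quick check is whether the log directory actually contains event files. The sketch below is only an illustration: the helper names `find_event_files` and `describe_logdir` are hypothetical, and it assumes the writer produced files whose names begin with `events.out.tfevents.`, which is the naming `tf.summary.FileWriter` normally uses. It needs only the standard library.

```python
import glob
import os


def find_event_files(logdir):
    """Return the event files found directly under logdir, sorted by name."""
    # Assumption: event files are named "events.out.tfevents.<timestamp>.<host>".
    pattern = os.path.join(logdir, "events.out.tfevents.*")
    return sorted(glob.glob(pattern))


def describe_logdir(logdir):
    """Build a short status message for the given log directory."""
    absolute = os.path.abspath(logdir)
    if not os.path.isdir(absolute):
        return "missing directory: " + absolute
    files = find_event_files(absolute)
    if not files:
        return "no event files in: " + absolute
    return "%d event file(s) in: %s" % (len(files), absolute)


if __name__ == "__main__":
    # "D:/logs/" is the path used in the script above; adjust it to your own.
    print(describe_logdir("D:/logs/"))
```

If the message reports a missing directory or no event files, the path given to `--logdir` probably differs from the one the script wrote to.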

TensorFlow Basics 9: Displaying the Network Structure in TensorBoard

Original article: http://www.cnblogs.com/renzhong/p/7358124.html