Step 0: Import the required libraries

    import tensorflow as tf
    import numpy as np
    import os

Step 1: Get the image file names and their labels

    # You need to change this to your data directory
    train_dir = 'E:\\data\\Dog_Cat\\train\\'  # Windows
    # train_dir = '/home/kevin/tensorflow/cats_vs_dogs/data/train/'  # Linux

    def get_files(file_dir):
        '''
        Args:
            file_dir: file directory
        Returns:
            list of images and labels
        '''
        cats = []
        label_cats = []
        dogs = []
        label_dogs = []
        for file in os.listdir(file_dir):
            name = file.split(sep='.')
            if name[0] == 'cat':
                cats.append(file_dir + file)
                label_cats.append(0)
            else:
                dogs.append(file_dir + file)
                label_dogs.append(1)
        print('There are %d cats\nThere are %d dogs' % (len(cats), len(dogs)))

        image_list = np.hstack((cats, dogs))  # merge the data
        label_list = np.hstack((label_cats, label_dogs))

        # Transpose, then shuffle randomly
        temp = np.array([image_list, label_list])  # build a 2-D array
        temp = temp.transpose()                    # transpose
        np.random.shuffle(temp)                    # shuffle the rows randomly

        image_list = list(temp[:, 0])  # column 0: image paths
        label_list = list(temp[:, 1])  # column 1: image labels
        label_list = [int(i) for i in label_list]  # convert back to int

        return image_list, label_list
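The labelling rule in get_files relies on file names such as cat.0.jpg and dog.0.jpg, and its else branch labels every file that does not start with cat as a dog. Here is a minimal sketch of a stricter rule, assuming that naming scheme; label_from_filename is a hypothetical helper and not part of the original code:

```python
import os

def label_from_filename(path):
    """Return 0 for 'cat.*' files, 1 for 'dog.*' files, None for anything else."""
    prefix = os.path.basename(path).split('.')[0]
    return {'cat': 0, 'dog': 1}.get(prefix)

print(label_from_filename('cat.0.jpg'))      # 0
print(label_from_filename('dog.12499.jpg'))  # 1
print(label_from_filename('Thumbs.db'))      # None
```

Files that return None could simply be skipped, so stray files in the training directory do not end up labelled as dogs.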

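Function notes:

1) np.hstack

Prototype: numpy.hstack(tup)

tup is a sequence of arrays: a Python tuple or list whose elements are lists or NumPy arrays. The function stacks the elements of tup horizontally (column-wise); for 1-D inputs this simply joins them end to end.

Example:

    a = [1, 2, 3]
    b = [4, 5, 6]
    print(np.hstack((a, b)))

Output: [1 2 3 4 5 6]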
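To make "horizontally" concrete, here is a small sketch that contrasts 2-D and 1-D inputs. The file names are made up for illustration:

```python
import numpy as np

# 2-D inputs: the columns are placed side by side
a = np.array([[1], [2], [3]])   # shape (3, 1)
b = np.array([[4], [5], [6]])   # shape (3, 1)
stacked = np.hstack((a, b))     # shape (3, 2)
print(stacked)

# 1-D inputs (the case used in get_files): plain concatenation
cats = ['cat.0.jpg', 'cat.1.jpg']
dogs = ['dog.0.jpg']
image_list = np.hstack((cats, dogs))
print(image_list.shape)         # (3,)
```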

2) transpose()

Prototype: numpy.transpose(a, axes=None)

Purpose: transposes the input array and returns the transposed array.

Example:

    >>> x = np.arange(4).reshape((2, 2))
    >>> x
    array([[0, 1],
           [2, 3]])
    >>> np.transpose(x)
    array([[0, 2],
           [1, 3]])

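Note: np.array stores the paths and labels in a single array, so the labels are converted to strings. That is why get_files converts them back to int at the end. For example:

    image_list = ["D:\\1.jpg", "D:\\2.jpg", "D:\\3.jpg"]
    label_list = [1, 0, 1]
    temp = np.array([image_list, label_list])
    print(temp)
    # Output:
    # [['D:\\1.jpg' 'D:\\2.jpg' 'D:\\3.jpg']
    #  ['1' '0' '1']]

    temp = temp.transpose()
    print(temp)
    # Output:
    # [['D:\\1.jpg' '1']
    #  ['D:\\2.jpg' '0']
    #  ['D:\\3.jpg' '1']]

    np.random.shuffle(temp)
    print(temp)
    # Output (one possible ordering):
    # [['D:\\2.jpg' '0']
    #  ['D:\\1.jpg' '1']
    #  ['D:\\3.jpg' '1']]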
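An alternative that avoids the string round trip is to shuffle both arrays with one shared random permutation. This is only a sketch of that idea, not the approach used in the original code:

```python
import numpy as np

image_list = np.array(["D:\\1.jpg", "D:\\2.jpg", "D:\\3.jpg"])
label_list = np.array([1, 0, 1])

# One random ordering of the indices, applied to both arrays,
# keeps every path paired with its own label.
order = np.random.permutation(len(image_list))
shuffled_images = image_list[order]
shuffled_labels = label_list[order]   # still an integer array

print(shuffled_images)
print(shuffled_labels)
```

Because the labels never pass through a string array, the final int conversion is not needed with this variant.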

Step 2: Generate batches of images and labels

    def get_batch(image, label, image_W, image_H, batch_size, capacity):
        '''
        Args:
            image: list type
            label: list type
            image_W: image width
            image_H: image height
            batch_size: batch size
            capacity: the maximum elements in queue
        Returns:
            image_batch: 4D tensor [batch_size, height, width, 3], dtype=tf.float32
            label_batch: 1D tensor [batch_size], dtype=tf.int32
        '''
        # Convert the Python lists to TensorFlow tensors
        image = tf.cast(image, tf.string)
        label = tf.cast(label, tf.int32)
        # image = tf.convert_to_tensor(image_list, dtype=tf.string)
        # label = tf.convert_to_tensor(label_list, dtype=tf.int32)

        # Make an input queue
        input_queue = tf.train.slice_input_producer([image, label])

        label = input_queue[1]
        image_contents = tf.read_file(input_queue[0])             # read the image file
        image = tf.image.decode_jpeg(image_contents, channels=3)  # decode the JPEG image

        ######################################
        # data augmentation should go here
        ######################################

        # Crop or pad to the target size (the function takes height first, then width)
        image = tf.image.resize_image_with_crop_or_pad(image, image_H, image_W)

        # To view the generated batches as normal images, comment out the
        # standardization line below and the final
        # image_batch = tf.cast(image_batch, tf.float32) line.
        # Do NOT comment them out when training!

        # Standardize the data
        image = tf.image.per_image_standardization(image)

        # Create batches of tensors
        image_batch, label_batch = tf.train.batch([image, label],
                                                  batch_size=batch_size,
                                                  num_threads=2,       # number of threads
                                                  capacity=capacity)   # max elements in the queue

        # You can also use shuffle_batch
        # image_batch, label_batch = tf.train.shuffle_batch([image, label],
        #                                                   batch_size=BATCH_SIZE,
        #                                                   num_threads=64,
        #                                                   capacity=CAPACITY,
        #                                                   min_after_dequeue=CAPACITY-1)

        # print(label_batch.shape)
        label_batch = tf.reshape(label_batch, [batch_size])  # possibly redundant?
        # print(label_batch.shape)
        image_batch = tf.cast(image_batch, tf.float32)

        return image_batch, label_batch

Function notes:

1) tf.cast

    cast(
        x,
        dtype,
        name=None
    )

Converts x to a tensor of type dtype.

Example:

    x = tf.constant([1.8, 2.2], dtype=tf.float32)
    tf.cast(x, tf.int32)  # [1, 2], dtype=tf.int32
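In the example, 1.8 becomes 1: the fractional part is dropped, not rounded. The sketch below shows the same effect without a TensorFlow session. It uses NumPy's astype as a stand-in for tf.cast, which is an assumption made for illustration only:

```python
import numpy as np

x = np.array([1.8, 2.2, -1.8], dtype=np.float32)

truncated = x.astype(np.int32)          # drops the fractional part
rounded = np.rint(x).astype(np.int32)   # rounds first, then converts

print(truncated)  # [ 1  2 -1]
print(rounded)    # [ 2  2 -2]
```

If rounding is what you want, round explicitly before casting.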

2) tf.train.slice_input_producer

    slice_input_producer(
        tensor_list,
        num_epochs=None,
        shuffle=True,
        seed=None,
        capacity=32,
        shared_name=None,
        name=None
    )

Produces a slice of each Tensor in tensor_list.

Implemented using a Queue: a QueueRunner for the Queue is added to the current Graph's QUEUE_RUNNER collection.

Returns:

A list of tensors, one for each element of tensor_list. If the tensor in tensor_list has shape [N, a, b, ..., z], then the corresponding output tensor will have shape [a, b, ..., z].

Put simply, it creates a queue with capacity capacity, and each dequeue yields one (image path, label) pair.
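As a mental model only, the producer can be pictured as a generator that hands out one slice at a time and reshuffles at the start of every epoch. The sketch below is plain Python and is not how TensorFlow implements it; slice_producer is a hypothetical name:

```python
import random

def slice_producer(lists, num_epochs=1, shuffle=True, seed=None):
    """Yield one tuple (lists[0][i], lists[1][i], ...) at a time."""
    n = len(lists[0])
    if any(len(values) != n for values in lists):
        raise ValueError('all lists must have the same length')
    rng = random.Random(seed)
    for _ in range(num_epochs):
        order = list(range(n))
        if shuffle:
            rng.shuffle(order)   # new order for every epoch
        for i in order:
            yield tuple(values[i] for values in lists)

images = ['cat.0.jpg', 'dog.0.jpg', 'cat.1.jpg']
labels = [0, 1, 0]
slices = list(slice_producer([images, labels], num_epochs=2, seed=0))
print(len(slices))   # 6: three slices per epoch, two epochs
print(slices[0])     # one (path, label) pair
```

The real function differs in one important way: with num_epochs=None it never stops, and the slices are filled into a queue by background threads.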

3) tf.read_file

Purpose: reads the entire contents of the input file and outputs them.

4) tf.image.decode_jpeg

Purpose: decodes a JPEG-encoded image into a tensor of type uint8.

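5) tf.image.resize_image_with_crop_or_pad

    resize_image_with_crop_or_pad(
        image,
        target_height,
        target_width
    )

Resizes the image to target_height by target_width. Along a dimension where the original image is larger, it is cropped around the center. Along a dimension where the original image is smaller, it is padded with zeros on both sides until it reaches the target size. Note that the image is not scaled.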
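The crop-or-pad behavior described above can be sketched in NumPy as follows. This is an illustrative approximation, and the way an odd number of extra rows or columns is split between the two sides is an assumption here. crop_or_pad is a hypothetical helper:

```python
import numpy as np

def crop_or_pad(image, target_height, target_width):
    """Center-crop dimensions that are too large, zero-pad those that are too small."""
    h, w = image.shape[:2]

    # Center crop (no effect on a dimension that is already small enough)
    top = max((h - target_height) // 2, 0)
    left = max((w - target_width) // 2, 0)
    image = image[top:top + min(h, target_height),
                  left:left + min(w, target_width)]

    # Zero-pad whatever is still missing, split across both sides
    h, w = image.shape[:2]
    pad_top = (target_height - h) // 2
    pad_left = (target_width - w) // 2
    pad = [(pad_top, target_height - h - pad_top),
           (pad_left, target_width - w - pad_left)]
    pad += [(0, 0)] * (image.ndim - 2)   # leave the channel axis untouched
    return np.pad(image, pad, mode='constant')

image = np.ones((4, 6, 3), dtype=np.uint8)   # height 4, width 6
result = crop_or_pad(image, 6, 4)            # target: height 6, width 4
print(result.shape)                          # (6, 4, 3)
```

In this example the width is cropped from 6 to 4 and the height is padded from 4 to 6, so the first and last rows of the result are all zeros.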

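6) tf.image.per_image_standardization

Linearly scales the image so that it has zero mean and unit norm.

That is, it computes (x - mean) / adjusted_stddev, where mean is the average of all pixel values in the image and adjusted_stddev = max(stddev, 1.0/sqrt(image.NumElements())).

stddev is the standard deviation of all pixel values in the image. The max guards against division by zero when stddev is 0, as with a uniform image.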
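The formula above translates almost line by line into NumPy. This is a sketch for checking the arithmetic, not the TensorFlow implementation:

```python
import numpy as np

def per_image_standardization(image):
    """Compute (x - mean) / max(stddev, 1 / sqrt(number of elements))."""
    image = np.asarray(image, dtype=np.float32)
    mean = image.mean()
    stddev = image.std()
    adjusted_stddev = max(stddev, 1.0 / np.sqrt(image.size))
    return (image - mean) / adjusted_stddev

random_image = np.random.RandomState(0).randint(0, 256, size=(8, 8, 3))
out = per_image_standardization(random_image)
print(out.mean(), out.std())   # close to 0 and 1

# A uniform image has stddev 0; the max() keeps the division safe
flat = per_image_standardization(np.full((4, 4, 3), 7))
print(np.abs(flat).max())      # 0.0
```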

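7) tf.train.batch

    batch(
        tensors,
        batch_size,
        num_threads=1,
        capacity=32,
        enqueue_many=False,
        shapes=None,
        dynamic_pad=False,
        allow_smaller_final_batch=False,
        shared_name=None,
        name=None
    )

Purpose: creates batches of the tensors in tensors, i.e. it collects batch_size elements from the input tensors at a time.

This function is implemented with a queue, so the queue runners must be started (see tf.train.start_queue_runners) before a batch can be fetched.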
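The queue-based idea can be sketched with the Python standard library: a background thread fills a bounded queue, and the consumer takes batch_size elements at a time. This is only a simplified model of the mechanism; start_producer and dequeue_batch are hypothetical names:

```python
import queue
import threading

def start_producer(items, capacity):
    """Fill a bounded queue from a background thread, like a queue runner."""
    q = queue.Queue(maxsize=capacity)

    def fill():
        for item in items:
            q.put(item)   # blocks while the queue already holds `capacity` items

    threading.Thread(target=fill, daemon=True).start()
    return q

def dequeue_batch(q, batch_size):
    """Take batch_size elements from the queue, waiting if necessary."""
    return [q.get() for _ in range(batch_size)]

q = start_producer(range(10), capacity=4)
first = dequeue_batch(q, 4)
second = dequeue_batch(q, 4)
print(first)    # [0, 1, 2, 3]
print(second)   # [4, 5, 6, 7]
```

If the producer thread were never started, the first q.get() would block forever. That matches what happens in TensorFlow when sess.run is called on a batch before the queue runners are started.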

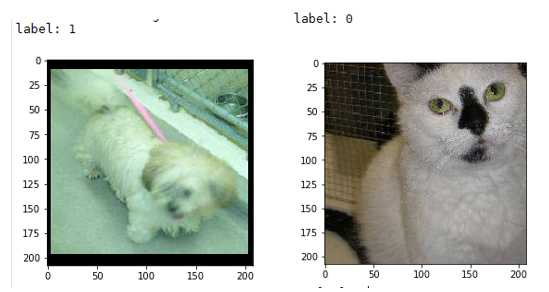

Step 3: Test. Check that the two functions written above work correctly.

    #%% TEST
    # To test the generated batches of images
    # When training the model, DO comment out the following code

    import matplotlib.pyplot as plt

    BATCH_SIZE = 4
    CAPACITY = 256
    IMG_W = 208
    IMG_H = 208

    # train_dir = '/home/kevin/tensorflow/cats_vs_dogs/data/train/'
    train_dir = 'E:\\data\\Dog_Cat\\train\\'

    image_list, label_list = get_files(train_dir)
    image_batch, label_batch = get_batch(image_list, label_list, IMG_W, IMG_H, BATCH_SIZE, CAPACITY)

    with tf.Session() as sess:
        i = 0
        coord = tf.train.Coordinator()
        threads = tf.train.start_queue_runners(coord=coord)

        try:
            while not coord.should_stop() and i < 1:
                img, label = sess.run([image_batch, label_batch])

                # just test one batch
                for j in range(BATCH_SIZE):
                    print('label: %d' % label[j])
                    plt.imshow(img[j, :, :, :])
                    plt.show()
                i += 1

        except tf.errors.OutOfRangeError:
            print('done!')
        finally:
            coord.request_stop()
        coord.join(threads)
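plt.imshow expects float RGB values in the range [0, 1], while standardized images have zero mean and contain negative values, so they look wrong when displayed directly. Instead of commenting out the standardization, one option is to rescale each image just for display. This is a sketch with a hypothetical helper, to_displayable; the colors will not match the original photo exactly:

```python
import numpy as np

def to_displayable(img):
    """Min-max rescale an image to [0, 1] so that plt.imshow can show it."""
    img = np.asarray(img, dtype=np.float32)
    span = img.max() - img.min()
    if span == 0:
        return np.zeros_like(img)   # uniform image: nothing to rescale
    return (img - img.min()) / span

standardized = np.random.RandomState(0).randn(208, 208, 3).astype(np.float32)
shown = to_displayable(standardized)
print(shown.min(), shown.max())   # 0.0 1.0
```

In the test loop this would be used as plt.imshow(to_displayable(img[j])).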

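Function notes:

1) tf.train.Coordinator()

Purpose: a coordinator for threads.

Any thread can call coord.request_stop() to ask all threads to stop. For this to work, every thread must check coord.should_stop() regularly. As soon as coord.request_stop() has been called, coord.should_stop() returns True.

A typical thread running with a coordinator therefore looks like this:

    while not coord.should_stop():
        ...do some work...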
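The pattern can be reproduced with the standard library to see how the pieces fit together. MiniCoordinator below is a simplified, hypothetical stand-in built on threading.Event, not the TensorFlow class:

```python
import threading

class MiniCoordinator:
    """A tiny stand-in for tf.train.Coordinator, for illustration only."""

    def __init__(self):
        self._stop_event = threading.Event()

    def request_stop(self):
        self._stop_event.set()

    def should_stop(self):
        return self._stop_event.is_set()

    def join(self, threads):
        for t in threads:
            t.join()

def worker(coord, results, limit):
    # Each thread checks should_stop() on every iteration
    while not coord.should_stop():
        results.append(1)             # ...do some work...
        if len(results) >= limit:
            coord.request_stop()      # any thread may ask all threads to stop

coord = MiniCoordinator()
results = []
threads = [threading.Thread(target=worker, args=(coord, results, 100))
           for _ in range(3)]
for t in threads:
    t.start()
coord.join(threads)
print(coord.should_stop(), len(results) >= 100)   # True True
```

One thread reaching the limit is enough to stop all three, which is the same role coord.request_stop() plays in the finally block of the test code.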

2) tf.train.start_queue_runners

Purpose: starts all queue runners collected in the graph and returns the list of threads it created.

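Final result:

Notes:

The code comes from https://github.com/kevin28520/My-TensorFlow-tutorials, with minor modifications.

The function descriptions mainly follow the official TensorFlow documentation: https://www.tensorflow.org/versions/master/api_docs/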


Original post: http://www.cnblogs.com/hejunlin1992/p/7609231.html