Learning Visual Question Answering by Bootstrapping Hard Attention

Google DeepMind ECCV-2018

2018-08-05 19:24:44

Paper:https://arxiv.org/abs/1808.00300

Introduction:

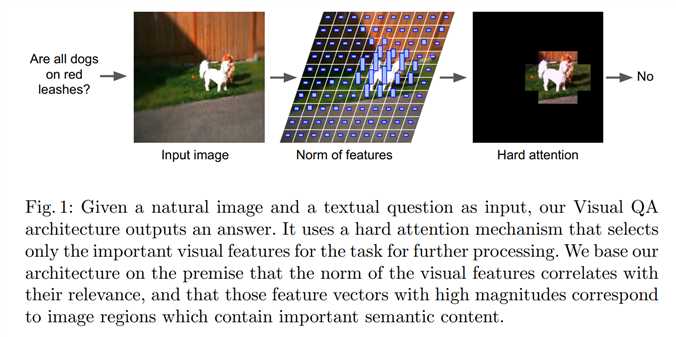

本文尝试仅仅用 hard attention 的方法来抠出最有用的 feature,进行 VQA 任务的学习。

Soft Attention:

Existing attention models [7,8,9,10] are predominantly based on soft attention, in which all information is adaptively re-weighted before being aggregated. This can improve accuracy by isolating important information and avoiding interference from unimportant information.

Hard Attention:

--

论文阅读:Learning Visual Question Answering by Bootstrapping Hard Attention

原文:https://www.cnblogs.com/wangxiaocvpr/p/9427034.html