http://foaa.de/old-blog/2010/10/intro-to-pacemaker-on-heartbeat/trackback/index.html

With squeeze around the corner, it’s time to reconsider everything you do with Debian, once again. Of course I am aware that pacemaker has been available since 2008, but since I stick to Debian stable, my first contact with pacemaker came with squeeze. Also, I was not really aware of what I was missing, because my simple setup just worked. With my new database server already squeezing, and my virtual army of test installations, I’ve spent about 50 hours or so with 6.0 and can say: nicely done, again. However, sometimes there is work before pleasure, and that’s the case with heartbeat 3.0.2 in squeeze (lenny: 2.1.3). This article will not cover pacemaker’s abilities in depth; it aims to help with the very first steps into the cold water and/or give you an impression of what you can expect before you decide to change everything.

This article targets heartbeat 2 users who are already familiar with the matter. However, here is a short, rough, strongly oversimplified summary of clusters in general and heartbeat 2 in particular for readers totally new to clustering:

------------------------------------------------------------------------------

Active/active: Traffic intended for the failed node is either passed onto an existing node or load balanced across the remaining nodes. This is usually only possible when the nodes utilize a homogeneous software configuration.

Active/passive: Provides a fully redundant instance of each node, which is only brought online when its associated primary node fails. This configuration typically requires the most extra hardware.

N+1: Provides a single extra node that is brought online to take over the role of the node that has failed. In the case of heterogeneous software configuration on each primary node, the extra node must be universally capable of assuming any of the roles of the primary nodes it is responsible for. This normally refers to clusters which have multiple services running simultaneously; in the single service case, this degenerates to active/passive.

N+M: In cases where a single cluster is managing many services, having only one dedicated failover node may not offer sufficient redundancy. In such cases, more than one (M) standby servers are included and available. The number of standby servers is a tradeoff between cost and reliability requirements.

N-to-1: Allows the failover standby node to become the active one temporarily, until the original node can be restored or brought back online, at which point the services or instances must be failed-back to it in order to restore high availability.

N-to-N: A combination of active/active and N+M clusters. N-to-N clusters redistribute the services, instances or connections from the failed node among the remaining active nodes, thus eliminating (as with active/active) the need for a “standby” node, but introducing a need for extra capacity on all active nodes.

------------------------------------------------------------------------------

Before heartbeat 3.0 (or 2.9.9), heartbeat was “standalone” and self-sufficient, and could be installed and configured in a matter of minutes. For many installations that was enough, and if you wanted a little bit more (e.g. monitoring services on a higher OSI layer), you could easily add mon and you were fine. Starting with 2.9.9, the linux-ha team strongly discourages using heartbeat without pacemaker. So that’s what I will do. Naive as I am, and based on my earlier experience with heartbeat, I went right into it and straight into the next wall. Do not confuse pacemaker with your good ol’ heartbeat. It is not the same; it is far more (especially far more complex), and also far better (imho). But once you are into it, you will like it.

Using heartbeat, you might be under the false assumption that you have a cluster. That, so I learned, is not the case. Heartbeat is now demoted to a simple messaging layer (or cluster engine) and is thus only one part of the whole cluster stack. So how is this of any use to you? Well, you can (correctly) assume that heartbeat is substitutable, namely with corosync (a fork of OpenAIS, stripped of everything not needed by pacemaker). The first time you encounter this fundamental difference is when you try to install heartbeat on a squeeze machine:

#> aptitude install heartbeat -s

The following NEW packages will be installed:

ca-certificates{a} cluster-agents{a} cluster-glue{a} fancontrol{a} file{a} gawk{a} heartbeat libcluster-glue{a} libcorosync4{a} libcurl3{a} libesmtp5{a} libheartbeat2{a} libidn11{a} libltdl7{a} libmagic1{a} libnspr4-0d{a} libnss3-1d{a} libopenhpi2{a} libopenipmi0{a} libperl5.10{a} libsensors4{a} libsnmp-base{a} libsnmp15{a} libssh2-1{a} libtimedate-perl{a} libxml2-utils{a} libxslt1.1{a} lm-sensors{a} mime-support{a} openhpid{a} openssl{a} pacemaker{a} python{a} python-central{a} python-minimal{a} python2.6{a} python2.6-minimal{a}

0 packages upgraded, 37 newly installed, 0 to remove and 19 not upgraded.

Need to get 16.1MB of archives. After unpacking 48.4MB will be used.

That’s the point where you have to decide whether you stick with your old ha.cf and haresources files (and rebuild the package with fewer dependencies) or dare to welcome the future. My native play instinct compelled me to do the latter.

Ok, back to the topic. What is the difference? Well, at first glance not very much. You end up with a cluster of your nodes, in which one is the master and one the slave. That is, if you want to keep it that simple. With pacemaker, you can build clusters of more than two nodes. There is no limit (that I am aware of, apart from common sense). Furthermore, pacemaker also allows you to build N+1 and N-to-N clusters. A good example of the former is a 3-node cluster in which the third node is the failover node for both others. You assume that the two active nodes will not fail at the same time and therefore keep only one passive node which can take over the services, which is of course much more economical and realistic than two active/passive clusters. You can picture the N-to-N cluster by thinking of four nodes which all have the same resources and (actively) export the same services. Your assumption would be that three of the four nodes can bear the load of all, at least for the time you need to bring the failed node up again. You can probably carry the thought further by yourself, e.g. a cluster of 10 nodes where 2 are passive and 8 active and so on (think: RAID1, RAID5, RAID6, ..).

Before I go on, here are some conventions and assumptions:

Assuming you have installed heartbeat 3.0.2 + pacemaker (1.0.9) with the simple one-liner from above, we can directly begin with the first required changes. (If you have only lenny available, use backports). If you are upgrading from an existing heartbeat 2 cluster, shut it down beforehand!

Open the /etc/ha.d/ha.cf file with your favorite editor and change it so that it looks something like this (replace with your IPs and your node names):

# enable pacemaker, without stonith

crm yes

# log where ?

logfacility local0

# warning of soon be dead

warntime 10

# declare a host (the other node) dead after:

deadtime 20

# dead time on boot (could take some time until net is up)

initdead 120

# time between heartbeats

keepalive 2

# the nodes

node nfs4test1

node nfs4test2

# heartbeats, over dedicated replication interface!

ucast eth0 192.168.178.39 # ignored by node1 (owner of ip)

ucast eth0 192.168.178.40 # ignored by node2 (owner of ip)

# ping the switch to assure we are online

ping 192.168.178.1

Then make sure that each host is listed in each /etc/hosts file:

192.168.178.39 nfs4test1

192.168.178.40 nfs4test2

Now you should set up the secret key in /etc/ha.d/authkeys. It should look something like this (change the mode to 0600, owner-only access, afterwards!):

auth 1

1 sha1 your-secret-password

Everything on both nodes, of course!
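If you do not want to invent a password by hand, the key file can be generated. The following is only a minimal sketch: the AUTHKEYS variable and its scratch default path are my own assumptions for illustration (point it at /etc/ha.d/authkeys as root for real use), and the key is simply 32 random bytes in hex. Generate the file once and copy the same file to the other node, since both need the identical key.

```shell
#!/bin/sh
# Sketch: write a heartbeat authkeys file with a random sha1 key.
# AUTHKEYS is a hypothetical variable; it defaults to a scratch path so the
# sketch is safe to try. Use /etc/ha.d/authkeys (as root) on a real node.
AUTHKEYS="${AUTHKEYS:-./authkeys.example}"

# 32 random bytes rendered as 64 hex characters
secret=$(dd if=/dev/urandom bs=32 count=1 2>/dev/null | od -An -tx1 | tr -d ' \n')

umask 077                      # a newly created file gets no group/other access
printf 'auth 1\n1 sha1 %s\n' "$secret" > "$AUTHKEYS"
chmod 0600 "$AUTHKEYS"         # owner-only access, also if the file already existed
```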

Old haresources: If you have an old /etc/ha.d/haresources file, do not remove it, cause it will come in handy later.

So far, nothing new, besides the second line in the ha.cf: “crm yes”. This is where you tell heartbeat that it is controlled by pacemaker. So do not miss it.

Now let’s start heartbeat on both nodes:

#> /etc/init.d/heartbeat start

As soon as it finishes successfully, go to either of the two nodes and let’s examine the monster, I mean the pacemaker config:

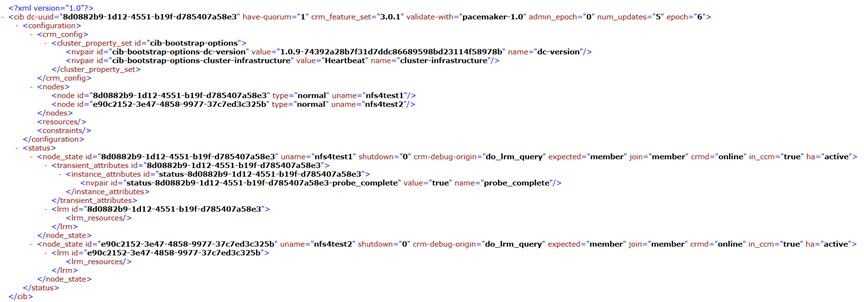

#> cibadmin -Q

This should output a large XML file. You can see the contents of my initial start here.

The first and most important task for you is: don’t get scared, and do not worry about all the funny long id attributes. You will not have to deal with the raw XML files, or let’s say: only very seldom (e.g. backup and recovery).

What you should see are the following four lines:

<nodes>

<node id="8d0882b9-1d12-4551-b19f-d785407a58e3" uname="nfs4test1" type="normal"/>

<node id="e90c2152-3e47-4858-9977-37c7ed3c325b" uname="nfs4test2" type="normal"/>

</nodes>

Those are in a configuration tag, which is under the root cib tag. They are generated from your ha.cf file and declare the two nodes of our new cluster. For now, they don’t do very much. Now let us have a look at one of the most important pacemaker components: the cluster resource manager monitor, or in short: crm_mon. If you create larger clusters by yourself later on, you might want to know where a particular resource (e.g. a virtual IP address) currently is.

#> crm_mon -1r

============

Last updated: Wed Nov 11 04:57:19 2009

Stack: Heartbeat

Current DC: nfs4test1 (8d0882b9-1d12-4551-b19f-d785407a58e3) - partition with quorum

Version: 1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b

2 Nodes configured, unknown expected votes

0 Resources configured.

============

Online: [ nfs4test1 nfs4test2 ]

Full list of resources:

The “-1” means: show once (without it, crm_mon acts like top) and the “-r” stands for “also show inactive resources”. What you can learn from the output is that both nodes are online. You can amuse yourself by killing one and seeing what happens. Then again, maybe you postpone this for later.
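If you want to use this output in a script, the “Online:” line is the interesting part. Here is a small sketch of a helper that checks whether a node is listed there. The function name node_is_online is made up for this example, and it assumes the output format shown above; other pacemaker versions may print this differently.

```shell
#!/bin/sh
# node_is_online NODE (hypothetical helper): reads "crm_mon -1" style text on
# stdin and succeeds if NODE appears in the "Online: [ ... ]" line.
node_is_online() {
    sed -n 's/^Online: \[\(.*\)\].*/\1/p' | tr -s ' ' '\n' | grep -qxF -- "$1"
}

# Demo with the line from the output above instead of a live cluster
if printf 'Online: [ nfs4test1 nfs4test2 ]\n' | node_is_online nfs4test2; then
    echo "nfs4test2 is online"
fi
```

On a live cluster, you would feed it real output: crm_mon -1 | node_is_online nfs4test2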

Before we add resources, I will introduce another very important command line tool: crm, which is the actual pacemaker shell.

#> crm status

This will show the same as the crm_mon command above, but crm_mon can do more, so stick with it for monitoring.

#> crm configure show

node $id="8d0882b9-1d12-4551-b19f-d785407a58e3" nfs4test1

node $id="e90c2152-3e47-4858-9977-37c7ed3c325b" nfs4test2

property $id="cib-bootstrap-options" \

dc-version="1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b" \

cluster-infrastructure="Heartbeat"

This shows your configuration, much the same way as “cibadmin -Q” did, but readable (no XML). Here again, are your two nodes. The other output can be ignored for now.

You do not always have to run the whole line from your Linux shell; you can enter the crm shell directly by typing crm without arguments:

#> crm

crm(live)# configure

crm(live)configure# show

node $id="8d0882b9-1d12-4551-b19f-d785407a58e3" nfs4test1

node $id="e90c2152-3e47-4858-9977-37c7ed3c325b" nfs4test2

property $id="cib-bootstrap-options" \

dc-version="1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b" \

cluster-infrastructure="Heartbeat"

crm(live)configure# exit

bye

You will use the crm shell whenever you have to add a complex resource or the like, because you can make all changes and then apply them at once by typing “commit”. Otherwise, you might produce unhealthy states in the process.

Before adding the resource, better disable stonith, because if you have no such device it will produce a lot of errors (even more so if you do not know what it is):

#> crm configure property stonith-enabled=false

Now we will add the first actual resource and give the whole cluster manager a purpose in life. We use the heartbeat OCF scripts for this.

#> crm configure primitive FAILOVER-IP ocf:heartbeat:IPaddr params ip="192.168.178.41" op monitor interval="10s"

The resource is added and should be online immediately. The “op monitor interval=10s” part is very important, because without it pacemaker will not check whether the resource is online (on the nodes). Check the resource by asking crm_mon:

#> crm_mon -1

============

Last updated: Wed Nov 11 05:20:23 2009

Stack: Heartbeat

Current DC: nfs4test1 (8d0882b9-1d12-4551-b19f-d785407a58e3) - partition with quorum

Version: 1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b

2 Nodes configured, unknown expected votes

1 Resources configured.

============

Online: [ nfs4test1 nfs4test2 ]

FAILOVER-IP (ocf::heartbeat:IPaddr): Started nfs4test1

You can see that it is on the host nfs4test1, in my case. The crm_resource command is also very interesting:

#> crm_resource -r FAILOVER-IP -W

resource FAILOVER-IP is running on: nfs4test1

Now let us look at the configuration again, but not on the node you have been working on: on the other one.

#nfs4test2> crm configure show

node $id="8d0882b9-1d12-4551-b19f-d785407a58e3" nfs4test1

node $id="e90c2152-3e47-4858-9977-37c7ed3c325b" nfs4test2

primitive FAILOVER-IP ocf:heartbeat:IPaddr \

params ip="192.168.178.41" \

op monitor interval="10s"

property $id="cib-bootstrap-options" \

dc-version="1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b" \

cluster-infrastructure="Heartbeat" \

stonith-enabled="false" \

last-lrm-refresh="1257846495"

As you can see, the second node is totally aware of everything we did. It is not necessary, as with heartbeat < 3, to deploy the haresources file on each node.

In this topic, I will highlight some useful practices which you will probably want to know within the first 15 minutes of most real-world deployments.

One thing you might want to do is remove a resource. This can be achieved by running:

#> crm configure delete FAILOVER-IP

You will probably receive an error message saying: “WARNING: resource FAILOVER-IP is running, can’t delete it”. Ok then. Let us stop the resource beforehand:

#> crm resource stop FAILOVER-IP

#> crm configure delete FAILOVER-IP

And now it works! This behavior might save your a** eventually. Ok, add the resource again (same command as above).

If you ever need to stop / (re)start a resource, just do as follows:

#> crm resource stop FAILOVER-IP

#> # wait some time..

#> crm resource start FAILOVER-IP

Also very common is the moving of a single resource to another node:

#nfs4test1> crm resource move FAILOVER-IP nfs4test2

As always, check with “crm_mon -1”.

Resources are not only virtual IP addresses or single services (like apache, nfs-kernel-server and so on), but also resource groups. I will show a usage example later on.

Before we proceed to special cases, let’s cover the basic admin tasks. The most important one is to force one node to take over all resources (e.g. because you want to restart the other). Here is the command (execute it on the node which currently does not have the virtual IP):

#> crm node standby nfs4test1

That’s all. The other node takes over the resources (the virtual IP) and you can restart the standby node, or do whatever else you need to do with it. Also have a look at the monitor output:

#> crm_mon -1r

============

Last updated: Tue Nov 10 11:16:57 2009

Stack: Heartbeat

Current DC: nfs4test1 (8d0882b9-1d12-4551-b19f-d785407a58e3) - partition with quorum

Version: 1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b

2 Nodes configured, unknown expected votes

1 Resources configured.

============

Node nfs4test1 (8d0882b9-1d12-4551-b19f-d785407a58e3): standby

Online: [ nfs4test2 ]

Full list of resources:

FAILOVER-IP (ocf::heartbeat:IPaddr): Started nfs4test2

As you can see, nfs4test1 is in standby, all resources are hosted by nfs4test2. For the sake of example, you might want to reboot the standby node now. While rebooting, the node will be listed as OFFLINE:

Node nfs4test1 (8d0882b9-1d12-4551-b19f-d785407a58e3): OFFLINE (standby)

It might take some seconds until the node is recognized as online again (but still standby), but it should show up eventually. You can activate it now again with:

#> crm node online nfs4test1

After this, the resource will re-migrate back to nfs4test1.

Above, we worked with a single resource. Normally, you would probably have multiple resources which only work in combination (e.g. the virtual IP belongs to the host which exports the NFS storage).

So let’s add a nfs-storage:

#> crm configure primitive NFS-EXPORT lsb:nfs-kernel-server op monitor interval="60s"

LSB stands for Linux Standard Base and means that the service you use has to comply with some standards. For Debian this reads: most services in /etc/init.d/ can be used, as long as they support “start”, “stop” and “status”. E.g. nginx under lenny does not. However, nfs-kernel-server does.

#> crm configure show

node $id="8d0882b9-1d12-4551-b19f-d785407a58e3" nfs4test1

node $id="e90c2152-3e47-4858-9977-37c7ed3c325b" nfs4test2

primitive FAILOVER-IP ocf:heartbeat:IPaddr \

params ip="192.168.178.41" \

op monitor interval="10s"

primitive NFS-EXPORT lsb:nfs-kernel-server \

op monitor interval="60s"

property $id="cib-bootstrap-options" \

dc-version="1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b" \

cluster-infrastructure="Heartbeat" \

stonith-enabled="false" \

last-lrm-refresh="1257846495"

Ok, now you will see why resource groups are mandatory. After adding the resource, it came up (for me) on nfs4test2, whereas the IP came up on nfs4test1. This, of course, is total rubbish.

Let’s put them in a group.

#> crm configure group NFS-AND-IP FAILOVER-IP NFS-EXPORT

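This declared a resource group named NFS-AND-IP, containing the two created resources. Being in a group helps us move the resources easily (we do not move FAILOVER-IP, but NFS-AND-IP). Next, we declare a dependency between those two. (Note: pacemaker’s documentation describes group members as implicitly colocated and started in the listed order, so the explicit constraints below are probably redundant for grouped resources; they are still worth knowing, because you need them for resources that are not in a group.)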

#> crm configure colocation NFS-WITH-IP inf: FAILOVER-IP NFS-EXPORT

As soon as this is executed, the resources will relocate themselves onto a single server. In the above example, NFS-WITH-IP is the name of the colocation (also a resource, so it has to have a name) and inf is short for infinity, which assures the precedence of this dependency. You can work with multiple relations and numeric scores; I leave this to the reader to figure out.

That is not enough for us; let us also set the correct order in which the resources have to come up:

#> crm configure order IP-BEFORE-NFS inf: FAILOVER-IP NFS-EXPORT

This created another resource IP-BEFORE-NFS... Yes, everything is somehow a resource. Now we are done.

You can build dependencies (colocations), orders and groups of any kind of resource. Try not to break anything, but play around a bit. Review the resource commands above. They also apply to groups (e.g. stop a whole group, migrate it and so on).

Very simple, just add the resource like so:

#> crm configure primitive NAME lsb:INIT_D_NAME op monitor interval="123s"

Here INIT_D_NAME is the script name in /etc/init.d/, e.g. apache2 for /etc/init.d/apache2.

Execute the following:

#> crm ra list ocf heartbeat

You can get a description about the resources and all parameters with

#> crm ra meta NAME

Have a look in /usr/lib/ocf/resource.d/heartbeat/ (debian) for starters. Look at the scripts and how they are written. Then add yours.

Try resetting the fail counter:

#> crm resource failcount RESOURCE-NAME set NODE-NAME 0

and bring it online afterwards:

#> crm resource start RESOURCE-NAME

Use

#> crm resource meta RESOURCE-NAME set is-managed false

do what you have to do, and set it managed again:

#> crm resource meta RESOURCE-NAME set is-managed true

Pacemaker will not migrate it or try to bring it online somewhere else until you enable management again (afaik).

You can either save/reload your configuration in/from CIB format or XML.

CIB:

# Save backup

#> crm configure save /path/to/backup

# Restore backup

#> crm configure load replace /path/to/backup

# .. or, if you have only slight "update" modifications

#> crm configure load update /path/to/backup

XML:

# Save backup

#> cibadmin -Q > /path/to/backup

# Restore backup

#> cibadmin --replace --xml-file /path/to/backup

Well... probably not. That’s where the “op monitor ...” resource parameters come in. On the other hand, pacemaker only performs the checks on the host itself. With mon, you can test whether a particular service is reachable from any other point of your network. It depends on your structure/setup, I guess.

Yes you can.

#> crm configure property default-resource-stickiness=1

As always: do not blame me if this destroys your servers or makes you drink three more beers. No warranties or guarantees of any kind.

http://foaa.de/old-blog/2010/10/intro-to-pacemaker-part-2-advanced-topics/trackback/index.html

Not everything fit into the first article, and I felt that an overly long “first steps” article might alienate beginners. This time, I will go into some more advanced matters such as: stickiness vs. location, N+1 clusters, advanced resource locations, clones and master/slave situations.

Before reading this article, you should at least have gotten your feet wet playing around with pacemaker. I read the pacemaker configuration A-Z guide before getting the basics straight and very soon got lost in the many concepts it describes. Shortly after I actually began using pacemaker (even if only on my testing nodes), I discovered for myself which features seemed to be missing and what would be nice to have. Guess what: the guide suddenly began to make sense. But maybe it’s just me.

Each concept introduced will be preceded by a short use case, showing you a practical application before explaining the how-to. All of the examples will show a cluster using at least a virtual IP and one additional service (some examples use more). The services were chosen because they somehow made sense or were easy to install; this is not about the services, but about pacemaker.

Unless otherwise noted, I will use the following names, definitions and conventions:

Assumptions to consider (please read carefully):

Anyway, let’s get into it..

We have the nodes node1 and node2. node1 is active, and at the beginning your resources will run there. However, if node1 fails, node2 takes over and begins serving. If node1 comes back again, it shall NOT take the resources back! They should stay where they are, on node2! Moreover, you plan to deploy this configuration on multiple active/passive pairs (for whatever reason) and you want to ensure that node1 (in each deployed cluster) always has the resources from the start (until the first failover occurs).

First off, here is the initial config:

#> crm configure show

node $id="8d0882b9-1d12-4551-b19f-d785407a58e3" node1

node $id="e90c2152-3e47-4858-9977-37c7ed3c325b" node2

property $id="cib-bootstrap-options" \

dc-version="1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b" \

cluster-infrastructure="Heartbeat" \

stonith-enabled="false" \

last-lrm-refresh="1257846495"

Let us quickly create the resources, group them and make them dependent.

#> crm configure primitive VIRTUAL-IP ocf:heartbeat:IPaddr params ip="10.0.0.100" op monitor interval="10s"

#> crm configure primitive PROFTPD-SERVER lsb:proftpd op monitor interval="60s"

#> crm configure group FTP-AND-IP VIRTUAL-IP PROFTPD-SERVER

#> crm configure colocation FTP-WITH-IP inf: VIRTUAL-IP PROFTPD-SERVER

Next thing is to make the resources sticky, which means: stay on the server you are on, even if it was formerly the passive one and the active one is now online again. Or for heartbeat 2 users: “auto_failback off”.

#> crm configure property default-resource-stickiness=1

We like to have the resources on node1 for starters.

#> crm configure location PREFER-NODE1 FTP-AND-IP 100: node1

Have a look with crm_mon, where we are:

#> crm_mon -1r

============

Last updated: Wed Nov 11 09:40:37 2009

Stack: Heartbeat

Current DC: node2 (e90c2152-3e47-4858-9977-37c7ed3c325b) - partition with quorum

Version: 1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b

2 Nodes configured, unknown expected votes

2 Resources configured.

============

Online: [ node1 node2 ]

Full list of resources:

NFS-EXPORT (lsb:proftpd): Started node1

Resource Group: FTP-AND-IP

VIRTUAL-IP (ocf::heartbeat:IPaddr): Started node1

PROFTPD-SERVER (lsb:proftpd): Started node1

Alright, looks good so far. Now let’s reboot node1 and see how node2 takes over. You can use “crm_mon” (without “-1”) to watch the status change in a top-like view.

And what happens? node2 takes over the resources, as expected, but as soon as node1 is online again, all resources migrate back. That’s not what we wanted.

Ok, what went wrong is that the location score (preference) of 100 outweighs the default-resource-stickiness of 1. So what can we do to ensure that the stickiness outweighs the location preference? In short: set it higher. So how high, do you think? Well, something like 1000 would probably do it. But this article is not about guessing, but about understanding. Let’s try to determine the highest (integer) value you can set while STILL migrating back to the preferred location. Incrementing this value by 1 will give us the lowest possible stickiness with which the resources will NOT migrate back.

Let’s start with 99, which is lower than 100, so it should still migrate back. No, it doesn’t. You have more than one resource, and you have to compare the summed stickiness of the resources. Then again: how many resources are there? One virtual IP and the proftpd service, so 2? Nope, wrong again: you have three; you missed the group for which you set the location score. Those scores add up: total group score = group score + sum of (scores of all member resources). So the highest value with which it will STILL MIGRATE back is 33, because a stickiness of 33 * 3 = 99 (group + virtual IP + proftpd) is smaller than a preference of 100. So the smallest default stickiness that achieves NO MIGRATION is 34 (34 * 3 = 102 > 100).
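To play with these numbers without rebooting nodes, the arithmetic can be put into a few lines of shell. This is only a sketch of the model described in this article (group + members, compared against the location score), not pacemaker’s actual allocation code; the function names are made up for the example.

```shell
#!/bin/sh
# Model from the text: with a default stickiness, the group's total stickiness
# is the default times (the group itself + its number of members).
total_stickiness() {   # $1 = default stickiness, $2 = number of group members
    echo $(( $1 * ($2 + 1) ))
}

# Compare total stickiness against the location score of the preferred node.
failback_outcome() {   # $1 = total stickiness, $2 = location score
    if [ "$1" -gt "$2" ]; then echo "stays"
    elif [ "$1" -lt "$2" ]; then echo "migrates back"
    else echo "tie"            # equal scores: avoid this case
    fi
}

for s in 1 33 34; do
    t=$(total_stickiness "$s" 2)
    echo "default stickiness $s -> total $t: $(failback_outcome "$t" 100)"
done
```

With the two-member group and the location score of 100 from above, the loop reports “migrates back” for 1 and 33, and “stays” for 34.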

It will certainly work if you set the default property to >= 34, but then again: not quite elegant, is it? What we really want is to drop the default stickiness and set a stickiness on the actual group, for which we’ve already set the location preference. The math becomes much easier, and we will not run into problems as soon as we begin using more than one resource group and more than 2 nodes (with up to n-1 location preferences). E.g., imagine this use case: we have 4 nodes, node1...node4. If a node fails, the resource will be handed down to the next (node1 -> node2 -> node3 -> node4). However, if one of the higher nodes comes up again, we want the resource to stay if it is on node1 to node3, which are the primary nodes, but to move back if it is on node4, which is the failover node. There are probably countless scenarios you can construct, so let’s get to the matter.

We will keep the default stickiness of 1, cause we still want a non-re-migrate behavior for all services, for which we do not set a location preference. 1 is small enough to be compensated in our math.

So let’s set a stickiness for the group:

#> crm resource meta FTP-AND-IP set resource-stickiness 101

As mentioned above, we still have a default-resource-stickiness of 1, so the actual stickiness of FTP-AND-IP is 103 (the group’s 101 + 2 members at 1 each). I think it is still more readable than the minimally required 99 (+2), because your config dump will make sense to you and, if you disable the default stickiness, it will still be enough. Here we go:

#> crm configure show

INFO: building help index

node $id="8d0882b9-1d12-4551-b19f-d785407a58e3" node1

node $id="e90c2152-3e47-4858-9977-37c7ed3c325b" node2

primitive PROFTPD-SERVER lsb:proftpd \

op monitor interval="60s"

primitive VIRTUAL-IP ocf:heartbeat:IPaddr \

params ip="10.0.0.100" \

op monitor interval="10s"

group FTP-AND-IP VIRTUAL-IP PROFTPD-SERVER \

meta resource-stickiness="101"

location PREFER-NODE1 FTP-AND-IP 100: node1

colocation FTP-WITH-IP inf: VIRTUAL-IP PROFTPD-SERVER

property $id="cib-bootstrap-options" \

dc-version="1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b" \

cluster-infrastructure="Heartbeat" \

stonith-enabled="false" \

last-lrm-refresh="1257846495" \

default-resource-stickiness="1"

To assure yourself that everything works as expected, you might want to set a stickiness of 97 and reboot, to see how it DOES migrate back (97 + 1 + 1 = 99 < 100) and then try our 101 and reboot again (it will not migrate back).

Do not set an equal stickiness (in this example 98 + 1 + 1 = 100 == 100), because pacemaker is then free to decide by itself, which might look random to you!

If you manually move a resource using “crm resource move”, “crm resource migrate” or “crm_resource ..”, you might override your stickiness settings. Pacemaker will create a location rule entry which looks something like the following:

location cli-prefer-FTP-AND-IP FTP-AND-IP \

rule $id="cli-prefer-rule-FTP-AND-IP" inf: #uname eq node1

This entry assigns a score of infinity to the preference for node1. So you have to remove this preference again (e.g. with “crm resource unmove FTP-AND-IP”) to ensure that your stickiness beats the location preference, as you expect.

You have three nodes; two of them shall be active, one serving NFS and the other FTP. If one of the active nodes fails, the failover node shall take over its resources, which means: start NFS/FTP and bring up the virtual IP. However, you don’t know which node will fail, you just want the services online.

First off, there will be no SAN storage in the example. We just work with NFS exporting a local directory and a virtual IP, because I have tried all those configurations myself and did not want to waste time on setting up 2 more nodes (providing SAN). So, you require three nodes. If you already have them, you can skip the first topic, where I’ll explain how to add a third node.

Skip this if you already have 3 nodes. If not, do the following:

Make sure that all nodes have the other nodes in their /etc/hosts files:

# ..

10.0.0.1 node1

10.0.0.2 node2

10.0.0.3 node3

# ..

Make sure that all nodes have all other nodes in their /etc/ha.d/ha.cf file:

# ..

# the nodes

node node1

node node2

node node3

# heartbeats, over dedicated replication interface!

ucast eth0 10.0.0.1

ucast eth0 10.0.0.2

ucast eth0 10.0.0.3

# ..

Copy the /etc/ha.d/authkeys file to the new node (chmod 0600).

Now shut down your cluster on all nodes! This is very important, and unpleasant, I know. (There is supposed to be a way with autojoin in ha.cf and later hb_addnode, but I read about this too late and am too lazy to try it.)

Restart your cluster on all nodes. Have a look in “crm_mon -1r” and see the third node.
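Since the node and ucast lines have to be identical in every ha.cf, it can help to generate them from a single list instead of typing them on each node. The following is just a sketch; the function name gen_hacf_nodes and the “name:ip” list format are my own invention for this example. It only prints the lines, it does not touch your ha.cf.

```shell
#!/bin/sh
# gen_hacf_nodes IFACE "name:ip ..." (hypothetical helper): prints the
# "node" lines followed by the "ucast" lines for ha.cf.
gen_hacf_nodes() {
    iface=$1
    for pair in $2; do printf 'node %s\n' "${pair%%:*}"; done
    for pair in $2; do printf 'ucast %s %s\n' "$iface" "${pair##*:}"; done
}

# The three nodes from this example
gen_hacf_nodes eth0 "node1:10.0.0.1 node2:10.0.0.2 node3:10.0.0.3"
```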

Add your virtual IPs

#> crm configure primitive VIRTUAL-IP1 ocf:heartbeat:IPaddr params ip="10.0.0.100" op monitor interval="10s"

#> crm configure primitive VIRTUAL-IP2 ocf:heartbeat:IPaddr params ip="10.0.0.101" op monitor interval="10s"

Create the NFS and FTP services

#> crm configure primitive NFS-SERVER lsb:nfs-kernel-server op monitor interval="60s"

#> crm configure primitive PROFTPD-SERVER lsb:proftpd op monitor interval="60s"

Group and relate the first virtual IP with NFS and the second with FTP

#> crm configure group NFS-AND-IP VIRTUAL-IP1 NFS-SERVER

#> crm configure colocation NFS-WITH-IP inf: VIRTUAL-IP1 NFS-SERVER

#> crm configure group FTP-AND-IP VIRTUAL-IP2 PROFTPD-SERVER

#> crm configure colocation FTP-WITH-IP inf: VIRTUAL-IP2 PROFTPD-SERVER

Have a look at crm_mon, it should look something like this:

#> crm_mon -1r

============

Last updated: Wed Oct 6 21:23:40 2010

Stack: Heartbeat

Current DC: node3 (055bb37b-6437-4bd7-8817-0aff3ab5549b) - partition with quorum

Version: 1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b

3 Nodes configured, unknown expected votes

2 Resources configured.

============

Online: [ node1 node2 node3 ]

Full list of resources:

Resource Group: NFS-AND-IP

VIRTUAL-IP1 (ocf::heartbeat:IPaddr): Started node1

NFS-SERVER (lsb:nfs-kernel-server): Started node1

Resource Group: FTP-AND-IP

VIRTUAL-IP2 (ocf::heartbeat:IPaddr): Started node1

PROFTPD-SERVER (lsb:proftpd): Started node1

Ok here are the requirements formalized:

That’s easy to say, and easy to implement. Here we go:

“node1 shall serve NFS, as long as he is online”

#> crm configure location NFS-PREFER-1 NFS-AND-IP 100: node1

“node2 shall serve FTP, as long as he is online”

#> crm configure location FTP-PREFER-1 FTP-AND-IP 100: node2

“If node1 fails, node3 shall take over. If node3 is offline, node2 shall.”

#> crm configure location NFS-PREFER-2 NFS-AND-IP 50: node3

#> crm configure location NFS-PREFER-3 NFS-AND-IP 25: node2

“If node2 fails, node3 shall take over. If node3 is offline, node1 shall.”

#> crm configure location FTP-PREFER-2 FTP-AND-IP 50: node3

#> crm configure location FTP-PREFER-3 FTP-AND-IP 25: node1

The last requirement, “If node1 or node2 come online again, the resources shall be migrated back to them.”, is implicit, because we are not working with stickiness.
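
To see why these scores produce the failover order, here is a rough model in plain shell. It is only a sketch of the idea (highest score among the online nodes wins) and deliberately ignores stickiness, colocation and everything else pacemaker takes into account; pick_node is a made-up name for illustration.

```shell
#!/bin/sh
# pick_node PREFS ONLINE
#   PREFS:  space-separated "node:score" pairs (our location constraints)
#   ONLINE: space-separated names of the nodes that are currently up
# Prints the online node with the highest score, or "none".
pick_node() {
    prefs=$1
    online=$2
    best=none
    best_score=-1
    for pref in $prefs; do
        node=${pref%%:*}
        score=${pref##*:}
        case " $online " in
            *" $node "*)
                if [ "$score" -gt "$best_score" ]; then
                    best=$node
                    best_score=$score
                fi
                ;;
        esac
    done
    echo "$best"
}

NFS_PREFS="node1:100 node3:50 node2:25"
FTP_PREFS="node2:100 node3:50 node1:25"

pick_node "$NFS_PREFS" "node1 node2 node3"   # node1
pick_node "$NFS_PREFS" "node2 node3"         # node3
pick_node "$NFS_PREFS" "node2"               # node2
pick_node "$FTP_PREFS" "node1 node3"         # node3
```

Because nothing in this model remembers where a resource ran before, a returning node1 immediately wins again, which is the implicit fail-back described above.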

Well, that was easy, wasn’t it ? Here is what your final config should look like:

#> crm configure show

node $id="055bb37b-6437-4bd7-8817-0aff3ab5549b" node3

node $id="8d0882b9-1d12-4551-b19f-d785407a58e3" node1

node $id="e90c2152-3e47-4858-9977-37c7ed3c325b" node2

primitive NFS-SERVER lsb:nfs-kernel-server \

op monitor interval="60s"

primitive PROFTPD-SERVER lsb:proftpd \

op monitor interval="60s"

primitive VIRTUAL-IP1 ocf:heartbeat:IPaddr \

params ip="10.0.0.100" \

op monitor interval="10s"

primitive VIRTUAL-IP2 ocf:heartbeat:IPaddr \

params ip="10.0.0.101" \

op monitor interval="10s"

group FTP-AND-IP VIRTUAL-IP2 PROFTPD-SERVER

group NFS-AND-IP VIRTUAL-IP1 NFS-SERVER

location FTP-PREFER-1 FTP-AND-IP 100: node2

location FTP-PREFER-2 FTP-AND-IP 50: node3

location FTP-PREFER-3 FTP-AND-IP 25: node1

location NFS-PREFER-1 NFS-AND-IP 100: node1

location NFS-PREFER-2 NFS-AND-IP 50: node3

location NFS-PREFER-3 NFS-AND-IP 25: node2

colocation FTP-WITH-IP inf: VIRTUAL-IP2 PROFTPD-SERVER

colocation NFS-WITH-IP inf: VIRTUAL-IP1 NFS-SERVER

property $id="cib-bootstrap-options" \

dc-version="1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b" \

cluster-infrastructure="Heartbeat" \

stonith-enabled="false" \

last-lrm-refresh="1286393008"

You can now begin wildly rebooting the nodes in any order and watch the resources move according to plan. As long as any of the 3 servers is online, all services are available.
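
If rebooting feels too wild, the crm shell can also put a node into standby, which moves its resources away without taking the machine down. A small sketch with the node names from this article; watch crm_mon between the steps:

```shell
# take node1 out of service, its resources should move to node3
#> crm node standby node1

#> crm_mon -1r

# bring node1 back, NFS-AND-IP should return to it
#> crm node online node1
```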

You can probably see how this will very much stabilize your infrastructure. Of course, it depends on how easily the resources can be moved (eg: a MySQL server with its local database). But what i wanted to show is how simply you can lay the base for a stable multi-node deployment. It’s really not much more complex than your good old heartbeat2 2-node cluster (as soon as you begin to like the crm configuration syntax).

Of course you can increase the number of nodes and devise more complex rules (resource X shall be on one of those three nodes, whereas resource Y can be served from any of the 5, ..) and also combine them with stickiness (let the resource stay on the last node it was on, as long as it is one of XYZ). Especially in the last scenario “crm_resource -r NFS-AND-IP -W” is very helpful, because it shows you where your resource is right now (it would be so nice if this would work with my keys).

The same as for the N+1 cluster, but with a difference: We know that each node can only serve one resource (FTP or NFS) at a time (eg: due to load). We have to make a choice whether NFS or FTP is more important. We go with NFS. So the difference is that FTP (node2’s resource) can only move to node3 if node3 is empty. NFS (node1’s resource), on the other hand, moves to node3 whenever node3 is online. If node2’s resources are already there, they will be shut down. If node1 and node3 are down, NFS will migrate to node2 and force FTP to shut down (that’s the reckless part).

Keep the N+1 Cluster config, if you still have it, you only need to modify it. What we want is “mutual exclusion” of resources along with high priority resources.

Can be skipped, if you still have the N+1 config..

# ** Add your virtual IPs

#> crm configure primitive VIRTUAL-IP1 ocf:heartbeat:IPaddr params ip="10.0.0.100" op monitor interval="10s"

#> crm configure primitive VIRTUAL-IP2 ocf:heartbeat:IPaddr params ip="10.0.0.101" op monitor interval="10s"

# ** Create the NFS and FTP services

#> crm configure primitive NFS-SERVER lsb:nfs-kernel-server op monitor interval="60s"

#> crm configure primitive PROFTPD-SERVER lsb:proftpd op monitor interval="60s"

# ** group and relate

#> crm configure group NFS-AND-IP VIRTUAL-IP1 NFS-SERVER

#> crm configure colocation NFS-WITH-IP inf: VIRTUAL-IP1 NFS-SERVER

#> crm configure group FTP-AND-IP VIRTUAL-IP2 PROFTPD-SERVER

#> crm configure colocation FTP-WITH-IP inf: VIRTUAL-IP2 PROFTPD-SERVER

Here comes the difference. First let’s begin with the preferences for NFS-AND-IP, they haven’t changed:

#> crm configure location NFS-PREFER-1 NFS-AND-IP 100: node1

#> crm configure location NFS-PREFER-2 NFS-AND-IP 50: node3

#> crm configure location NFS-PREFER-3 NFS-AND-IP 25: node2

Now the preferences for FTP-AND-IP, which have changed, but only the last one (if you still have your config, remove FTP-PREFER-3 beforehand via “crm configure delete FTP-PREFER-3”):

#> crm configure location FTP-PREFER-1 FTP-AND-IP 100: node2

#> crm configure location FTP-PREFER-2 FTP-AND-IP 50: node3

#> crm configure location FTP-PREFER-3 FTP-AND-IP -inf: node1

Setting “-inf” as the score for FTP-PREFER-3 on node1 enforces that FTP-AND-IP will never migrate to node1. Which is of course a good thing, because as long as node1 is online it serves NFS and, as defined in the requirements, one server cannot serve both. So if node2 and node3 are down, FTP will be down!

Now comes the real clue which assures that NFS can use not only node3 for failover but also node2, if node1 and node3 are down:

#> crm configure colocation NFS-NOTWITH-FTP -inf: FTP-AND-IP NFS-AND-IP

So how does this work? Defining the negative (-infinity) colocation in exactly this order states: FTP-AND-IP can never be served (on the same node) where NFS-AND-IP runs. The order is important.

If you reverse it, you will have achieved something quite different: node1 can failover to node3, but if node2 also goes offline, FTP will be served from node3 – not NFS. Also: if node1 and node3 fail NFS will stay offline and not migrate to node2.

So try to read the part behind the colon as: “FTP-AND-IP will never exist on the same node as NFS-AND-IP and will rather shut itself down if NFS-AND-IP migrates to the node where it is.” It took me half an hour to identify the source of the reversed behavior i described and figure out how it works (my first attempt was to achieve the required scenario via rules and priorities).
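
The effect of the ordering can be played through with a toy model in shell. This is only a sketch under simplifying assumptions: NFS-AND-IP is placed first by its scores, then FTP-AND-IP is placed by its own scores but may not use the node NFS-AND-IP took. The function names place and simulate are invented for this illustration, and real pacemaker placement considers more than this.

```shell
#!/bin/sh
# place PREFS ONLINE BANNED
# Highest score among the online nodes wins; nodes with score "-inf"
# or listed in BANNED are never chosen. Prints "stopped" if nothing fits.
place() {
    prefs=$1; online=$2; banned=$3
    best=stopped; best_score=-1
    for pref in $prefs; do
        node=${pref%%:*}; score=${pref##*:}
        if [ "$score" = "-inf" ]; then continue; fi
        case " $banned " in *" $node "*) continue ;; esac
        case " $online " in
            *" $node "*)
                if [ "$score" -gt "$best_score" ]; then
                    best=$node; best_score=$score
                fi
                ;;
        esac
    done
    echo "$best"
}

# simulate ONLINE: NFS-AND-IP chooses first, FTP-AND-IP has to avoid its node
simulate() {
    online=$1
    nfs=$(place "node1:100 node3:50 node2:25" "$online" "")
    ftp=$(place "node2:100 node3:50 node1:-inf" "$online" "$nfs")
    echo "NFS=$nfs FTP=$ftp"
}

simulate "node1 node2 node3"   # NFS=node1 FTP=node2
simulate "node2 node3"         # NFS=node3 FTP=node2
simulate "node2"               # NFS=node2 FTP=stopped
simulate "node1 node3"         # NFS=node1 FTP=node3
```

The third call is the reckless case: with only node2 left, NFS takes it and FTP has nowhere to go.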

And here again is the full configuration for your convenience:

node $id="055bb37b-6437-4bd7-8817-0aff3ab5549b" node3

node $id="8d0882b9-1d12-4551-b19f-d785407a58e3" node1

node $id="e90c2152-3e47-4858-9977-37c7ed3c325b" node2

primitive NFS-SERVER lsb:nfs-kernel-server \

op monitor interval="60s"

primitive PROFTPD-SERVER lsb:proftpd \

op monitor interval="60s"

primitive VIRTUAL-IP1 ocf:heartbeat:IPaddr \

params ip="10.0.0.100" \

op monitor interval="10s"

primitive VIRTUAL-IP2 ocf:heartbeat:IPaddr \

params ip="10.0.0.101" \

op monitor interval="10s"

group FTP-AND-IP VIRTUAL-IP2 PROFTPD-SERVER

group NFS-AND-IP VIRTUAL-IP1 NFS-SERVER

location FTP-PREFER-1 FTP-AND-IP 100: node2

location FTP-PREFER-2 FTP-AND-IP 50: node3

location FTP-PREFER-3 FTP-AND-IP -inf: node1

location NFS-PREFER-1 NFS-AND-IP 100: node1

location NFS-PREFER-2 NFS-AND-IP 50: node3

location NFS-PREFER-3 NFS-AND-IP 25: node2

colocation FTP-WITH-IP inf: VIRTUAL-IP2 PROFTPD-SERVER

colocation NFS-NOTWITH-FTP -inf: FTP-AND-IP NFS-AND-IP

colocation NFS-WITH-IP inf: VIRTUAL-IP1 NFS-SERVER

property $id="cib-bootstrap-options" \

dc-version="1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b" \

cluster-infrastructure="Heartbeat" \

stonith-enabled="false" \

last-lrm-refresh="1286393008" \

default-resource-stickiness="0" \

no-quorum-policy="ignore"

As you can see, with only location and colocation you can cope with quite complex scenarios. As mentioned, i initially meant to write this topic about rules and priorities, but in the process i noticed that it was possible with a far less complex configuration. It seems that you can create endlessly complex mutual exclusions with this technique, as long as you take care of the order. An interesting article with further information about colocation can be found here.

Your cluster consists of 3 nodes: two are serving FTP, one does not but has the FTP server up and running. The two active nodes have different virtual IPs (eg: which you load balance from somewhere), the third has none; it is waiting to take over the load of any failing node. All your servers have mounted the same storage (howsoever they did). Whenever one of the first two nodes fails, the switch is as fast as possible: proftpd is already up and running (and the storage is mounted) on the idle node, all that is missing is the VIP. If two nodes fail, the leftover node shall end up with two IPs, but only one proftpd instance (so that your silly load balancer still thinks it is doing good, but actually one node serves all).

It might look like another variation of the N+1 cluster but it is not. It is more like an N-to-(N-1) cluster, because all nodes are actually kind of active with respect to the proftpd resource, but only one or two are active with respect to the virtual IPs. What i’d like to introduce is the clone concept. If you understand it, you will probably be able to convert this example into a real N-to-N cluster and more complex scenarios. However, i will use the hot-standby node to make a point.

I’ll keep it short, we need one FTP resource and two IP resources:

#> crm configure primitive PROFTPD-SERVER lsb:proftpd op monitor interval="60s"

#> crm configure primitive VIRTUAL-IP1 ocf:heartbeat:IPaddr params ip="10.0.0.100" op monitor interval="10s"

#> crm configure primitive VIRTUAL-IP2 ocf:heartbeat:IPaddr params ip="10.0.0.101" op monitor interval="10s"

This is also very easy.. first the command, then the explanation:

#> crm configure clone CLONE-FTP-SERVER PROFTPD-SERVER meta clone-max=3 clone-node-max=1

Ok, first off: we have a new resource called CLONE-FTP-SERVER. Interesting are the meta attributes which we have set. The first, clone-max, determines how many ACTIVE clones we will run on all nodes together. Setting it to three in our cluster of three nodes means: a proftpd server will run on each node. Actually we would not have to set it, because it defaults to the number of nodes. The other param is clone-node-max, which states: run at most one instance of this resource per node. If we set it to 2, pacemaker would be allowed to start 2 instances on one node, which would neither work (with this particular service) nor be what we want. 1 is also the default value, so setting it is redundant (but descriptive).
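
How the two attributes limit each other can be written down as simple arithmetic. The following is just a back-of-the-envelope sketch (clone_instances is a made-up name, not a pacemaker tool): the total is capped by clone-max, and by clone-node-max times the number of online nodes.

```shell
#!/bin/sh
# clone_instances CLONE_MAX CLONE_NODE_MAX ONLINE_NODES
# Upper bound on running clone instances: never more than clone-max in total,
# never more than clone-node-max on any single node.
clone_instances() {
    clone_max=$1
    clone_node_max=$2
    online=$3
    capacity=$((online * clone_node_max))
    if [ "$capacity" -lt "$clone_max" ]; then
        echo "$capacity"
    else
        echo "$clone_max"
    fi
}

clone_instances 3 1 3   # 3: one proftpd per node
clone_instances 3 1 2   # 2: one node is down, nowhere to put the third
clone_instances 3 1 4   # 3: a fourth node stays without an instance
```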

The next thing we will do is set preferences for the clone. Because we have 3 servers and want to run 3 instances it will not matter. But if you have 4 nodes and run max 3 instances it certainly would!

#> crm configure location FTP-PREFER-1 CLONE-FTP-SERVER 100: node1

#> crm configure location FTP-PREFER-2 CLONE-FTP-SERVER 50: node2

#> crm configure location FTP-PREFER-3 CLONE-FTP-SERVER 25: node3

Again: imagine a fourth node. Setting its priority to 10 would assure that as soon as the first of the other nodes fails, another instance is started on node4 (we want 3 running altogether).

And some more location preferences, this time for the IPs. They assure that node1 (as long as it is online) has the first virtual IP and node2 (if online) the second. If node1 fails, node3 will get the IP and begin serving straight away, because the FTP server is already up and running. If node2 fails, its IP will also migrate to node3. If node1 and node3 fail, the first virtual IP will migrate to node2 (as in the first N+1 cluster). The same happens for the second VIP if node2 and node3 fail, but ending on node1 of course. And in each case where two nodes fail, the one remaining node ends up with all IPs (eg: if node1 and node3 fail, all IPs are on node2). Here we go:

#> crm configure location IP1-PREFER-1 VIRTUAL-IP1 100: node1

#> crm configure location IP1-PREFER-2 VIRTUAL-IP1 50: node3

#> crm configure location IP1-PREFER-3 VIRTUAL-IP1 25: node2

#> crm configure location IP2-PREFER-1 VIRTUAL-IP2 100: node2

#> crm configure location IP2-PREFER-2 VIRTUAL-IP2 50: node3

#> crm configure location IP2-PREFER-3 VIRTUAL-IP2 25: node1

And that’s all. We have 3 (hot and running) instances of the FTP server. The IPs are moved in our cluster with preferences for their “home” nodes. And what is the difference to the N+1 cluster again? We have “hot” nodes, so the failover will be much faster. In technical terms the major difference is that we use no groups/colocations of IP+FTP, but clones, which makes it less complex.

But there are still some scenarios which you cannot implement with just clones. Think about the following: You have two MySQL nodes. One is the master, the other the replicated slave. When the master fails, you not only want to move the VIP, you also need to promote the slave to master. Or the good old DRBD example: You have a storage server which serves the storage to all clients, eg via NFS. The storage itself is on a DRBD device for which the active node is the master and the passive node the slave. So if the master fails, the slave shall become the new master (not starting a new service, but changing its “role”), start NFS and bring up the IP. This will be covered in the next and last chapter.

One node is the master, the other a replicated slave. You have one virtual IP which stays with the master. If the master goes down, you want to move the VIP to the slave and promote it to master. Also: after the original master comes up again, you want it to become slave and start replicating again.

Let me begin with a theoretical summary of what multi-state is, how and under which circumstances it works and then proceed to the actual implementation.

Multi-state is in itself a simple concept, which expresses: we have a resource and it is in exactly one of multiple possible states. Those states are master and slave, thus a resource instance on a node is either master or slave. Furthermore, we define additional requirements, such as: only one node in the cluster can hold the master, and one node can only have one master of a kind (yes, something like clones.. i will go into that later).

So what is it good for? The classic (because best documented) scenario is a cluster of two nodes running DRBD. In short, if you are not familiar with DRBD, here is what this service does (very rough and superficial):

With DRBD you can sync a storage between two servers via your network. It sits below the actual filesystem (eg ext3) but above the physical device (eg /dev/sda). This means that writing a file hands the write request (simplified) to your filesystem (ext3), then to DRBD (which will sync it to the other DRBD server) and then to the actual disk. With this you can achieve identical copies of one storage on two nodes. The downside is, if you don’t use a cluster filesystem (such as GFS2, OCFS2, …) only one node can mount the filesystem (ext3) at a time, otherwise it would corrupt very (very!) soon. Which leads us to the multi-state scenario: only one is primary (master) and has mounted the fs, the other is secondary (slave) and has not, but both run the DRBD resource. Here is the exact description.

Ok, so having no cluster fs, you have to assure that one node in your pacemaker cluster is master and the other slave. As mentioned, both nodes still run the same resource (DRBD). What does this bring to mind? Clones of course. They also implement running the same resource on multiple nodes. Actually, a multi-state resource is a variation of a clone (in programmer terms: multi-state inherits from clone). I hope you get an impression of where this might lead. I will now stop using DRBD as an example, because it is already described far better than i can do.

To bring something new to the table i have chosen OpenLDAP, because it is dead simple to set up a master-slave replication. Since you might not have used OpenLDAP before, and i do not want to make it unnecessarily difficult for you to replay my example, i will deviate from my habit of not providing any resource configurations and give you the slapd.conf you’ll require (or you set it up yourself).

First install OpenLDAP

#all> aptitude install slapd

Here are the minimal configurations you need to deploy on your nodes (have a look at them, the slave configs only vary in the provider URL)

Those will automatically overwrite your /etc/ldap/slapd.conf via the OCF resource script provided later on. For now, you should only stop the slapd servers and remove them from the init runlevels (both nodes):

#> /etc/init.d/slapd stop

#> update-rc.d -f slapd remove

We will need two nodes, one virtual IP and our multi-state resource. If you still have 3 nodes from the above example: stop heartbeat on all nodes, remove node3 from ha.cf on node1 and node2, start heartbeat again and run the following:

#> crm node delete node3

Now setup the virtual IP:

#> crm configure primitive VIRTUAL-IP1 ocf:heartbeat:IPaddr params ip="10.0.0.100" op monitor interval="10s"

Now it is getting tricky. You cannot use slapd only as an LSB resource, because LSB resources are not stateful. Furthermore, there is no OpenLDAP stateful resource in the heartbeat package. But don’t panic, i wrote one. Before you download and install it, please note: it is an example implementation meant to run in a testing-only environment. I have not tested it anywhere besides this tutorial and i strongly suggest not using it for real. Even in your testing it is at your own risk. However, i hope it works at least.

So here it is: slapd-ms

--------------------------------------------------------------------------------------

#!/bin/sh

#

# This is an slapd master / slave OCF Resource Agent

# (Based on the example of a stateful OCF Resource Agent)

#

#

# Copyright (c) 2006 Andrew Beekhof

#

# This program is free software; you can redistribute it and/or modify

# it under the terms of version 2 of the GNU General Public License as

# published by the Free Software Foundation.

#

# This program is distributed in the hope that it would be useful, but

# WITHOUT ANY WARRANTY; without even the implied warranty of

# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE.

#

# Further, this software is distributed without any warranty that it is

# free of the rightful claim of any third person regarding infringement

# or the like. Any license provided herein, whether implied or

# otherwise, applies only to this software file. Patent licenses, if

# any, provided herein do not apply to combinations of this program with

# other software, or any other product whatsoever.

#

# You should have received a copy of the GNU General Public License

# along with this program; if not, write the Free Software Foundation,

# Inc., 59 Temple Place - Suite 330, Boston MA 02111-1307, USA.

#

#######################################################################

# Initialization:

: ${OCF_FUNCTIONS_DIR=${OCF_ROOT}/resource.d/heartbeat}

. ${OCF_FUNCTIONS_DIR}/.ocf-shellfuncs

CRM_MASTER="${HA_SBIN_DIR}/crm_master -l reboot"

MD5_SUM="/usr/bin/md5sum"

DEFAULT_SLAPD_PID="/var/run/slapd/slapd.pid"

#######################################################################

meta_data() {

cat <<END

<?xml version="1.0"?>

<!DOCTYPE resource-agent SYSTEM "ra-api-1.dtd">

<resource-agent name="slapd-ms" version="1.0">

<version>1.0</version>

<longdesc lang="en">

This is an example resource agent that implements two states

</longdesc>

<shortdesc lang="en">

Example of a slapd stateful resource agent

</shortdesc>

<parameters>

<parameter name="config" unique="1">

<longdesc lang="en">

Path to the slapd.conf file.. default: /etc/ldap/slapd.conf. The master and slave files are assumed as [config].master and [config].slave

</longdesc>

<shortdesc lang="en">

Path to slapd.conf

</shortdesc>

<content type="string" default="/etc/ldap/slapd.conf" />

</parameter>

<parameter name="lsb_script" unique="1">

<longdesc lang="en">

Path to lsb start script.. normally /etc/init.d/slapd

</longdesc>

<shortdesc lang="en">

Path to lsb start script

</shortdesc>

<content type="string" default="/etc/init.d/slapd" />

</parameter>

</parameters>

<actions>

<action name="start" timeout="20s" />

<action name="stop" timeout="20s" />

<action name="promote" timeout="20s" />

<action name="demote" timeout="20s" />

<action name="monitor" depth="0" timeout="20" interval="10"/>

<action name="meta-data" timeout="5" />

<action name="validate-all" timeout="20s" />

</actions>

</resource-agent>

END

exit $OCF_SUCCESS

}

#######################################################################

slapd_ms_usage() {

cat <<END

usage: $0 {start|stop|promote|demote|monitor|validate-all|meta-data}

Expects to have a fully populated OCF RA-compliant environment set.

END

exit $1

}

slapd_ms_log() {

msg=$1

ocf_log err "SLAPD-MSG[$RESOURCE]: $msg"

}

slapd_ms_is_running() {

pidfile=`grep pidfile "$OCF_RESKEY_config" | sed 's%pidfile *%%'`

if [ "X$pidfile" = "X" ]; then

pidfile=$DEFAULT_SLAPD_PID

fi

ocf_pidfile_status $pidfile

res=$?

slapd_ms_log " res for running: $res (0=ok, 1=nope)"

return $res

}

#

# Returns 0 if OK and > 0 if error / wrong / failed

#

slapd_ms_check_state() {

target=$1

md5_current=`$MD5_SUM "$OCF_RESKEY_config" | sed 's%\([a-f0-9]*\).*%\1%'`

md5_master=`$MD5_SUM "$OCF_RESKEY_config.master" | sed 's%\([a-f0-9]*\).*%\1%'`

md5_slave=`$MD5_SUM "$OCF_RESKEY_config.slave" | sed 's%\([a-f0-9]*\).*%\1%'`

slapd_ms_log "COMPARE CUR $md5_current, MASTER $md5_master, SLAVE $md5_slave"

# check whether we are master

if [ "X$target" = "Xmaster" ]; then

if [ "X$md5_current" != "X$md5_master" ]; then

slapd_ms_log " check is master -> is not"

return 1

fi

# check whether we are slave

elif [ "X$target" = "Xslave" ]; then

if [ "X$md5_current" != "X$md5_slave" ]; then

slapd_ms_log " check is slave -> is not"

return 1

fi

fi

# check whether slapd is running

slapd_ms_is_running

return $?

}

#

# Set state to slave or master

#

slapd_ms_set_state() {

target=$1

slapd_ms_log " Set new state: $target"

if [ "X$target" = "Xmaster" ]; then

cp "${OCF_RESKEY_config}.master" "${OCF_RESKEY_config}"

else

cp "${OCF_RESKEY_config}.slave" "${OCF_RESKEY_config}"

fi

}

#

# Start or restart slapd service

#

slapd_ms_start_server() {

# slapd_ms_is_running returns 0 when slapd is up: start only if it is down, else reload

slapd_ms_is_running

if [ $? -ne 0 ]; then

slapd_ms_log " Start server"

${OCF_RESKEY_lsb_script} start

else

slapd_ms_log " Reload server"

${OCF_RESKEY_lsb_script} force-reload

fi

}

#

# Stops slapd service

#

slapd_ms_stop_server() {

# only stop slapd if it is actually running (slapd_ms_is_running returns 0)

slapd_ms_is_running

if [ $? -eq 0 ]; then

slapd_ms_log " Stop server"

${OCF_RESKEY_lsb_script} stop

fi

}

#

# Start. Always as slave

#

slapd_ms_start() {

# check whether (wrongly) master ? should not happen!

slapd_ms_check_state "master"

if [ $? -eq 0 ]; then

slapd_ms_log " Is already running as master, not good"

return $OCF_RUNNING_MASTER

fi

slapd_ms_set_state "slave"

slapd_ms_start_server

}

#

# Stop. Full shutdown of slapd server

#

slapd_ms_stop() {

slapd_ms_stop_server

}

#

# Promote to master

#

slapd_ms_promote() {

# check whether already master ? then there is nothing to promote

slapd_ms_check_state "master"

if [ $? -eq 0 ]; then

return $OCF_RUNNING_MASTER

fi

slapd_ms_set_state "master"

slapd_ms_start_server

}

#

# Demote to slave

#

slapd_ms_demote() {

# check whether already slave ? then there is nothing to do

slapd_ms_check_state "slave"

if [ $? -eq 0 ]; then

return $OCF_SUCCESS

fi

slapd_ms_set_state "slave"

slapd_ms_start_server

}

#

# Monitor.. what we are, what we do

#

slapd_ms_monitor() {

# we are master

slapd_ms_check_state "master"

if [ $? -eq 0 ]; then

slapd_ms_log "RUNNING MASTER"

return $OCF_RUNNING_MASTER

fi

# we are slave

slapd_ms_check_state "slave"

if [ $? -eq 0 ]; then

slapd_ms_log "RUNNING SLAVE"

return $OCF_SUCCESS

fi

slapd_ms_log "RUNNING WTF?"

# oops, should not happen

return $OCF_ERR_GENERIC

}

#

# Validate whether we are what we shall be

#

slapd_ms_validate() {

if [ ! -f "${OCF_RESKEY_config}.master" ]; then

ocf_log err "No master config file present, setup ${OCF_RESKEY_config}.master"

return $OCF_ERR_CONFIGURED

fi

if [ ! -f "${OCF_RESKEY_config}.slave" ]; then

ocf_log err "No slave config file present, setup ${OCF_RESKEY_config}.slave"

return $OCF_ERR_CONFIGURED

fi

if [ "$OCF_RESKEY_CRM_meta_clone_max" -ne 2 ] \

|| [ "$OCF_RESKEY_CRM_meta_clone_node_max" -ne 1 ] \

|| [ "$OCF_RESKEY_CRM_meta_master_node_max" -ne 1 ] \

|| [ "$OCF_RESKEY_CRM_meta_master_max" -ne 1 ] ; then

ocf_log err "Clone options misconfigured."

exit $OCF_ERR_CONFIGURED

fi

# all checks passed

return $OCF_SUCCESS

}

case $__OCF_ACTION in

meta-data) meta_data;;

start) slapd_ms_start;;

promote) slapd_ms_promote;;

demote) slapd_ms_demote;;

stop) slapd_ms_stop;;

monitor) slapd_ms_monitor;;

validate-all) slapd_ms_validate;;

usage|help) slapd_ms_usage $OCF_SUCCESS;;

*) slapd_ms_usage $OCF_ERR_UNIMPLEMENTED;;

esac

exit $?

--------------------------------------------------------------------------------------

Download, untar and copy it on both nodes to /usr/lib/ocf/resource.d/custom/slapd-ms, afterwards set it executable.
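
The core trick of the agent is that the “state” of slapd is nothing more than which template currently sits in place of slapd.conf. Stripped of all OCF plumbing, the idea can be tried out in a temporary directory. This is a simplified sketch, not the agent itself: it compares files with cmp instead of md5sum, and detect_role and set_role are names invented for this illustration.

```shell
#!/bin/sh
# detect_role CONFIG
# Prints "master" or "slave" depending on which template the active config
# is identical to, or "unknown" if it matches neither.
detect_role() {
    config=$1
    if cmp -s "$config" "$config.master"; then
        echo master
    elif cmp -s "$config" "$config.slave"; then
        echo slave
    else
        echo unknown
    fi
}

# set_role CONFIG ROLE: activate a role by copying its template over the config
set_role() {
    cp "$1.$2" "$1"
}

# play it through with throw-away files instead of /etc/ldap/slapd.conf
dir=$(mktemp -d)
conf="$dir/slapd.conf"
echo "# master settings" > "$conf.master"
echo "# slave settings"  > "$conf.slave"

set_role "$conf" slave
detect_role "$conf"      # slave
set_role "$conf" master
detect_role "$conf"      # master
rm -rf "$dir"
```

The real agent additionally has to restart slapd after each switch and check that the process is alive, which is what the start, promote, demote and monitor functions above take care of.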

We still need to add the resource in heartbeat. Therefore we first create a primitive and encapsulate it then within a ms (multi-state) resource:

#> crm configure primitive LDAP-SERVER ocf:custom:slapd-ms op monitor interval="30s"

#> crm configure ms MS-LDAP-SERVER LDAP-SERVER params config="/etc/ldap/slapd.conf" \

lsb_script="/etc/init.d/slapd" meta clone-max="2" clone-node-max="1" master-max="1" \

master-node-max="1" notify="false"

The two params tell the resource where the init script (/etc/init.d/slapd) and the config file (/etc/ldap/slapd.conf) are. The clone meta attributes you already know, the master attributes are new. The first, master-max, defines that in the whole cluster only one instance can have the master state, and the other, master-node-max, defines the same for a single node. Both are default settings.

The next thing we want is to colocate the IP with the master OpenLDAP server. We will also assure that the IP is up before slapd is started.

#> crm configure order LDAP-AFTER-IP inf: VIRTUAL-IP1 MS-LDAP-SERVER

#> crm configure colocation LDAP-WITH-IP inf: VIRTUAL-IP1 MS-LDAP-SERVER
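
Note that the two constraints above name the ms resource as a whole. The crm shell also accepts a role or an action after a colon, so a variant that ties the IP explicitly to the master instance could look like the following. This is an untested sketch, the constraint names are made up, and it orders the IP after the promotion (the way it is usually shown in DRBD examples) instead of before the start of slapd as in this article:

```shell
# alternative to LDAP-WITH-IP: place the IP where the master runs
#> crm configure colocation IP-WITH-LDAP-MASTER inf: VIRTUAL-IP1 MS-LDAP-SERVER:Master

# alternative to LDAP-AFTER-IP: bring the IP up once the promotion is done
#> crm configure order IP-AFTER-LDAP-PROMOTE inf: MS-LDAP-SERVER:promote VIRTUAL-IP1:start
```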

One last thing: i set the default-resource-stickiness to 1 in this example, because i would like the ldap server to stay on the last online node and not migrate back.

Here is the full config:

#> crm configure show

INFO: building help index

node $id="8d0882b9-1d12-4551-b19f-d785407a58e3" node1

node $id="e90c2152-3e47-4858-9977-37c7ed3c325b" node2

primitive LDAP-SERVER ocf:custom:slapd-ms \

op monitor interval="30s"

primitive VIRTUAL-IP1 ocf:heartbeat:IPaddr \

params ip="10.0.0.100" \

op monitor interval="10s"

ms MS-LDAP-SERVER LDAP-SERVER \

params config="/etc/ldap/slapd.conf" lsb_script="/etc/init.d/slapd" \

meta clone-max="2" clone-node-max="1" master-max="1" master-node-max="1" notify="false" \

target-role="Slave"

colocation LDAP-WITH-IP inf: VIRTUAL-IP1 MS-LDAP-SERVER

order LDAP-AFTER-IP inf: VIRTUAL-IP1 MS-LDAP-SERVER

property $id="cib-bootstrap-options" \

dc-version="1.0.9-74392a28b7f31d7ddc86689598bd23114f58978b" \

cluster-infrastructure="Heartbeat" \

stonith-enabled="false" \

last-lrm-refresh="1258039093" \

default-resource-stickiness="1" \

no-quorum-policy="ignore"

Ok, where does this leave us ? We can now handle resources with multiple-states. Think MySQL replication or most databases for that matter. You can reboot a little and see how the slave gets the IP and becomes master while the old master, after boot, will stay the new slave. If you fill your LDAP with real data, it should also replicate fine.

Well, this is the “fault” of your init runlevels. Eg, if you install proftpd in debian, it will install itself in all runlevels with start or stop links (have a look at /etc/rc[0-6].d). So, if your offline node boots again, it will start those services according to the runlevel settings. However, pacemaker will probably take them down again as soon as it is aware of them. That’s why you might see a short flicker. Depending on the service, this might do no harm or be a real problem. In any case, you can remove the services from the runlevels, which i prefer to do, like so:

# check in which runlevels they are, save output maybe for later resetting

#> update-rc.d -n -f proftpd remove

# remove them

#> update-rc.d -f proftpd remove

You may want to check after an upgrade of the debian package whether they have been added again (which they shouldn’t, but..).
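
Such a check is quickly scripted. A minimal sketch, assuming the classic SysV layout with S?? start links below /etc/rc[0-6S].d; start_links is a made-up helper name, and the optional second argument only exists so that it can be tried against a scratch directory:

```shell
#!/bin/sh
# start_links SERVICE [ROOT]
# Prints every S?? start link for SERVICE below ROOT/rc[0-6S].d (ROOT defaults to /etc).
# No output means: the init system will not start the service on boot.
start_links() {
    service=$1
    root=${2:-/etc}
    for link in "$root"/rc[0-6S].d/S[0-9][0-9]"$service"; do
        # an unmatched glob stays literal, so only print entries that exist
        if [ -e "$link" ] || [ -L "$link" ]; then
            echo "$link"
        fi
    done
}

start_links proftpd
```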

Update (from Erik): In Ubuntu 10.04 and later you can just disable the resources instead of removing them permanently:

update-rc.d proftpd disable

This will be my last blog entry about pacemaker, but there is still far more to know. There are things called rules, with which you can add a time component to your cluster’s behaviour. Very complex colocation and location settings are also possible with them. Priorities can also help you to prefer single resources with finer granularity and more dynamically than you could with colocations… just to give you a hint about what is possible.

If you find any mistakes in my configuration or interpretations, please share.

Of course: no warranties or guarantees of any kind are given. Messed up system-configurations are likely. Headaches probable.


Source: http://www.cnblogs.com/popsuper1982/p/3885179.html