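Make sure the spider's parse method returns an iterable object (usually a list or a dict, most often a list of dicts). That return value can then be written to a file in a chosen format from the command line, which persists the scraped data.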

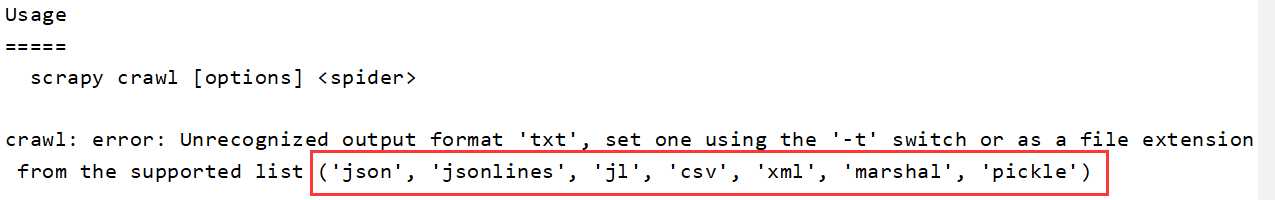

Storing the output in a chosen format: the scraped data can be written to files of different formats with scrapy crawl <spider name> -o xxx.json

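Note: this kind of persistence supports only a fixed set of file formats, such as json, jsonlines, jl, csv, xml, marshal and pickle. The screenshot shows the error reported when the output file name does not match one of them.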
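As a rough illustration of the extension check described above, the sketch below infers the export format from the output file name and rejects anything outside the supported set. The function name `infer_feed_format` and the hard-coded format set are assumptions made for this example; it mimics the behavior and is not Scrapy's actual implementation.

```python
import os

# Formats assumed for this sketch, based on Scrapy's default feed exporters.
SUPPORTED_FORMATS = {"json", "jsonlines", "jl", "csv", "xml", "marshal", "pickle"}


def infer_feed_format(filename):
    """Return the export format implied by the file extension (hypothetical helper)."""
    ext = os.path.splitext(filename)[1].lstrip(".").lower()
    if ext not in SUPPORTED_FORMATS:
        raise ValueError("Unrecognized output format %r" % ext)
    return ext


print(infer_feed_format("qiubai.json"))
print(infer_feed_format("data.csv"))
```

Calling the helper with a name such as qiubai.txt raises ValueError, which corresponds to the situation in which the crawl command refuses to start.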

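Scrapy ships with efficient, convenient persistence support that we can use directly. To use it, we first need to get to know the two files involved: items.py and pipelines.py.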

Persistence workflow:

1. Use the scrapy crawl xxx -o data.csv command

    # -*- coding: utf-8 -*-
    import scrapy
    from qiubaiPro.items import QiubaiproItem


    class QiubaiSpider(scrapy.Spider):
        name = 'qiubai'
        # allowed_domains = ['www.xxx.com']
        start_urls = ['https://www.qiushibaike.com/text/']

        # Command-line persistence: only the return value of parse
        # can be written to a file on disk
        def parse(self, response):
            div_list = response.xpath('//div[@id="content-left"]/div')
            all_data = []
            for div in div_list:
                # author = div.xpath('./div[1]/a[2]/h2/text()')[0].extract()
                author = div.xpath('./div[1]/a[2]/h2/text()').extract_first()
                content = div.xpath('./a/div/span//text()').extract()
                content = ''.join(content)
                dic = {
                    'author': author,
                    'content': content
                }
                all_data.append(dic)
            return all_data
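To see why a list of dicts is a convenient return type, here is a minimal stand-alone sketch of what a CSV export of such a list amounts to: the dict keys become the header and each dict becomes one row. It uses the standard library `csv` module rather than Scrapy's own exporter, and the helper name `rows_to_csv` and the sample data are made up for illustration.

```python
import csv
import io


def rows_to_csv(rows):
    """Serialize a list of dicts with identical keys to CSV text (hypothetical helper)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()), lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Sample data shaped like the all_data list returned by parse.
all_data = [
    {"author": "alice", "content": "first post"},
    {"author": "bob", "content": "second post"},
]
print(rows_to_csv(all_data))
```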

2. Store the data through item pipelines

    # -*- coding: utf-8 -*-
    import scrapy
    from qiubaiPro.items import QiubaiproItem


    class QiubaiSpider(scrapy.Spider):
        name = 'qiubai'
        # allowed_domains = ['www.xxx.com']
        start_urls = ['https://www.qiushibaike.com/text/']

        # Pipeline-based persistence
        def parse(self, response):
            div_list = response.xpath('//div[@id="content-left"]/div')
            for div in div_list:
                # author = div.xpath('./div[1]/a[2]/h2/text()')[0].extract()
                author = div.xpath('./div[1]/a[2]/h2/text()').extract_first()
                content = div.xpath('./a/div/span//text()').extract()
                content = ''.join(content)

                # Instantiate an item object
                item = QiubaiproItem()
                # Fields of an item are accessed with square brackets
                item['author'] = author
                item['content'] = content

                # Submit the item to the pipelines
                yield item

pipelines.py

The data can be stored in Redis, MySQL, or a local file:

    # -*- coding: utf-8 -*-

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html

    # Each pipeline class stores the parsed/scraped data on one platform
    import json

    import pymysql
    from redis import Redis


    class QiubaiproPipeline(object):
        fp = None

        def open_spider(self, spider):
            print('Spider started......')
            self.fp = open('./qiubai.txt', 'w', encoding='utf-8')

        # Persist the data held by the item object
        def process_item(self, item, spider):
            author = item['author']
            content = item['content']
            self.fp.write(author + ':' + content + '\n')
            return item  # handed to the next pipeline class to be run

        def close_spider(self, spider):
            print('Spider finished!!!')
            self.fp.close()


    class MysqlPipeLine(object):
        conn = None
        cursor = None

        def open_spider(self, spider):
            self.conn = pymysql.Connect(host='127.0.0.1', port=3306, user='root',
                                        password='', db='qiubai', charset='utf8')
            print(self.conn)

        def process_item(self, item, spider):
            self.cursor = self.conn.cursor()
            try:
                # Parameterized query: the driver escapes the values,
                # so quotes in the scraped text cannot break the SQL
                self.cursor.execute('insert into qiubai values(%s,%s)',
                                    (item['author'], item['content']))
                self.conn.commit()
            except Exception as e:
                print(e)
                self.conn.rollback()
            return item

        def close_spider(self, spider):
            # The cursor is still None if no item was ever processed
            if self.cursor:
                self.cursor.close()
            self.conn.close()


    class RedisPipeLine(object):
        conn = None

        def open_spider(self, spider):
            self.conn = Redis(host='127.0.0.1', port=6379)
            print(self.conn)

        def process_item(self, item, spider):
            dic = {
                'author': item['author'],
                'content': item['content']
            }
            # redis-py 3.0+ rejects dict values, so serialize to JSON first
            self.conn.lpush('qiubai', json.dumps(dic, ensure_ascii=False))
            return item
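The generated comment at the top of pipelines.py is a reminder that a pipeline only runs once it is enabled in the ITEM_PIPELINES setting. A possible settings.py fragment for the three classes above might look like the following, assuming the project package is named qiubaiPro (as the spider's import suggests); the priority numbers are arbitrary choices for this sketch. Pipelines with lower numbers run first, and each one receives the item returned by the previous one.

```python
# settings.py (fragment). Module path and priorities are assumptions for this sketch.
ITEM_PIPELINES = {
    "qiubaiPro.pipelines.QiubaiproPipeline": 300,  # local file
    "qiubaiPro.pipelines.MysqlPipeLine": 301,      # MySQL
    "qiubaiPro.pipelines.RedisPipeLine": 302,      # Redis
}

# Demonstration only: list the pipelines in the order they would
# process each item (lowest priority number first).
run_order = sorted(ITEM_PIPELINES, key=ITEM_PIPELINES.get)
for path in run_order:
    print(path)
```

The loop at the end is only there to make the ordering visible; a real settings.py would contain just the dictionary.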

Source: https://www.cnblogs.com/robertx/p/10957529.html