Value-Iteration Algorithm:

For each iteration k+1:

a. calculate the optimal state-value function for all s∈S;

b. untill algorithm converges.

end up with an optimal state-value function

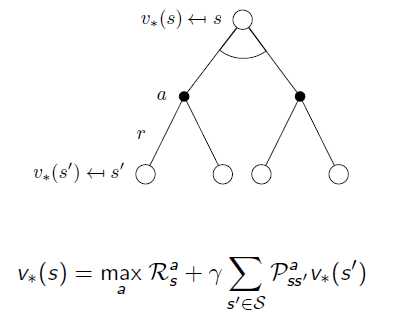

Optimal State-Value Function

As mentioned on the previous post, the method to pick up Optimal State-Value Function is shown below. From state s, we have multiple possible actions, what we will do is choose the best combination of immediate reward and state-value function from the next state.

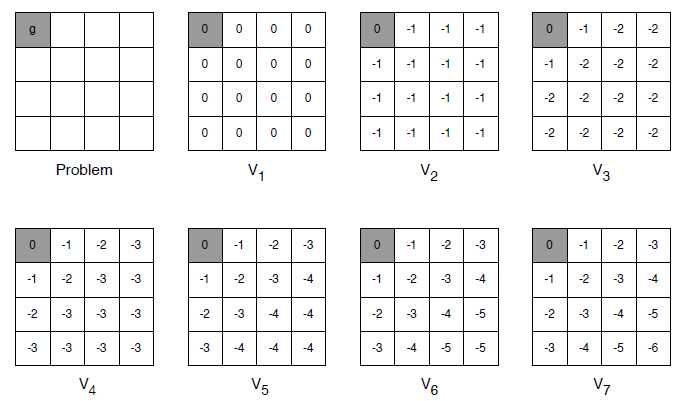

Example for a grid game, it is quite like information propagate from the terminal states backward:

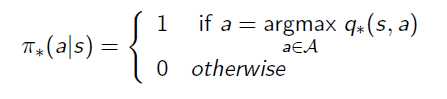

From State-Value Function to Policy

After we‘ve got the Optimal State-Value Function, the Optimal Policy can be aquired by maxmizing the Action-Value Function. This means we try all possible actions from state s, and then choose the one that has the maximum reward.

Value Iteration Algorithm for MDP

原文:https://www.cnblogs.com/rhyswang/p/11206150.html