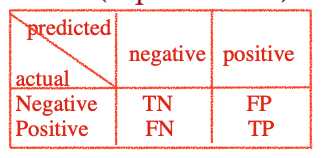

ACC = (TP+TN)/(P+N), where P = TP+FN (all actual positives) and N = TN+FP (all actual negatives)

ERR = (FP+FN)/(P+N) = 1-ACC

Precision = TP/(TP+FP) -- positive predictive value

Recall = TP/(TP+FN) -- true positive rate

F1 = 2/(1/Precision + 1/Recall) = 2*Precision*Recall/(Precision+Recall) -- harmonic mean of precision and recall
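As a minimal sketch, the metrics above can be computed directly from the four confusion-matrix counts. The function name `classification_metrics` and the example counts are hypothetical, chosen only for illustration, and the code assumes every denominator is nonzero. F1 is computed as the harmonic mean of precision and recall, 2*Precision*Recall/(Precision+Recall).

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute basic metrics from confusion-matrix counts (hypothetical helper).

    Assumes all denominators are nonzero.
    """
    p = tp + fn  # all actual positives
    n = tn + fp  # all actual negatives
    accuracy = (tp + tn) / (p + n)
    error = (fp + fn) / (p + n)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    # Harmonic mean of precision and recall
    f1 = 2 * precision * recall / (precision + recall)
    return {
        "accuracy": accuracy,
        "error": error,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Made-up counts, for illustration only
m = classification_metrics(tp=40, fp=10, tn=45, fn=5)
for name, value in m.items():
    print(f"{name}: {value:.4f}")
```

With these counts, accuracy and error sum to 1, and F1 falls between precision and recall, closer to the smaller of the two.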

True positive rate (TPR): the ratio of positive instances that are correctly classified as positive

TPR = TP/(TP+FN) = recall

True negative rate (TNR): the ratio of negative instances that are correctly classified as negative

TNR = TN/(TN+FP) = specificity

False positive rate (FPR): the ratio of negative instances that are incorrectly classified as positive.

FPR = FP/(TN+FP) = 1-specificity
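A small sketch of the three rates, computed from true and predicted labels. The helper names `confusion_counts` and `rates` and the toy labels are assumptions made for illustration; labels are taken to be 1 for positive and 0 for negative, and both classes are assumed to be present.

```python
def confusion_counts(y_true, y_pred):
    """Count TP, FP, TN, FN for binary labels (1 = positive, 0 = negative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, fp, tn, fn


def rates(y_true, y_pred):
    """Return (TPR, TNR, FPR); assumes both classes occur in y_true."""
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    tpr = tp / (tp + fn)  # recall: positives correctly classified
    tnr = tn / (tn + fp)  # negatives correctly classified
    fpr = fp / (tn + fp)  # negatives incorrectly classified as positive
    return tpr, tnr, fpr


# Toy labels, for illustration only
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
tpr, tnr, fpr = rates(y_true, y_pred)
print(tpr, tnr, fpr)
```

Because TNR and FPR share the denominator TN+FP, they always sum to 1.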

ROC (receiver operating characteristic) curve: TPR plotted against FPR as the classification threshold varies
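One way to trace the curve by hand, as a rough sketch: treat each distinct score as a threshold, predict positive when the score is at or above it, and record the resulting (FPR, TPR) pair. The function name `roc_points`, the toy labels, and the toy scores are hypothetical; the code assumes higher scores mean "more likely positive" and that both classes are present.

```python
def roc_points(y_true, scores):
    """Return (FPR, TPR) points obtained by sweeping the decision threshold.

    Assumes binary labels (1 = positive, 0 = negative) with both classes present.
    """
    p = sum(1 for t in y_true if t == 1)  # actual positives
    n = sum(1 for t in y_true if t == 0)  # actual negatives
    points = [(0.0, 0.0)]  # threshold above every score: nothing predicted positive
    for thr in sorted(set(scores), reverse=True):
        tp = sum(1 for t, s in zip(y_true, scores) if s >= thr and t == 1)
        fp = sum(1 for t, s in zip(y_true, scores) if s >= thr and t == 0)
        points.append((fp / n, tp / p))
    return points


# Toy data, for illustration only
y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
points = roc_points(y_true, scores)
for fpr, tpr in points:
    print(f"FPR={fpr:.3f}  TPR={tpr:.3f}")
```

The points run from (0, 0), where nothing is predicted positive, to (1, 1), where everything is. A curve that stays closer to the top-left corner indicates a better classifier.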

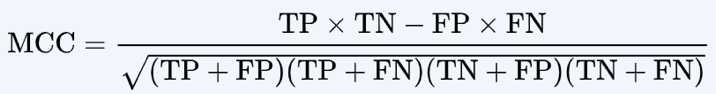

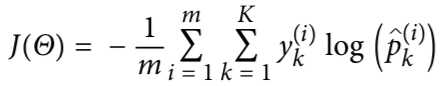

Evaluation metrics for classification

Original post: https://www.cnblogs.com/sherrydatascience/p/11817087.html