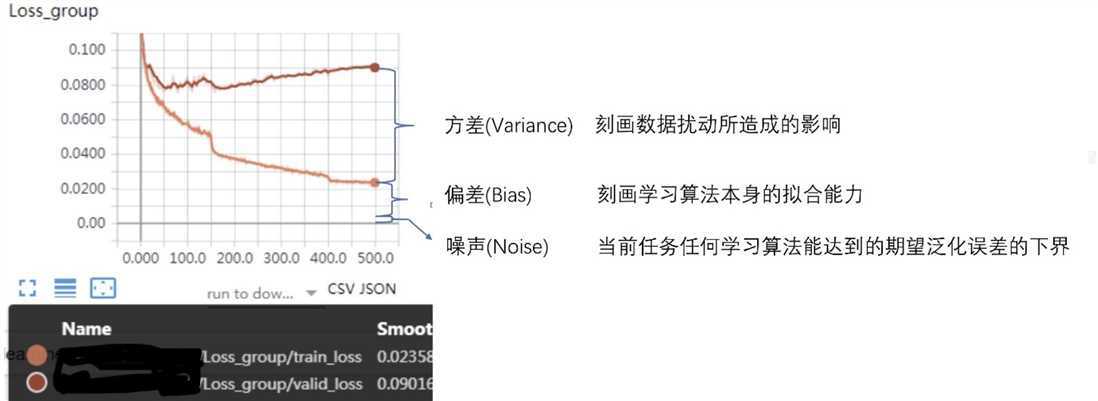

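The generalization error decomposes into three terms: squared bias, variance, and noise. That is, error = bias² + variance + noise.

Bias measures how far the learning algorithm's expected prediction deviates from the true result; it characterizes the fitting ability of the algorithm itself.

Variance measures how much the learned model's performance changes when the training set is replaced by another one of the same size; it characterizes the effect of data perturbation.

Noise is the lower bound on the expected generalization error that any learning algorithm can reach on the current task.

Strictly speaking, variance here is the variance of the predictions across models trained on different datasets, not the gap between the training loss and the test loss.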
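The decomposition can be checked numerically. The sketch below is only an illustration under assumed settings: a hypothetical through-the-origin linear fit, a true slope of 1.5, and Gaussian noise. It trains on many independently drawn datasets and measures the bias and variance of the prediction at one point `x0`. All names (`fit_slope`, `decompose`, `x0`) are made up for this example.

```python
import random

def fit_slope(xs, ys):
    """Least-squares slope for a line through the origin."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)

def decompose(x0=0.8, true_w=1.5, noise_std=0.5, n_points=10, n_datasets=2000, seed=0):
    """Estimate squared bias and variance of the prediction at x0 (assumed setup)."""
    rng = random.Random(seed)
    xs = [-1 + 2 * i / (n_points - 1) for i in range(n_points)]
    target = true_w * x0  # noise-free value at x0
    preds = []
    for _ in range(n_datasets):
        # Each dataset shares the inputs but has freshly drawn label noise.
        ys = [true_w * x + rng.gauss(0, noise_std) for x in xs]
        preds.append(fit_slope(xs, ys) * x0)
    mean_pred = sum(preds) / len(preds)
    bias_sq = (mean_pred - target) ** 2
    variance = sum((p - mean_pred) ** 2 for p in preds) / len(preds)
    mse = sum((p - target) ** 2 for p in preds) / len(preds)
    return bias_sq, variance, mse

bias_sq, variance, mse = decompose()
print(bias_sq, variance, mse)
```

Here `mse` is measured against the noise-free target, so it equals `bias_sq + variance`; measuring against a noisy label would add the noise term `noise_std ** 2` on top.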

Loss function: \(Loss = f(\hat y , y)\)

Cost function: \(Cost = \frac{1}{N}\sum_i f(\hat y_i, y_i)\)

Objective function: \(Obj = Cost + Regularization\)
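To make the three definitions concrete, here is a minimal sketch that assumes squared error as \(f\) and an L2 penalty as the regularization term. Both choices, and the coefficient `lam`, are assumptions made for illustration.

```python
def loss(y_hat, y):
    """Loss of a single sample (squared error is an assumed choice of f)."""
    return (y_hat - y) ** 2

def cost(y_hats, ys):
    """Cost: the average loss over all N samples."""
    return sum(loss(p, t) for p, t in zip(y_hats, ys)) / len(ys)

def objective(y_hats, ys, weights, lam=0.01):
    """Objective: cost plus a regularization term (an L2 penalty here)."""
    regularization = lam * sum(w * w for w in weights)
    return cost(y_hats, ys) + regularization

preds = [1.0, 2.0, 3.0]
targets = [1.0, 2.5, 2.0]
weights = [0.5, -1.0]

print("cost:", cost(preds, targets))
print("objective:", objective(preds, targets, weights))
```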

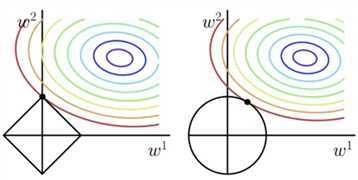

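L1 Regularization: \(\sum_i |w_i|\). The optimum often lies on a coordinate axis (a vertex of the constraint region), so training tends to produce sparse parameters.

L2 Regularization: \(\frac{\lambda}{2}\sum_i w_i^2\). Taking the learning rate as 1, the update is \(w_{i+1} = w_i - \frac{\partial Obj}{\partial w_i} = w_i - (\frac{\partial Loss}{\partial w_i}+\lambda w_i) = w_i(1-\lambda) - \frac{\partial Loss}{\partial w_i}\), so every step first shrinks the weight by the factor \(1-\lambda\). This is why L2 regularization is often called weight decay.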
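The equivalence between an L2 penalty and weight decay is easy to verify by hand. The sketch below writes the same SGD step in both forms, assuming the penalty is \(\frac{\lambda}{2} w^2\) and using an explicit learning rate `lr`; the function names are hypothetical.

```python
def sgd_step_l2(w, grad_loss, lr, lam):
    """One SGD step on Obj = Loss + (lam / 2) * w**2."""
    return w - lr * (grad_loss + lam * w)

def sgd_step_decay(w, grad_loss, lr, lam):
    """The same step written as: shrink the weight, then apply the loss gradient."""
    return w * (1 - lr * lam) - lr * grad_loss

w, grad_loss, lr, lam = 2.0, 0.3, 0.1, 0.01
a = sgd_step_l2(w, grad_loss, lr, lam)
b = sgd_step_decay(w, grad_loss, lr, lam)
print(a, b)

# With a zero loss gradient the weight decays geometrically towards 0.
shrunk = w
for _ in range(100):
    shrunk = sgd_step_decay(shrunk, 0.0, lr, lam)
print(shrunk)
```

Both forms give the same number, and the last loop shows the pure decay effect when the loss contributes no gradient.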

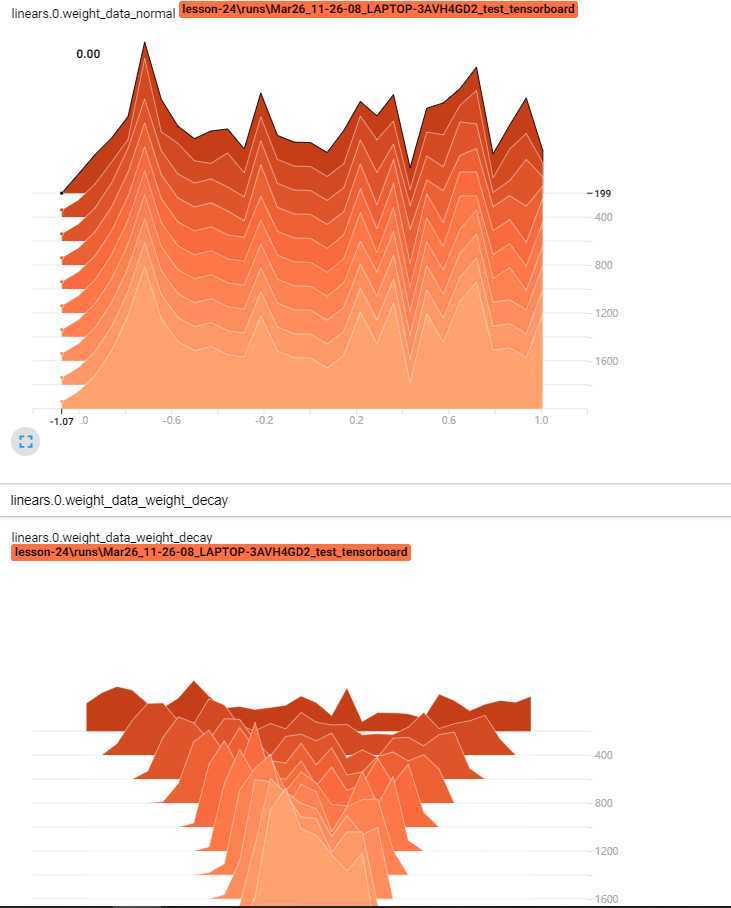

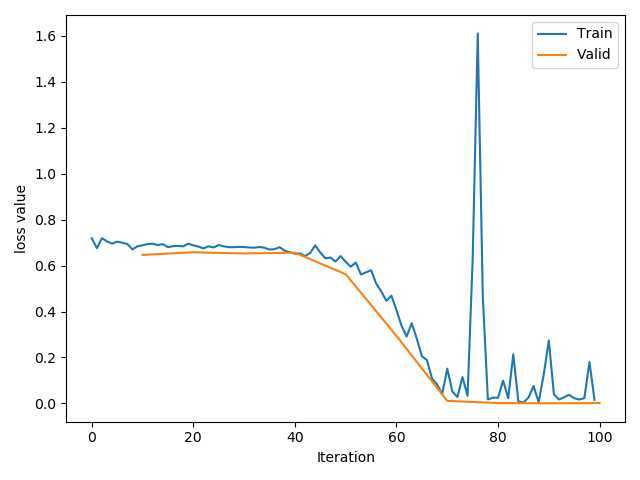

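We build one model with weight_decay and one without. As the number of training iterations grows, the loss of the model without the regularization term tends to 0.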

    import torch
    import torch.nn as nn
    from torch.utils.tensorboard import SummaryWriter

    # n_hidden, lr_init and max_iter are hyperparameters set elsewhere in the original script.

    # ============================ step 1/5 data ============================
    def gen_data(num_data=10, x_range=(-1, 1)):
        w = 1.5
        train_x = torch.linspace(*x_range, num_data).unsqueeze_(1)
        train_y = w*train_x + torch.normal(0, 0.5, size=train_x.size())
        test_x = torch.linspace(*x_range, num_data).unsqueeze_(1)
        test_y = w*test_x + torch.normal(0, 0.3, size=test_x.size())
        return train_x, train_y, test_x, test_y

    train_x, train_y, test_x, test_y = gen_data(x_range=(-1, 1))

    # ============================ step 2/5 model ============================
    class MLP(nn.Module):
        def __init__(self, neural_num):
            super(MLP, self).__init__()
            self.linears = nn.Sequential(
                nn.Linear(1, neural_num),
                nn.ReLU(inplace=True),
                nn.Linear(neural_num, neural_num),
                nn.ReLU(inplace=True),
                nn.Linear(neural_num, neural_num),
                nn.ReLU(inplace=True),
                nn.Linear(neural_num, 1),
            )

        def forward(self, x):
            return self.linears(x)

    net_normal = MLP(neural_num=n_hidden)
    net_weight_decay = MLP(neural_num=n_hidden)

    # ============================ step 3/5 optimizer ============================
    optim_normal = torch.optim.SGD(net_normal.parameters(), lr=lr_init, momentum=0.9)
    # optim_wdecay additionally applies weight_decay (the L2 penalty)
    optim_wdecay = torch.optim.SGD(net_weight_decay.parameters(), lr=lr_init, momentum=0.9, weight_decay=1e-2)

    # ============================ step 4/5 loss function ============================
    loss_func = torch.nn.MSELoss()

    # ============================ step 5/5 training loop ============================
    writer = SummaryWriter(comment='_test_tensorboard', filename_suffix="12345678")

    for epoch in range(max_iter):
        # forward
        pred_normal, pred_wdecay = net_normal(train_x), net_weight_decay(train_x)
        loss_normal, loss_wdecay = loss_func(pred_normal, train_y), loss_func(pred_wdecay, train_y)

        optim_normal.zero_grad()
        optim_wdecay.zero_grad()

        loss_normal.backward()
        loss_wdecay.backward()

        optim_normal.step()
        optim_wdecay.step()
        ...

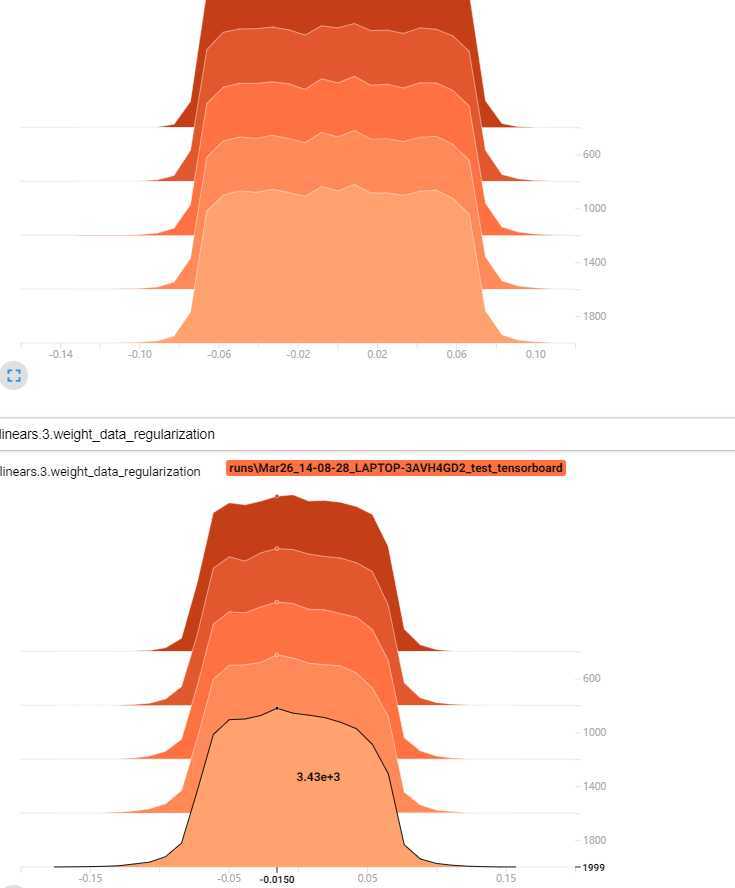

Looking at the parameters in TensorBoard, the parameters of the model trained with L2 are clearly more concentrated:

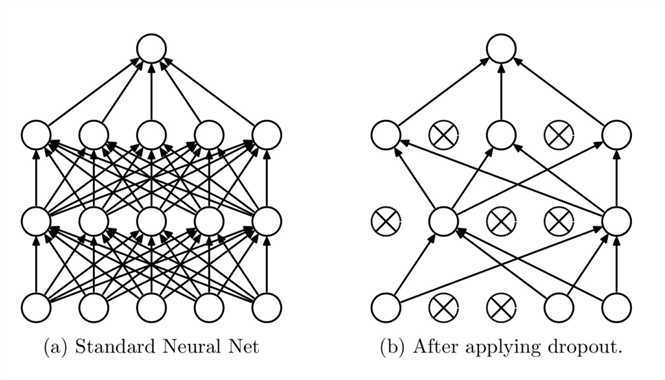

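Deactivation: weight = 0

Every neuron in the layer is dropped independently with probability prob; it does not mean that exactly a fraction prob of the neurons is dropped.

Suppose prob = 0.3. Dropout is not applied at test time, so to offset the resulting change in scale, the weights are divided by \((1-p)\) during training:

Test: \(100 = \sum_{100} W_x\)

Train: \(70 = \sum_{70} W_x \Longrightarrow 100 = \sum_{70} W_x/(1-p)\)

As a result, passing through the layer gives approximately the same output in both modes: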

    # Net, input_num and x come from the original script and are not shown here.
    net = Net(input_num, d_prob=0.5)
    net.linears[1].weight.detach().fill_(1.)

    net.train()  # switch back to training mode once testing is finished
    y = net(x)
    print("output in training mode", y)

    net.eval()   # call before testing starts
    y = net(x)
    print("output in eval mode", y)

    output in training mode tensor([9942.], grad_fn=<ReluBackward1>)
    output in eval mode tensor([10000.], grad_fn=<ReluBackward1>)
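The scaling argument can also be reproduced without any framework. The following sketch uses plain Python with 100 inputs of value 1 and p = 0.3, matching the formulas rather than the network above; `dropout_sum` is a hypothetical helper. It drops each input independently and divides the survivors by \(1-p\).

```python
import random

def dropout_sum(values, p, training, rng):
    """Sum the inputs after inverted dropout with drop probability p."""
    if not training:
        return sum(values)  # eval mode: no masking and no rescaling
    # Each value is kept independently with probability 1 - p, then rescaled.
    kept = [v / (1 - p) for v in values if rng.random() >= p]
    return sum(kept)

rng = random.Random(0)
values = [1.0] * 100
p = 0.3

eval_out = dropout_sum(values, p, training=False, rng=rng)
train_outs = [dropout_sum(values, p, training=True, rng=rng) for _ in range(2000)]
train_mean = sum(train_outs) / len(train_outs)

print("eval output:", eval_out)
print("mean training output:", train_mean)
```

A single training pass fluctuates around 100 because the number of surviving inputs is random; only the average over many passes matches the eval output closely.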

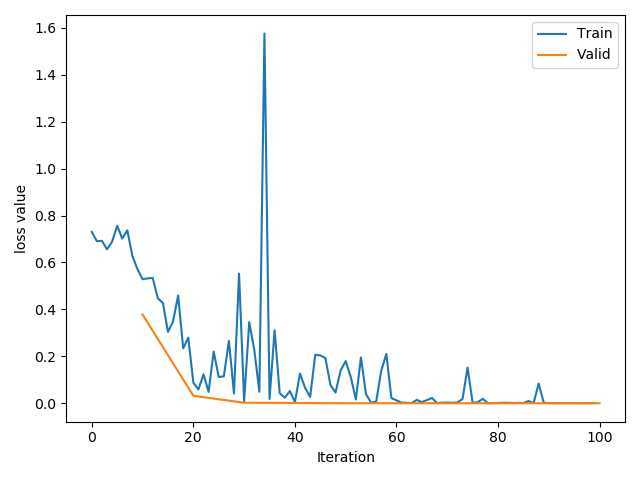

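We build one model with dropout and one without. As the number of training iterations grows, the curve fitted by the model with dropout is smoother.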

    # Same MLP as before, with a Dropout layer after each hidden activation.
    class MLP(nn.Module):
        def __init__(self, neural_num, d_prob=0.5):
            super(MLP, self).__init__()
            self.linears = nn.Sequential(
                nn.Linear(1, neural_num),
                nn.ReLU(inplace=True),

                nn.Dropout(d_prob),
                nn.Linear(neural_num, neural_num),
                nn.ReLU(inplace=True),

                nn.Dropout(d_prob),
                nn.Linear(neural_num, neural_num),
                nn.ReLU(inplace=True),

                nn.Dropout(d_prob),
                nn.Linear(neural_num, 1),
            )

        def forward(self, x):
            return self.linears(x)

    net_prob_0 = MLP(neural_num=n_hidden, d_prob=0.)    # dropout disabled
    net_prob_05 = MLP(neural_num=n_hidden, d_prob=0.5)  # drop probability 0.5

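Looking at the weight distribution of the linear layers, the parameters of the model with dropout are clearly more concentrated, with a higher peak.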

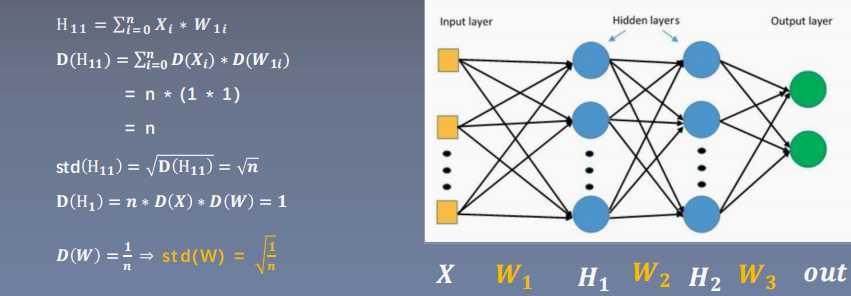

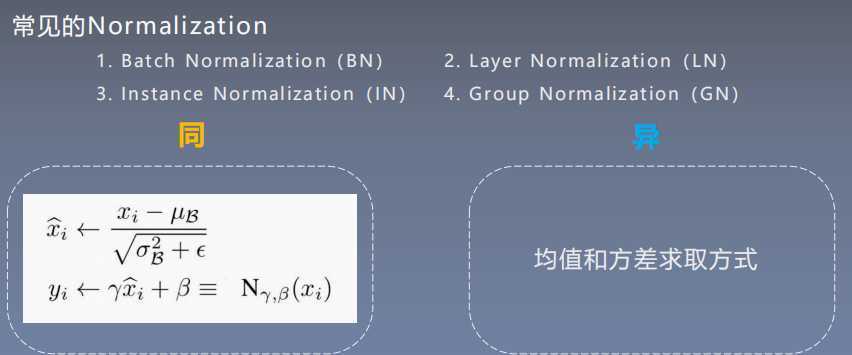

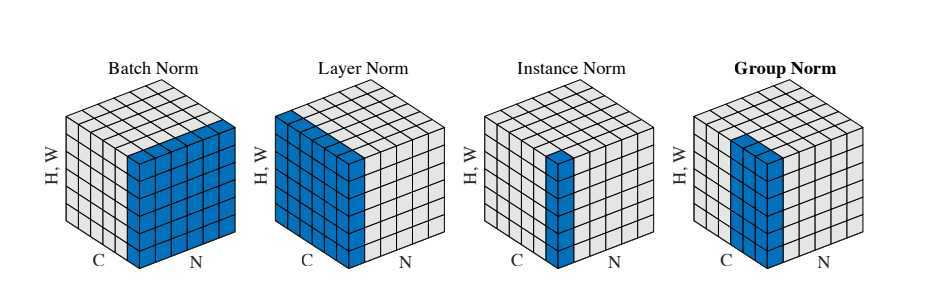

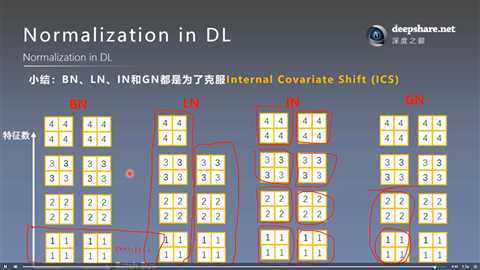

Batch: a batch of data, usually a mini-batch

Normalization: zero mean, unit variance

Advantages:

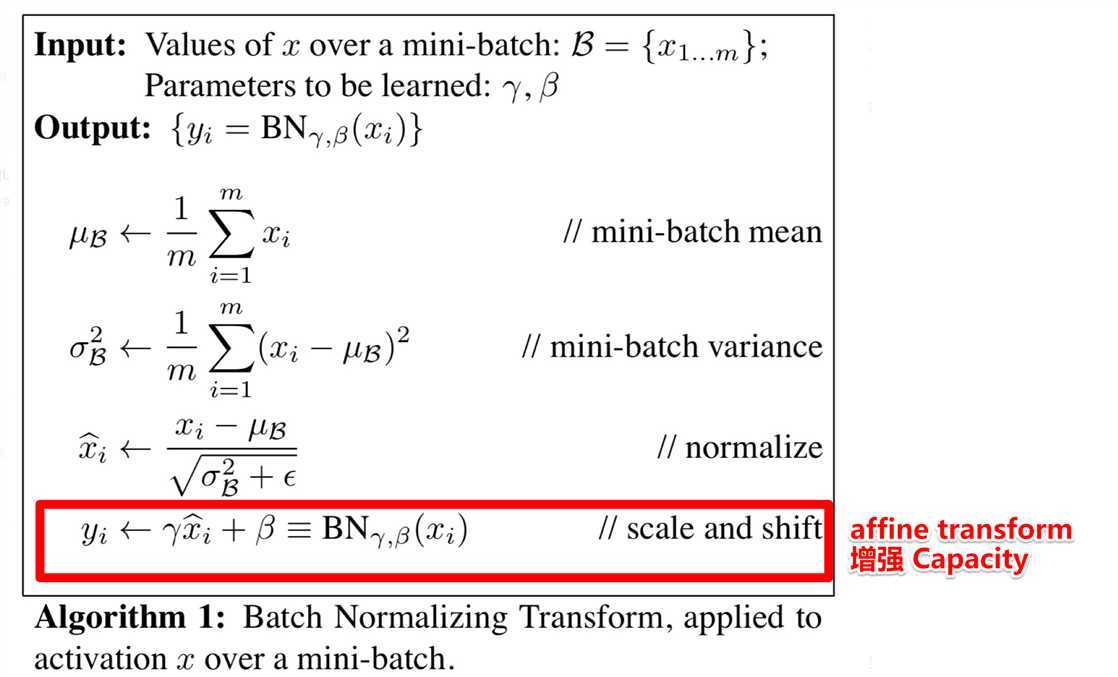

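Note that \(\gamma\) and \(\beta\) are learnable parameters. If normalization does not help a given layer, the network can learn \(\gamma = \sigma_{\mathcal{B}}, \beta = \mu_{\mathcal{B}}\), which turns the layer into an identity transform.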
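As a sanity check on the identity-transform remark, here is a minimal sketch of BN for a single feature over one mini-batch in plain Python. `batch_norm` is a hypothetical helper, and `eps` is included, so the exact identity needs \(\gamma = \sqrt{\sigma_{\mathcal{B}}^2 + \epsilon}\), which reduces to \(\sigma_{\mathcal{B}}\) when `eps` is negligible.

```python
import math

def batch_norm(xs, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature over a mini-batch, then apply the affine transform."""
    mu = sum(xs) / len(xs)
    var = sum((x - mu) ** 2 for x in xs) / len(xs)  # biased batch variance
    return [gamma * (x - mu) / math.sqrt(var + eps) + beta for x in xs]

batch = [2.0, 4.0, 6.0, 8.0]
eps = 1e-5
mu = sum(batch) / len(batch)
var = sum((x - mu) ** 2 for x in batch) / len(batch)

normalized = batch_norm(batch, eps=eps)  # gamma = 1, beta = 0
recovered = batch_norm(batch, gamma=math.sqrt(var + eps), beta=mu, eps=eps)

print(normalized)
print(recovered)
```

With the default parameters the output has zero mean and roughly unit variance; with the learned values set to the batch statistics, the input comes back unchanged.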

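For details, see the reading notes and implementation for "Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift".

BN prevents vanishing or exploding gradients caused by uneven data scales or distributions, which would otherwise make training difficult.

The other normalization methods covered in Section 4 are likewise designed to avoid ICS (internal covariate shift).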

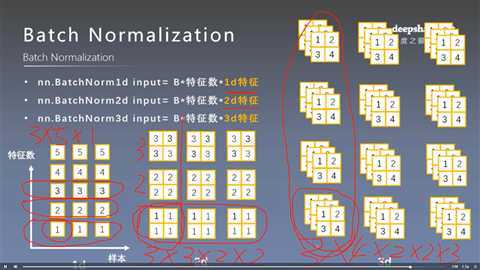

In PyTorch, nn.BatchNorm1d, nn.BatchNorm2d and nn.BatchNorm3d all inherit from _BatchNorm and take the following parameters:

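    __init__(self, num_features,        # number of features per sample (the most important one)
             eps=1e-5,                  # term added to the denominator for numerical stability
             momentum=0.1,              # momentum of the exponential moving average of mean/var
             affine=True,               # whether to apply the learnable affine transform
             track_running_stats=True)  # whether to keep running mean/var for use in eval mode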

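Main attributes of the BatchNorm layer:

Training: each batch is normalized with its own mean and variance, and the running estimates are updated with an exponential moving average

    running_mean = (1 - momentum) * running_mean + momentum * mean_t

    running_var = (1 - momentum) * running_var + momentum * var_t

Testing: the stored running_mean and running_var are used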
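The update rule can be traced by hand. The sketch below applies it to a running mean that starts at 0 (the initial value PyTorch uses for `running_mean`; `running_var` starts at 1) and sees three batches whose mean is 1.0. The helper name `update_running` is made up for this example.

```python
def update_running(running, batch_stat, momentum=0.1):
    """Exponential moving average update used for running_mean / running_var."""
    return (1 - momentum) * running + momentum * batch_stat

running_mean = 0.0
history = []
for batch_mean in [1.0, 1.0, 1.0]:
    running_mean = update_running(running_mean, batch_mean)
    history.append(running_mean)

print(history)  # approximately 0.1, 0.19, 0.271
```

With momentum = 0.1 the running estimate moves only 10% of the way towards each new batch statistic, so it needs many iterations to converge.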

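As the figure above shows, in the 1D, 2D and 3D cases the number of features refers to the number of features, feature maps, and feature volumes respectively. BN computes the mean and variance of each feature over all samples, so the three cases in the figure have 5, 3 and 3 features, and \(\gamma, \beta\) have dimension 5, 3 and 3 accordingly.

We add BN layers after the convolutional and linear layers of the original network. Note that the convolutional layers are followed by 2D BN and the linear layer by 1D BN.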

    import torch.nn as nn
    import torch.nn.functional as F

    class LeNet_bn(nn.Module):
        def __init__(self, classes):
            super(LeNet_bn, self).__init__()
            self.conv1 = nn.Conv2d(3, 6, 5)
            self.bn1 = nn.BatchNorm2d(num_features=6)    # 2D BN after a conv layer

            self.conv2 = nn.Conv2d(6, 16, 5)
            self.bn2 = nn.BatchNorm2d(num_features=16)

            self.fc1 = nn.Linear(16 * 5 * 5, 120)
            self.bn3 = nn.BatchNorm1d(num_features=120)  # 1D BN after a linear layer

            self.fc2 = nn.Linear(120, 84)
            self.fc3 = nn.Linear(84, classes)

        def forward(self, x):
            out = self.conv1(x)
            out = self.bn1(out)
            out = F.relu(out)
            out = F.max_pool2d(out, 2)

            out = self.conv2(out)
            out = self.bn2(out)
            out = F.relu(out)
            out = F.max_pool2d(out, 2)

            out = out.view(out.size(0), -1)  # flatten to (batch, features)

            out = self.fc1(out)
            out = self.bn3(out)
            out = F.relu(out)

            out = F.relu(self.fc2(out))
            out = self.fc3(out)
            return out

        def initialize_weights(self):
            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    nn.init.xavier_normal_(m.weight.data)
                    if m.bias is not None:
                        m.bias.data.zero_()
                elif isinstance(m, nn.BatchNorm2d):
                    m.weight.data.fill_(1)
                    m.bias.data.zero_()
                elif isinstance(m, nn.Linear):
                    nn.init.normal_(m.weight.data, 0, 1)
                    m.bias.data.zero_()

net = LeNet(classes=2)不经过初始化:

net.initialize_weights():

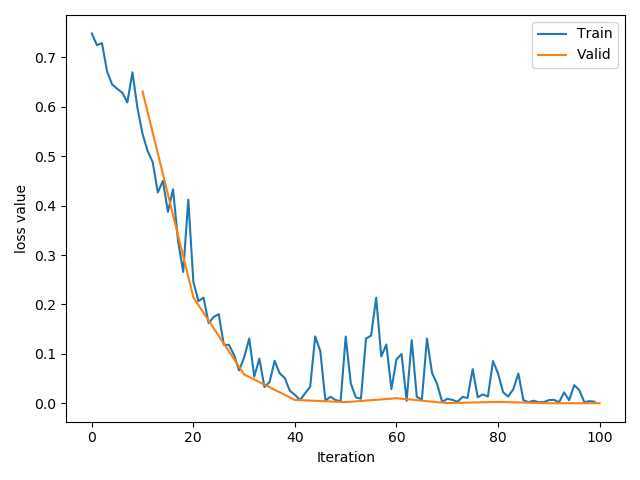

net = LeNet_bn(classes=2)结果如下:即使Loss有不稳定的区间,其最大值不像前两种超过1.5

Motivation: BN is not suitable for variable-length networks such as RNNs

Idea: compute the mean and variance layer by layer

Notes:

    nn.LayerNorm(normalized_shape,        # shape of the features in this layer
                 eps=1e-05,
                 elementwise_affine=True  # whether to apply the learnable affine transform
                 )

Note that normalized_shape can be any number of trailing dimensions of the input. For a batched input of shape [8, 6, 3, 4], it can be [6, 3, 4], [3, 4] or [4], but not [6, 3].
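The trailing-dimension rule can be expressed as a small check. `is_valid_normalized_shape` below is a hypothetical helper written for this note, not part of PyTorch; it only mirrors the rule stated above.

```python
def is_valid_normalized_shape(input_shape, normalized_shape):
    """Return True if normalized_shape matches the trailing dims of input_shape."""
    n = len(normalized_shape)
    if n == 0 or n > len(input_shape):
        return False
    return list(input_shape[-n:]) == list(normalized_shape)

input_shape = [8, 6, 3, 4]
for candidate in ([6, 3, 4], [3, 4], [4], [6, 3]):
    print(candidate, is_valid_normalized_shape(input_shape, candidate))
```

Only [6, 3] is rejected, because it is not a suffix of the input shape.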

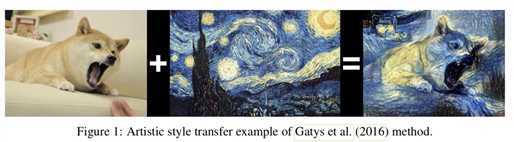

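Motivation: BN is not suitable for image generation. The images in a batch all differ, so they cannot be normalized with shared batch statistics

Idea: compute the mean and variance per instance (per channel of each sample)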

    nn.InstanceNorm2d(num_features,
                      eps=1e-05,
                      momentum=0.1,
                      affine=False,
                      track_running_stats=False)
    # 1d and 3d variants also exist

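Image style transfer is one application where BN cannot be used: the input images all differ, so the mean and variance can only be computed per channel of each image.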
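The difference between the two schemes comes down to which values share one mean. In the sketch below, the toy input is a nested list indexed as `x[sample][channel][pixel]`; this layout and the helper names are assumptions made for the example. It computes the BN-style means (one per channel, pooled over the batch) next to the IN-style means (one per channel of each sample).

```python
def mean(values):
    return sum(values) / len(values)

def batch_norm_means(x):
    """One mean per channel, pooled over every sample and pixel."""
    num_channels = len(x[0])
    return [mean([v for sample in x for v in sample[c]]) for c in range(num_channels)]

def instance_norm_means(x):
    """One mean per (sample, channel) pair, pooled over pixels only."""
    return [[mean(channel) for channel in sample] for sample in x]

x = [
    [[1.0, 3.0], [10.0, 30.0]],  # sample 0: two channels, two pixels each
    [[5.0, 7.0], [50.0, 70.0]],  # sample 1
]

print(batch_norm_means(x))     # [4.0, 40.0]
print(instance_norm_means(x))  # [[2.0, 20.0], [6.0, 60.0]]
```

The IN statistics of one image are unaffected by the other image in the batch, which is the property style transfer needs.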

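Motivation: with small batches, the statistics estimated by BN are inaccurate

Idea: when there is not enough data, make up for it with channels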

    nn.GroupNorm(num_groups,    # number of groups; must be a divisor of num_channels
                 num_channels,
                 eps=1e-05,
                 affine=True)

Notes:

Use case: large-model tasks (small batch size)

When num_groups = 1, it is equivalent to LN

When num_groups = num_channels, it is equivalent to IN
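How the group count changes which values share statistics can be seen in a small sketch. `group_norm` below is a hypothetical pure-Python helper that normalizes a single sample given as a list of channels; it omits the affine parameters and is not PyTorch's implementation.

```python
import math

def group_norm(sample, num_groups, eps=1e-5):
    """Normalize one sample (a list of channels) group by group."""
    num_channels = len(sample)
    if num_channels % num_groups != 0:
        raise ValueError("num_groups must divide num_channels")
    size = num_channels // num_groups
    out = []
    for g in range(num_groups):
        group = sample[g * size:(g + 1) * size]
        flat = [v for channel in group for v in channel]
        mu = sum(flat) / len(flat)
        var = sum((v - mu) ** 2 for v in flat) / len(flat)
        out.extend([[(v - mu) / math.sqrt(var + eps) for v in channel] for channel in group])
    return out

sample = [[1.0, 3.0], [5.0, 7.0], [10.0, 30.0], [50.0, 70.0]]  # 4 channels, 2 pixels each

one_group = group_norm(sample, num_groups=1)    # all channels share one mean and variance
per_channel = group_norm(sample, num_groups=4)  # every channel has its own statistics

print([sum(ch) / len(ch) for ch in one_group])
print([sum(ch) / len(ch) for ch in per_channel])
```

With a single group, all channels of the sample are pooled, as in layer normalization over the channel and spatial dimensions. With one group per channel, each channel is normalized on its own, as in instance normalization.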

Original post: https://www.cnblogs.com/RyanSun17373259/p/12575866.html