| 主机名 | ip | 配置 | 系统 |

| heboan-hadoop-000 | 10.1.1.15 | 8C/8G | CentOS7.7 |

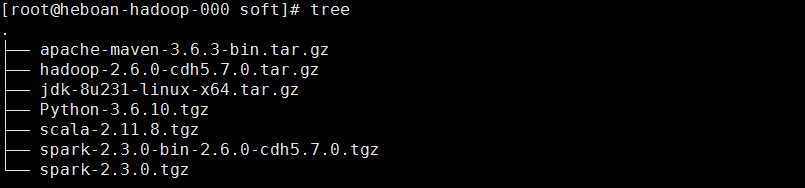

工具包

配置本机ssh免密

时间校准

文件描述符优化

tar xf jdk-8u231-linux-x64.tar.gz -C /srv/ vim ~/.bash_profile export JAVA_HOME=/srv/jdk1.8.0_231 export PATH=$JAVA_HOME/bin:$PATH source ~/.bash_profile

tar xf apache-maven-3.6.3-bin.tar.gz -C /srv/ vim ~/.bash_profile export MAVEN_HOME=/srv/apache-maven-3.6.3 export PATH=$MAVEN_HOME/bin:$PATH source ~/.bash_profile #更改本地仓库存路径 mkdir /data/maven_repository vim /srv/apache-maven-3.6.3/conf/settings.xml

yum install gcc gcc-c++ openssl-devel readline-devel unzip -y tar xf Python-3.6.10.tgz cd Python-3.6.10 ./configure --prefix=/srv/python36 --enable-optimizations make && make install vim ~/.bash_profile export PATH=/srv/python36/bin:$PATH source ~/.bash_profile

tar xf scala-2.11.8.tgz -C /srv/ vim ~/.bash_profile export SCALA_HOME=/srv/scala-2.11.8 export PATH=$SCALA_HOME/bin:$PATH source ~/.bash_profile

下载安装

wget http://archive.cloudera.com/cdh5/cdh/5/hadoop-2.6.0-cdh5.7.0.tar.gz tar xf hadoop-2.6.0-cdh5.7.0.tar.gz -C /srv/ vim ~/.bash_profile export HADOOP_HOME=/srv/hadoop-2.6.0-cdh5.7.0 export PATH=$HADOOP_HOME/bin:$PATH source ~/.bash_profile

配置hadoop

cd /srv/hadoop-2.6.0-cdh5.7.0/etc/hadoop

#export JAVA_HOME=${JAVA_HOME} export JAVA_HOME=/srv/jdk1.8.0_231

<configuration>

<property>

<name>fs.default.name</name>

<value>hdfs://heboan-hadoop-000:8020</value>

</property>

</configuration>

mkdir -p /data/hadoop/{namenode,datanode} <configuration> <property> <name>dfs.namenode.name.dir</name> <value>/data/hadoop/namenode</value> </property> <property> <name>dfs.datanode.data.dir</name> <value>/data/hadoop/datanode</value> </property> <property> <name>dfs.replication</name> <value>1</value> </property> </configuration>

cp mapred-site.xml.template mapred-site.xml <configuration> <property> <name>mapreduce.framework.name</name> <value>yarn</value> </property> </configuration>

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

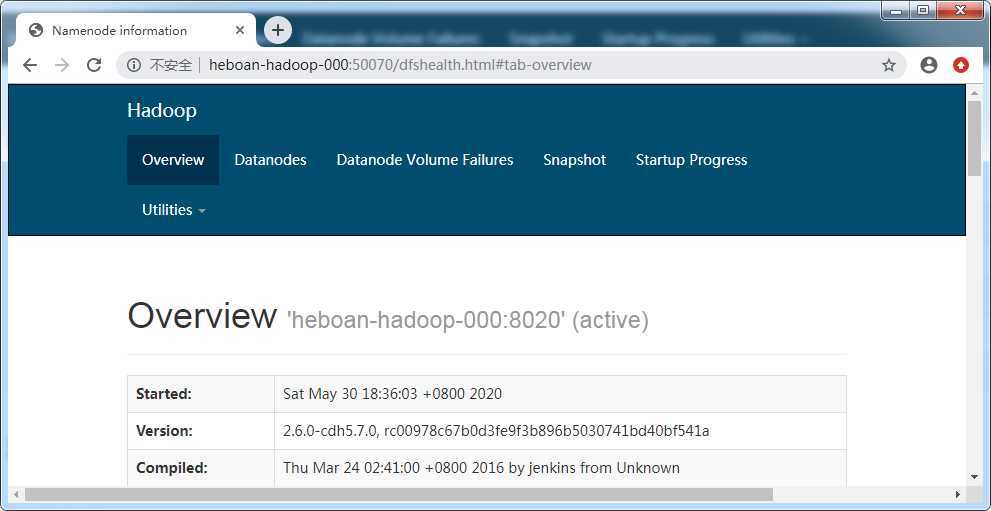

初始化hdfs

cd /srv/hadoop-2.6.0-cdh5.7.0/bin ./hadoop namenode -format

启动hdfs

jps查看是否启动进程: DataNode NameNode SecondaryNameNode

/srv/hadoop-2.6.0-cdh5.7.0/sbin ./start-dfs.sh

测试创建一个目录

# hdfs dfs -mkdir /test # hdfs dfs -ls / drwxr-xr-x - root supergroup 0 2020-05-30 10:19 /test

浏览器访问http://heboan-hadoop-000:50070

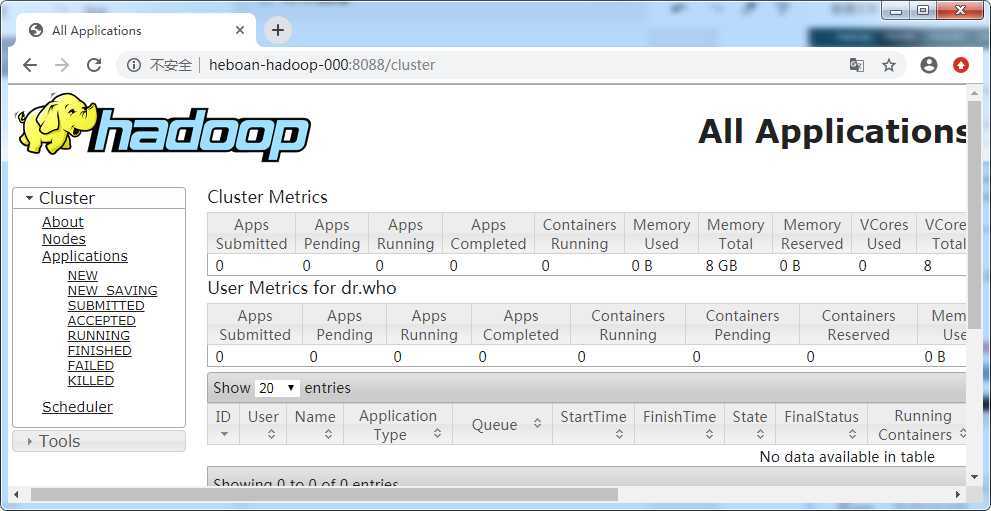

启动yarn

jps查看是否启动进程: ResourceManager NodeManager

cd /srv/hadoop-2.6.0-cdh5.7.0/sbin/ ./start-yarn.sh

浏览器访问http://heboan-hadoop-000:8088

进入spark下载页面:http://spark.apache.org/downloads.html

可以看下编译spark的文档,里面有相关的编译注意事项,比如mavn,jdk的版本要求,机器配置等

http://spark.apache.org/docs/latest/building-spark.html

下载spark-2.3.0.tzg后,进行解压编译

tar xf spark-2.3.0.tgz cd spark-2.3.0 ./dev/make-distribution.sh --name 2.6.0-cdh5.7.0 --tgz -Pyarn -Phadoop-2.6 -Phive -Phive-thriftserver -Dhadoop.version=2.6.0-cdh5.7.0

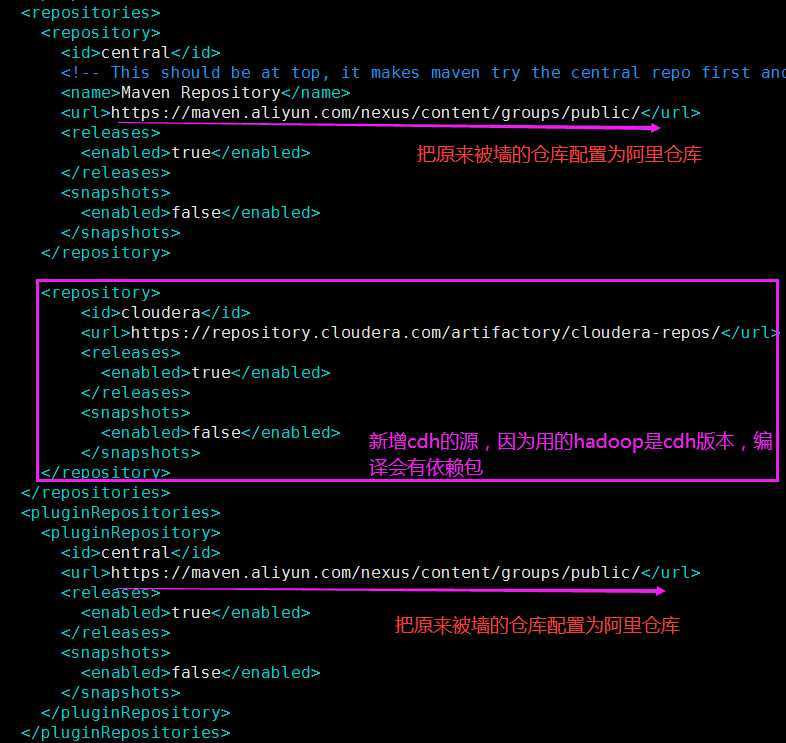

编译依赖网络环境,默认情况下,指定的仓库源被墙无法访问

mvn配置settings.conf添加阿里仓库加速

<mirrors>

<mirror>

<id>alimaven</id>

<name>aliyun maven</name>

<url>https://maven.aliyun.com/nexus/content/groups/public/</url>

<mirrorOf>central</mirrorOf>

</mirror>

<mirrors>

修改spark的pom.xml,更改仓库地址为阿里源地址,并添加cdh源

vim spark-2.3.0/pom.xml

替换maven为自己安装的

vim dev/make-distribution.sh #MVN="$SPARK_HOME/build/mvn" MVN="${MAVEN_HOME}/bin/mvn"

编译时间长,取决于你的网络环境,编译完成后,会打一个包命名为:spark-2.3.0-bin-2.6.0-cdh5.7.0.tgz

本文所有工具包已传至网盘,不想折腾的同学可以小额打赏联系博主

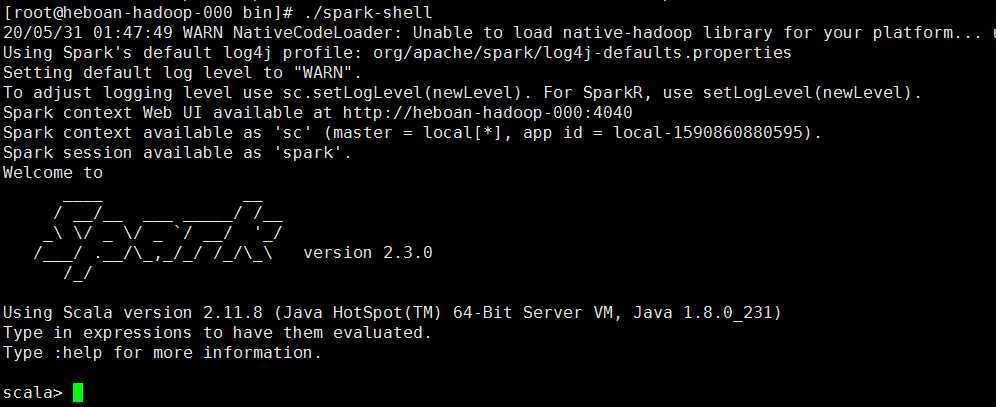

tar xf spark-2.3.0-bin-2.6.0-cdh5.7.0.tgz -C /srv/ cd /srv/spark-2.3.0-bin-2.6.0-cdh5.7.0/bin/ ./spark-shell

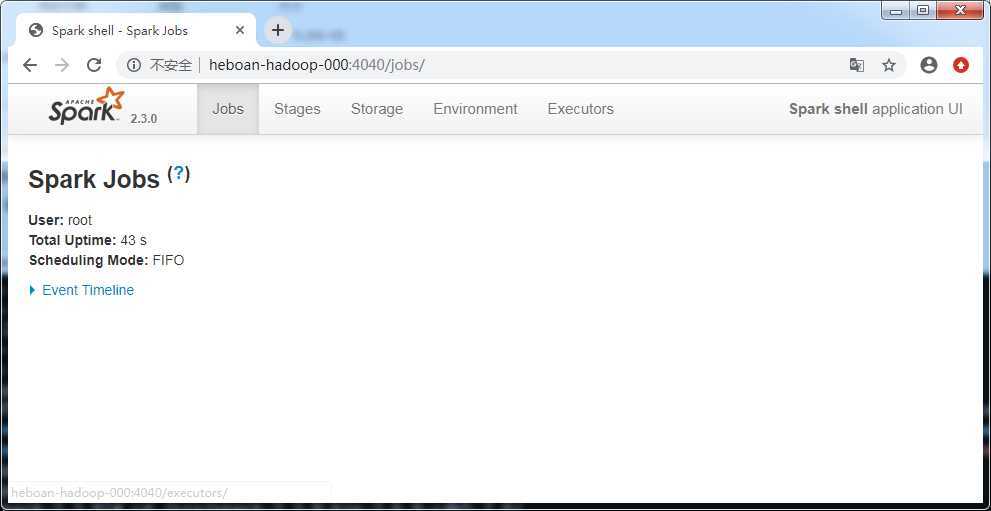

浏览器访问http://heboan-hadoop-000:4040

原文:https://www.cnblogs.com/sellsa/p/12994006.html