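(1) Use the get() function of the requests library to visit the Bing homepage 20 times, print the returned status and the text content, and compute the length of the page content returned by the text attribute and by the content attribute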

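    import requests

    def getHTMLText(url):
        """Fetch url once; return status, text, content and both lengths."""
        try:
            r = requests.get(url, timeout=30)
            r.raise_for_status()  # raise an exception on an HTTP error status (4xx/5xx)
            r.encoding = 'utf-8'  # decode as utf-8 whatever the original encoding was
            # status, text and content, then the lengths of text and content
            return r.status_code, r.text, r.content, len(r.text), len(r.content)
        except requests.RequestException:
            return ""

    url = "https://cn.bing.com/?toHttps=1&redig=731C98468AFA474D85AECB7DB98B95D9"
    # Visit 20 times. The loop sits outside the function: a return inside
    # the loop would end it after the first request.
    for i in range(20):
        print(getHTMLText(url))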

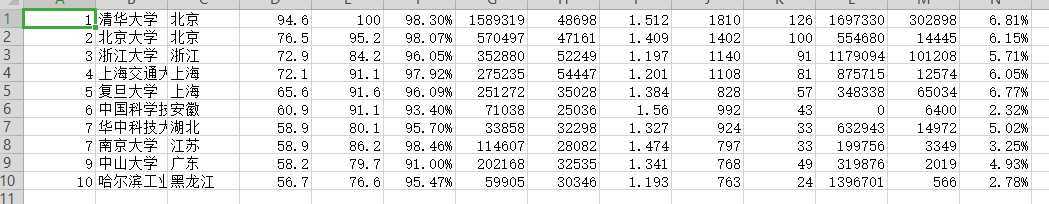
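
The two lengths usually differ because `r.text` is a decoded `str`, so `len()` counts characters, while `r.content` is the raw `bytes` body, so `len()` counts bytes. The sketch below illustrates this offline. It makes no request, and `sample_text` and `sample_content` are hypothetical stand-ins for `r.text` and `r.content`.

```python
# Offline illustration: no network request is made here.
# sample_text plays the role of r.text (a decoded str),
# sample_content plays the role of r.content (the raw bytes).
sample_text = "<title>必应</title>"
sample_content = sample_text.encode("utf-8")

text_length = len(sample_text)        # counts characters
content_length = len(sample_content)  # counts bytes

print(text_length, content_length)
```

Each Chinese character takes three bytes in UTF-8, so a page containing Chinese text has a larger `content` length than `text` length.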

(2) Scrape the 2019 Best Chinese Universities ranking (only the top ten schools are shown here) and save it as a CSV file

    import csv
    import os

    import requests
    from bs4 import BeautifulSoup

    allUniv = []

    def getHTMLText(url):
        """Return the page text, or an empty string if the request fails."""
        try:
            r = requests.get(url, timeout=30)
            r.raise_for_status()
            r.encoding = 'utf-8'
            return r.text
        except requests.RequestException:
            return ""

    def fillUnivList(soup):
        """Collect the cell text of every table row into allUniv."""
        for tr in soup.find_all('tr'):
            ltd = tr.find_all('td')
            if len(ltd) == 0:  # skip rows without <td> cells, e.g. the header row
                continue
            allUniv.append([td.string for td in ltd])

    def printUnivList(num):
        """Print the first num universities."""
        print("{:^4}{:^10}{:^5}{:^8}{:^10}".format("排名", "学校名称", "省市", "总分", "培养规模"))
        for u in allUniv[:num]:  # slicing avoids an IndexError if fewer rows were scraped
            print("{:^4}{:^10}{:^5}{:^8}{:^10}".format(u[0], u[1], u[2], u[3], u[6]))

    def writercsv(save_road, num, title):
        """Append rows if the file exists; otherwise create it with a header row."""
        file_exists = os.path.isfile(save_road)
        with open(save_road, 'a' if file_exists else 'w', newline='') as f:
            csv_write = csv.writer(f, dialect='excel')
            if not file_exists:
                csv_write.writerow(title)
            for u in allUniv[:num]:
                csv_write.writerow(u)

    # Column titles: rank, school name, province/city, total score, then the indicator columns
    title = ["排名", "学校名称", "省市", "总分", "生源质量", "培养结果", "科研规模", "科研质量", "顶尖成果", "顶尖人才", "科技服务", "产学研究合作", "成果转化"]
    save_road = r"F:\python\csvData.csv"  # raw string, so the backslashes are not treated as escapes

    def main():
        url = 'http://www.zuihaodaxue.cn/zuihaodaxuepaiming2019.html'
        html = getHTMLText(url)
        soup = BeautifulSoup(html, "html.parser")
        fillUnivList(soup)
        printUnivList(10)
        writercsv(save_road, 10, title)

    main()
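
One caveat about `printUnivList`: a spec such as `^10` pads with ASCII spaces, which are narrower than Chinese characters in most terminals, so the columns tend to drift. A common workaround is to pad the Chinese column with the full-width space U+3000 instead. The sketch below assumes that approach. `format_row` and the sample rows are hypothetical and made up for illustration; they are not scraped data.

```python
FULLWIDTH_SPACE = chr(12288)  # U+3000, as wide as one Chinese character

def format_row(rank, name, score):
    # Hypothetical helper: centre the school name with full-width padding
    # so Chinese text lines up; the other columns keep ASCII padding.
    return "{0:^4}{1:{3}^10}{2:^8}".format(rank, name, score, FULLWIDTH_SPACE)

# Made-up sample rows, not real ranking data.
rows = [("1", "示例大学", "90.0"), ("2", "测试学院", "80.5")]
for row in rows:
    print(format_row(*row))
```

The nested field `{3}` inside the format spec supplies the fill character, so the same pattern carries over to the other Chinese-text columns.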

(3) Open the file
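
Besides opening the file in a spreadsheet program, the saved CSV can be read back with the standard `csv` module. This is only a sketch. To stay self-contained it writes a small made-up file to a temporary directory rather than touching `F:\python\csvData.csv`, and it assumes the file is read with the same encoding it was written in. `read_csv_rows` is a hypothetical helper name.

```python
import csv
import os
import tempfile

def read_csv_rows(path, encoding="utf-8"):
    # Hypothetical helper: return the header row and the data rows.
    with open(path, newline="", encoding=encoding) as f:
        rows = list(csv.reader(f))
    return rows[0], rows[1:]

# Build a small stand-in file so the example runs on its own.
tmp_dir = tempfile.mkdtemp()
sample_path = os.path.join(tmp_dir, "csvData.csv")
with open(sample_path, "w", newline="", encoding="utf-8") as f:
    writer = csv.writer(f, dialect="excel")
    writer.writerow(["排名", "学校名称", "省市"])
    writer.writerow(["1", "示例大学", "北京"])  # made-up row, not scraped data

header, data = read_csv_rows(sample_path)
print(header)
for row in data:
    print(row)
```

For the real file, pass its path and the encoding it was actually written with. The scraping script opens the file without an `encoding` argument, so it uses the platform default.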

Original: https://www.cnblogs.com/qinan-nlx/p/13208102.html