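Splash is a JavaScript rendering service: a lightweight browser with an HTTP API, implemented in Python on top of Twisted and Qt. The Twisted Qt reactor makes the service fully asynchronous, which lets it take advantage of WebKit's concurrency.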

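Scrapy has a limitation of its own: it has no JavaScript engine, so it can only fetch the static HTML a server returns and cannot see content generated by JavaScript. Since most modern sites rely on JavaScript to build or enrich their pages, pairing Scrapy with Splash lets the crawler wait for a page to finish rendering before extracting data from it.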

Here Splash is installed with Docker, which is the simplest and most convenient route:

docker run -p 8050:8050 scrapinghub/splash

Once the command runs, output like the following appears:

J-pro:scrapy will$ docker run -p 8050:8050 scrapinghub/splash
2020-07-05 07:43:32+0000 [-] Log opened.
2020-07-05 07:43:32.605417 [-] Xvfb is started: ['Xvfb', ':190283716', '-screen', '0', '1024x768x24', '-nolisten', 'tcp']
QStandardPaths: XDG_RUNTIME_DIR not set, defaulting to '/tmp/runtime-splash'
2020-07-05 07:43:33.109852 [-] Splash version: 3.4.1
2020-07-05 07:43:33.356860 [-] Qt 5.13.1, PyQt 5.13.1, WebKit 602.1, Chromium 73.0.3683.105, sip 4.19.19, Twisted 19.7.0, Lua 5.2
2020-07-05 07:43:33.357321 [-] Python 3.6.9 (default, Nov 7 2019, 10:44:02) [GCC 8.3.0]
2020-07-05 07:43:33.357536 [-] Open files limit: 1048576
2020-07-05 07:43:33.357825 [-] Can't bump open files limit
2020-07-05 07:43:33.385921 [-] proxy profiles support is enabled, proxy profiles path: /etc/splash/proxy-profiles
2020-07-05 07:43:33.386193 [-] memory cache: enabled, private mode: enabled, js cross-domain access: disabled
2020-07-05 07:43:33.657176 [-] verbosity=1, slots=20, argument_cache_max_entries=500, max-timeout=90.0
2020-07-05 07:43:33.657856 [-] Web UI: enabled, Lua: enabled (sandbox: enabled), Webkit: enabled, Chromium: enabled
2020-07-05 07:43:33.659508 [-] Site starting on 8050
2020-07-05 07:43:33.659701 [-] Starting factory <twisted.web.server.Site object at 0x7fcc3cbb7160>
2020-07-05 07:43:33.660750 [-] Server listening on http://0.0.0.0:8050

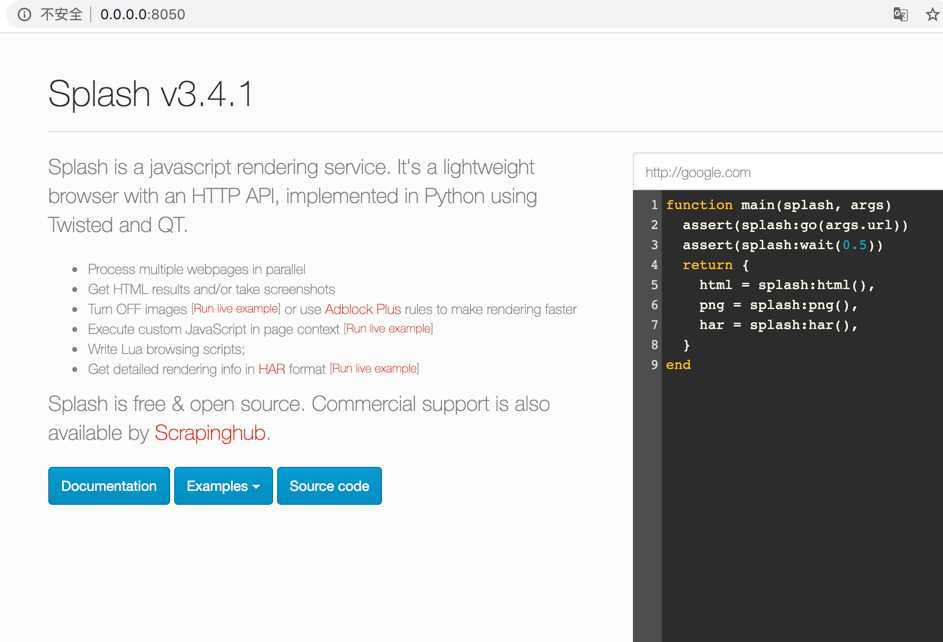

Open http://0.0.0.0:8050 in a browser. If the Splash web UI page appears, Splash is installed successfully.
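Besides the web UI, the HTTP API can be exercised directly. The following is a minimal sketch, assuming Splash is reachable at http://localhost:8050 (an assumption; adjust to your host and port), that builds a request to Splash's render.html endpoint with its `url` and `wait` parameters. The helper names `render_html_url` and `fetch_rendered_html` are made up for this illustration.

```python
from urllib.parse import urlencode
from urllib.request import urlopen


def render_html_url(page_url, wait=0.5, splash_url="http://localhost:8050"):
    """Build the URL that asks Splash's render.html endpoint to render page_url."""
    # urlencode percent-escapes the target URL so it survives as a query value.
    query = urlencode({"url": page_url, "wait": wait})
    return f"{splash_url}/render.html?{query}"


def fetch_rendered_html(page_url, wait=0.5, splash_url="http://localhost:8050"):
    """Return the rendered HTML of page_url; needs a running Splash instance."""
    with urlopen(render_html_url(page_url, wait, splash_url), timeout=30) as resp:
        return resp.read().decode("utf-8", errors="replace")


# Example (requires the Docker container from above to be running):
# html = fetch_rendered_html("http://yao.xywy.com/class.htm")
```

If the call returns HTML that contains content normally filled in by JavaScript, the service is rendering pages as expected.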

Next, install the scrapy-splash package:

pip3 install scrapy-splash

1. Create a Scrapy project. If you have not set one up yet, follow https://www.cnblogs.com/will-xz/p/13111048.html first.

2. Open settings.py and add the following configuration:

SPLASH_URL = 'http://0.0.0.0:8050'

DOWNLOADER_MIDDLEWARES = {
    'scrapy_splash.SplashCookiesMiddleware': 723,
    'scrapy_splash.SplashMiddleware': 725,
    'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware': 810,
}

SPIDER_MIDDLEWARES = {
    'scrapy_splash.SplashDeduplicateArgsMiddleware': 100,
}

DUPEFILTER_CLASS = 'scrapy_splash.SplashAwareDupeFilter'
HTTPCACHE_STORAGE = 'scrapy_splash.SplashAwareFSCacheStorage'
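The numbers in DOWNLOADER_MIDDLEWARES are priorities, not arbitrary IDs. Scrapy calls each middleware's process_request in increasing order and process_response in decreasing order. The sketch below only sorts the three entries from this configuration to make that order visible; it ignores the built-in middlewares that Scrapy merges in, and the helper names `request_order` and `response_order` are made up for this illustration.

```python
DOWNLOADER_MIDDLEWARES = {
    "scrapy_splash.SplashCookiesMiddleware": 723,
    "scrapy_splash.SplashMiddleware": 725,
    "scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware": 810,
}


def request_order(middlewares):
    """Names in the order their process_request hooks run (low priority number first)."""
    return [name for name, _ in sorted(middlewares.items(), key=lambda kv: kv[1])]


def response_order(middlewares):
    """Names in the order their process_response hooks run (high priority number first)."""
    return list(reversed(request_order(middlewares)))


for name in response_order(DOWNLOADER_MIDDLEWARES):
    print(name)
```

On the response path HttpCompressionMiddleware (810) runs before SplashMiddleware (725), so, as far as I understand the scrapy-splash setup, responses are already decompressed by the time the Splash middleware processes them.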

3. For a hands-on demo, create demo_splash.py with the following code:

# -*- coding: UTF-8 -*-

import scrapy
from scrapy_splash import SplashRequest


class DemoSplash(scrapy.Spider):
    """
    name: the attribute Scrapy uses to identify this spider; must be unique
    allowed_domains: domains the spider may crawl; leaving it unset allows all domains
    start_urls: the initial list of URLs to crawl
    start_requests: reads the links in start_urls and builds a Request for each
        with make_requests_from_url, so overriding start_requests lets us
        supply our own pattern-based URLs instead
    parse: callback that processes a response and returns the extracted data
        and any URLs to follow
    log: writes log messages
    closed: called when the spider closes
    """

    # Spider name
    name = "demo_splash"
    allowed_domains = []
    start_urls = [
        'http://yao.xywy.com/class.htm',
    ]

    def parse(self, response):
        print("Start crawling")
        # Fetch the target URL through Splash, waiting 0.5 seconds for rendering
        yield SplashRequest("http://yao.xywy.com/class/201-0-0-1-0-1.htm", callback=self.parse_page, args={'wait': 0.5})

    def parse_page(self, response):
        print(response.xpath('//div'))
        # Parse and store the data here
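For the "parse and store" step, one common option is a Scrapy item pipeline. The following is a minimal sketch, assuming parse_page is changed to yield plain dicts (for example `yield {"url": response.url}`); the class name `JsonLinesPipeline` and the default file name items.jsonl are made up for this illustration.

```python
import json


class JsonLinesPipeline:
    """Write each scraped item to a JSON Lines file, one JSON object per line."""

    def __init__(self, path="items.jsonl"):
        self.path = path
        self.file = None

    def open_spider(self, spider):
        # Called once when the spider starts; the file is overwritten on each run.
        self.file = open(self.path, "w", encoding="utf-8")

    def close_spider(self, spider):
        # Called once when the spider finishes.
        self.file.close()

    def process_item(self, item, spider):
        # ensure_ascii=False keeps Chinese text readable in the output file.
        self.file.write(json.dumps(dict(item), ensure_ascii=False) + "\n")
        return item
```

To enable it, the class would be registered in settings.py through the ITEM_PIPELINES setting, for example `ITEM_PIPELINES = {'myproject.pipelines.JsonLinesPipeline': 300}`, where `myproject.pipelines` is a placeholder for wherever the class lives in your project.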

4. Run the crawl command:

scrapy crawl demo_splash

5. Done!

Source: https://www.cnblogs.com/will-xz/p/13246828.html