Source:

https://data-flair.training/blogs/kafka-architecture/

In our last Kafka tutorial, we discussed Kafka use cases and applications.

Today, in this Kafka tutorial, we will discuss Kafka architecture. We will look at the APIs Kafka provides and learn about the Kafka broker, Kafka consumer, ZooKeeper, and Kafka producer. We will also cover some fundamental concepts of Kafka.

So, let’s start with Apache Kafka architecture.

Apache Kafka has four core APIs: the Producer API, the Consumer API, the Streams API, and the Connector API. Let’s discuss them one by one:

The Producer API allows an application to publish a stream of records to one or more Kafka topics.
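To make "publishing records to one or more topics" concrete, here is a minimal Python sketch that assumes a toy in-memory cluster. `ToyCluster` and `ToyProducer` are hypothetical names for illustration only; they are not the Kafka client API (the real Java client exposes publishing as `KafkaProducer.send`). The sketch also assumes key-based partitioning: Kafka's default partitioner hashes the record key with murmur2, and `zlib.crc32` is used here only as a stand-in.

```python
import zlib


class ToyCluster:
    """Hypothetical in-memory stand-in for a Kafka cluster.

    Each topic is a list of partitions; each partition is an append-only list
    of (key, value) records, where a record's list index is its offset.
    """

    def __init__(self):
        self.topics = {}

    def create_topic(self, name, num_partitions):
        self.topics[name] = [[] for _ in range(num_partitions)]


class ToyProducer:
    """Publishes records to one or more topics of a ToyCluster."""

    def __init__(self, cluster):
        self.cluster = cluster

    def partition_for(self, topic, key):
        # Hashing the key means records with the same key always land in the
        # same partition. crc32 is only a stand-in for Kafka's murmur2 hash.
        num_partitions = len(self.cluster.topics[topic])
        return zlib.crc32(key.encode("utf-8")) % num_partitions

    def send(self, topic, key, value):
        """Append one record and return its (partition, offset)."""
        partition = self.partition_for(topic, key)
        log = self.cluster.topics[topic][partition]
        log.append((key, value))
        return partition, len(log) - 1


# Usage: one producer publishing to two topics.
cluster = ToyCluster()
cluster.create_topic("orders", 3)
cluster.create_topic("audit", 1)
producer = ToyProducer(cluster)
producer.send("orders", "user-1", "created")
producer.send("orders", "user-1", "paid")
producer.send("audit", "user-1", "order created")
```

Keying by hash keeps all records for one key in order within a single partition, which is why both "user-1" orders land together.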


The Consumer API permits an application to subscribe to one or more topics and to process the stream of records produced to them.
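A minimal Python sketch of the consumer side, assuming in-memory topics stored as a dict of lists with a single partition per topic. `ToyConsumer`, `subscribe`, and `poll` are hypothetical names chosen for this illustration; the real Java client (`KafkaConsumer`) is considerably more involved. The sketch shows only the two ideas in the paragraph: subscribing to several topics, and processing whatever has been produced to them since the last read.

```python
class ToyConsumer:
    """Hypothetical consumer over in-memory topics (topic name -> list of records).

    Each topic has a single partition here, so a record's list index is its offset.
    """

    def __init__(self, topics):
        self.topics = topics      # shared with whoever produces to the topics
        self.subscriptions = []   # topic names, in subscription order
        self.positions = {}       # topic name -> next offset to read

    def subscribe(self, names):
        for name in names:
            if name not in self.subscriptions:
                self.subscriptions.append(name)
                self.positions[name] = 0

    def poll(self):
        """Return the records produced since the last poll as (topic, offset, value)."""
        batch = []
        for name in self.subscriptions:
            log = self.topics.get(name, [])
            for offset in range(self.positions[name], len(log)):
                batch.append((name, offset, log[offset]))
            self.positions[name] = len(log)
        return batch


# Usage: subscribe to two of three topics and process what arrives.
topics = {"clicks": ["home", "cart"], "views": ["product-42"], "other": ["ignored"]}
consumer = ToyConsumer(topics)
consumer.subscribe(["clicks", "views"])
for topic, offset, value in consumer.poll():
    print(f"{topic}[{offset}] = {value}")
```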

The Streams API permits an application to act as a stream processor: it consumes an input stream from one or more topics and produces an output stream to one or more output topics, effectively transforming input streams into output streams.
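The consume-transform-produce loop described above can be sketched in a few lines of Python, assuming in-memory topics stored as a dict of lists. `ToyStreamProcessor` and `to_words` are hypothetical names; the real Kafka Streams library is a Java library with operators such as `flatMapValues`, and it also handles state, fault tolerance, and partitioning, none of which this sketch attempts.

```python
class ToyStreamProcessor:
    """Hypothetical stream processor over in-memory topics (name -> list of records).

    It consumes from one or more input topics, applies `transform` to each record,
    and appends every record `transform` returns to the output topic.
    """

    def __init__(self, topics, inputs, output, transform):
        self.topics = topics
        self.inputs = inputs
        self.output = output
        self.transform = transform
        self.positions = {name: 0 for name in inputs}  # next offset per input topic

    def run_once(self):
        """Process everything new on the input topics; return how many records were produced."""
        out_log = self.topics.setdefault(self.output, [])
        produced = 0
        for name in self.inputs:
            log = self.topics.get(name, [])
            for record in log[self.positions[name]:]:
                for out in self.transform(record):
                    out_log.append(out)
                    produced += 1
            self.positions[name] = len(log)
        return produced


def to_words(line):
    # One input record (a sentence) becomes zero or more output records (words).
    return [word.lower() for word in line.split()]


# Usage: turn a "sentences" topic into a "words" topic.
topics = {"sentences": ["Hello Kafka", "hello streams"]}
processor = ToyStreamProcessor(topics, ["sentences"], "words", to_words)
processor.run_once()
```

Calling `run_once` repeatedly only processes records that arrived since the previous call, which is the "stream" part of stream processing in miniature.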

The Connector API is used to build and run reusable producers or consumers that connect Kafka topics to existing applications or data systems.

For example, a connector to a relational database might capture every change to a table.
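The relational-database example can be sketched as a toy source connector in Python. This is a minimal illustration under stated assumptions: the table is a dict of primary key to row, and changes are found by diffing successive snapshots. `capture_changes` and `ToySourceConnector` are hypothetical names, and snapshot diffing is a simplification chosen here; many production change-data-capture connectors read the database's transaction log instead.

```python
import copy


def capture_changes(before, after):
    """Diff two table snapshots (primary key -> row dict) into change events.

    Events are (op, key, row) tuples; deletes carry the last known row.
    Keys are sorted only so the output order is deterministic.
    """
    events = []
    for key in sorted(after):
        if key not in before:
            events.append(("insert", key, after[key]))
        elif before[key] != after[key]:
            events.append(("update", key, after[key]))
    for key in sorted(before):
        if key not in after:
            events.append(("delete", key, before[key]))
    return events


class ToySourceConnector:
    """Hypothetical source connector: polls a table and publishes changes to a topic."""

    def __init__(self, read_table, topics, topic):
        self.read_table = read_table  # callable returning the current table snapshot
        self.topics = topics          # in-memory topics: name -> list of records
        self.topic = topic
        self.last = {}                # snapshot seen on the previous poll

    def poll(self):
        # Deep-copy so later in-place edits to the table don't alter the saved snapshot.
        current = copy.deepcopy(self.read_table())
        events = capture_changes(self.last, current)
        self.topics.setdefault(self.topic, []).extend(events)
        self.last = current
        return len(events)


# Usage: every insert, update, and delete on the table becomes a record in "db.users".
table = {1: {"name": "alice"}}
topics = {}
connector = ToySourceConnector(lambda: table, topics, "db.users")
connector.poll()               # publishes the initial insert
table[1]["name"] = "alicia"    # an update
table[2] = {"name": "bob"}     # an insert
connector.poll()
```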


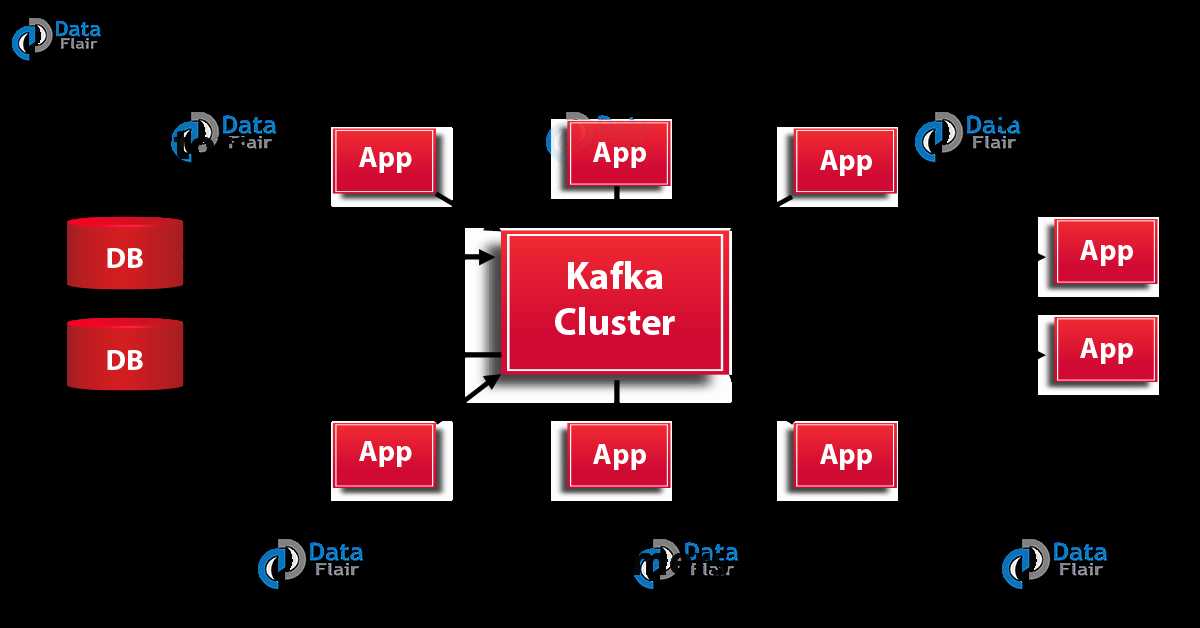

The diagram below shows an Apache Kafka cluster:

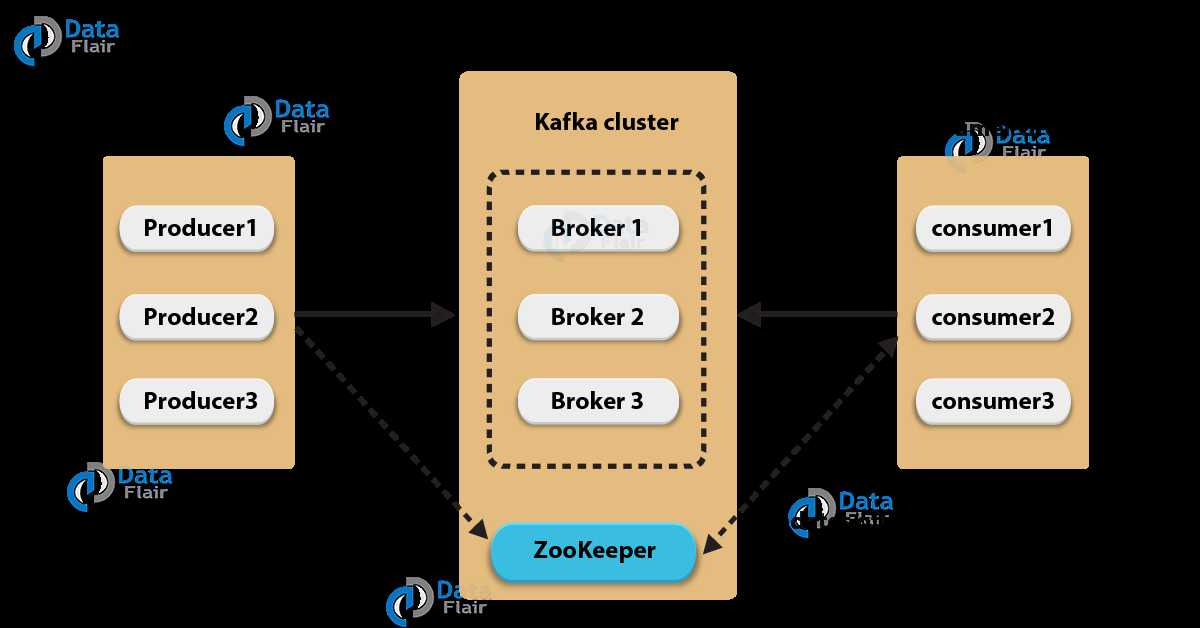

Let’s describe each component of Kafka Architecture shown in the above diagram:

A Kafka cluster typically consists of multiple brokers so that it can balance load.

The brokers themselves are stateless with respect to cluster coordination, so they use ZooKeeper to maintain the cluster state.

A single Kafka broker instance can handle hundreds of thousands of reads and writes per second, and each broker can handle terabytes of messages without performance impact.

In addition, ZooKeeper is used for Kafka broker leader election.

Kafka brokers use ZooKeeper for management and coordination.

ZooKeeper is also used to notify producers and consumers when a new broker joins the Kafka system or when a broker fails.

As soon as ZooKeeper sends such a notification, the producers and consumers act on it and start coordinating their tasks with another broker.
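The notify-and-switch behavior described above can be sketched in Python as an observer pattern. `ToyRegistry` and `ToyClient` are hypothetical names; the sketch models only the behavior the paragraph describes (a registry of live brokers that notifies watchers, and a client that moves to another broker on failure), not ZooKeeper's actual API or Kafka's real client metadata handling. Choosing `min(live)` as the replacement broker is an arbitrary, deterministic assumption.

```python
class ToyRegistry:
    """Hypothetical stand-in for ZooKeeper's role here: tracks live brokers and notifies watchers."""

    def __init__(self):
        self.live = set()
        self.watchers = []

    def watch(self, callback):
        self.watchers.append(callback)

    def _notify(self, event, broker_id):
        # Each watcher receives the event, the broker it concerns, and a copy of the live set.
        for callback in self.watchers:
            callback(event, broker_id, set(self.live))

    def register(self, broker_id):
        self.live.add(broker_id)
        self._notify("joined", broker_id)

    def fail(self, broker_id):
        self.live.discard(broker_id)
        self._notify("failed", broker_id)


class ToyClient:
    """Hypothetical producer/consumer that switches brokers when notified of a failure."""

    def __init__(self, registry, broker_id):
        self.broker = broker_id
        registry.watch(self.on_change)

    def on_change(self, event, broker_id, live):
        if event == "failed" and broker_id == self.broker:
            # Move to another live broker; min() just makes the choice deterministic.
            self.broker = min(live) if live else None


# Usage: three brokers, a client on broker 1, then broker 1 fails.
registry = ToyRegistry()
for broker_id in (1, 2, 3):
    registry.register(broker_id)
client = ToyClient(registry, broker_id=1)
registry.fail(1)   # the client is notified and moves to broker 2
```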

Because Kafka brokers are stateless, the Kafka consumer keeps track of how many messages it has consumed by using the partition offset.

Once the consumer acknowledges a particular message offset, you can be sure that it has consumed all prior messages.

To have a buffer of bytes ready to consume, the consumer issues an asynchronous pull request to the broker.

Consumers can also rewind or skip to any point in a partition simply by supplying an offset value.

In addition, consumer offset values are kept in ZooKeeper (in older Kafka versions; newer versions store them in an internal Kafka topic, __consumer_offsets).
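The consumer-side offset tracking described above (fetching from a position, acknowledging an offset, and rewinding or skipping with a new offset) can be sketched in Python. `ToyPartition` and `ToyOffsetConsumer` are hypothetical names, and `fetch` is synchronous for simplicity. One detail worth flagging: Kafka's own convention is to commit the next offset to read (last processed offset + 1), whereas this sketch stores the acknowledged offset directly to mirror the wording of the paragraph.

```python
class ToyPartition:
    """Hypothetical append-only partition log; a record's list index is its offset."""

    def __init__(self, records):
        self.log = list(records)


class ToyOffsetConsumer:
    """Tracks its own position in one partition; the broker keeps no per-consumer state."""

    def __init__(self, partition):
        self.partition = partition
        self.position = 0      # offset of the next record to fetch
        self.committed = None  # last offset this consumer acknowledged

    def fetch(self, max_records):
        """Pull up to `max_records` records starting at the current position (synchronously)."""
        end = min(self.position + max_records, len(self.partition.log))
        batch = [(offset, self.partition.log[offset]) for offset in range(self.position, end)]
        self.position = end
        return batch

    def commit(self, offset):
        """Acknowledge `offset`: it and every earlier offset count as consumed.

        Kafka itself commits last processed offset + 1; this toy stores the
        acknowledged offset directly to mirror the article's wording.
        """
        self.committed = offset

    def seek(self, offset):
        """Rewind or skip to any offset in the partition."""
        if not 0 <= offset <= len(self.partition.log):
            raise ValueError(f"offset {offset} is out of range")
        self.position = offset


# Usage: read two records, acknowledge them, then rewind to the start.
partition = ToyPartition(["m0", "m1", "m2", "m3"])
consumer = ToyOffsetConsumer(partition)
first = consumer.fetch(2)   # offsets 0 and 1
consumer.commit(1)          # everything up to offset 1 is consumed
consumer.seek(0)            # rewind to re-read from the start
```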

Here are some of the fundamental concepts of Kafka architecture that you must know:

A topic is a logical channel to which producers publish messages and from which consumers receive messages.

The image below shows the relationship between Kafka topics and partitions:

In a Kafka cluster, topics are split into partitions and also replicated across brokers.

While designing a Kafka system, it’s always wise to factor in topic replication.

That way, if a broker goes down, replicas of its topics on other brokers can take over. For example, suppose we have 3 brokers and 3 topics. Broker1 holds Partition 0 of Topic 1, whose replica is on Broker2; the other topics follow the same pattern. This setup has a replication factor of 2, which means each partition has one additional copy besides the primary one. Below is the image of the topic replication factor:
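The replication example above (3 brokers, 3 single-partition topics, replication factor 2) can be reproduced with a simple round-robin placement sketch in Python. `assign_replicas` and `leader_after_failure` are hypothetical helpers; Kafka's own replica assignment algorithm differs in detail, and real Kafka elects a new leader only from the in-sync replicas (ISR), which this sketch does not model.

```python
def assign_replicas(topics, brokers, replication_factor):
    """Place each (topic, partition) on `replication_factor` distinct brokers, round-robin.

    `topics` is a list of (topic name, number of partitions). The first broker in
    each replica list acts as the leader; the others hold the additional copies.
    """
    if replication_factor > len(brokers):
        raise ValueError("replication factor cannot exceed the number of brokers")
    assignment = {}
    i = 0
    for topic, num_partitions in topics:
        for partition in range(num_partitions):
            replicas = [brokers[(i + r) % len(brokers)] for r in range(replication_factor)]
            assignment[(topic, partition)] = replicas
            i += 1
    return assignment


def leader_after_failure(replicas, failed):
    """Return the first replica not on a failed broker (the one that can take over), or None."""
    for broker in replicas:
        if broker not in failed:
            return broker
    return None


# Usage: the article's example of 3 brokers, 3 topics, and a replication factor of 2.
assignment = assign_replicas(
    [("topic1", 1), ("topic2", 1), ("topic3", 1)],
    ["broker1", "broker2", "broker3"],
    replication_factor=2,
)
for (topic, partition), replicas in assignment.items():
    print(topic, partition, "leader:", replicas[0], "copies:", replicas[1:])
```

With a replication factor of 2, any single broker can fail and every partition still has one surviving copy.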


Some key points –

So, this was all about Apache Kafka architecture. We hope you liked our explanation.

Kafka Architecture and Its Fundamental Concepts (repost)

Source: https://www.cnblogs.com/panpanwelcome/p/13533944.html