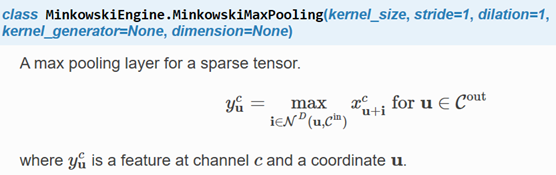

MinkowskiPooling池化(上)

如果内核大小等于跨步大小(例如kernel_size = [2,1],跨步= [2,1]),则引擎将更快地生成与池化函数相对应的输入输出映射。

如果使用U网络架构,请使用相同功能的转置版本进行上采样。例如pool = MinkowskiSumPooling(kernel_size = 2,stride = 2,D = D),然后使用 unpool = MinkowskiPoolingTranspose(kernel_size = 2,stride = 2,D = D)。

stride (int, or list, optional):卷积层的步幅大小。如果使用非同一性,则输出坐标至少为stride ×× tensor_stride 远。给出列表时,长度必须为D;每个元素将用于特定轴的步幅大小。

dilation (int, or list, optional):卷积内核的扩展大小。给出列表时,长度必须为D,并且每个元素都是轴特定的膨胀。所有元素必须> 0。

kernel_generator (MinkowskiEngine.KernelGenerator, optional):定义自定义内核形状。

dimension(int):定义所有输入和网络的空间的空间尺寸。例如,图像在2D空间中,网格和3D形状在3D空间中。

当kernel_size ==跨度时,不支持自定义内核形状。

cpu() → T

将所有模型参数和缓冲区移至CPU。

Returns:

Module: self

cuda(device: Optional[Union[int, torch.device]] = None) → T

将所有模型参数和缓冲区移至GPU。

这也使关联的参数并缓冲不同的对象。因此,在构建优化程序之前,如果模块在优化过程中可以在GPU上运行,则应调用它。

Arguments:

device (int, optional): if specified, all parameters will be

copied to that device

Returns:

Module: self

double() → T

将所有浮点参数和缓冲区强制转换为double数据类型。

返回值:

模块:self

float() →T

将所有浮点参数和缓冲区强制转换为float数据类型。

返回值:

模块:self

forward(input: SparseTensor.SparseTensor, coords: Union[torch.IntTensor, MinkowskiCoords.CoordsKey, SparseTensor.SparseTensor] = None)?

input (MinkowskiEngine.SparseTensor):输入稀疏张量以对其进行卷积。

coords ((torch.IntTensor, MinkowskiEngine.CoordsKey, MinkowskiEngine.SparseTensor), optional):如果提供,则在提供的坐标上生成结果。默认情况下没有。

Moves and/or casts the parameters and buffers.

This can be called as

to(device=None, dtype=None, non_blocking=False)

to(tensor, non_blocking=False)

to(memory_format=torch.channels_last)

其签名类似于torch.Tensor.to(),但仅接受所需dtype的浮点s。另外,此方法将仅将浮点参数和缓冲区强制转换为dtype (如果给定的话)。device如果给定了整数参数和缓冲区 ,dtype不变。当 non_blocking被设置时,它试图转换/如果可能异步相对于移动到主机,例如,移动CPU张量与固定内存到CUDA设备。

此方法local修改模块。

Args:

device (torch.device): the desired device of the parameters

and buffers in this module

dtype (torch.dtype): the desired floating point type of

the floating point parameters and buffers in this module

tensor (torch.Tensor): Tensor whose dtype and device are the desired

dtype and device for all parameters and buffers in this module

memory_format (torch.memory_format): the desired memory

format for 4D parameters and buffers in this module (keyword only argument)

Returns:

Module: self

Example:

>>> linear = nn.Linear(2, 2)

>>> linear.weight

Parameter containing:

tensor([[ 0.1913, -0.3420],

[-0.5113, -0.2325]])

>>> linear.to(torch.double)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1913, -0.3420],

[-0.5113, -0.2325]], dtype=torch.float64)

>>> gpu1 = torch.device("cuda:1")

>>> linear.to(gpu1, dtype=torch.half, non_blocking=True)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1914, -0.3420],

[-0.5112, -0.2324]], dtype=torch.float16, device=‘cuda:1‘)

>>> cpu = torch.device("cpu")

>>> linear.to(cpu)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1914, -0.3420],

[-0.5112, -0.2324]], dtype=torch.float16)

Casts all parameters and buffers to dst_type.

Arguments:

dst_type (type or string): the desired type

Returns:

Module: self

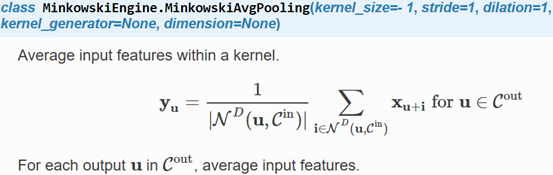

MinkowskiAvgPooling

平均层首先计算输入要素的基数,每个输出的输入要素数,然后将输入要素的总和除以基数。对于密集的张量,基数是一个常数,即内核的体积。但是,对于张量稀疏,基数取决于每个输出的输入特征的数量而变化。因此,稀疏张量的平均池化不等于传统的密集张量的平均池化层。请参考MinkowskiSumPooling等效层。

如果内核大小等于跨步大小(例如kernel_size = [2,1],跨步= [2,1]),则引擎将更快地生成与池化函数相对应的输入输出映射。

如果使用U网络架构,请使用相同功能的转置版本进行上采样。例如pool = MinkowskiSumPooling(kernel_size = 2,stride = 2,D = D),然后使用 unpool = MinkowskiPoolingTranspose(kernel_size = 2,stride = 2,D = D)。

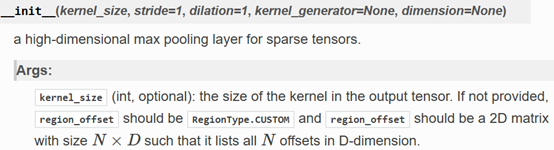

__init__(kernel_size=- 1, stride=1, dilation=1, kernel_generator=None, dimension=None)

高维稀疏平均池化层。

kernel_size (int, optional): the size of the kernel in the output tensor. If not provided, region_offset should be RegionType.CUSTOM and region_offset should be a 2D matrix with size N×DN×D such that it lists all NN offsets in D-dimension.

stride (int, or list, optional): stride size of the convolution layer. If non-identity is used, the output coordinates will be at least stride ×× tensor_stride away. When a list is given, the length must be D; each element will be used for stride size for the specific axis.

dilation (int, or list, optional): dilation size for the convolution kernel. When a list is given, the length must be D and each element is an axis specific dilation. All elements must be > 0.

kernel_generator (MinkowskiEngine.KernelGenerator, optional): define custom kernel shape.

dimension (int): the spatial dimension of the space where all the inputs and the network are defined. For example, images are in a 2D space, meshes and 3D shapes are in a 3D space.

当kernel_size ==跨度时,不支持自定义内核形状。

cpu() →T

将所有模型参数和缓冲区移至CPU。

返回值:

模块:self

cuda(device: Optional[Union[int, torch.device]] = None) → T

所有模型参数和缓冲区移至GPU。

这也使关联的参数并缓冲不同的对象。因此,在构建优化程序之前,如果模块在优化过程中可以在GPU上运行,则应调用它。

Arguments:

device (int, optional): if specified, all parameters will be

copied to that device

Returns:

Module: self

double() → T

将所有浮点参数和缓冲区强制转换为double数据类型。

返回值:

模块:self

float() →T

将所有浮点参数和缓冲区强制转换为float数据类型。

返回值:

模块:self

forward(input: SparseTensor.SparseTensor, coords: Union[torch.IntTensor, MinkowskiCoords.CoordsKey, SparseTensor.SparseTensor] = None)?

input (MinkowskiEngine.SparseTensor): Input sparse tensor to apply a convolution on.

coords ((torch.IntTensor, MinkowskiEngine.CoordsKey, MinkowskiEngine.SparseTensor), optional): If provided, generate results on the provided coordinates. None by default.

Moves and/or casts the parameters and buffers.

This can be called as

to(device=None, dtype=None, non_blocking=False)

to(tensor, non_blocking=False)

to(memory_format=torch.channels_last)

其签名类似于torch.Tensor.to(),但仅接受所需dtype的浮点s。另外,此方法将仅将浮点参数和缓冲区强制转换为dtype (如果给定的话)。device如果给定了整数参数和缓冲区 ,dtype不变。当 non_blocking被设置时,它试图转换/如果可能异步相对于移动到主机,例如,移动CPU张量与固定内存到CUDA设备。

This method modifies the module in-place.

Args:

device (torch.device): the desired device of the parameters

and buffers in this module

dtype (torch.dtype): the desired floating point type of

the floating point parameters and buffers in this module

tensor (torch.Tensor): Tensor whose dtype and device are the desired

dtype and device for all parameters and buffers in this module

memory_format (torch.memory_format): the desired memory

format for 4D parameters and buffers in this module (keyword only argument)

Returns:

Module: self

Example:

>>> linear = nn.Linear(2, 2)

>>> linear.weight

Parameter containing:

tensor([[ 0.1913, -0.3420],

[-0.5113, -0.2325]])

>>> linear.to(torch.double)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1913, -0.3420],

[-0.5113, -0.2325]], dtype=torch.float64)

>>> gpu1 = torch.device("cuda:1")

>>> linear.to(gpu1, dtype=torch.half, non_blocking=True)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1914, -0.3420],

[-0.5112, -0.2324]], dtype=torch.float16, device=‘cuda:1‘)

>>> cpu = torch.device("cpu")

>>> linear.to(cpu)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1914, -0.3420],

[-0.5112, -0.2324]], dtype=torch.float16)

Casts all parameters and buffers to dst_type.

Arguments:

dst_type (type or string): the desired type

Returns:

Module: self

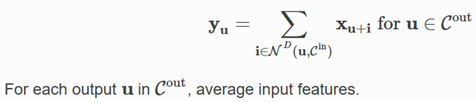

class MinkowskiEngine.MinkowskiSumPooling(kernel_size, stride=1, dilation=1, kernel_generator=None, dimension=None)?

Sum all input features within a kernel.

平均层首先计算输入要素的基数,每个输出的输入要素数,然后将输入要素的总和除以基数。对于密集的张量,基数是一个常数,即内核的体积。但是,对于张量稀疏,基数根据每个输出的输入特征数而变化。因此,用基数对输入特征求平均可能不等于密集张量的常规平均池。该层提供了一种不将总和除以基数的方法。

如果内核大小等于跨步大小(例如kernel_size = [2,1],跨步= [2,1]),则引擎将更快地生成与池化函数相对应的输入输出映射。

如果使用U网络架构,请使用相同功能的转置版本进行上采样。例如pool = MinkowskiSumPooling(kernel_size = 2,stride = 2,D = D),然后使用 unpool = MinkowskiPoolingTranspose(kernel_size = 2,stride = 2,D = D)。

a high-dimensional sum pooling layer

Args:

kernel_size (int, optional): the size of the kernel in the output tensor. If not provided, region_offset should be RegionType.CUSTOM and region_offset should be a 2D matrix with size N×DN×D such that it lists all NN offsets in D-dimension.

stride (int, or list, optional): stride size of the convolution layer. If non-identity is used, the output coordinates will be at least stride ×× tensor_stride away. When a list is given, the length must be D; each element will be used for stride size for the specific axis.

dilation (int, or list, optional): dilation size for the convolution kernel. When a list is given, the length must be D and each element is an axis specific dilation. All elements must be > 0.

kernel_generator (MinkowskiEngine.KernelGenerator, optional): define custom kernel shape.

dimension (int): the spatial dimension of the space where all the inputs and the network are defined. For example, images are in a 2D space, meshes and 3D shapes are in a 3D space.

当kernel_size ==跨度时,不支持自定义内核形状。

cpu() →T

将所有模型参数和缓冲区移至CPU。

返回值:

模块:self

cuda(device:Optional[Union[int, torch.device]]=None) → T

将所有模型参数和缓冲区移至GPU。

这也使关联的参数并缓冲不同的对象。因此,在构建优化程序之前,如果模块在优化过程中可以在GPU上运行,则应调用它。

参数:

device (int, optional): if specified, all parameters will be

copied to that device

Returns:

Module: self

double() → T

将所有浮点参数和缓冲区强制转换为double数据类型。

返回值:

模块:self

float() →T

将所有浮点参数和缓冲区强制转换为float数据类型。

返回值:

模块:self

forward(input: SparseTensor.SparseTensor, coords: Union[torch.IntTensor, MinkowskiCoords.CoordsKey, SparseTensor.SparseTensor] = None)?

input (MinkowskiEngine.SparseTensor): Input sparse tensor to apply a convolution on.

coords ((torch.IntTensor, MinkowskiEngine.CoordsKey, MinkowskiEngine.SparseTensor), optional): If provided, generate results on the provided coordinates. None by default.

Moves and/or casts the parameters and buffers.

This can be called as

to(device=None, dtype=None, non_blocking=False)

to(tensor, non_blocking=False)

to(memory_format=torch.channels_last)

其签名类似于torch.Tensor.to(),但仅接受所需dtype的浮点s。另外,此方法将仅将浮点参数和缓冲区强制转换为dtype (如果给定的话)。device如果给定了整数参数和缓冲区 ,dtype不变。当 non_blocking被设置时,它试图转换/如果可能异步相对于移动到主机,例如,移动CPU张量与固定内存到CUDA设备。

请参见下面的示例。

Args:

device (torch.device): the desired device of the parameters

and buffers in this module

dtype (torch.dtype): the desired floating point type of

the floating point parameters and buffers in this module

tensor (torch.Tensor): Tensor whose dtype and device are the desired

dtype and device for all parameters and buffers in this module

memory_format (torch.memory_format): the desired memory

format for 4D parameters and buffers in this module (keyword only argument)

Returns:

Module: self

Example:

>>> linear = nn.Linear(2, 2)

>>> linear.weight

Parameter containing:

tensor([[ 0.1913, -0.3420],

[-0.5113, -0.2325]])

>>> linear.to(torch.double)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1913, -0.3420],

[-0.5113, -0.2325]], dtype=torch.float64)

>>> gpu1 = torch.device("cuda:1")

>>> linear.to(gpu1, dtype=torch.half, non_blocking=True)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1914, -0.3420],

[-0.5112, -0.2324]], dtype=torch.float16, device=‘cuda:1‘)

>>> cpu = torch.device("cpu")

>>> linear.to(cpu)

Linear(in_features=2, out_features=2, bias=True)

>>> linear.weight

Parameter containing:

tensor([[ 0.1914, -0.3420],

[-0.5112, -0.2324]], dtype=torch.float16)

Casts all parameters and buffers to dst_type.

Arguments:

dst_type (type or string): the desired type

Returns:

Module: self

原文:https://www.cnblogs.com/wujianming-110117/p/14226752.html