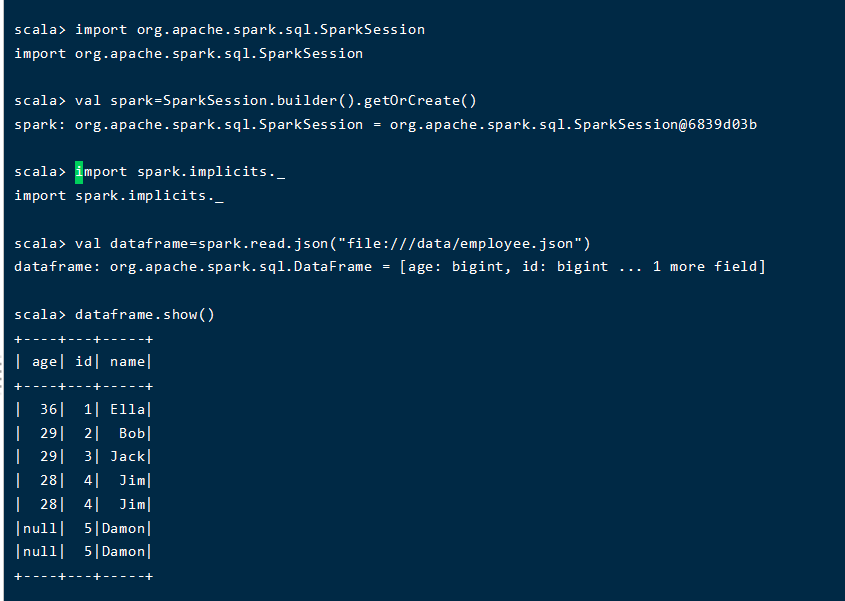

Copy the following JSON data to the Linux system and save it as employee.json.

```
{ "id":1 , "name":" Ella" , "age":36 }
{ "id":2, "name":"Bob","age":29 }
{ "id":3 , "name":"Jack","age":29 }
{ "id":4 , "name":"Jim","age":28 }
{ "id":4 , "name":"Jim","age":28 }
{ "id":5 , "name":"Damon" }
{ "id":5 , "name":"Damon" }
```

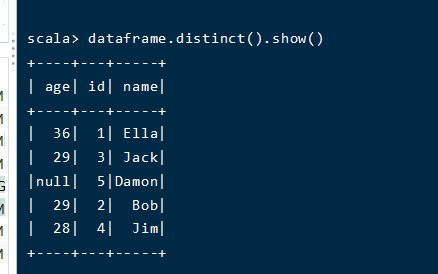

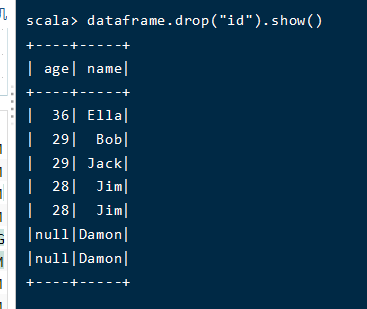

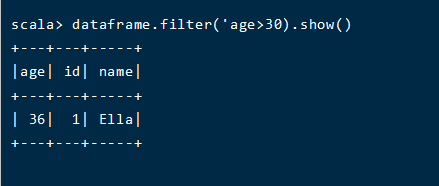

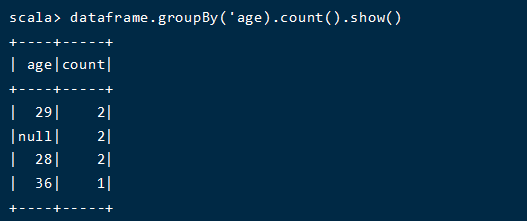

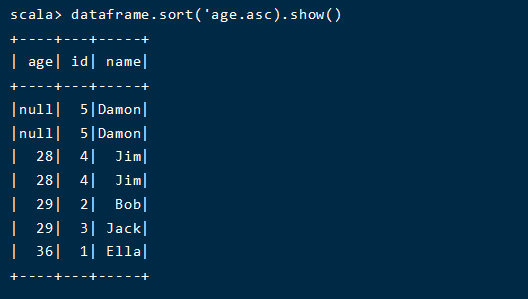

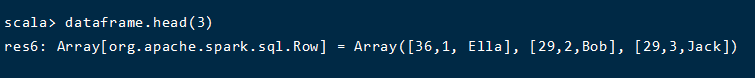

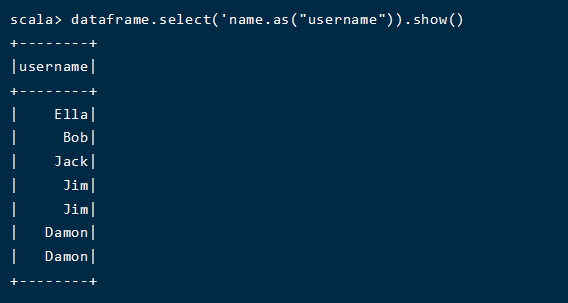

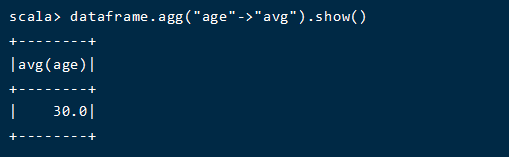

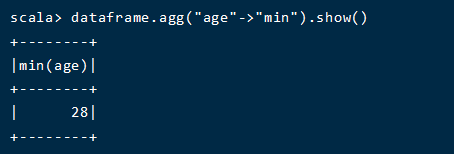

Create a DataFrame from employee.json and write Scala statements to perform the following operations:
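As a hedged sketch (not the original answer), the snippet below loads employee.json with `spark.read.json` and applies a few typical DataFrame operations: show, distinct, drop, filter, groupBy, sort, rename and average. It assumes Spark SQL on the classpath and the file at `dataset/employee.json`; the object `EmployeeJsonSketch` and its helpers `dedup`, `olderThan30` and `averageAge` are hypothetical names.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{avg, col}

// Hypothetical sketch: common DataFrame operations on employee.json.
// Assumes Spark SQL on the classpath and the file at dataset/employee.json.
object EmployeeJsonSketch {

  // Read line-delimited JSON; Spark infers the age, id and name columns
  def load(spark: SparkSession, path: String): DataFrame =
    spark.read.json(path)

  // Remove exact duplicate records (Jim and Damon each appear twice)
  def dedup(df: DataFrame): DataFrame = df.distinct()

  // Keep employees older than 30; rows whose age is null are dropped
  def olderThan30(df: DataFrame): DataFrame = df.filter(col("age") > 30)

  // Average age; avg ignores nulls such as Damon's missing age
  def averageAge(df: DataFrame): Double =
    df.agg(avg("age")).first().getDouble(0)

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("employee-json-sketch")
      .master("local[*]")
      .getOrCreate()

    val df = load(spark, "dataset/employee.json")
    df.show()                                     // all records, duplicates included
    dedup(df).show()                              // duplicates removed
    df.drop("id").show()                          // every column except id
    olderThan30(df).show()
    df.groupBy("age").count().show()              // number of employees per age
    df.sort(col("name").asc).show()               // ascending by name
    df.select(col("name").as("username")).show()  // rename name to username
    println(s"average age: ${averageAge(df)}")

    spark.stop()
  }
}
```

Because two records are exact duplicates, `distinct()` keeps five of the seven rows, and Damon's missing `age` is read as `null`.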

The source file contents are as follows (fields: id, name, age):

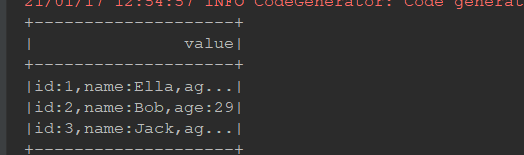

```
1,Ella,36
2,Bob,29
3,Jack,29
```

Copy the data and save it on the Linux system as employee.txt. Convert an RDD into a DataFrame, then print all of the DataFrame's rows in the format "id:1,name:Ella,age:36". Write the program code.

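```scala
import org.apache.spark.sql.SparkSession
import org.junit.Test

class woyesql {
  @Test
  def test(): Unit = {
    val spark = SparkSession.builder()
      .appName("dataframe1")
      .master("local[6]")
      .getOrCreate()
    import spark.implicits._

    // Split each line on commas, map it to the Employee case class,
    // then convert the RDD[Employee] to a DataFrame by reflection
    val df = spark.sparkContext.textFile("dataset/employee.txt")
      .map(_.split(","))
      .map(item => Employee(item(0).trim.toInt, item(1).trim, item(2).trim.toInt))
      .toDF()

    df.createOrReplaceTempView("employee") // register a temporary view for SQL
    val dfRDD = spark.sql("select * from employee")

    // Format each Row as "id:1,name:Ella,age:36"; show(false) disables the
    // default 20-character truncation that would otherwise cut these strings
    dfRDD.map(it => "id:" + it(0) + ",name:" + it(1) + ",age:" + it(2))
      .show(false)

    spark.stop()
  }
}

// Defined at top level (outside the test class) so Spark can derive an encoder for toDF()
case class Employee(id: Int, name: String, age: Long)
```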
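The code above infers the schema by reflection from the `Employee` case class. Spark also supports specifying the schema programmatically with `StructType` and `Row`, which helps when the columns are only known at run time. The sketch below assumes the same `dataset/employee.txt` path; `EmployeeSchemaSketch`, `parse` and `render` are hypothetical names. It prints with `collect()` rather than `show()`, so each line appears exactly in the requested format without table borders.

```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.sql.{DataFrame, Row, SparkSession}
import org.apache.spark.sql.types.{IntegerType, StringType, StructField, StructType}

// Hypothetical sketch: RDD -> DataFrame with an explicit schema instead of a case class
object EmployeeSchemaSketch {

  // Column layout of employee.txt: id, name, age
  val schema: StructType = StructType(List(
    StructField("id", IntegerType, nullable = false),
    StructField("name", StringType, nullable = true),
    StructField("age", IntegerType, nullable = false)
  ))

  // Parse one "id,name,age" line into a Row; a function value captures nothing
  val parse: String => Row = { line =>
    val f = line.split(",")
    Row(f(0).trim.toInt, f(1).trim, f(2).trim.toInt)
  }

  // Build the DataFrame, skipping blank lines that would fail to parse
  def toDataFrame(spark: SparkSession, lines: RDD[String]): DataFrame =
    spark.createDataFrame(lines.filter(_.trim.nonEmpty).map(parse), schema)

  // Render a Row as "id:1,name:Ella,age:36"
  def render(r: Row): String =
    s"id:${r.getInt(0)},name:${r.getString(1)},age:${r.getInt(2)}"

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("employee-schema-sketch")
      .master("local[*]")
      .getOrCreate()
    val df = toDataFrame(spark, spark.sparkContext.textFile("dataset/employee.txt"))
    // collect() pulls every row to the driver, which is fine for a three-line file
    df.collect().map(render).foreach(println)
    spark.stop()
  }
}
```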

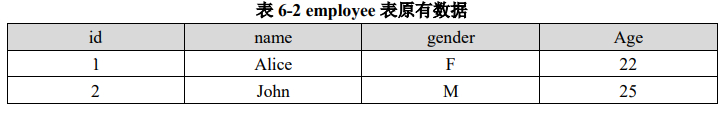

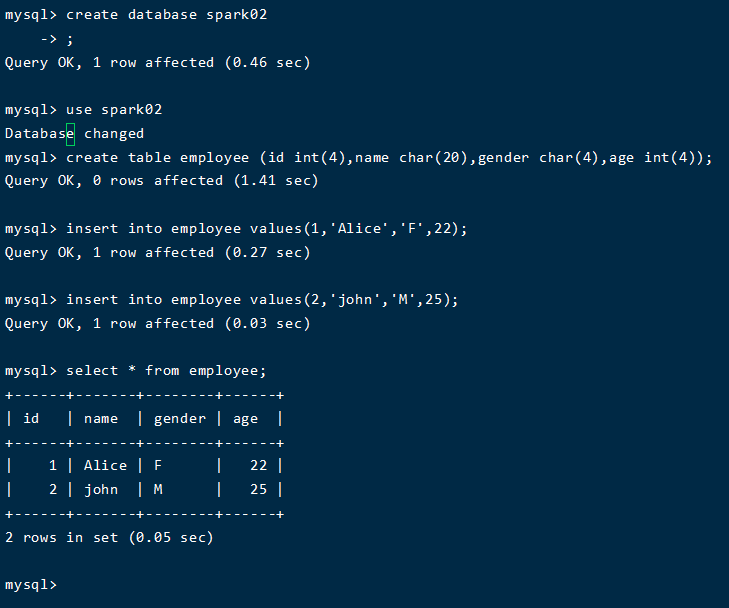

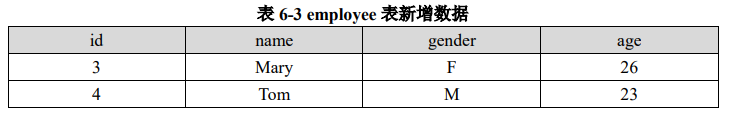

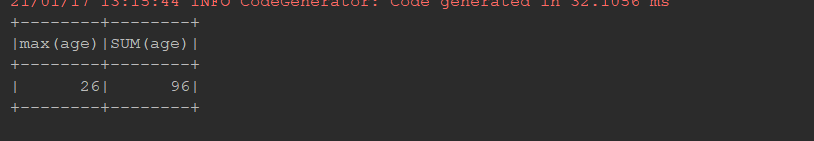

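```scala
// Another test method (place it in a test class such as woyesql above). Besides
// SparkSession and org.junit.Test, it needs:
//   import org.apache.spark.sql.SaveMode
//   import org.apache.spark.sql.types.{IntegerType, StringType, StructField, StructType}
// and the MySQL JDBC driver on the classpath.
@Test
def sqlwrite(): Unit = {
  val spark = SparkSession
    .builder()
    .appName("mysql example")
    .master("local[6]")
    .getOrCreate()

  val schema = StructType(
    List(
      StructField("id", IntegerType),
      StructField("name", StringType),
      StructField("gender", StringType),
      StructField("age", IntegerType)
    )
  )

  val studentDF = spark.read
    .option("delimiter", ",") // field delimiter: comma
    .schema(schema)
    .csv("dataset/stu")

  studentDF.write
    .format("jdbc")
    .mode(SaveMode.Append) // append to the existing table
    .option("url", "jdbc:mysql://hadooplinux01:3306/spark02")
    .option("dbtable", "employee")
    .option("user", "root")
    .option("password", "511924")
    .save()

  // Read back the maximum and total age; MySQL executes the subquery
  spark.read
    .format("jdbc")
    .option("url", "jdbc:mysql://hadooplinux01:3306/spark02")
    .option("dbtable", "(select max(age), sum(age) from employee) as emp")
    .option("user", "root")
    .option("password", "511924")
    .load()
    .show()
}
```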
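The code above delegates the aggregation to MySQL through a subquery in `dbtable`. As an alternative sketch, Spark can load the whole `employee` table with `DataFrameReader.jdbc` and compute `max`/`sum` itself. The URL, table and user mirror the code above; the `EmployeeJdbcSketch` object and the `MYSQL_PASSWORD` environment variable are hypothetical, and the MySQL JDBC driver must be on the classpath.

```scala
import java.util.Properties
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{max, sum}

// Hypothetical sketch: compute the age statistics in Spark instead of in MySQL
object EmployeeJdbcSketch {

  // Maximum and total age over the whole table
  def ageStats(employees: DataFrame): DataFrame =
    employees.agg(max("age").as("max_age"), sum("age").as("sum_age"))

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("jdbc-agg-sketch")
      .master("local[*]")
      .getOrCreate()

    val props = new Properties()
    props.setProperty("user", "root")
    // Hypothetical: read the password from the environment instead of hard-coding it
    props.setProperty("password", sys.env.getOrElse("MYSQL_PASSWORD", ""))

    val employees = spark.read.jdbc("jdbc:mysql://hadooplinux01:3306/spark02", "employee", props)
    ageStats(employees).show()
    spark.stop()
  }
}
```

Pushing the subquery to MySQL, as the original does, transfers only one row; loading the table is simpler to test but moves every row into Spark.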

Source: https://www.cnblogs.com/dazhi151/p/14274789.html