A multiprocessor computer system is provided having a multiplicity of sub-systems and a main memory coupled to a system controller. An interconnect module, interconnects the main memory and sub-systems in accordance with interconnect control signals received from the system controller. At least two of the sub-systems are data processors, each having a respective cache memory that stores multiple blocks of data and a respective master cache index. Each master cache index has a set of master cache tags (Etags), including one cache tag for each data block stored by the cache memory. Each data processor includes a master interface having master classes for sending memory transaction requests to the system controller. The system controller includes memory transaction request logic for processing each memory transaction request by a data processor. The system controller maintains a duplicate cache index having a set of duplicate cache tags (Dtags) for each data processor. Each data processor has a writeback buffer for storing the data block previously stored in a victimized cache line until its respective writeback transaction is completed and an Nth+1 Dtag for storing the cache state of a cache line associated with a read transaction which is executed prior to an associated writeback transaction of a read-writeback transaction pair. Accordingly, upon a cache miss, the interconnect may execute the read and writeback transactions in parallel relying on the writeback buffer or Nth+1 Dtag to accommodate any ordering of the transactions.

The present invention relates generally to multiprocessor computer systems in which the processors share memory resources, and particularly to a multiprocessor computer system that utilizes an interconnect architecture and cache coherence methodology to minimize memory access latency by parallelizing read and writeback transactions for improved system throughput.

The need to maintain "cache coherence" in multiprocessor systems is well known. Maintaining "cache coherence" means, at a minimum, that whenever data is written into a specified location in a shared address space by one processor, the caches for any other processors which store data for the same address location are either invalidated, or updated with the new data.

There are two primary system architectures used for maintaining cache coherence. One, herein called the cache snoop architecture, requires that each data processor‘s cache include logic for monitoring a shared address bus and various control lines so as to detect when data in shared memory is being overwritten with new data, determining whether its data processor‘s cache contains an entry for the same memory location, and updating its cache contents and/or the corresponding cache tag when data stored in the cache is invalidated by another processor. Thus, in the cache snoop architecture, every data processor is responsible for maintaining its own cache in a state that is consistent with the state of the other caches.

In a second cache coherence architecture, herein called the memory directory architecture, main memory includes a set of status bits for every block of data that indicate which data processors, if any, have the data block stored in cache. The main memory‘s status bits may store additional information, such as which processor is considered to be the "owner" of the data block if the cache coherence architecture requires storage of such information.

In these cache coherence architectures, read-writeback transaction pairs arise when a read miss requires victimizing a cache line which has modified data, thereby necessitating a writeback to main memory. In the prior art, these transactions normally are strictly ordered, with the victimizing read transaction executing prior to the writeback transaction in order to allow the requesting processor to receive the data right away. In addition to the strict ordering, cache coherence architectures of the prior art required these read and writeback transactions be sequentially executed, not allowing for any other coherent transactions to be executed from the same processor between the read and the writeback transactions, even when transactions are directed to a different cache index. Accordingly, an architecture which supported parallelized transactions would provide reduced latency in processing the individual read-writeback transaction pairs along with an improvement in the overall transaction throughput.

In summary, the present invention is a multiprocessor computer system that has a multiplicity of sub-systems and a main memory coupled to a system controller. An interconnect module, interconnects the main memory and sub-systems in accordance with interconnect control signals received from the system controller.

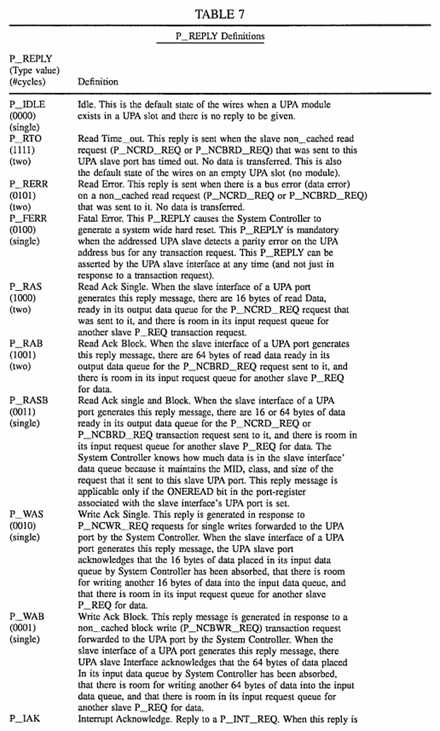

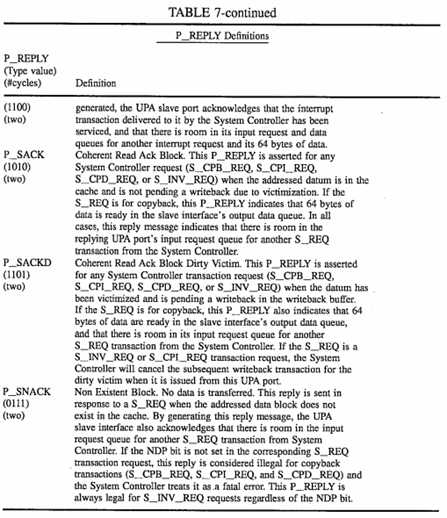

All of the sub-systems include a port that transmits and receives data as data packets of a fixed size. At least two of the sub-systems are data processors, each having a respective cache memory that stores multiple blocks of data and a set of master cache tags (Etags), including one cache tag for each data block stored by the cache memory.

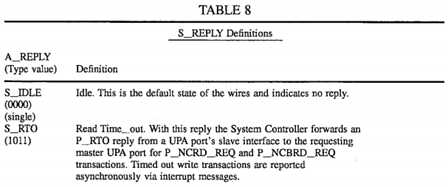

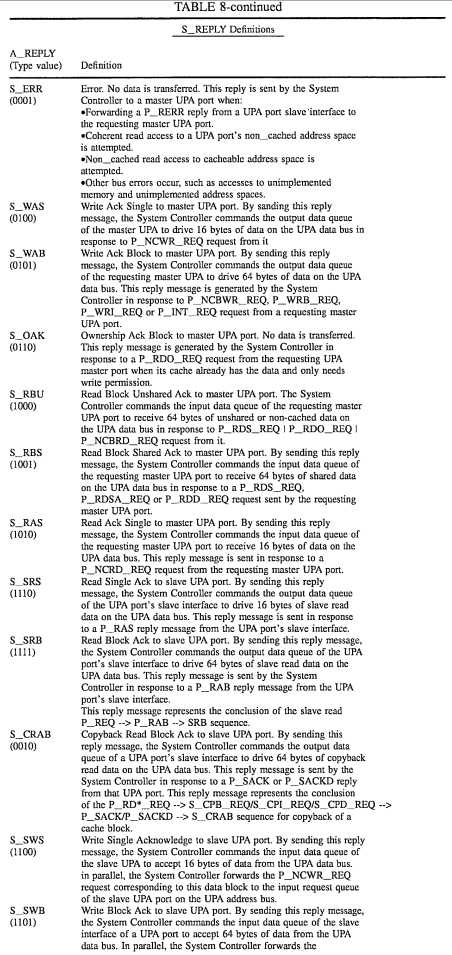

Each data processor includes a master interface having master classes for sending memory transaction requests to the system controller and for receiving cache access requests from the system controller corresponding to memory transaction requests by other ones of the data processors. The master classes allow for the simultaneous launching of read and writeback transactions. The system controller includes memory transaction request logic for processing each memory transaction request by a data processor, for determining which one of the cache memories and main memory to couple to the requesting data processor, for sending corresponding interconnect control signals to the interconnect module so as to couple the requesting data processor to the determined one of the cache memories and main memory, and for sending a reply message to the requesting data processor to prompt the requesting data processor to transmit or receive one data packet to or from the determined one of the cache memories and main memory.

The system controller maintains a set of duplicate cache tags (Dtags) for each of the data processors, the set of duplicate cache tags for each data processor having an equal number of cache tags as the corresponding set of master cache tags. Each master cache tag denotes a master cache state and an address tag; the duplicate cache tag corresponding to each master cache tag denotes a second cache state and the same address tag as the corresponding master cache tag.

The system controller includes further includes logic for executing a read-writeback pair of transactions in parallel, including an Nth+1 Dtag and a transient writeback buffer for each data processor. The Nth+1 Dtag for each processor stores the cache state and address tag of the cache line associated with a read transaction which is executed prior to an associated writeback transaction of a read-writeback transaction pair. The system controller contains Dtag update logic for transferring the Dtag value stored in the Nth+1 Dtag entry to its proper Dtag location upon the execution of the associated writeback transaction.

The writeback buffer in each data processor stores the data block previously stored in a victimized cache line until the associated writeback transaction is completed. Accordingly, upon a cache miss, the interconnect may execute the read and writeback transactions in parallel relying on the transient writeback buffer and the Nth+1 Dtag entry to accommodate any ordering of the transactions. As a result, read request and writeback request of a read-writeback transaction pair are processed such that processing of either of said read request and writeback request may be completed prior to the other in accordance with resource availability for processing those requests. For instance, if the read and writeback transactions reference two different main memory banks, one of those memory banks may be busy while the other is available for immediate use. Thus, using the present invention the transaction which references the available bank memory will be processed first, regardless of whether that transaction is the read transaction or the writeback transaction. This is in direct contrast with other systems in which read-writeback pairs are handled in a fixed order, and thus do not make optimal use of system resources.

Additional objects and features of the invention will be more readily apparent from the following detailed description and appended claims when taken in conjunction with the drawings, in which:

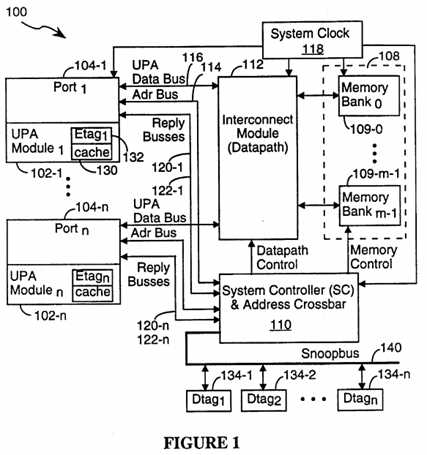

FIG. 1 is a block diagram of a computer system incorporating the present invention.

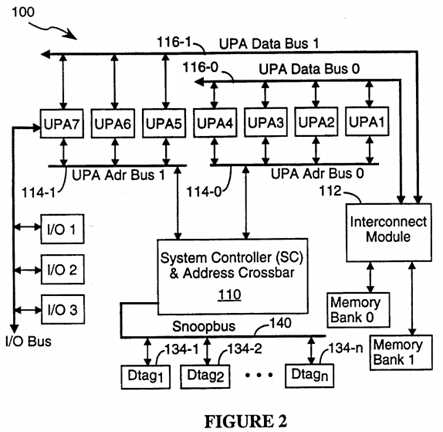

FIG. 2 is a block diagram of a computer system showing the data bus and address bus configuration used in one embodiment of the present invention.

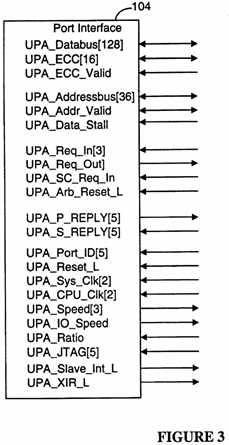

FIG. 3 depicts the signal lines associated with a port in a preferred embodiment of the present invention.

FIG. 4 is a block diagram of the interfaces and port ID register found in a port in a preferred embodiment of the present invention.

FIG. 5 is a block diagram of a computer system incorporating the present invention, depicting request and data queues used while performing data transfer transactions.

FIG. 6 is a block diagram of the System Controller Configuration register used in a preferred embodiment of the present invention.

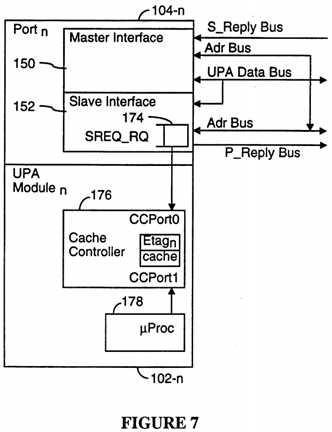

FIG. 7 is a block diagram of a caching UPA master port and the cache controller in the associated UPA module.

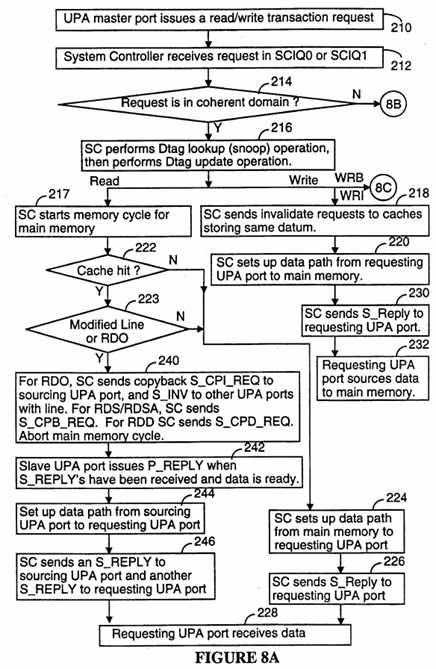

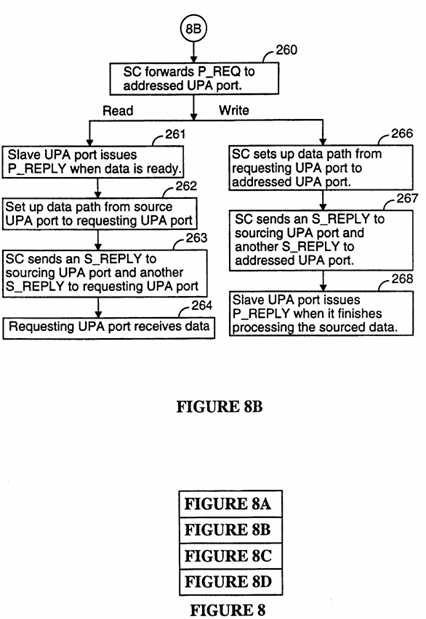

FIGS. 8, 8A, 8B, 8C, and 8D show a simplified flow chart of typical read/write data flow transactions in a preferred embodiment of the present invention.

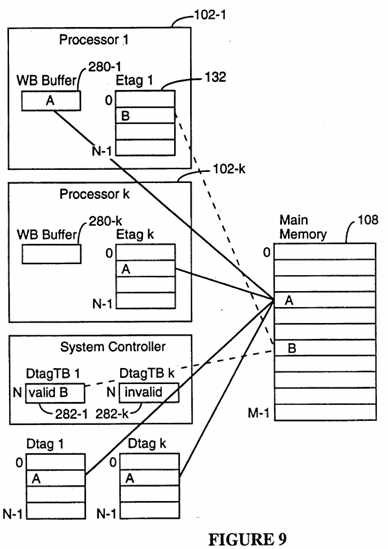

FIG. 9 depicts the writeback buffer and Dtag Transient Buffers used for handling coherent cache writeback operations.

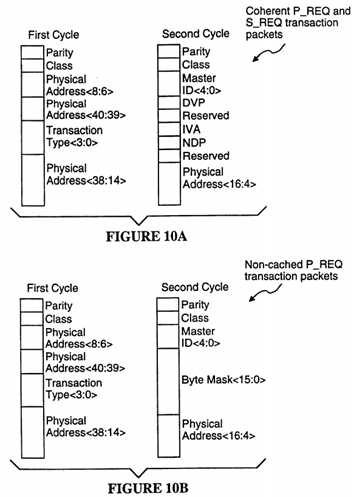

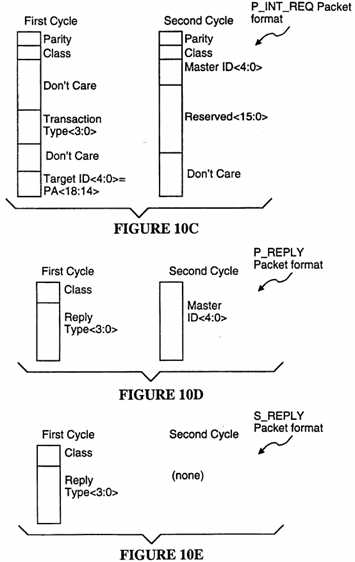

FIGS. 10A-10E shows the data packet formats for various transaction request packets.

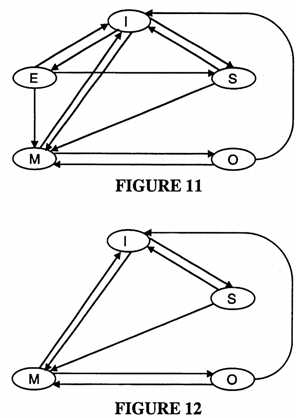

FIG. 11 is a state transition diagram of the cache tag line states for each cache entry in an Etag array in a preferred embodiment of the present invention.

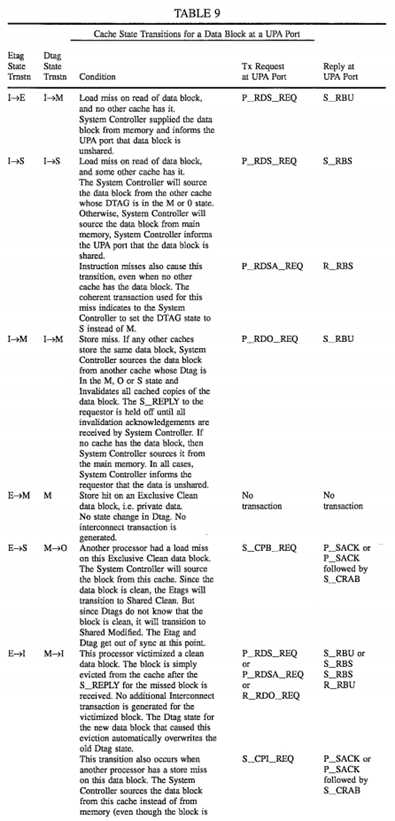

FIG. 12 is a state transition diagram of the cache tag line states for each cache entry in an Dtag array in a preferred embodiment of the present invention.

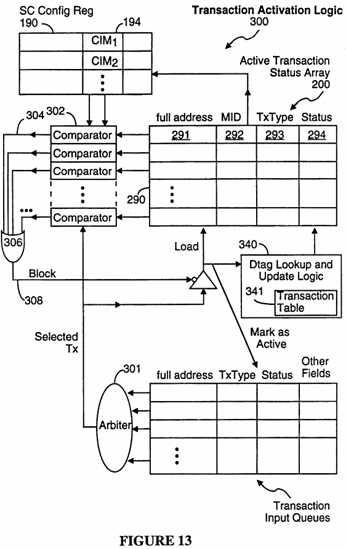

FIG. 13 depicts the logic circuitry for activating transactions.

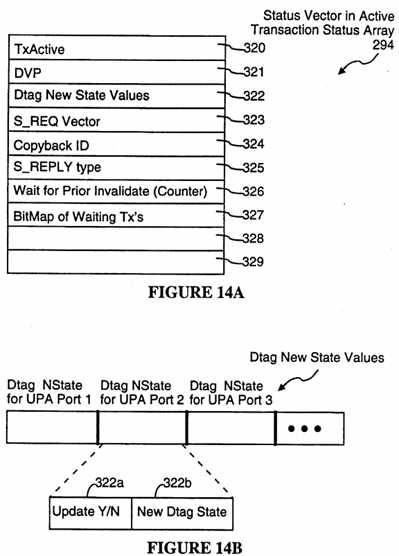

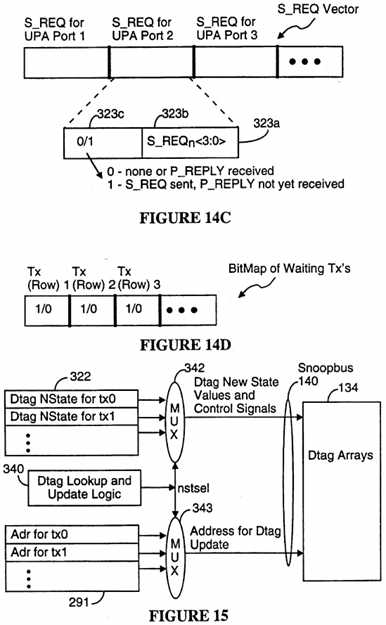

FIGS. 14A-14D are block diagrams of status information data structures used by the system controller in a preferred embodiment of the present invention.

FIG. 15 is a block diagram of the Dtag lookup and update logic in the system controller in a preferred embodiment of the present invention.

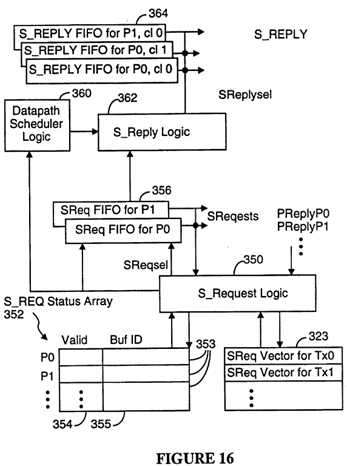

FIG. 16 is a block diagram of the S_Request and S_Reply logic in the system controller in a preferred embodiment of the present invention.

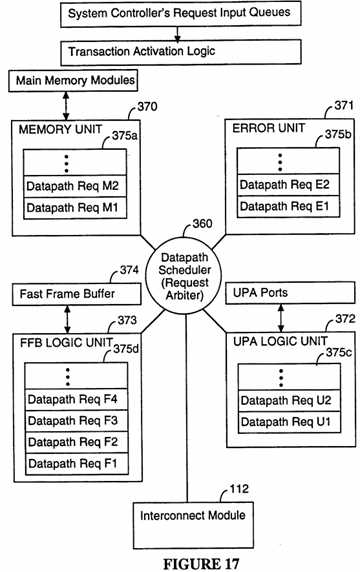

FIG. 17 is a block diagram of the datapath scheduler in a preferred embodiment of the present invention.

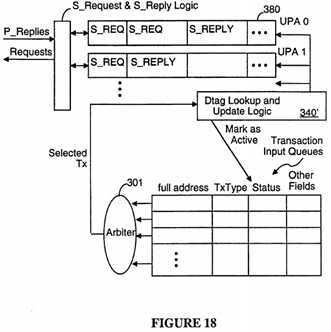

FIG. 18 is a block diagram of the S_Request and S_Reply logic in the system controller in a second preferred embodiment of the present invention.

The following is a glossary of terms used in this document.

Cache Coherence: keeping all copies of each data block consistent.

Tag: a tag is a record in a cache index for indicating the status of one cache line and for storing the high order address bits of the address for the data block stored in the cache line.

Etag: the primary array of cache tags for a cache memory. The Etag array is accessed and updated by the data processor module in a UPA port.

Dtag: a duplicate array of cache tags maintained by the system controller.

Interconnect: The set of system components that interconnect data processors, I/O processors and their ports. The "interconnect" includes the system controller 110, interconnect module 112, data busses 116, address busses 114, and reply busses 120 (for S_REPLY‘s), 122 (for P_REPLY‘s) in the preferred embodiment.

Victim: a data block displaced from a cache line.

Dirty Victim: a data block that was updated by the associated data processor prior to its being displaced from the cache by another data block. Dirty victims must normally be written back to main memory, except that in the present invention the writeback can be canceled if the same data block is invalidated by another data processor prior to the writeback transaction becoming "Active."

Line: the unit of memory in a cache memory used to store a single data block.

Invalidate: changing the status of a cache line to "invalid" by writing the appropriate status value in the cache line‘s tag.

Master Class: an independent request queue in the UPA port for a data processor. A data processor having a UPA port with K master classes can issue transaction requests in each of the K master classes. Each master class has its own request FIFO buffer for issuing transaction requests to the System Controller as well as its own distinct inbound data buffer for receiving data packets in response to transaction requests and its own outbound data buffer for storing data packets to be transmitted.

Writeback: copying modified data from a cache memory into main memory.

The following is a list of abbreviations used in this document:

DVMA: direct virtual memory access (same as DMA, direct memory access for purposes of this document)

DVP: dirty victim pending

I/O: input/output

IVP: Invalidate me Advisory

MOESI: the five Etag states: Exclusive Modified (M), Shared Modified (O), Exclusive Clean (E), Shared Clean (S), Invalid (I).

MOSI: the four Dtag states: Exclusive and Potentially Modified (M), Shared Modified (O), Shared Clean (S), Invalid (I).

NDP: no data tag present

PA[xxx]: physical address [xxx]

SC: System Controller

UPA: Universal Port Architecture

Referring to FIG. 1, there is shown a multiprocessor computer system 100 incorporating the computer architecture of the present invention. The multiprocessor computer system 100 includes a set of "UPA modules." UPA modules 102 include data processors as well as slave devices such as I/O handlers and the like. Each UPA module 102 has a port 104, herein called a UPA port, where "UPA" stands for "universal port architecture." For simplicity, UPA modules and their associated ports will often be called, collectively, "ports" or "UPA ports," with the understanding that the port or UPA port being discussed includes both a port and its associated UPA module.

The system 100 further includes a main memory 108, which may be divided into multiple memory banks 109 Bank0?to Bankm, a system controller 110, and an interconnect module 112 for interconnecting the ports 104 and main memory 108. The interconnect module 112, under the control of datapath setup signals from the System Controller 110, can form a datapath between any port 104 and any other port 104 or between any port 104 and any memory bank 109. The interconnect module 112 can be as simple as a single, shared data bus with selectable access ports for each UPA port and memory module, or can be a somewhat more complex crossbar switch having m ports for m memory banks and n ports for n UPA ports, or can be a combination of the two. The present invention is not dependent on the type of interconnect module 112 used, and thus the present invention can be used with many different interconnect module configurations.

A UPA port 104 interfaces with the interconnect module 112 and the system controller 110 via a packet switched address bus 114 and packet switched data bus 116 respectively, each of which operates independently. A UPA module logically plugs into a UPA port. The UPA module 102 may contain a data processor, an I/O controller with interfaces to I/O busses, or a graphics frame buffer. The UPA interconnect architecture in the preferred embodiment supports up to thirty-two UPA ports, and multiple address and data busses in the interconnect. Up to four UPA ports 104 can share the same address bus 114, and arbitrate for its mastership with a distributed arbitration protocol.

The System Controller 110 is a centralized controller and performs the following functions:

? Coherence control;

? Memory and Datapath control; and

? Address crossbar-like connectivity for multiple address busses.

The System Controller 110 controls the interconnect module 112, and schedules the transfer of data between two UPA ports 104, or between UPA port 104 and memory 108. The architecture of the present invention supports an arbitrary number of memory banks 109. The System Controller 110 controls memory access timing in conjunction with datapath scheduling for maximum utilization of both resources.

The System Controller 110, the interconnect module 112, and memory 108 are in the "interconnect domain," and are coupled to UPA modules 102 by their respective UPA ports 104. The interconnect domain is fully synchronous with a centrally distributed system clock signal, generated by a System Clock 118, which is also sourced to the UPA modules 104. If desired, each UPA module 102 can synchronize its private internal clock with the system interconnect clock. All references to clock signals in this document refer to the system clock, unless otherwise noted.

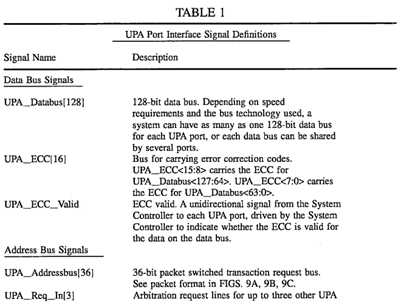

Each UPA address bus 114 is a 36-bit bidirectional packet switched request bus, and includes 1-bit odd-parity. It carries address bits PA[40:4] of a 41-bit physical address space as well as transaction identification information.

Referring to FIG. 1 and 2, there may be multiple address busses 114 in the system, with up to four UPA ports 104 on each UPA address bus 114. The precise number of UPA address busses is variable, and will generally be dependent on system speed requirements. Since putting more ports on an address bus 114 will slow signal transmissions over the address bus, the maximum number of ports per address bus will be determined by the signal transmission speed required for the address bus.

The datapath circuitry (i.e., the interconnect module 112) and the address busses 114 are independently scaleable. As a result, the number of address busses can be increased, or decreased, for a given number of processors so as to optimize the speed/cost tradeoff for the transmission of transaction requests over the address busses totally independently of decisions regarding the speed/cost tradeoffs associated with the design of the interconnect module 112.

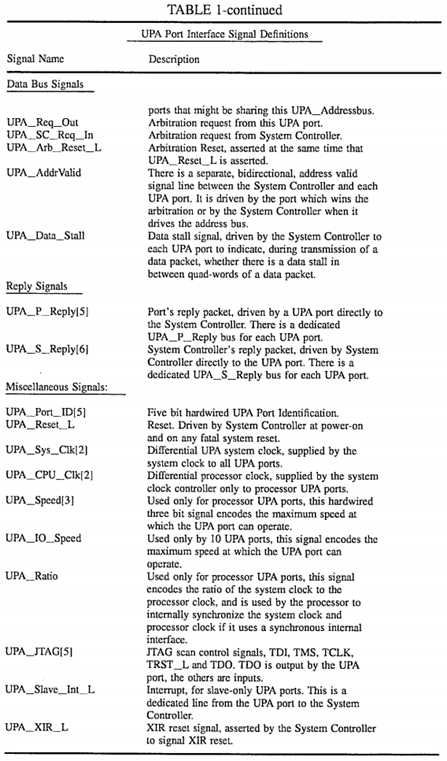

FIG. 3 shows the full set of signals received and transmitted by a UPA port having all four interfaces (described below) of the preferred embodiment. Table 1 provides a short description of each of the signals shown in FIG. 3.

A valid packet on the UPA address bus 114 is identified by the driver (i.e., the UPA port 104 or the System Controller 110) asserting the UPA_Addr_valid signal.

The System Controller 110 is connected to each UPA address bus 114 in the system 100. The UPA ports 104 and System Controller 110 arbitrate for use of each UPA address bus 114 using a distributed arbitration protocol. The arbitration protocol is described in patent application Ser. No. 08/414,559, filed Mar. 31, 1995, which is hereby incorporated by reference.

UPA ports do not communicate directly with other UPA ports on a shared UPA address bus 114. Instead, when a requesting UPA port generates a request packet that requests access to an addressed UPA port, the System Controller 110 forwards a slave access to the addressed UPA port by retransmitting the request packet and qualifying the destination UPA port with its UPA_Addr_valid signal.

A UPA port also does not "snoop" on the UPA address bus to maintain cache coherence. The System Controller 110 performs snooping on behalf of those UPA ports whose respective UPA modules include cache memory using a write-invalidate cache coherence protocol described below. The UPA address bus 114 and UPA data bus 116 coupled to any UPA port 104 are independent. An address is associated with its data through ordering rules discussed below.

The UPA data bus is a 128-bit quad-word bidirectional data bus, plus 16 additional ECC (error correction code) bits. A "word" is defined herein to be a 32-bit, 4-byte datum. A quad-word consists of four words, or 16 bytes. In some embodiments, all or some of the data busses 116 in the system 110 can be 64-bit double word bidirectional data bus, plus 8 additional bits for ECC. The ECC bits are divided into two 8-bit halves for the 128-bit wide data bus. Although the 64-bit wide UPA data bus has half as many signal lines, it carries the same number of bytes per transaction as the 128-bit wide UPA data bus, but in twice the number of clock cycles. In the preferred embodiment, the smallest unit of coherent data transfer is 64 bytes, requiring four transfers of 16 bytes during four successive system clock cycles over the 128-bit UPA data bus.

A "master" UPA port, also called a UPA master port, is herein defined to be one which can initiate data transfer transactions. All data processor UPA modules must have a master UPA port 104.

Note that graphics devices, which may include some data processing capabilities, typically have only a slave interface. Slave interfaces are described below. For the purposes of this document, a "data processor" is defined to be a programmable computer or data processing device (e.g., a microprocessor) that both reads and writes data from and to main memory. Most, but not necessarily all, "data processors" have an associated cache memory. For instance, an I/O controller is a data processor and its UPA port will be a master UPA port. However, in may cases an I/O controller will not have a cache memory (or at least not a cache memory for storing data in the coherence domain).

A caching UPA master port is a master UPA port for a data processor that also has a coherent cache. The caching UPA master port participates in the cache coherence protocol.

A "slave" UPA port is herein defined to be one which cannot initiate data transfer transactions, but is the recipient of such transactions. A slave port responds to requests from the System Controller. A slave port has an address space associated with it for programmed I/O. A "slave port" within a master UPA port (i.e., a slave interface within a master UPA port) also handles copyback requests for cache blocks, and handles interrupt transactions in a UPA port which contains a data processor.

Each set of 8 ECC bits carry Shigeo Kaneda‘s 64-bit SEC-DED-S4ED code. The interconnect does not generate or check ECC. Each UPA port sourcing data generates the corresponding ECC bits, and the UPA port receiving the data checks the ECC bits. UPA ports with master capability support ECC. Slave-only UPA port containing a graphics framebuffer need not support ECC (See UPA_ECC_Valid signal).

The UPA data bus 116 is not a globally shared common data bus. As shown in FIGS. 1 and 2, there may be more than one UPA data bus 116 in the system, and the precise number is implementation specific. Data is always transferred in units of 16 bytes per clock-cycle on the 128-bit wide UPA data bus, and in units of 16 bytes per two clock-cycles on the 64-bit wide UPA data bus.

The size of each cache line in the preferred embodiment is 64 bytes, or sixteen 32-bit words. As will be described below, 64 bytes is the minimum unit of data transfer for all transactions involving the transfer of cached data. That is, each data packet of cached data transferred via the interconnect is 64 packets. Transfers of non-cached data can transfer 1 to 16 bytes within a single quad-word transmission, qualified with a 16-bit bytemask to indicate which bytes within the quad-word contain the data being transferred.

System Controller 110 schedules a data transfer on a UPA data bus 116 using a signal herein called the S_REPLY. For block transfers, if successive quadwords cannot be read or written in successive clock cycles from memory, the UPA--Data_Stall signal is asserted by System Controller 110 to the UPA port.

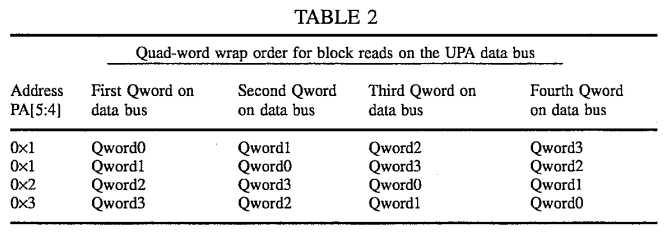

For coherent block read and copyback transactions of 64-byte data blocks, the quad-word (16 bytes) addressed on physical address bits PA[5:4] is delivered first, and the successive quad words are delivered in the wrap order shown in Table 2. The addressed quad-word is delivered first so that the requesting data processor can receive and begin processing the addressed quad-word prior to receipt of the last quad-word in the associated data block. In this way, latency associated with the cache update transaction is reduced. Non-cached block read and block writes of 64 byte data blocks are always aligned on a 64-byte block boundary (PA[5:4]=0×0).

Note that these 64-byte data packets are delivered without an attached address, address tag, or transaction tag. Address information and data are transmitted independently over independent busses. While this is efficient, in order to match up incoming data packets with cache miss data requests an ordering constraint must be applied: data packets must be transmitted to a UPA port in the same order as the corresponding requests within each master class. (There is no ordering requirement for data requests in different master classes.) When this ordering constraint is followed, each incoming data packet must be in response to the longest outstanding cache miss transaction request for the corresponding master class.

Request and Reply Messages

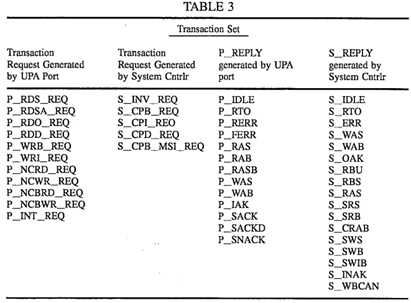

Transactions are initiated by "request" messages and are executed upon the receipt of a "reply" message. Any request by a UPA port is herein labelled P_REQ, which stands for "port request." A port request is transmitted via the UPA port‘s address bus 114. If the address bus 114 is shared by more than one UPA port, the requesting port transmits its request only after it successfully arbitrates for the address bus.

Each port request is acknowledged by the System Controller 110 via a reply message called S_REPLY. There is a dedicated point-to-point 5-bit system reply bus, S_REPLY bus 120, for each UPA port that unidirectionally sends 5-bit reply messages from the System Controller 110 to each UPA port. The System Controller 110 drives a reply code on the S_REPLY bus 120 in acknowledgment to a transaction request, and to coordinate the sourcing and sinking of data on the UPA data bus 116. More particularly, the System Controller 110 generates an S_REPLY in response to a P_REQ either when the System Controller 110 is ready to set up the data path needed to perform the requested transaction, or when the requested transaction is completed if the transaction (such as an invalidate transaction request) does not involve a data transfer. The S_REPLY message is generated by the System Controller at essentially the same time that the System Controller sends the corresponding setup signals to the interconnect module 112.

Each transaction initiated by the System Controller 110 is initiated by sending a S_REQ (i.e., system controller request) message over the address bus 114 coupled to the UPA port 104 to which the request is directed. Transactions initiated by the System Controller 110, are generally "nested transactions" performed by the System Controller 110 in response to a transaction request by a UPA port. For instance, a certain memory transaction request by a UPA port may require that all cache entries in other UPA ports for the requested data block be invalidated before the System Controller can transfer the requested data block to the requesting UPA port‘s cache. The cache invalidations are performed by the System Controller making transaction requests to each UPA port whose cache stores the requested data block.

Each UPA port 104 has a dedicated point-to-point 5-bit port reply bus, P_REPLY, 122 used by that port to acknowledge System Controller requests.

All system controller requests are directed to the "slave port" portion of the target UPA port. The UPA port‘s slave port drives a reply code on the P_REPLY bus 122 in acknowledgment to a transaction received from the System Controller 110 to indicate that requested data is ready for read requests, that transmitted data has been absorbed for write requests, that cache invalidations are complete for invalidation requests, and that an interrupt has been handled for interrupt requests.

The System Controller 110 uses the P_REPLY acknowledgement message received from the UPA slave port to trigger the transmission of its S_REPLY message to the requesting UPA port.

Cache Memories, Tags and Snoopbus

Referring to FIG. 1, for each UPA module 102 that includes a cache memory 130, a primary cache index 132 having a set of primary cache tags called Etags is maintained. It should be understood that in most implementations, the cache memory 130 is a "second level cache" or "third level cache," because the data processor 178 (shown in FIG. 7) in the UPA module 102 will typically include an embedded first level or second level cache. The duplicate cache tags are only maintained for the outer most direct mapped coherent cache for each data processor, and any other lower level caches are considered private to the UPA port and are kept coherent by the UPA port through perfect inclusion.

There is one Etag for each line of the cache memory 130, and each line of the cache stores one 64-byte (16-word) data block. In the preferred embodiment, each Etag stores tag state and a set of address bits that identify the address of the 64-byte block stored in the cache line.

As just stated, the cache block size is 64 bytes. The unit of cache coherence is also 64 bytes. Each UPA port can have a different sized cache. In addition, in the preferred embodiment, only direct mapped cache memories are used in data processor and I/O UPA ports. I/O UPA ports may have any of a variety of cache memory structures. System Controller support for such cache memory structures is implemented through dedicated duplicate tags of a like structure in the System Controller. In the preferred embodiment, the I/O UPA port has a plurality of fully associative coherent buffers with a corresponding number of Dtags in the System Controller.

As is standard, the number of address bits required to identify the data block depends on the size of the cache memory and the size of the address space mapped into the cache memory. For example, for an 8-gigabyte address space, and a 512 kilobyte direct mapped cache memory, 14 address bits are needed to identify the data block in each line of the cache memory. That is, given a 33-bit address PA[32:0] for a specific byte, and a 27-bit address PA[32:6] for the corresponding 64-byte data block stored in a 512 kilobyte cache memory with 64-byte lines, the 14 most significant address bits PA[32:19] of the data block‘s full address are stored in the cache tag to identify the data block, and the next 13 bits PA[18:6] of the data block‘s address determine which cache line in which the data block is stored. In system 100, with a 1-terabyte coherent address space PA[39:0] and a 512 kilobyte direct mapped cache memory 130, each Etag will need to store the 21 most significant bits of the full address of the data block stored in the corresponding line of the cache memory.

The number of address bits stored in the cache index and hence the cache size for each master UPA port is determined by system initialization software by probing the port ID register 158 of each UPA port, as will be explained in more detail below.

In order to avoid snoop interference with a processor‘s reference to its coherent cache in multiprocessor systems, a duplicate set of tags (Dtags 134 that mirror the UPA module‘s Etags 132, is maintained by System Controller 110 for each UPA module having a cache memory that is to be kept coherent with the other cache memories in the system 100. The Dtags 134 support direct mapped cache memories. For each Etag entry there is a corresponding Dtag entry, such that a lookup on the Dtags by the System Controller 110 correctly indicates the corresponding Etag state for a data block, without interfering with a processors access to its Etags.

The Snoopbus 140 is an address bus capable of carrying all the relevant physical address bits PA[40:6] corresponding to the size of the cacheable address space in the system (size of main memory space). The Snoopbus further includes two bidirectional bit lines, a match signal line and a write control line for each Dtag array 134. The two bit lines convey a 2-bit cache line state from the Dtags arrays 134 to the System Controller 100 when the Dtags are being read, and are used to transmit a 2-bit updated line cache when the System Controller 110 updates the Dtags. The match line for a particular Dtag array carries a match signal indicating whether the address on the Snoopbus 140 matches the address of a data block stored in the associated cache memory. The match signal is equivalent to the cache hit/miss signal generated by the cache memory‘s primary cache index when presented with the same address, except that the match signal is generated from the Dtag array without interfering with the operation of the cache memory‘s primary index (i.e., the Etag array).

The Snoopbus 140 is scaleable independent of the address busses 114 and the independent of the data busses 116. Thus, the number of parallel snoopbusses 140 used and the number of Dtag arrays 134 which load each Snoopbus 140 can be based solely on the speed requirements of the Dtag lookup and update operations, without any dependence on the speed requirements of the address and data busses 114, 116.

UPA PORT MODEL

Each UPA port 104 is identified by a unique 5-bit value, called the Port ID or UPA_Port_ID (see FIG. 3). This allows a maximum of 32 UPA ports in a system 100.

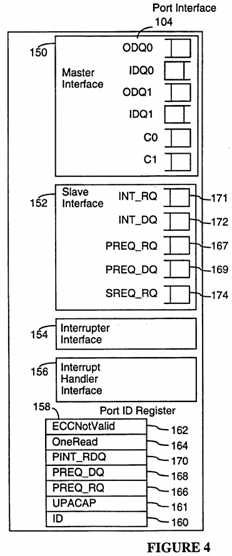

Referring to FIG. 4, each UPA port can have as many as four functional interfaces: a master interface 150, a slave interface 152, an interrupter interface 154 and an interrupt handler interface 156. All UPA ports include the UPA slave interface 152 and a port ID register 158. The port ID register 158 is used by the UPA port 104 to communicate its capabilities to the System Controller 110.

As shown in FIG. 4, these interfaces includes a number of queues. The slave interface 152 includes input request queues for receiving transaction requests (PREQ, SREQ), interrupt requests (INT), and data (PREQ_DQ, INT_DQ) associated with the requests. The master interface 150 includes queues C0, C1 for outbound requests and optionally includes inbound and outbound data queues IDQ0, ODQ0, IDO1, ODQ1 for each master class.

Some of the following explanations make reference to specific transactions and reply messages. All such transactions and reply messages are described in the section of this document entitled "Detailed Description of Transactions."

The fields of the port ID register 158 are as follows:

? the ID field 160 is a 16-bit field that identifies the UPA module associated with the port, including 6 bits for a manufacturer‘s ID (assigned by Sun Microsystems, Inc.), 6 bits for the module or processor type (assigned by the manufacturer), and 4 bits for a module version/revision number (assigned by the manufacturer).

? The UPACAP field 161 is a 5-bit mask field to indicate the capabilities of the UPA port.

? UPACAP[0] is set if UPA has a master interface.

? UPACAP[1] is set if the UPA module has a cache (making the UPA port a "CacheMaster").

? UPACAP[2] is set if the UPA port has an interrupter interface using the UPA_Slave_Int_L signal. This bit is set primarily by slave-only UPA ports. Software assigns this UPA port a target-MID corresponding to an interrupt handler.

? UPACAP[3] is set if the UPA port has an interrupter interface using the P_INT_REQ transaction request protocol. Software assigns this UPA port a target-MID corresponding to an interrupt handler.

? UPACAP[4] is set if the UPA port has an interrupt handler interface. The System Controller forwards P_INT_REQ interrupt requests from other UPA ports to this port only if this bit is set.

? The ECCNotValid field 162 is a 1-bit field to indicate that this UPA port does not support ECC. This field is set to 0×0 if the UPA port can generate ECC when sourcing data. It is set to 0×1 if the UPA port does not support ECC generation when sourcing data, and requires the System Controller 110 to indicate to the recipient UPA port to disable ECC checking. When ECCNotValid is set to 0×1, the UPA port will also not support ECC checking on the UPA data bus, nor parity checking on the UPA address bus.

? The ONEREAD field 164 is a 1-bit field to indicate that this UPA port only supports one outstanding slave read P_REQ transaction to it at a time. If the ONEREAD field is set, this UPA port cannot issue P_RAB_REPLY and P_RASP--REPLY messages, it must use the P_RASB_REPLY reply message. As a result, if the ONEREAD field is set, this UPA port will use the P_RASB reply for P_NCRD_REQ and P_NCBRD_REQ transactions directed to it for slave access. As will be explained below, the System Controller will keep the MID, Class, and size information for these transactions on behalf of the UPA port. The P_NCRD_REQ transaction request message and the P_RASB reply message are also described in more detail below.

? The PREQ_RQ[3:0] field 166 is a 4-bit field that encodes the size of the PREQ_RQ queue 167 in the slave interface 152. This field specifies the maximum number of (2 cycle) incoming P_REQ transaction request packets that the UPA slave interface 152 can simultaneously store. The minimum value of PREQ_RQ is 0×1, since every UPA port, at a minimum, must support a slave read of its port ID register.

? The PREQ_DQ[5:0] field 168 is a 6-bit field that encodes the size of the PREQ_DQ queue 169. This field specifies the number of incoming quadwords (16-byte quantities) that the UPA slave port can receive in its P_REQ write data queue 169. The write data queue PREQ_DQ must accommodate data blocks (64 bytes) for the maximum number of requests supported in the PREQ_RQ request queue. As a result, PREQ_DQ data queue is always 4 times the size specified in the PREQ_RQ field 166, except that PREQ_DQ can be set equal to 0×0 if the UPA port does not support slave writes.

? The PINT_RDQ[1:0] field 170 is a 2-bit field that encodes the size of the INT_RQ and INT_DQ queues 171, 172. The PINT_RDQ field 170 is valid only if the UPA port has an Interrupt Handler interface 156. The size of the interrupt request queue, INT_RQ 171, is equal to the binary value of this field plus 1. The maximum size of the interrupt request queue is four interrupt requests. In summary, this field specifies the number of (2 cycle) incoming P_INT_REQ requests that the UPA slave port can receive, as well as the number of 64-byte interrupt data blocks the UPA slave interface can receive.

With regard to the UPACAP field, there is no mask bit to indicate slave capability, as every UPA port is required to have a slave interface in order for the port ID register to be read. The following are some examples of the settings for the UPACAP field. A full function UPA port such as a processor module, will have its UPACAP mask set to 0×1B. A slave only UPA port such as a graphics device that is only mapped for slave access and does not interrupt, will have its UPACAP mask set to 0×0. A smart slave only UPA port that generates interrupts (with UPA_Slave_Int) will have its UPACAP mask set to 0×04. An I/O UPA port that performs DVMA (direct virtual memory access), does not have a cache, and generates P_INT_REQ interrupt request transaction will have its UPACAP mask set to 0×9.

Master Interface

A master interface 150 is optional. A UPA master interface allows a UPA port to initiate transaction requests (P_REQ). A UPA port 104 having a mater interface 150 is herein called a master port.

The UPA module for a master port may contain a physically addressed coherent cache, in which case it is called a cache master port. The cache participates in the "MOESI cache coherence protocol" (which is explained in detail below), and responds to copyback-invalidation requests from the System Controller 110. The coherent cache has total inclusion of any other private local caches in the UPA module. In the preferred embodiment, each caching UPA master port can at most have one outstanding dirty victim writeback, in part because each data processor‘s cache memory has only a single writeback buffer (see buffer 280 in FIG. 9), and in part to avoid complex control logic that multiple writeback buffers would require.

In other embodiments, where the data processor can issue multiple loads and cache misses or prefetches, there can be a plurality of writeback buffers in the data processor and a corresponding number of Dtag transient buffers in the System Controller. The writeback handling logical protocols described here work with any number of outstanding writebacks from the UPA port.

A UPA master interface 150 has up to two independent outgoing request queues C0 and C1 for two "classes" of transaction requests. C0 and C1 are also called "master classes," since they are classes of transactions initiated by UPA master ports. The UPA master interface may issue a transaction request from any class. A bit in each transaction request packet specifies to the System Controller which class the request is from. The port ID of the UPA port is also carried in the transaction packet in the MID field (see discussion of transactions, below). The master interface 150 may also include inbound and outbound data queues, IDQ0, ODQ0, and IDQ1, ODQ1, for each of the transaction classes.

The purpose of having two or more transaction classes (herein called master classes) is to increase parallel execution of memory transactions by allowing each data processor to indicate which memory transactions need to be sequentially ordered and which do not. Transactions in each master class from a particular data processor are "strongly ordered," which means that the transactions in each class must be completed in the same order that the data processor generates the memory requests in that class. There is no ordering requirement between memory transactions in different classes. Thus, if a data processor issues memory transaction requests T1-1, T1-2 and T1-3 in sequence in class 1 and memory transactions T2-1 and T2-2 in sequence in class two, the System Controller must complete transactions T1-1, T1-2 and T1-3 in the same sequential order that they were generated. Similarly, the System Controller must complete transactions T2-1 and T2-2 in the same sequential order that they were generated; but it can complete transactions T2-1 and T2-2 at any time with respect to transactions T1-1, T1-2 and T1-3.

Data queues are used in the preferred embodiments to simplify the handling of data transfers. Outbound data queues are always filled with data to be transmitted before the corresponding transaction request or reply message is transmitted. In this way, when a data write transaction request message is transmitted, or a "data ready" reply message is transmitted by a UPA port, the interconnect is assured that the associated data is ready for immediate transmission. Inbound data queues, most of which are optional, are typically implemented using FIFO (first-in first-out) buffers that can be filled independently of the status of any other logic. As a result, whenever inbound data queues are provided in a UPA port, neither the UPA module (such as a data processor) nor its UPA port needs to manage the handshake protocol for receiving data packets. Rather, the data is simply stored by the interconnect in the inbound data queue and the associated UPA port or its UPA module processes that data whenever the required resources are available.

The S_REPLY for the transactions in each master request class are issued by System Controller 110 to the requesting master UPA port in the same order as the order in which the transaction requests were originally issued by the requesting UPA port. This requirement is implemented in the System controller by (A) treating each master class request queue SCIQ0/1 as a first-in first-out buffer such that the transactions within each master request class are activated by the System Controller strictly in the same order as the order in which the transaction requests are issued, and (B) within the set of active transactions, queuing the issuance of S_REPLY‘s for transactions having the same requesting UPA port and master class in same order as those transactions were activated.

As noted above, there is no ordering relation between the two transaction request classes C0, C1. The S_REPLY for a request from one class may come earlier or later than the S_REPLY for a request from the second class, without regard to the order in which those requests were transmitted to the System Controller.

There is also no ordering relation between requests from different master UPA ports. Read/write requests from different master UPA ports directed to a UPA port‘s slave interface can be executed by the UPA port slave interface in any order, but requests from the same master UPA port and in the same master request class will be executed by the UPA port slave interface in the same order as they are received by the UPA port slave interface.

References to I/O devices are sequentially consistent. The slave UPA interface orders transactions by device addresses. All references to the same I/O device (or a predefined address range) are required to complete in the same order that they arrive in at the UPA slave interface. However, there is no ordering of references going to different I/O devices hanging off the same UPA slave interface (such as in a bus bridge) and the UPA slave interface is allowed to execute transactions going to different I/O devices (or different predefined address ranges) in parallel.

Each UPA module is required to put in a single master request class all transaction requests for which ordering is important. The preferred class assignment for all processor UPA modules is as follows:

? Class 0 is used for read transactions due to cache misses, and block loads.

? Class 1 is used for writeback requests, WriteInvalidate requests, block stores, interrupt requests, and non-cached read/write requests.

This assignment of memory transactions to classes enables the memory transactions caused by cache misses to not be blocked by other transactions and is especially significant when the data processor supports multiple outstanding loads and/or prefetching. This gives the lowest possible latency for cache fills, in conjunction with other optimizations.

The interconnect can maximize parallel execution of transactions and allow them to complete in any order, except for transactions from the same UPA port and class. For maintaining coherence, and to achieve sequential consistency from the programmer‘s point of view, the TSO (total store order), PSO (partial store order) and RMO (relaxed memory order) memory models and the SSO (strong sequential order) I/O space memory model are supported without actually making the hardware of the interconnect sequentially consistent.

A UPA master port is solely responsible for the ordering of its internal memory events based on its memory model, and can issue any combination of transactions from any request class to support that memory model ordering requirement. The UPA port‘s data processor may use the two master classes to parallelize and order transactions as needed based on its local memory model. All barriers and synchronizations are enforced by the data processor based on its memory model before it issues the transactions from the master classes.

All data transactions always complete and there is no retry NACK from the System Controller 110 to the master UPA port (with the exception of one interrupt transaction).

The UPA master port must not make a read/write slave access to its own slave port, nor make a request for a data block which is already in its cache, nor send interrupts to itself. Loopback is not supported by the preferred embodiment of the present invention due to electrical constraints associated with the connectors. However, there is nothing in the system architecture of the present invention that logically prevents the use of loopbacks. In fact, the protocols of S_REPLY, data transfers and cache coherence are designed to work with lookback.

Slave Interface

All UPA ports include a slave interface 152, and all UPA ports implement the port ID register 158. A slave interface 152 can only respond to transactions, it cannot initiate them. A slave interface 152 is sometimes herein called a "slave port". All references to slave ports mean the slave interface of a UPA port, regardless of whether or not the UPA port is a master UPA port.

A UPA slave interface 152 on a caching master UPA port allows the UPA 6 port to receive copyback-invalidation requests from System Controller 110. A UPA slave interface 152 allows a UPA port to receive interrupt packet transactions if the slave interface is part of a UPA port that includes an interrupt handler interface 156.

A UPA slave interface has a noncached address space, and allows programmed I/O (PIO) read and write access to devices and registers, including reading its port ID register 158, on the UPA module from master UPA ports. Each UPA slave interface is assigned an 8-gigabyte non-cached address space. When a UPA port sees the UPA_Addr_Valid signal asserted to it, if the most significant address bit, PA[40], is equal to 1, the physical address bits PA[32:4] from the transaction request packet represent addresses in the non-cache address space.

The UPA interconnect architecture does not define the system wide address space, nor the address decodes for any of the system registers, except the port ID register 158.

A UPA slave interface processes PIO read/write transaction requests from the same master class from a master UPA port in the same order as those requests are received. That is, it sends P_REPLY messages for those transaction requests in the same order as the transaction requests were received. It need not, however, maintain any ordering for requests in different master classes from a UPA port, or for requests from different UPA ports.

If the UPA slave interface is connected to an I/O bus interface, it is required that the I/O bus interface also maintain the ordering for the transactions it receives for each distinct address or address range. For instance, a write transaction to address A (or to device A) on the I/O bus followed by a read transaction to address A (or to device A) on the same I/O bus must not cause the I/O bus interface to reorder the read before the write. However, a write to address A (or to device A) followed by read of address B (or to device B) can be executed by the I/O bus interface in any order. The precise mechanism for I/O bus ordering can vary from implementation to implementation of the I/O bus interface. However, a blocking bit and blocking bit map scheme similar the one described above for class ordering can be used for both class based ordering and I/O address based ordering.

A UPA slave interface cannot guarantee write completion on a write transaction. A write followed by a read (by the same processor) will return the result of the last write if the location exists. However, since read-write to I/O device registers can have implementation specific side affects, the semantics of this is up to each I/O device.

A master UPA port communicates to a slave UPA port only through the interconnect module 112, even if the two are sharing the same UPA address bus.

A slave-only UPA port (a UPA port that does not have a master interface) can use a dedicated interrupt wire to signal an interrupt to the System Controller. The System Controller will generate an interrupt packet for it, and will forward it to an interrupt handler UPA port.

The System Controller 110 handles flow control of requests to a UPA port‘s slave interface by knowing the maximum size of the three slave request receive queues (PREQ_RQ, SREQ_RQ, INT_RQ) and the two data queues (PREQ_DQ, INT--DQ) shown in FIGS. 4 and 5. The port ID register 158 for each UPA port specifies the maximum number of outstanding transaction requests of each type it can simultaneously stored in its queues, and thus how many such requests can be sent to it by System Controller 110 until some of those requests have been services. The port ID register 158 also specifies the maximum number of quad-words (16-byte units) that each of the data queues can store.

The UPA slave port interface 152 cannot reply with a "retry NACK" to any transaction forwarded to it. To avoid the need for such negative acknowledgement messages, the System Controller 110 does not issue more requests to the UPA slave interface than what the slave interface has room for in its queues. A P_REPLY from the UPA slave interface to acknowledge the completion of a previous transaction informs System Controller 110 that there is room for one more request of that type in that slave UPA port‘s incoming request queue.

The maximum size of system controller request queue SREQ_RQ 174 in the slave port interface 152 in the preferred embodiment is fixed at 1. Thus there can be at most one outstanding S_REQ to a slave UPA port.

The reason a single entry request queue SREQ_RQ 174 can be used without hurting system performance is that all S--REQ requests are given the highest priority service by the slave interface and are serviced so quickly that queuing S_REQ requests is unnecessary. In particular, as shown in FIG. 7, in the preferred embodiment, the cache controller 176 in each caching UPA master port 104 is dual ported such that the cache controller accepts cache access requests from both the port‘s data processor 178 and from the SREQ_RQ request queue, with the SREQ_RQ being given higher priority than the data processor. Using this configuration, SREQ‘s are generally serviced by each slave interface within two to five system clock cycles of their storage in the SREQ_RQ request queue 174. In particular, if an atomic read-modify-write cache memory operation was started by the data processor 178 one clock cycle immediately prior to the SREQ being asserted, it might take as long as three additional system clock cycles for that cache transaction to complete, after which the SREQ would be serviced, typically within two system clock cycles.

Furthermore, using the dual ported cache controller methodology described above, a copyback request from one data processor 178 to another will complete in approximately the same amount of time as a load from main memory when the interconnect has no competing memory transactions. In particular, in the preferred embodiment, a load from main memory takes about 8 system clock cycles to complete, and a copyback from one data processor‘s cache memory to another data processor‘s cache memory also takes about 8 system clock cycles to complete when there are no competing memory transactions.

In most implementations, each UPA slave interface is required to perform parity checks on transaction requests received via the UPA address bus and to report any parity error with a fatal error P_REPLY message. In most implementations each UPA slave interface 152 is also required to perform an ECC check on write transactions if ECC is valid, and to log and report data errors.

Interrupter Interface

An interrupter interface 154 is optional. If the UPA port supports a master interface 150, it can issue an interrupt packet transaction from any master class in the master UPA port to a target slave UPA port that is an interrupt handler.

An interrupter interface in a master UPA port generates interrupts by initiating a P_INT_REQ transaction (see discussion of transactions, below). The master UPA port generates an interrupt packet for a particular destination interrupt handler UPA port by specifying a target-ID<4:0> in the request packet. The target-ID is the same as the unique 5-bit UPA_Port--ID of the destination UPA port. An interrupt directed by a UPA Port to itself is not supported on the UPA interface in the preferred embodiment due to electrical connector considerations, but could well be supported in alternate embodiments of the present invention.

The target-ID of one (or more) interrupt handler UPA port(s) is assigned to each non-processor interrupter UPA port by system initialization software. The non-processor interrupter UPA port can then send interrupt transactions only to the assigned target-IDs. A processor UPA port can send interrupt transactions to any interrupt handler target-ID (for processor to processor software cross calls).

The target-ID<4:0> for each interrupt transaction request P_INT_REQ is carried in the physical address field PA<18:14> in the first cycle of the two cycle interrupt packet (see FIG. 9C). The UPA port can put the P_INT_REQ in any master request class. There is no requirement that it should be in one class or another. It is, however, put in class 1 in the preferred embodiment so as not to block any cache fill transactions.

If the UPA port that initiated the interrupt transaction receives a S_INAK reply (otherwise known as a NACK reply), the requesting UPA port removes the interrupt data from its out-going data queue and the P_INT_REQ request from the master request class queue, and retries after some back-off interval.

If the UPA port receives a S_WAB reply for driving the 64 bytes of interrupt data on the UPA data bus, then it is guaranteed that both the P_INT_REQ and the data will be delivered to the target UPA port by the System Controller (just like a non-cached block write transaction) if the target is a valid interrupt handler. If the target is not an interrupt handler, then either the System Controller may discard it (and set a status bit), or the recipient UPA port may discard it (silently).

An interrupter can send multiple back-to-back P_INT_REQ with different target-IDs (destination UPA Ports). If the interrupt packet can be delivered by the System Controller, it will be accepted. Otherwise it will be NACK‘ed by the System Controller.

If an interrupter issues multiple back-to-back P_INT_REQ transactions to different UPA ports, there is no guarantee that they will get delivered in the same order. However back-to-back P_INT_REQ specifying the same target-ID will be delivered by the System Controller to the target UPA port in the same order, provided that any interrupt requests that result in NACKs from the System Controller 110 are also retried by the UPA interrupter interface in their original issue order.

If the UPA port does not support a master interface, but requires an interrupt interface 154, the interrupt interface 154 is coupled by a dedicated wire (labelled UPA_Slave_Int_L in FIG. 3) to signal a single priority level interrupt to the System Controller 110. The System Controller 110 will cause an interrupt packet to be generated and sent to an Interrupt handler UPA port.

In slave UPA ports having an interrupter interface, a second interrupt cannot be asserted on the UPA_Slave_Int_L line until the interrupt handler clears the interrupt with a slave write to a predefined interrupt-clear register in the slave UPA port. In addition, only a single interrupt priority level is available for interrupts generated using the UPA_Slave_Int_L line.

Interrupt Handler Interface

A UPA port may be an interrupt handler 156. A data processor UPA module will typically support the interrupt handler interface. In order to be an interrupt handler, the UPA port must support the INT and INT_DQ queues shown in FIG. 16. The max size of the INT request queue is 4 interrupts.

The P_INT_REQ that is deposited in the INT request queue is examined by the processor. In the preferred embodiment, it causes a trap to a software interrupt handler. After the interrupt handler has completed handling the interrupt, it causes the P_REPLY to be generated by the UPA port to the System Controller to indicate that the P_INT_REQ has been handled and that there is room for another P_INT_REQ in its incoming interrupt request queue. In the preferred embodiment, the P_REPLY is generated when the software performs a write to an `interrupt clear` register in the interrupt handler interface.

System Controller Registers

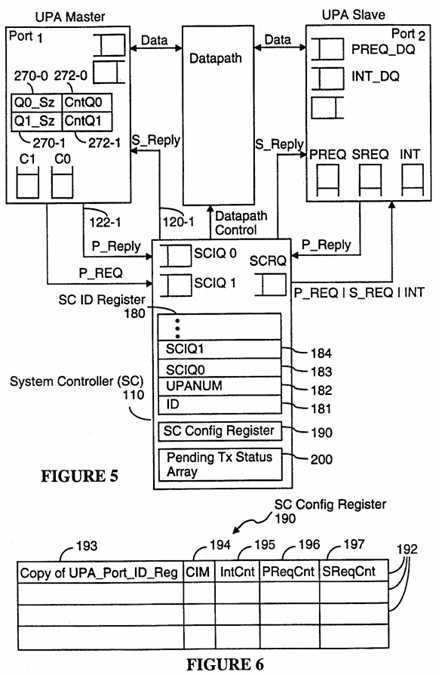

Referring to FIG. 5, the System Controller 110 includes separate queues for receiving transaction requests in each master class (SCIQ0, SCIQ1), as well as a queue (SCRQ) for both requests it generates and requests it forwards to UPA ports. The System Controller 110 also includes an SC ID register 180 for communicating its capabilities to the UPA ports, an SC Config Register 190, and a pending transaction status array 200. The SC Config Register 190 is used for storing the capabilities of all the UPA ports in the system, as well as to keep track of how many transaction requests are currently stored in the input queues of each of the UPA ports 104, and the pending transaction status array 200 is used by the System Controller to keep track of all Inactive and Active pending transactions.

The fields of the SC ID register 180 are as follows:

? the ID field 181 is a 16-bit field that identifies the System Controller.

? The UPANUM field 182 is a 5-bit mask field that specifies the maximum number of UPA ports the System Controller can support.

? SCIQ0[3:0] field 183 is a 4-bit field that specifies the number of (2-cycle) requests packets that can be stored in the incoming class 0 request queue SCIQ 0 for a particular UPA port.

? SCIQ1[3:0] field 184 is a 4-bit field that specifies the number of (2-cycle) requests packets that can be stored in the incoming class 1 request queue SCIQ 1 for a particular UPA port.

There is a separate set of SCIQ0 and SQIC1 registers for each master UPA port to indicate the size of the SCIQ0 and SCIQ1 request input queue for each such master UPA port.

Referring to FIG. 6, the SC Config register 190 includes one row or record 192 for each UPA port that can be supported by the System Controller 110. Each row‘s position in the SC Config register corresponds to the port ID of the corresponding UPA port. Thus, the first row of the SC Config register 190 stores configuration data for the UPA port with port ID 00000, the second row stores configuration data for the UPA port with port ID 00001, and so on. Each such record is herein called a "port record in the SC Config register". The fields in each port record in the SC Config register 190 are as follows:

? Copy of UPA_Port_ID_Reg 193 is, literally, a copy of the port ID register of the corresponding UPA port.

? Cache Index Mask (CIM) field 194 specifies the number of Etag block entries or lines in the coherent cache, if any, of the corresponding UPA port. This indicates to the System Controller how many low order address bit of the physical address PA{40:6] to use for address compares for implementing the cache coherence protocol. This field if valid only for cache master UPA ports.

? IntCnt field 195 is the number of interrupt requests that the System Controller 110 has forwarded to the corresponding UPA port that have yet to be acknowledged by that UPA port. The System Controller blocks the transmission of additional interrupt requests to the UPA port whenever the value in this field is equal to the PINT_RDQ[1:0] field 170 in its copy 193 of the UPA_Port_ID register.

? PReqCnt field 196 is the number of port transaction requests that the System Controller 110 has forwarded to the corresponding UPA port that have yet to be acknowledged by that UPA port. The System Controller blocks the transmission of additional port transaction requests to the UPA port whenever the value in this field is equal to the PREQ_RQ[3:0] field 166 in its copy 193 of the UPA port ID register.

? SReqCnt field 197 is the number of System Controller transaction requests sent to the corresponding UPA port that have yet to be acknowledged by that UPA port. The System Controller blocks the transmission of additional System Controller transaction requests to the UPA port whenever the value in this field is equal to 1, since the maximum size of the SREQ queue in each slave interface is one.

Data Flow

Referring to FIGS. 5 and 7, typical read/write data flow to and from memory is as follows. Although not explicitly shown in the Figures, the System Controller 110 includes a separate set of SCIQ 0 and SCIQ 1 input request queues for each master UPA port. In addition, it is to be noted that the flow chart in FIG. 8 does not show all the steps of all data transfer transactions. Rather, it shows only those steps that are common to most data transfer transactions. Further details of all the defined data transfer transactions are described in the section of this document entitled "Detailed Description of Transactions."

The UPA master port issues a read/write transaction request (P_REQ) on its UPA address bus to the System Controller 110 (210), which the System Controller receives in one of its two request input queues (212). If it is a coherent request (214), System Controller 110 does a Dtag lookup (snoop) operation on the Snoopbus, followed by a Dtag update operation (216). At the same time as the Dtag lookup, if a read transaction is being performed on an address located in main memory, the System Controller starts a memory cycle (217).

A "snoop operation" simultaneously accesses all the Dtag arrays 134 coupled to the Snoopbus 140 to determine if any of the Dtag arrays 134 store a valid entry for a specified address. Each of the Dtag arrays 134 outputs a 2-bit status value as well as a hit/no-hit bit in response to each snoop operation. The 2-bit status value output by a Dtag array represents the state of a Dtag only if the hit/no-hit bit indicates that a matching entry was found in the Dtag array 134. A "hit" is detected in a Dtag array if the "hit" bit is True and the two bit Dtag state value is not equal to 00.

Depending on the specific transaction request being made, if a "hit" is detected in any of the Dtag arrays 134, data may be sourced from the cache memory of one of the master UPA ports, the cache entries in some or all of the cache memories which store data for the specified address may be invalidated, or the tag status of one or more the cache entries in the Dtag and Etag arrays may be updated in another manner, as will be described below.

For a coherent read transaction, if the snoop determines that the data is coming from memory because (A) there were no hits for the specified address in the Dtag arrays 134 (222), or (B) all Dtags corresponding to the cache hits are in the unmodified S (Shared Clean) state and the transaction is not a read to own (RDO) transaction (223), then a datapath through the interconnect module 112 from the main memory to the requesting UPA port is set up by the System Controller 110 (224). The System Controller 110 sends a S_REPLY message to the requesting UPA port (226) when it is time for the requesting UPA port to receive the data block corresponding to the specified address (228).

A coherent read from another UPA port‘s cache is required when there is a cache hit (222) for a cache memory in a data processor other than the requesting processor, and either (A) the Dtag for the non-requesting data processor is in the O or M state, indicated a modified data block, or (B) the read transaction is a read to own (P_RDO_REQ) transaction (223).

When System Controller determines (222, 223) that a data block must be sourced by another UPA port‘s cache, the System Controller 110 sends a copyback S_REQ to the slave interface of the sourcing UPA port, and aborts the memory cycle (240). In systems having more than two data processors, the System Controller also sends invalidate transaction requests (S_INV_REQ) to all caching UPA master ports for which a cache hit was detected, other than the sourcing UPA port (240).

When the data is ready, the slave UPA port issues a P_REPLY to the System Controller 110 (242). System Controller 110 then sends control signals to the interconnect module 112 to form a datapath from the sourcing UPA port to the requesting UPA port (244). The System Controller 112 also sends an S_REPLY to the sourcing UPA slave interface to drive the requested data on its UPA data bus, and sends an S_REPLY to the requesting UPA master port (246) to prompt it to receive the data from its UPA data bus (228).

In a typical coherent write (P_WRI_REQ) transaction, invalidate requests are sent by the System Controller to the cache memories storing the same data block as the one being written to main memory (218), and an S_REPLY message is issued to the requesting UPA port by the System Controller (230) to cause it to source the data for the write operation (232) after a datapath through the interconnect module 112 from the requesting UPA port to the main memory is set up by the System Controller 110 and the main memory has been primed to write the data (220).

Writeback (P_WRB_REQ) transactions are handled differently than other coherent write transactions. When the results of the Dtag lookup (snoop) for the transaction are received, if the Dtag corresponding for the specified address in the writeback transaction request is invalid (250), that means another data processor has performed a transaction that required invalidation of the addressed data block. When this happens, the writeback transaction is cancelled by the System Controller by sending a writeback cancel (S_WBCAN) reply message back to the requesting UPA port (251), which causes the requesting UPA port to invalidate the contents of its writeback buffer 280 (see FIG. 9).

If the writeback transaction is not cancelled (250), the System Controller sets up a datapath from the requesting UPA port to main memory (252) and sends a write block acknowledgment message (S_WAB) to the requesting data process (253), which instructs the requesting data processor to source the data block to main memory (253, 254).

Referring to FIG. 8D, in most cases the Dtag update operation (216) stores new Dtag values in the same Dtag entries that are read during the Dtag lookup operation. However, there are two exceptions concerning read/writeback transaction pairs.

When executing the Dtag update operation for a writeback transaction, if the Dtag transient buffer Dtag TB for the requesting processor is currently storing a valid value (255), that means the read transaction paired with the current writeback transaction has been completed (i.e., it completed before the writeback transaction). In that case, the Dtag update operation (256) performed at the end of the writeback transaction transfers the contents of the DtagTB into the Dtag corresponding to the address in the writeback request. If the DtagTB is not currently storing a valid value (255), that means the paired read transaction has not yet completed, in which case the Dtag update operation for the writeback transaction invalidates the Dtag corresponding to the address in the writeback request (257).

Note that if the DtagTB valid bit is set to True when a writeback transaction is canceled, the Dtag update operation still copies the contents of the DtagTB into the associated cache line‘s Dtag. The Dtags for all other data processors are neither inspected nor changed by the writeback transaction.

When executing the Dtag update operation for a read transaction, if the DVP (dirty victim pending) bit is set to "1" and the lookup of the Dtags for the requesting processor indicates that the corresponding writeback transaction is still pending (i.e., the Dtag state for the address data block is not equal to I) (258), the new Dtag state for the addressed data block is stored in the Dtag transient buffer (DtagTB) awaiting the writeback (259a). Otherwise (i.e., if the Dtag state for the addressed data block is equal to I), the writeback transaction has preceded the read transaction and the new Dtag value generated by the transaction is directly written into the Dtag for the addressed data block (259b).

There is no Dtag update operation for ReadToDiscard transactions since ReadToDiscard operations do not affect the contents of the cache memory of any UPA module. Similarly, the DVP bit should not be set in ReadToDiscard transactions because ReadToDiscard transactions do not displace data blocks in cache memory.

Referring to FIGS. 8A and 8B, a typical non-cached slave read sequence from another UPA Port is as follows. The UPA master port issues a read request (P_REQ) on its UPA address bus to the System Controller 110 (210, 212). After decoding the address and determining that the address in not in the coherent domain (214), the System Controller 110 forwards the P_REQ to the slave interface of the target (addressed) UPA port on the target UPA‘s UPA address bus (after arbitrating for it) (260). When the requested data is ready for transmission, the target UPA port issues a P_REPLY to the System Controller 110 (261). The System Controller 110 sets up a datapath in the interconnect module from the target UPA port to the requesting UPA port (262), issues an S_REPLY to the target UPA port to drive the requested data on its UPA data bus, and issues an S_REPLY to the requesting UPA master port (263) to receive the data from its UPA data bus (264).

A typical non-cached slave write sequence to another UPA port is as follows. The UPA master port issues a write request (P_REQ) on its UPA address bus to the System Controller 110 (210, 212). After decoding the address and determining that the address is not in the coherent domain (214), the System Controller 110 forwards the P_REQ to the addressed UPA port on that port‘s UPA address bus (after arbitrating for it) (250). The System Controller 110 sets up a datapath in the interconnect module 112 from the requesting UPA port to the target UPA port (266), issues an S_REPLY to the requesting master port to drive the data on its UPA data bus, and issues an S_REPLY to the target port to receive the data from its UPA data bus (267). The requesting master port considers the transaction completed when it receives the S_REPLY and has transferred the data. However, the target UPA port issues a P_REPLY when it finishes processing the sourced data (268), which is important for flow control purposes because the P_REPLY enables the System to decrement its PReqCnt count of outstanding requests in the target UPA port‘s slave input request and data queues.

Note that since the address and data paths are independent, and since the slave interface for every UPA port includes both request and data input queues, the request packet and the corresponding data may be forwarded in any order to the UPA port‘s slave interface, i.e., the data could be given to it before the address, and vice versa. When the data is delivered to the slave interface before the corresponding request packet, the delivered data simply sits in the slave interface‘s input data queue until the slave interface is ready to process it.

When the slave interface has drained the data and transaction request from its input queue, it issues a P_REPLY to the System Controller 110 indicating it is ready for another slave transaction. The System Controller 110 considers the transaction complete at this point.

Flow control is accomplished by ensuring that the sourcing device (A) always knows, in advance, the maximum size of the queue downstream and (B) keeps count of the remaining space in the latter queue. The maximum queue sizes are statically determined by initialization software at power-on from the UPA port ID registers 158 for all the UPA ports and from SC ID register 180 in the System Controller, and written into flow control registers for the queue immediately upstream. The flow control registers in the System Controller are (A) the queue size parameters PREQ_RQ and PINT_RDQ stored in the System Controller‘s copy 193 of the UPA Port ID register, and (B) the IntCnt, PReqCnt and SReqCnt counters 194, 195 and 196 in the SC Config Register 190. The other flow control registers in the system are registers 270, 272 in the master interfaces 150 of the UPA ports. In particular, referring to FIG. 5, each master interface 150 of each UPA port 104 includes two registers 270-0, 270-1 that indicate the size of the C0 and C1 master class request queues in the System Controller for that UPA port, and two counters 272-0, 272-1 that indicate the number of requests currently pending in each of the two master class request queues. The queue size values in the SC ID register SCIQ0, SCIQ1 (183, 184) for each UPA master port are copied into the size registers 270 of each corresponding UPA port master interface by the initialization software at power-on.

Flow control is described more completely in patent application Ser. No. 08/414,875, filed Mar. 31, 1995, which is hereby incorporated by reference.

There are no specific size restrictions or requirements for the data and request output queues, except that each such queue must be large enough to handle the maximum number of requests or data packets that the associated device may want to enqueue. Further, since the sizes of output data and request queues are not relevant to other devices in the system for flow control purposes, those size values are not denoted in the configuration registers accessed by the initialization software.

After the software initialization, the queue upstream does not issue more requests to the queue immediately downstream than what the latter queue has capacity for. An S_REPLY from System Controller 110 to the UPA port indicates to the UPA port that System Controller 110 has freed up space for one more request in the respective queue, and is ready for another master request for that queue. A P_REPLY from a UPA port to the System Controller 110 indicates to the System Controller 110 that the UPA slave port has freed up space for one more request in its respective queue, and is ready for another slave request.

An upstream device, such as a UPA port, can transmit in quick succession a series of transactions up to the maximum capacity of a downstream queue, without waiting for any replies, and then wait for at least one S_REPLY or P_REPLY before transmitting any additional requests to the downstream queue.

Flow control is pictorially depicted in FIG. 5. The System Controller 110 input request queues SCIQ0 and SCIQ1 are downstream from the UPA master request class queues C0 and C1 respectively (conversely, C0 and C1 are upstream). Similarly, all the queues in the UPA slave interface are downstream from the System Controller 110.

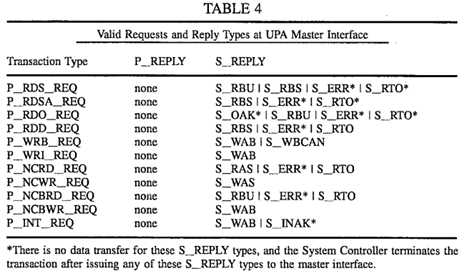

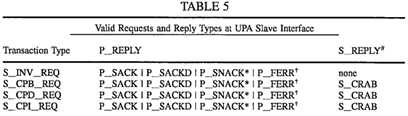

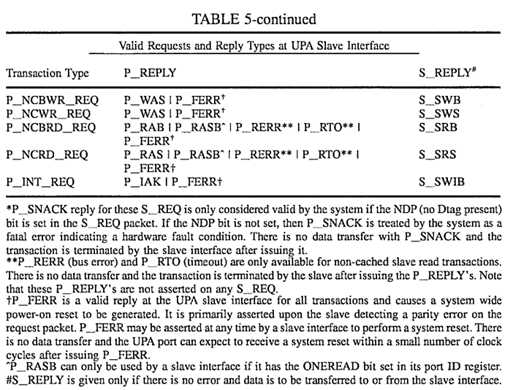

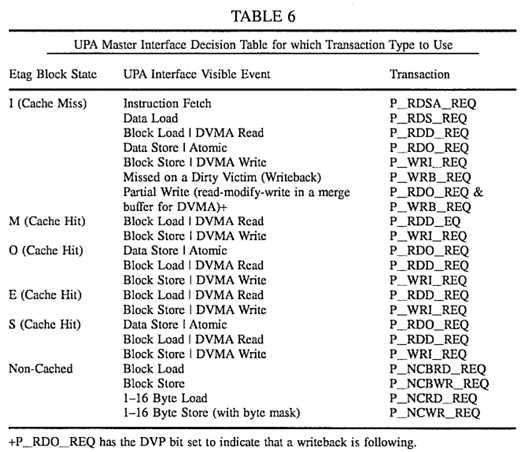

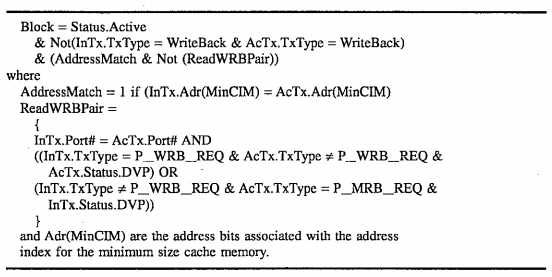

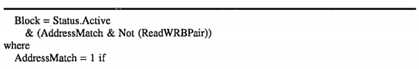

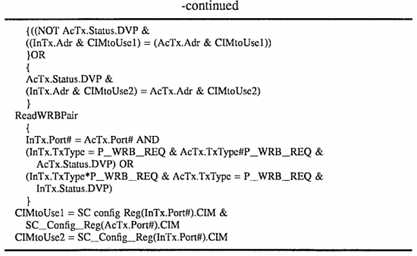

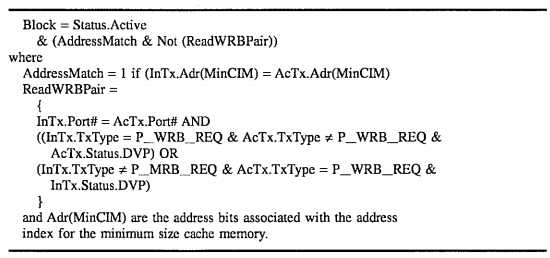

Cache Coherence Model Overview