Prometheus is an open-source monitoring system that has become the de facto standard for metrics monitoring in the cloud-native ecosystem.

Version 1: cAdvisor + InfluxDB + Grafana

Metrics could only be collected at the host level; there was no aggregation by Namespace, Pod, or similar dimensions.

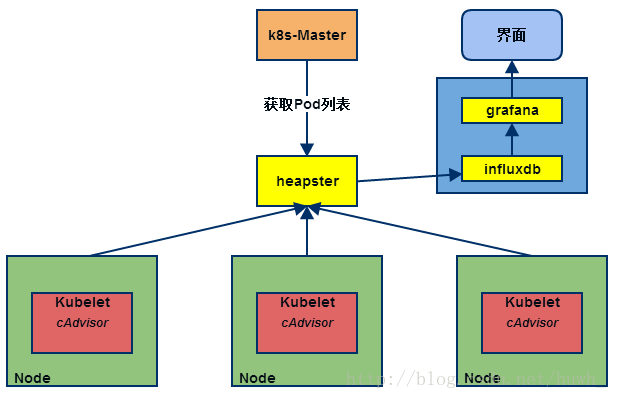

Version 2: Heapster + InfluxDB + Grafana

Heapster calls the cAdvisor interface on each node, aggregates the data, and exports it to InfluxDB. It can report detailed resource usage at the cluster, node, and pod levels.

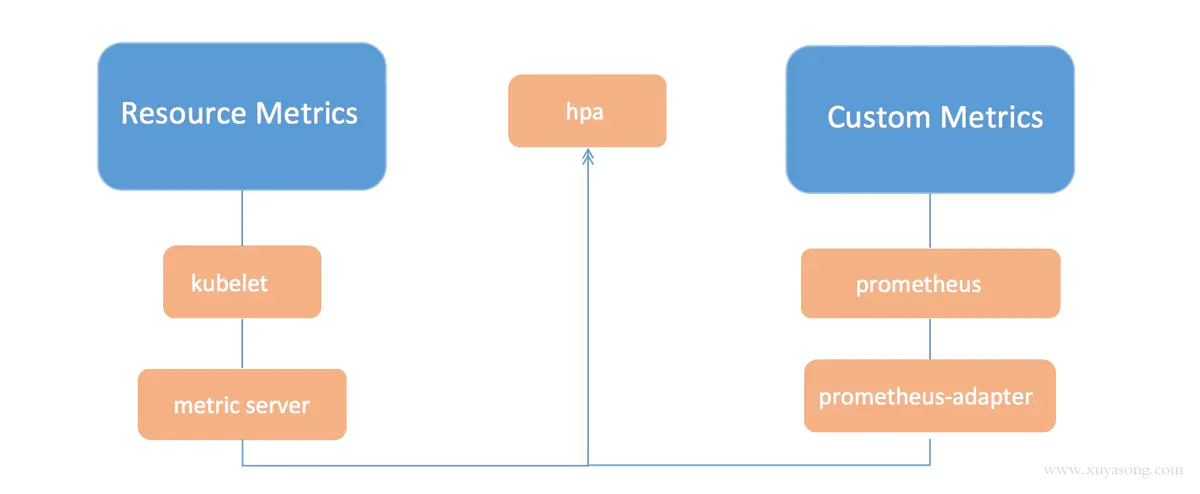

Version 3: Metrics-Server + Prometheus

Kubernetes standardized its monitoring interfaces into three categories:

Resource Metrics

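The corresponding API is metrics.k8s.io, and the main implementation is metrics-server. It provides resource monitoring, most commonly at the node, pod, namespace, and class levels. All of these metrics can be retrieved through the metrics.k8s.io API.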

Custom Metrics

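The corresponding API is custom.metrics.k8s.io, and the main implementation is Prometheus. It provides both resource monitoring and custom monitoring; its resource monitoring overlaps with the resource metrics described above.

Custom monitoring covers metrics that an application defines itself, for example the number of online users, or slow queries against the MySQL database behind the application. The application defines these at the application layer, exposes them through a standard Prometheus client, and Prometheus then scrapes them.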

External Metrics

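The corresponding API is external.metrics.k8s.io. The main implementations are the providers offered by cloud vendors, through which the metrics of cloud resources can be obtained.

Prometheus is written in Go: https://github.com/prometheus/prometheus

With Docker, starting the image is enough: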

$ docker run --name prometheus -d -p 127.0.0.1:9090:9090 prom/prometheus

To write the Kubernetes YAML for Prometheus, we can first start a container and look at its default startup command and configuration:

$ docker run -d --name tmp -p 127.0.0.1:9090:9090 prom/prometheus:v2.19.2

$ docker exec -ti tmp sh

#/ ps aux

#/ cat /etc/prometheus/prometheus.yml

global:
  scrape_interval: 15s # Set the scrape interval to every 15 seconds. Default is every 1 minute.
  evaluation_interval: 15s # Evaluate rules every 15 seconds. The default is every 1 minute.
  # scrape_timeout is set to the global default (10s).

# Alertmanager configuration
alerting:
  alertmanagers:
  - static_configs:
    - targets:
      # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  # - "first_rules.yml"
  # - "second_rules.yml"

# A scrape configuration containing exactly one endpoint to scrape:
# Here it's Prometheus itself.
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: 'prometheus'

    # metrics_path defaults to '/metrics'
    # scheme defaults to 'http'.

    static_configs:
    - targets: ['localhost:9090']

In this example we deploy on Kubernetes. The required manifests are as follows:

# Create a new namespace, monitor, to hold the Prometheus resources
$ cat prometheus-namespace.yaml
apiVersion: v1
kind: Namespace
metadata:
  name: monitor

# Prometheus needs a configuration file, so store it in a ConfigMap
$ cat prometheus-configmap.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitor
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      evaluation_interval: 15s
    scrape_configs:
    - job_name: 'prometheus'
      static_configs:
      - targets: ['localhost:9090']

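# The Prometheus Deployment manifest

# Prometheus runs its process as the nobody user, but the mounted data directory is owned by root, which causes a storage permission problem. An initContainer is therefore used to fix the directory ownership: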

$ cat prometheus-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: prometheus

namespace: monitor

labels:

app: prometheus

spec:

selector:

matchLabels:

app: prometheus

template:

metadata:

labels:

app: prometheus

spec:

serviceAccountName: prometheus

nodeSelector:

app: prometheus

initContainers:

- name: "change-permission-of-directory"

image: busybox

command: ["/bin/sh"]

args: ["-c", "chown -R 65534:65534 /prometheus"]

securityContext:

privileged: true

volumeMounts:

- mountPath: "/etc/prometheus"

name: config-volume

- mountPath: "/prometheus"

name: data

containers:

- image: prom/prometheus:v2.19.2

name: prometheus

args:

- "--config.file=/etc/prometheus/prometheus.yml"

- "--storage.tsdb.path=/prometheus" # path for the TSDB data

- "--web.enable-lifecycle" # enable hot reload: an HTTP POST to localhost:9090/-/reload takes effect immediately

- "--web.console.libraries=/usr/share/prometheus/console_libraries"

- "--web.console.templates=/usr/share/prometheus/consoles"

ports:

- containerPort: 9090

name: http

volumeMounts:

- mountPath: "/etc/prometheus"

name: config-volume

- mountPath: "/prometheus"

name: data

resources:

requests:

cpu: 100m

memory: 512Mi

limits:

cpu: 100m

memory: 512Mi

volumes:

- name: data

hostPath:

path: /data/prometheus/

- configMap:

name: prometheus-config

name: config-volume

# RBAC: Prometheus calls the Kubernetes API for service discovery when scraping metrics

$ cat prometheus-rbac.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

name: prometheus

namespace: monitor

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: prometheus

rules:

- apiGroups:

- ""

resources:

- nodes

- services

- endpoints

- pods

- nodes/proxy

verbs:

- get

- list

- watch

- apiGroups:

- "extensions"

resources:

- ingresses

verbs:

- get

- list

- watch

- apiGroups:

- ""

resources:

- configmaps

- nodes/metrics

verbs:

- get

- nonResourceURLs:

- /metrics

verbs:

- get

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: prometheus

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: prometheus

subjects:

- kind: ServiceAccount

name: prometheus

namespace: monitor

# Service, used by the Ingress

$ cat prometheus-svc.yaml

apiVersion: v1

kind: Service

metadata:

name: prometheus

namespace: monitor

labels:

app: prometheus

spec:

selector:

app: prometheus

type: ClusterIP

ports:

- name: web

port: 9090

targetPort: http

$ cat prometheus-ingress.yaml

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: prometheus

namespace: monitor

spec:

rules:

- host: prometheus.luffy.com

http:

paths:

- path: /

backend:

serviceName: prometheus

servicePort: 9090

Deploy the resources above:

# Namespace
$ kubectl create -f prometheus-namespace.yaml

# Label the node
$ kubectl label node k8s-slave1 app=prometheus

# ConfigMap
$ kubectl create -f prometheus-configmap.yaml

# RBAC
$ kubectl create -f prometheus-rbac.yaml

# Deployment
$ kubectl create -f prometheus-deployment.yaml

# Service
$ kubectl create -f prometheus-svc.yaml

# Ingress
$ kubectl create -f prometheus-ingress.yaml

# Access test

$ kubectl -n monitor get ingress

# http://localhost:9090/metrics

$ kubectl -n monitor get po -o wide

prometheus-dcb499cbf-fxttx 1/1 Running 0 13h 10.244.1.132 k8s-slave1

$ curl http://10.244.1.132:9090/metrics

...

# HELP promhttp_metric_handler_requests_total Total number of scrapes by HTTP status code.

# TYPE promhttp_metric_handler_requests_total counter

promhttp_metric_handler_requests_total{code="200"} 149

promhttp_metric_handler_requests_total{code="500"} 0

promhttp_metric_handler_requests_total{code="503"} 0

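TSDB (Time Series Database)

The two lines that start with # are:

- # HELP: a description of what the metric means
- # TYPE: the type of the metric (counter in this example)

Each line that does not start with # is one collected sample: the metric name with its labels, followed by the sample value.

Prometheus stores every scrape as time series, first in memory and then flushed to disk periodically. As shown below, a time series can be thought of as a numeric matrix with time on the X axis: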

^

│ . . . . . . . . . . . . . . . . . . . node_cpu{cpu="cpu0",mode="idle"}

│ . . . . . . . . . . . . . . . . . . . node_cpu{cpu="cpu0",mode="system"}

│ . . . . . . . . . . . . . . . . . . node_load1{}

│ . . . . . . . . . . . . . . . . . .

v

<------------------ time ---------------->

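Each point in a time series is called a sample. A sample consists of three parts:

- the metric: the metric name plus the label set that describes the sample
- the timestamp: a timestamp with millisecond precision
- the value: a float64 value

Formally, every metric is written in the following format: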

<metric name>{<label name>=<label value>, ...}

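The metric name reflects what is being measured (for example, http_request_total is the total number of HTTP requests the system has received).

Prometheus periodically pulls monitoring data from its list of targets, stores it in the TSDB, and provides a query language and APIs for querying and analyzing the metrics.

Whether it is a business application or a Kubernetes system component, all it has to do is expose a metrics API whose response follows the standard Prometheus data format.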
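To make the sample format concrete, here is a minimal Python sketch that splits one exposition line into metric name, labels, and value. The function name `parse_sample` and the regular expressions are illustrative assumptions, not part of any Prometheus client library, and the sketch ignores optional timestamps and escaped characters in label values.

```python
import re

# Hypothetical helper for illustration only: handles lines of the form
#   <metric name>{<label name>="<label value>", ...} <value>
SAMPLE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>.*)\})?\s+(?P<value>\S+)$'
)
LABEL_RE = re.compile(r'(\w+)="([^"]*)"')


def parse_sample(line):
    """Return (metric_name, labels_dict, value) for one non-comment line."""
    match = SAMPLE_RE.match(line.strip())
    if match is None:
        raise ValueError("not a sample line: %r" % line)
    labels = dict(LABEL_RE.findall(match.group("labels") or ""))
    return match.group("name"), labels, float(match.group("value"))


name, labels, value = parse_sample(
    'promhttp_metric_handler_requests_total{code="200"} 149'
)
print(name, labels, value)
```

Lines that start with # (HELP and TYPE) are metadata and would be skipped before calling this helper.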

In fact, many components have already added a /metrics API so that Prometheus can scrape them, for example

CoreDNS:

$ kubectl -n kube-system get po -owide|grep coredns

coredns-58cc8c89f4-nshx2 1/1 Running 6 22d 10.244.0.20

coredns-58cc8c89f4-t9h2r 1/1 Running 7 22d 10.244.0.21

$ curl 10.244.0.20:9153/metrics

Update the target configuration:

$ cat prometheus-configmap.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitor
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      scrape_timeout: 15s
    scrape_configs:
    - job_name: 'prometheus'
      static_configs:
      - targets: ['localhost:9090']
    - job_name: 'coredns'
      static_configs:
      - targets: ['10.96.0.10:9153']

$ kubectl apply -f prometheus-configmap.yaml

# Recreate the pod for the change to take effect
$ kubectl -n monitor delete po prometheus-dcb499cbf-fxttx
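Because the Deployment starts Prometheus with --web.enable-lifecycle, recreating the pod is not the only option: the configuration can also be reloaded over HTTP. The sketch below is a hedged illustration in Python; the helper names and the default base URL are assumptions, and it presumes the updated ConfigMap has already been synced into the pod, which can take a short while after kubectl apply.

```python
import urllib.request


def build_reload_request(base_url="http://localhost:9090"):
    """Build the POST request for the Prometheus reload endpoint."""
    url = base_url.rstrip("/") + "/-/reload"
    # The lifecycle endpoint expects POST (or PUT); the body can be empty.
    return urllib.request.Request(url, data=b"", method="POST")


def reload_prometheus(base_url="http://localhost:9090", timeout=10):
    """Send the reload request and return the HTTP status code."""
    with urllib.request.urlopen(build_reload_request(base_url), timeout=timeout) as resp:
        return resp.status


if __name__ == "__main__":
    request = build_reload_request()
    print(request.get_method(), request.full_url)
```

Calling `reload_prometheus("http://10.244.1.132:9090")` against the pod IP shown earlier would trigger the reload; that address is specific to the example cluster.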

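When monitoring a cluster, we generally need to consider the following aspects:

The apiserver itself also exposes a /metrics API that provides monitoring data: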

$ kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 23d

$ curl -k -H "Authorization: Bearer eyJhbGciOiJSUzI1NiIsImtpZCI6InhXcmtaSG5ZODF1TVJ6dUcycnRLT2c4U3ZncVdoVjlLaVRxNG1wZ0pqVmcifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi10b2tlbi1xNXBueiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJhZG1pbiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6ImViZDg2ODZjLWZkYzAtNDRlZC04NmZlLTY5ZmE0ZTE1YjBmMCIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlcm5ldGVzLWRhc2hib2FyZDphZG1pbiJ9.iEIVMWg2mHPD88GQ2i4uc_60K4o17e39tN0VI_Q_s3TrRS8hmpi0pkEaN88igEKZm95Qf1qcN9J5W5eqOmcK2SN83Dd9dyGAGxuNAdEwi0i73weFHHsjDqokl9_4RGbHT5lRY46BbIGADIphcTeVbCggI6T_V9zBbtl8dcmsd-lD_6c6uC2INtPyIfz1FplynkjEVLapp_45aXZ9IMy76ljNSA8Uc061Uys6PD3IXsUD5JJfdm7lAt0F7rn9SdX1q10F2lIHYCMcCcfEpLr4Vkymxb4IU4RCR8BsMOPIO_yfRVeYZkG4gU2C47KwxpLsJRrTUcUXJktSEPdeYYXf9w" https://192.168.136.10:6443/metrics

To try monitoring the apiserver, manually configure the following job:

$ cat prometheus-configmap.yaml
...
    - job_name: 'kubernetes-apiserver'
      static_configs:
      - targets: ['10.96.0.1']
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        insecure_skip_verify: true
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

node_exporter https://github.com/prometheus/node_exporter

Analysis:

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: node-exporter

namespace: monitor

labels:

app: node-exporter

spec:

selector:

matchLabels:

app: node-exporter

template:

metadata:

labels:

app: node-exporter

spec:

hostPID: true

hostIPC: true

hostNetwork: true

nodeSelector:

kubernetes.io/os: linux

containers:

- name: node-exporter

image: prom/node-exporter:v1.0.1

args:

- --web.listen-address=$(HOSTIP):9100

- --path.procfs=/host/proc

- --path.sysfs=/host/sys

- --path.rootfs=/host/root

- --collector.filesystem.ignored-mount-points=^/(dev|proc|sys|var/lib/docker/.+)($|/)

- --collector.filesystem.ignored-fs-types=^(autofs|binfmt_misc|cgroup|configfs|debugfs|devpts|devtmpfs|fusectl|hugetlbfs|mqueue|overlay|proc|procfs|pstore|rpc_pipefs|securityfs|sysfs|tracefs)$

ports:

- containerPort: 9100

env:

- name: HOSTIP

valueFrom:

fieldRef:

fieldPath: status.hostIP

resources:

requests:

cpu: 150m

memory: 180Mi

limits:

cpu: 150m

memory: 180Mi

securityContext:

runAsNonRoot: true

runAsUser: 65534

volumeMounts:

- name: proc

mountPath: /host/proc

- name: sys

mountPath: /host/sys

- name: root

mountPath: /host/root

mountPropagation: HostToContainer

readOnly: true

tolerations:

- operator: "Exists"

volumes:

- name: proc

hostPath:

path: /proc

- name: dev

hostPath:

path: /dev

- name: sys

hostPath:

path: /sys

- name: root

hostPath:

path: /

Create the node-exporter service:

$ kubectl create -f node-exporter.yaml

$ kubectl -n monitor get po

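Now the question is: how do we add it to the Prometheus targets?

Prometheus was already given RBAC permission to read nodes, so it can call the Kubernetes API to get node information. Through its integration with the Kubernetes API, Prometheus offers built-in service discovery for the following roles: Node, Service, Pod, Endpoints, and Ingress.

Configuring a job is enough:

- job_name: 'kubernetes-sd-node-exporter'
  kubernetes_sd_configs:
  - role: node

Recreate the pod and check the result: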

$ kubectl apply -f prometheus-configmap.yaml

$ kubectl -n monitor delete po prometheus-dcb499cbf-6cwlg

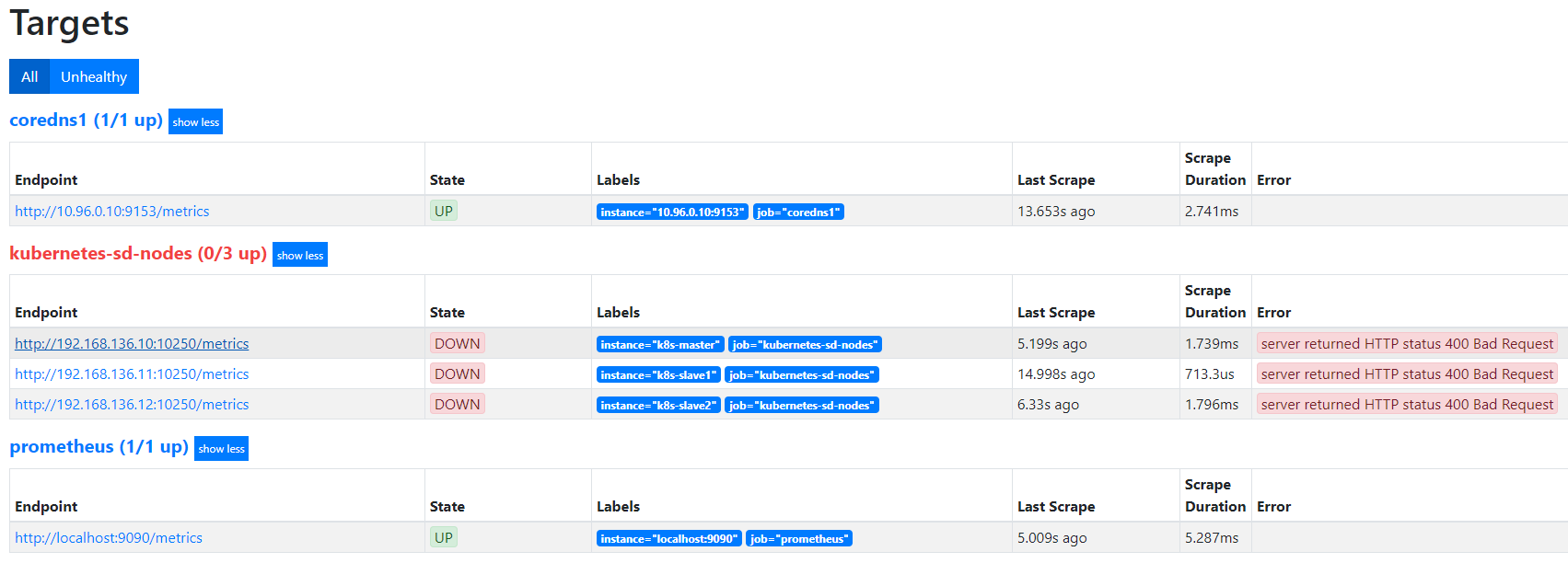

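By default the address scraped is http://node-ip:10250/metrics. 10250 is the port of the kubelet API, which shows that the node role of Prometheus service discovery is bound to the kubelet's port 10250 by default. We want node-exporter to be the source of the metrics instead, so the endpoint has to be replaced with http://node-ip:9100/metrics.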

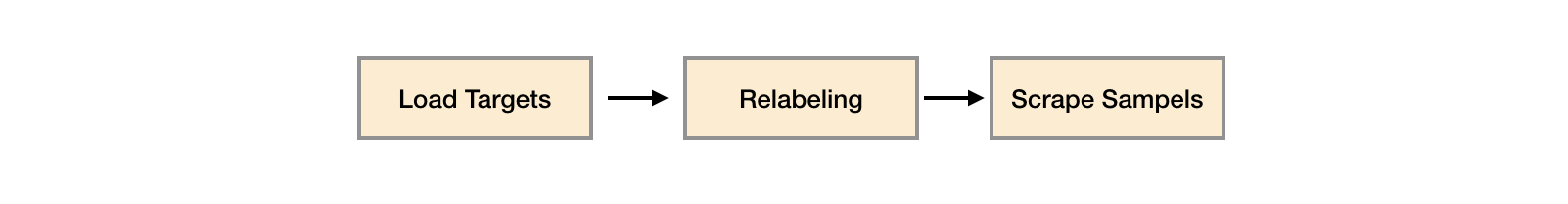

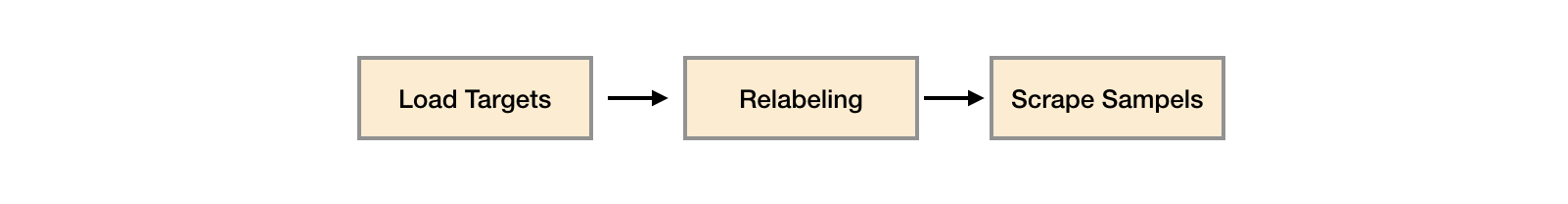

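Before it actually scrapes any data, Prometheus offers a relabeling step. What does that mean?

Look at the Labels column on the Targets page: each target has many labels under Before Relabeling. Labels that start with __ are for internal use and are not stored with the samples. When we look at the data, however, every sample carries two default labels, instance and job: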

prometheus_notifications_dropped_total{instance="localhost:9090",job="prometheus"}

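The value of instance is in fact taken from __address__.

This mechanism, which rewrites the labels of a target instance before its samples are scraped, is called relabeling in Prometheus.

So with relabeling, all we need to do is replace __address__ with the address of node_exporter.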

- job_name: 'kubernetes-sd-node-exporter'
  kubernetes_sd_configs:
  - role: node
  relabel_configs:
  - source_labels: [__address__]
    regex: '(.*):10250'
    replacement: '${1}:9100'
    target_label: __address__
    action: replace
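The effect of this replace rule can be simulated in a few lines of Python. This is a simplified sketch, not how Prometheus is implemented: the function `relabel_replace` is a hypothetical name, it only models the replace action, and it relies on the fact that Prometheus anchors relabel regexes at both ends, which `re.fullmatch` mimics.

```python
import re


def relabel_replace(labels, source_labels, regex, replacement, target_label, separator=";"):
    """Simulate a relabel rule with action: replace on one target's labels."""
    # The values of all source labels are joined with the separator first.
    value = separator.join(labels.get(name, "") for name in source_labels)
    match = re.fullmatch(regex, value)
    if match is None:
        # When the regex does not match, the labels stay unchanged.
        return dict(labels)
    # Translate Prometheus-style $1 / ${1} references to Python's \g<1>.
    template = re.sub(r"\$\{?(\d+)\}?", r"\\g<\1>", replacement)
    result = dict(labels)
    result[target_label] = match.expand(template)
    return result


target = {"__address__": "192.168.136.10:10250"}
rewritten = relabel_replace(target, ["__address__"], r"(.*):10250", "${1}:9100", "__address__")
print(rewritten["__address__"])
```

A target whose address does not end in :10250 is left as it is, which is why the rule is safe to apply to every discovered node.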

After updating Prometheus again, check the targets list and the metrics provided by node-exporter, such as node_load1.

The cAdvisor metrics are served at https://10.96.0.1/api/v1/nodes/<node_name>/proxy/metrics/cadvisor, for example:

https://10.96.0.1/api/v1/nodes/k8s-master/proxy/metrics/cadvisor

https://10.96.0.1/api/v1/nodes/k8s-slave1/proxy/metrics/cadvisor

https://10.96.0.1/api/v1/nodes/k8s-slave2/proxy/metrics/cadvisor

Analysis:

Start with the node role:

- job_name: 'kubernetes-sd-cadvisor'
  kubernetes_sd_configs:
  - role: node

The targets added by default (__address__ plus __metrics_path__) are:

http://192.168.136.10:10250/metrics

http://192.168.136.11:10250/metrics

http://192.168.136.12:10250/metrics

The address to scrape is the same for every target, so __address__ can be replaced with the fixed value 10.96.0.1:

- job_name: 'kubernetes-sd-cadvisor'
  kubernetes_sd_configs:
  - role: node
  relabel_configs:
  - target_label: __address__
    replacement: 10.96.0.1
    action: replace

After this replacement the targets look like:

http://10.96.0.1/metrics

http://10.96.0.1/metrics

http://10.96.0.1/metrics

Next, take the node name and use it to rewrite __metrics_path__ dynamically:

- job_name: 'kubernetes-sd-cadvisor'
  kubernetes_sd_configs:
  - role: node
  relabel_configs:
  - target_label: __address__
    replacement: 10.96.0.1
    action: replace
  - source_labels: [__meta_kubernetes_node_name]
    regex: (.+)
    target_label: __metrics_path__
    replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

After this replacement the targets look like:

http://10.96.0.1/api/v1/nodes/k8s-master/proxy/metrics/cadvisor

http://10.96.0.1/api/v1/nodes/k8s-slave1/proxy/metrics/cadvisor

http://10.96.0.1/api/v1/nodes/k8s-slave2/proxy/metrics/cadvisor

Finally, add the apiserver authentication settings:

- job_name: 'kubernetes-sd-cadvisor'
  kubernetes_sd_configs:
  - role: node
  scheme: https
  tls_config:
    ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
    insecure_skip_verify: true
  bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
  relabel_configs:
  - target_label: __address__
    replacement: 10.96.0.1
  - source_labels: [__meta_kubernetes_node_name]
    regex: (.+)
    target_label: __metrics_path__
    replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

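Re-apply the configuration and recreate the Prometheus pod. Then check the targets list and look at the cAdvisor metrics, for example container_cpu_system_seconds_total.

In summary, the node role makes it possible to discover DaemonSet-style services automatically and scrape their monitoring data.

Now suppose the cluster runs 100 business applications, and each of them needs to be monitored by Prometheus.

Does every service need its own manually added configuration? Is there a better way?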

- job_name: 'kubernetes-sd-endpoints'
  kubernetes_sd_configs:
  - role: endpoints

Add it to the Prometheus configuration and test:

$ kubectl apply -f prometheus-configmap.yaml

$ kubectl -n monitor delete po prometheus-dcb499cbf-4h9qj

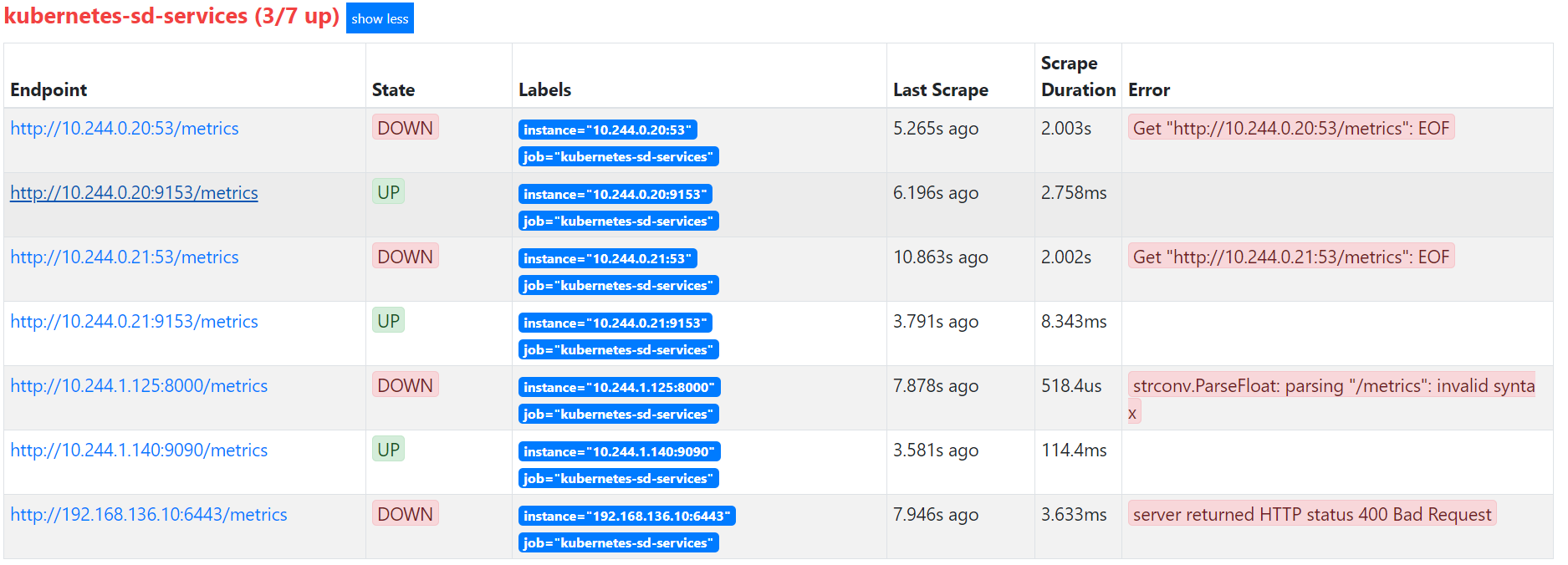

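At this point a large number of entries appear under kubernetes-sd-endpoints in the Targets list.

The endpoints role scrapes the Endpoints of every namespace in the cluster, using the default /metrics path. We can compare the result against the full list of endpoints in the cluster:

$ kubectl get endpoints --all-namespaces

In practice, though, not every service implements /metrics, and not every service that does needs to be registered with Prometheus. We therefore need a way to control which services are scraped. This is where the keep action of relabeling comes in.

Relabeling operates on the Before Relabeling labels of a target. Suppose we define the following: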

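- job_name: 'kubernetes-sd-endpoints'
  kubernetes_sd_configs:
  - role: endpoints
  relabel_configs:
  - source_labels: [__keep_this_service__]
    action: keep
    regex: "true"

With this rule, a target is added to the kubernetes-sd-endpoints job only if its Before Relabeling labels contain __keep_this_service__ with the value true; otherwise the target is dropped.

So all we need to do is attach the corresponding Prometheus label to the services we want scraped.

The question is: how do we add it?

Look at the Before Relabeling values of the coredns metrics target, and you will find Prometheus labels like these: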

__meta_kubernetes_service_annotation_prometheus_io_scrape="true"

__meta_kubernetes_service_annotation_prometheus_io_port="9153"

How are these generated? Look at the attributes of the Service that fronts coredns:

$ kubectl -n kube-system get service kube-dns -oyaml

apiVersion: v1

kind: Service

metadata:

annotations:

prometheus.io/port: "9153"

prometheus.io/scrape: "true"

creationTimestamp: "2020-06-28T17:05:35Z"

labels:

k8s-app: kube-dns

kubernetes.io/cluster-service: "true"

kubernetes.io/name: KubeDNS

name: kube-dns

namespace: kube-system

...

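The Service carries an annotations declaration, so the two are clearly related: special characters in the annotation names of a Service are converted to underscores in the corresponding Prometheus labels.

We can therefore use the following configuration to decide whether a service should be scraped:

- job_name: 'kubernetes-sd-endpoints'
  kubernetes_sd_configs:
  - role: endpoints
  relabel_configs:
  - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
    action: keep
    regex: true

With this in place, adding the following declaration to a Service is all it takes for Prometheus to scrape it automatically: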

annotations:

prometheus.io/scrape: "true"
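The mapping from annotation to label, and the filtering done by keep, can be sketched in Python as follows. Both helper names are hypothetical and the character rule is a simplification (anything other than letters, digits, and underscores becomes an underscore); the sketch only illustrates the idea described above.

```python
import re

PREFIX = "__meta_kubernetes_service_annotation_"


def annotation_to_meta_label(annotation_key):
    """Map a Service annotation key to its Before Relabeling label name."""
    return PREFIX + re.sub(r"[^a-zA-Z0-9_]", "_", annotation_key)


def keep(targets, source_label, regex):
    """Simulate action: keep, which drops targets whose label does not fully match."""
    return [t for t in targets if re.fullmatch(regex, t.get(source_label, ""))]


scrape_label = annotation_to_meta_label("prometheus.io/scrape")
targets = [
    {"__address__": "10.244.0.20:53", scrape_label: "true"},
    {"__address__": "10.244.1.7:8080"},  # hypothetical Service without the annotation
]
print(scrape_label)
print(keep(targets, scrape_label, "true"))
```

Only the annotated target survives, which is exactly the opt-in behaviour the annotation is meant to provide.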

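Sometimes the path where an application exposes its metrics is not /metrics. How do we handle that?

The same idea applies. Prometheus uses __metrics_path__ from the Before Relabeling labels as the scrape path by default, so we define another annotation, prometheus.io/path: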

annotations:

prometheus.io/scrape: "true"

prometheus.io/path: "/path/to/metrics"

Prometheus then automatically generates the following label:

__meta_kubernetes_service_annotation_prometheus_io_path="/path/to/metrics"

All that is left is to use the value of this label in relabel_configs to overwrite __metrics_path__:

- job_name: 'kubernetes-sd-endpoints'
  kubernetes_sd_configs:
  - role: endpoints
  relabel_configs:
  - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
    action: keep
    regex: true
  - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
    action: replace
    target_label: __metrics_path__
    regex: (.+)

Sometimes a service exposes its metrics on a separate port. coredns, for example, serves DNS on port 53 and metrics on port 9153. How do we handle this case?

Naturally, a custom annotation comes to mind:

annotations:

prometheus.io/scrape: "true"

prometheus.io/path: "/path/to/metrics"

prometheus.io/port: "9153"

How do we do the replacement?

By default Prometheus uses __address__ from the Before Relabeling labels as the scrape address, and that address usually looks like this:

__address__="10.244.0.20:53"

__address__="10.244.0.21"

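Our goal is to join the following two parts:

- the IP part of __address__
- the value of __meta_kubernetes_service_annotation_prometheus_io_port

So a regular expression is needed to extract them:

- source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
  action: replace
  target_label: __address__
  regex: ([^:]+)(?::\d+)?;(\d+)
  replacement: $1:$2

A few points to note:

- The :53 in __address__ may be absent, so that part is matched as an optional group with ()?
- Only the first and third groups are needed, not the middle one, so ?: makes it a non-capturing group: it takes part in the match but is not recorded in variables such as $1 and $2
- The values of multiple source_labels are joined with ;, so the regex has to include the ; as well

In addition, more of the commonly used fields under Before Relabeling can be copied onto the target's labels, for example: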

- source_labels: [__meta_kubernetes_namespace]
  action: replace
  target_label: kubernetes_namespace
- source_labels: [__meta_kubernetes_service_name]
  action: replace
  target_label: kubernetes_name
- source_labels: [__meta_kubernetes_pod_name]
  action: replace
  target_label: kubernetes_pod_name
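Going back to the address rewrite rule, the regex is easier to follow when it is run against both address forms. The following Python sketch is an illustration only; `rewrite_address` is a hypothetical helper that joins the two source labels with ; the way relabeling does and applies the same pattern.

```python
import re

# Same pattern as in the relabel rule: host, optional non-capturing :port,
# the ; separator, then the port taken from the annotation.
ADDRESS_RE = re.compile(r"([^:]+)(?::\d+)?;(\d+)")


def rewrite_address(address, annotation_port):
    """Return host:annotation_port, or the original address if nothing matches."""
    joined = address + ";" + annotation_port
    match = ADDRESS_RE.fullmatch(joined)
    if match is None:
        return address
    return match.group(1) + ":" + match.group(2)


print(rewrite_address("10.244.0.20:53", "9153"))
print(rewrite_address("10.244.0.21", "9153"))
```

Both forms of __address__ end up pointing at port 9153, and a target without the port annotation keeps its original address because the pattern does not match an empty port.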

The relabel configuration so far is therefore:

- job_name: 'kubernetes-sd-endpoints'
  kubernetes_sd_configs:
  - role: endpoints
  relabel_configs:
  - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
    action: keep
    regex: true
  - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
    action: replace
    target_label: __metrics_path__
    regex: (.+)
  - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
    action: replace
    target_label: __address__
    regex: ([^:]+)(?::\d+)?;(\d+)
    replacement: $1:$2
  - source_labels: [__meta_kubernetes_namespace]
    action: replace
    target_label: kubernetes_namespace
  - source_labels: [__meta_kubernetes_service_name]
    action: replace
    target_label: kubernetes_name
  - source_labels: [__meta_kubernetes_pod_name]
    action: replace
    target_label: kubernetes_pod_name

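To verify:

Update the ConfigMap, restart Prometheus, and check the targets list.

With cAdvisor in place, the runtime metrics of containers are already available, but it cannot help in cases such as the following:

That information is what kube-state-metrics provides. It is built on client-go, polls the Kubernetes API, and turns the structured information about Kubernetes objects into metrics. So kube-state-metrics is needed here.

The metric categories include:

Deployment: https://github.com/kubernetes/kube-state-metrics#kubernetes-deployment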

$ wget https://github.com/kubernetes/kube-state-metrics/archive/v1.9.7.tar.gz

$ tar zxf v1.9.7.tar.gz

$ cp -r kube-state-metrics-1.9.7/examples/standard/ .

$ ll standard/

total 20

-rw-r--r-- 1 root root 377 Jul 24 06:12 cluster-role-binding.yaml

-rw-r--r-- 1 root root 1651 Jul 24 06:12 cluster-role.yaml

-rw-r--r-- 1 root root 1069 Jul 24 06:12 deployment.yaml

-rw-r--r-- 1 root root 193 Jul 24 06:12 service-account.yaml

-rw-r--r-- 1 root root 406 Jul 24 06:12 service.yaml

# Change the namespace to monitor

$ sed -i 's/namespace: kube-system/namespace: monitor/g' standard/*

$ kubectl create -f standard/

clusterrolebinding.rbac.authorization.k8s.io/kube-state-metrics created

clusterrole.rbac.authorization.k8s.io/kube-state-metrics created

deployment.apps/kube-state-metrics created

serviceaccount/kube-state-metrics created

service/kube-state-metrics created

How do we add it to the Prometheus targets?

$ cat standard/service.yaml

apiVersion: v1

kind: Service

metadata:

annotations:

prometheus.io/scrape: "true"

prometheus.io/port: "8080"

labels:

app.kubernetes.io/name: kube-state-metrics

app.kubernetes.io/version: v1.9.7

name: kube-state-metrics

namespace: monitor

spec:

clusterIP: None

ports:

- name: http-metrics

port: 8080

targetPort: http-metrics

- name: telemetry

port: 8081

targetPort: telemetry

selector:

app.kubernetes.io/name: kube-state-metrics

$ kubectl apply -f standard/service.yaml

Check the targets list and confirm that a kube-state-metrics target is present, then query a metric such as:

kube_pod_container_status_running

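Grafana is a visualization dashboard: a full-featured metrics dashboard and graph editor that supports Graphite, Zabbix, InfluxDB, Prometheus, OpenTSDB, Elasticsearch, and others as data sources. It is far more capable and flexible than the charts built into Prometheus, and it has a rich set of plugins.

Points to note: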

$ cat grafana-all.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: grafana

namespace: monitor

spec:

selector:

matchLabels:

app: grafana

template:

metadata:

labels:

app: grafana

spec:

volumes:

- name: storage

hostPath:

path: /data/grafana/

nodeSelector:

app: prometheus

securityContext:

runAsUser: 0

containers:

- name: grafana

image: grafana/grafana:7.1.1

imagePullPolicy: IfNotPresent

ports:

- containerPort: 3000

name: grafana

env:

- name: GF_SECURITY_ADMIN_USER

value: admin

- name: GF_SECURITY_ADMIN_PASSWORD

value: admin

readinessProbe:

failureThreshold: 10

httpGet:

path: /api/health

port: 3000

scheme: HTTP

initialDelaySeconds: 60

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 30

livenessProbe:

failureThreshold: 3

httpGet:

path: /api/health

port: 3000

scheme: HTTP

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

resources:

limits:

cpu: 150m

memory: 512Mi

requests:

cpu: 150m

memory: 512Mi

volumeMounts:

- mountPath: /var/lib/grafana

name: storage

---

apiVersion: v1

kind: Service

metadata:

name: grafana

namespace: monitor

spec:

type: ClusterIP

ports:

- port: 3000

selector:

app: grafana

---

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: grafana

namespace: monitor

spec:

rules:

- host: grafana.luffy.com

http:

paths:

- path: /

backend:

serviceName: grafana

servicePort: 3000

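Configure the data source:

How to enrich the Grafana dashboards:

Dashboards: https://grafana.com/grafana/dashboards

Besides importing dashboards directly, we can also get them by installing plugins. Configuration -> Plugins lists the installed plugins, and the official plugin list offers many more.

Kubernetes-related plugins:

DevOpsProdigy KubeGraf is an excellent Grafana plugin for Kubernetes and an upgraded version of the official Grafana Kubernetes plugin. It visualizes and analyzes the performance of a Kubernetes cluster, shows the metrics and characteristics of the main cluster services in a variety of graphs, and can also be used to inspect application lifecycles and error logs.

# Install from inside the grafana container

$ kubectl -n monitor exec -ti grafana-594f447d6c-jmjsw -- bash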

bash-5.0# grafana-cli plugins install devopsprodigy-kubegraf-app 1.4.1

installing devopsprodigy-kubegraf-app @ 1.4.1

from: https://grafana.com/api/plugins/devopsprodigy-kubegraf-app/versions/1.4.1/download

into: /var/lib/grafana/plugins

✔ Installed devopsprodigy-kubegraf-app successfully

Restart grafana after installing plugins . <service grafana-server restart>

bash-5.0# grafana-cli plugins install grafana-piechart-panel

installing grafana-piechart-panel @ 1.5.0

from: https://grafana.com/api/plugins/grafana-piechart-panel/versions/1.5.0/download

into: /var/lib/grafana/plugins

✔ Installed grafana-piechart-panel successfully

Restart grafana after installing plugins . <service grafana-server restart>

# The plugins can also be installed from an offline package

# Recreate the pod for the plugins to take effect

$ kubectl -n monitor delete po grafana-594f447d6c-jmjsw

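Log in to Grafana, find the installed plugin under Configuration -> Plugins, open its detail page, and click [Enable] to enable it. Then click Set up your first k8s-cluster to create a new Kubernetes cluster:

Name: luffy-k8s

Access: use the default, Server (default)

Skip TLS Verify: checked, to skip certificate validation

Auth: check TLS Client Auth and With CA Cert. Three certificate fields then appear below. Their contents all come from ~/.kube/config and must be base64-decoded first:

- the content of certificate-authority-data
- the content of client-certificate-data
- the content of client-key-data

Third-party dashboards cover most general monitoring needs. For the metrics that business applications implement themselves, the dashboards have to be created by hand.

Debugging a panel: enter a metric directly to query the data.

For example, enter node_load1 to see the 1-minute load average of the cluster nodes. Saving it creates a panel.

How do we filter by a field so that the panels respond to a selection?

For example, to filter by cluster node name:

Settings -> Variables -> Add Variable, and add a variable named node:

- Preview of values shows the values currently available for the variable
- the regex /.*node=\"(.+?)\".*/
- On Dashboard Load

Then modify the Metrics expression. $node must match the variable name; it reads the currently selected node name automatically: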

node_load1{instance=~"$node"}

Add another panel with the following expression:

100-avg(irate(node_cpu_seconds_total{mode="idle",instance=~"$node"}[5m])) by (instance)*100

How samples are laid out in the TSDB:

^

│ . . . . . . . . . . . . . . . . . . . node_cpu{cpu="cpu0",mode="idle"}

│ . . . . . . . . . . . . . . . . . . . node_cpu{cpu="cpu0",mode="system"}

│ . . . . . . . . . . . . . . . . . . node_load1{}

│ . . . . . . . . . . . . . . . . . . node_cpu_seconds_total{...}

v

<------------------ time ---------------->

Gauge type:

$ kubectl -n monitor get po -o wide |grep k8s-master

node-exporter-ld6sq 1/1 Running 0 4d3h 192.168.136.10 k8s-master

$ curl -s 192.168.136.10:9100/metrics |grep node_load1

# HELP node_load1 1m load average.

# TYPE node_load1 gauge

node_load1 0.18

# HELP node_load15 15m load average.

# TYPE node_load15 gauge

node_load15 0.37

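Gauge metrics focus on the current state of the system.

Gauge data usually has a fairly direct meaning when queried as is, for example:

We also apply simple processing to this kind of data, for example:

This is what PromQL provides: aggregation, arithmetic, and other processing of the collected data.

PromQL (Prometheus Query Language) is Prometheus's own powerful query language. Besides using metric names as query keywords, it has many built-in functions that help users process time-series data further.

For example:

Show the load average of the k8s-master node only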

node_load1{instance="k8s-master"}

Show the load average of every node except k8s-master

node_load1{instance!="k8s-master"}

Regular expression matching

node_load1{instance=~"k8s-master|k8s-slave1"}

Memory usage ratio of each node in the cluster

(node_memory_MemTotal_bytes - node_memory_MemFree_bytes) / node_memory_MemTotal_bytes

Counter type:

$ curl -s 192.168.136.10:9100/metrics |grep node_cpu_seconds_total

# HELP node_cpu_seconds_total Seconds the cpus spent in each mode.

# TYPE node_cpu_seconds_total counter

node_cpu_seconds_total{cpu="0",mode="idle"} 294341.02

node_cpu_seconds_total{cpu="0",mode="iowait"} 120.78

node_cpu_seconds_total{cpu="0",mode="irq"} 0

node_cpu_seconds_total{cpu="0",mode="nice"} 0.13

node_cpu_seconds_total{cpu="0",mode="softirq"} 1263.29

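Counter metrics work like a counter: they only increase and never decrease (unless the system is reset). Common metrics such as http_requests_total and node_cpu_seconds_total are counters.

Counter metric names usually end with _total. We explain how counters are used by working through the PromQL expression for CPU utilization.

Average CPU utilization of each node: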

(1- sum(increase(node_cpu_seconds_total{mode="idle"}[2m])) by (instance) / sum(increase(node_cpu_seconds_total{}[2m])) by (instance)) * 100

Analysis:

node_cpu_seconds_total counts the total CPU time allocated since the system started, in seconds. It is a cumulative value. For example, query the metric directly:

node_cpu_seconds_total

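# Shows the cumulative time for every node, every CPU core, and every mode

The mode values match what the top command shows for the CPU on a Linux system:

The modes are:

user (us), system (sy), steal (st), softirq (si), irq (hi), nice (ni), iowait (wa), idle (id)

The per-core CPU time totals we get from the metric are all instantaneous values. How do we turn them into CPU utilization?

First consider how CPU utilization is calculated. Suppose the k8s-master node has a 4-core CPU, and consider the following scenario:

So all we need to do is use PromQL to extract the values from that process: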

# Select the idle time at the current instant
node_cpu_seconds_total{mode="idle"}

# Use [1m] to select the samples within a 1-minute range. The range must be longer than the Prometheus scrape interval; all samples inside the range are returned
node_cpu_seconds_total{mode="idle"}[1m]

# Use increase to get the growth over the range, that is, how much idle time was added in 1 minute
increase(node_cpu_seconds_total{mode="idle"}[1m])

# There are several CPU cores, so add them up with sum
sum(increase(node_cpu_seconds_total{mode="idle"}[1m]))

# There are several machines, so group the sum by instance with the by keyword. This gives the idle time added in 1 minute across all CPU cores of each node, the "20+30+50+40=140s" of the earlier example
sum(increase(node_cpu_seconds_total{mode="idle"}[1m])) by (instance)

# Drop the mode=idle filter to get the CPU time added in 1 minute across all modes, that is, 4*60=240s
sum(increase(node_cpu_seconds_total{}[1m])) by (instance)

# The final expression
(1- sum(increase(node_cpu_seconds_total{mode="idle"}[1m])) by (instance) / sum(increase(node_cpu_seconds_total{}[1m])) by (instance)) * 100
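The arithmetic behind the final expression can be checked with the numbers used in the comments: four cores whose idle time grew by 20, 30, 50, and 40 seconds within a 60-second window. The Python below is only a worked calculation; the function name is an assumption and it is not a PromQL evaluator.

```python
def cpu_usage_percent(idle_increase_per_core, window_seconds):
    """Compute (1 - idle / total) * 100 for one node.

    idle_increase_per_core: idle seconds gained by each core in the window,
    the per-core result of increase(...{mode="idle"}[1m]).
    """
    idle = sum(idle_increase_per_core)                    # 20+30+50+40 = 140s
    total = len(idle_increase_per_core) * window_seconds  # 4*60 = 240s
    return (1 - idle / total) * 100


usage = cpu_usage_percent([20, 30, 50, 40], 60)
print(round(usage, 2))
```

With these inputs the node was busy for 100 of the 240 available CPU-seconds, which is roughly 41.67%.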

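Besides increase, the irate and rate functions are also used frequently:

irate() computes the per-second rate of increase of a time series within a range, based on the last two data points. For example:

# Idle CPU time added on the k8s-master node within 1 minute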

increase(node_cpu_seconds_total{instance="k8s-master",mode="idle"}[1m])

{cpu="0",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 56.5

{cpu="1",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 56.04

{cpu="2",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 56.6

{cpu="3",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 56.5

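# Taking the first series as an example: during the past minute, the first CPU core of k8s-master spent 56.5 seconds in the idle state

# Per-second rate of idle CPU time on the k8s-master node within 1 minute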

irate(node_cpu_seconds_total{instance="k8s-master",mode="idle"}[1m])

{cpu="0",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 0.934

{cpu="1",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 0.932

{cpu="2",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 0.933

{cpu="3",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 0.936

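# How is this value calculated?

# irate takes the last two samples in the range, computes their difference, and divides it by the time between the two samples. A range of 2m or 5m therefore gives the same value, because only the last two samples are used.

# Taking the first series as an example: between the last two samples, the CPU spent on average 0.934 seconds of every second in the idle state

# rate uses the first and the last sample within the 1m range; the calculation is otherwise the same as for irate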

rate(node_cpu_seconds_total{instance="k8s-master",mode="idle"}[1m])

{cpu="0",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 0.933

{cpu="1",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 0.940

{cpu="2",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 0.935

{cpu="3",instance="k8s-master",job="kubernetes-sd-node-exporter",mode="idle"} 0.937

The rate values are therefore smoother, because they are an average over the whole range, which makes rate the better fit for alerting.
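The difference between the two functions can be illustrated with a simplified Python model. It follows the description above (first and last sample for rate, last two samples for irate) and deliberately leaves out details of the real implementation such as extrapolation to the range boundaries and counter-reset handling. The sample values are made up for the example.

```python
def simple_rate(samples):
    """Average per-second increase between the first and last sample."""
    (t_first, v_first), (t_last, v_last) = samples[0], samples[-1]
    return (v_last - v_first) / (t_last - t_first)


def simple_irate(samples):
    """Per-second increase between the last two samples only."""
    (t_prev, v_prev), (t_last, v_last) = samples[-2], samples[-1]
    return (v_last - v_prev) / (t_last - t_prev)


# Hypothetical (timestamp_seconds, counter_value) pairs scraped every 15s.
samples = [(0, 100.0), (15, 114.0), (30, 128.2), (45, 142.0), (60, 156.1)]

print(round(simple_rate(samples), 3))
print(round(simple_irate(samples), 3))
```

Changing only the last sample moves the irate result much more than the rate result, which is the smoothing effect mentioned above.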

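Before we start, let's review the common ways of mounting a ConfigMap.

Suppose an application has a configuration file named application-1.conf, and we want to mount it into the pod's /etc/application/ directory.

The content of application-1.conf is: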

$ cat application-1.conf

name: "application"

platform: "linux"

purpose: "demo"

company: "luffy"

version: "v2.1.0"

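In Kubernetes this configuration file can be managed with a ConfigMap. There are usually two ways to do so:

Generate the ConfigMap with kubectl:

# Create it directly from the file
$ kubectl -n default create configmap application-config --from-file=application-1.conf

# View the generated ConfigMap; its key is the file name
$ kubectl -n default get cm application-config -oyaml

Create it directly from a YAML file: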

$ cat application-config.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: application-config

namespace: default

data:

application-1.conf: |

name: "application"

platform: "linux"

purpose: "demo"

company: "luffy"

version: "v2.1.0"

# Create the ConfigMap

$ kubectl create -f application-config.yaml

Prepare a demo-deployment.yaml file that mounts the ConfigMap above at /etc/application/:

$ cat demo-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: demo

namespace: default

spec:

selector:

matchLabels:

app: demo

template:

metadata:

labels:

app: demo

spec:

volumes:

- configMap:

name: application-config

name: config

containers:

- name: nginx

image: nginx:alpine

imagePullPolicy: IfNotPresent

volumeMounts:

- mountPath: "/etc/application"

name: config

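Create it and check:

$ kubectl create -f demo-deployment.yaml

Edit the content of the ConfigMap and observe whether the pod picks up the change automatically:

$ kubectl edit cm application-config

When the whole ConfigMap is mounted directly into the pod, changes to the ConfigMap are detected automatically and synced into the pod.

The process inside the pod does not restart automatically, however, so many services implement an internal reload endpoint that loads the latest configuration file into the process.

Now suppose several configuration files all need to be mounted into the pod, and all in the same directory: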

$ cat application-1.conf

name: "application-1"

platform: "linux"

purpose: "demo"

company: "luffy"

version: "v2.1.0"

$ cat application-2.conf

name: "application-2"

platform: "linux"

purpose: "demo"

company: "luffy"

version: "v2.1.0"

The ConfigMap can again be created in either of the two ways:

$ kubectl delete cm application-config

$ kubectl create cm application-config --from-file=application-1.conf --from-file=application-2.conf

$ kubectl get cm application-config -oyaml

The pod has picked up the latest change automatically:

$ kubectl exec demo-55c649865b-gpkgk -- ls /etc/application/

application-1.conf

application-2.conf

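So far the ConfigMap is mounted on /etc/application, an empty directory in the pod. Suppose we want to mount it on a directory that already exists in the pod, for example:

$ kubectl exec demo-55c649865b-gpkgk -- ls /etc/profile.d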

color_prompt

locale

Change the mount path in the Deployment:

$ cat demo-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: demo

namespace: default

spec:

selector:

matchLabels:

app: demo

template:

metadata:

labels:

app: demo

spec:

volumes:

- configMap:

name: application-config

name: config

containers:

- name: nginx

image: nginx:alpine

imagePullPolicy: IfNotPresent

volumeMounts:

- mountPath: "/etc/profile.d"

name: config

重建pod

$ kubectl apply -f demo-deployment.yaml

# List /etc/profile.d in the pod: the files that were there before have been hidden by the mount

$ kubectl exec demo-77d685b9f7-68qz7 ls /etc/profile.d

application-1.conf

application-2.conf

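Multiple configuration files can also be mounted into different directories in the pod. For example:

application-1.conf is mounted at /etc/application/

application-2.conf is mounted at /etc/profile.d

The ConfigMap stays unchanged; only the deployment file is modified: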

$ cat demo-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: demo

namespace: default

spec:

selector:

matchLabels:

app: demo

template:

metadata:

labels:

app: demo

spec:

volumes:

- name: config

configMap:

name: application-config

items:

- key: application-1.conf

path: application1

- key: application-2.conf

path: application2

containers:

- name: nginx

image: nginx:alpine

imagePullPolicy: IfNotPresent

volumeMounts:

- mountPath: "/etc/application/application-1.conf"

name: config

subPath: application1

- mountPath: "/etc/profile.d/application-2.conf"

name: config

subPath: application2

Test the mounts:

$ kubectl apply -f demo-deployment.yaml

$ kubectl exec demo-78489c754-shjhz ls /etc/application

application-1.conf

$ kubectl exec demo-78489c754-shjhz ls /etc/profile.d/

application-2.conf

color_prompt

locale

Files mounted into a pod with subPath do not pick up later changes to the ConfigMap automatically.

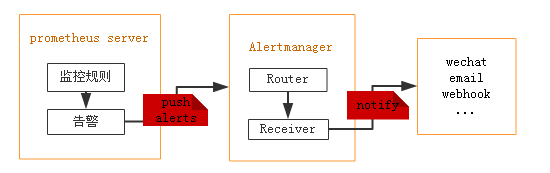

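Alertmanager is a standalone alerting component.

Suppose we want an alert when a cluster host's memory usage stays above 80% for 2 minutes. How can this be implemented?

As the diagram above shows, the main steps for setting up alerts and notifications are:

Install and configure Alertmanager

Configure Prometheus to talk to Alertmanager

Create alerting rules in Prometheus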

Alertmanager, https://github.com/prometheus/alertmanager#install

./alertmanager --config.file=config.yml

Format of the Alertmanager configuration file (config.yml), wrapped in a ConfigMap:

$ cat alertmanager-config.yml

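apiVersion: v1
data:
  config.yml: |
    global:
      # How long Alertmanager waits without receiving an alert again before marking it as resolved
      resolve_timeout: 5m
      # SMTP settings for sending email
      smtp_smarthost: 'smtp.163.com:25'
      smtp_from: 'earlene163@163.com'
      smtp_auth_username: 'earlene163@163.com'
      smtp_auth_password: 'qzpm10'
      smtp_require_tls: false
    # Root route that every incoming alert enters; it defines the dispatch policy
    route:
      # Alerts that share the same alertname label (for example alertname=NodeLoadHigh) are aggregated into one group
      group_by: ['alertname']
      # After a new alert group is created, wait at least group_wait before sending the first notification; alerts that arrive for the group in the meantime are merged into a single notification to the receiver
      group_wait: 30s
      # Interval between notifications for the same group
      group_interval: 30s
      # Once a notification has been sent successfully, wait repeat_interval before sending it again
      repeat_interval: 10m
      # Default receiver: an alert that is not matched by any child route is sent here
      receiver: default
      # All of the attributes above are inherited by every child route and can be overridden on each one
      routes:
      - {}
    # Receiver definitions
    receivers:
    - name: 'default'
      email_configs:
      - to: '654147123@qq.com'
        send_resolved: true # also send a notification when the alert is resolved
kind: ConfigMap
metadata:
  name: alertmanager
  namespace: monitor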

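The comments in the configuration above describe what the main settings do.

Create the ConfigMap:

$ kubectl create -f alertmanager-config.yml

The remaining resource manifests: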

$ cat alertmanager-all.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: alertmanager

namespace: monitor

labels:

app: alertmanager

spec:

selector:

matchLabels:

app: alertmanager

template:

metadata:

labels:

app: alertmanager

spec:

volumes:

- name: config

configMap:

name: alertmanager

containers:

- name: alertmanager

image: prom/alertmanager:v0.21.0

imagePullPolicy: IfNotPresent

args:

- "--config.file=/etc/alertmanager/config.yml"

- "--log.level=debug"

ports:

- containerPort: 9093

name: http

volumeMounts:

- mountPath: "/etc/alertmanager"

name: config

resources:

requests:

cpu: 100m

memory: 256Mi

limits:

cpu: 100m

memory: 256Mi

---

apiVersion: v1

kind: Service

metadata:

name: alertmanager

namespace: monitor

spec:

type: ClusterIP

ports:

- port: 9093

selector:

app: alertmanager

---

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: alertmanager

namespace: monitor

spec:

rules:

- host: alertmanager.luffy.com

http:

paths:

- path: /

backend:

serviceName: alertmanager

servicePort: 9093

Prometheus is the component that decides whether an alert should fire. When one does, Prometheus pushes it to Alertmanager, so the Alertmanager address must be configured on the Prometheus side:

alerting:

alertmanagers:

- static_configs:

- targets:

- alertmanager:9093

So update the Prometheus configuration file and then reload it in the pod:

# Edit prometheus-configmap.yaml and add the alertmanager section

$ vim prometheus-configmap.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: prometheus-config

namespace: monitor

data:

prometheus.yml: |

global:

scrape_interval: 30s

evaluation_interval: 30s

alerting:

alertmanagers:

- static_configs:

- targets:

- alertmanager:9093

...

$ kubectl apply -f prometheus-configmap.yaml

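# Monitoring data has already been collected, so reload through the endpoint Prometheus provides rather than restarting the pod

# First check that the updated configuration file has been synced into the pod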

$ kubectl -n monitor get po -o wide

prometheus-dcb499cbf-pljfn 1/1 Running 0 47h 10.244.1.167

$ kubectl -n monitor exec -ti prometheus-dcb499cbf-pljfn cat /etc/prometheus/prometheus.yml |grep alertmanager

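# Hot-reload the configuration (the endpoint is available only when Prometheus runs with --web.enable-lifecycle)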

$ curl -X POST 10.244.1.167:9090/-/reload

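Prometheus and Alertmanager are now connected. Next we can define alerting rules on the collected metrics; as soon as a rule's condition is met, Prometheus pushes the alert to Alertmanager, which forwards it to the configured mailbox.

Where are the rules configured? In prometheus-configmap as well: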

$ vim prometheus-configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitor
data:
  prometheus.yml: |
    global:
      scrape_interval: 30s
      evaluation_interval: 30s
    alerting:
      alertmanagers:
      - static_configs:
        - targets:
          - alertmanager:9093
    # Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
    rule_files:
      - /etc/prometheus/alert_rules.yml
      # - "first_rules.yml"
      # - "second_rules.yml"
    scrape_configs:
    - job_name: 'prometheus'
      static_configs:
      - targets: ['localhost:9090']
    ...

alert_rules.yml is mounted into the Prometheus container through a ConfigMap as well, so we only need to add one more data item to the existing ConfigMap:

$ vim prometheus-configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitor
data:
  prometheus.yml: |
    global:
      scrape_interval: 30s
      evaluation_interval: 30s
    alerting:
      alertmanagers:
      - static_configs:
        - targets:
          - alertmanager:9093
    # Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
    rule_files:
      - /etc/prometheus/alert_rules.yml
      # - "first_rules.yml"
      # - "second_rules.yml"
    scrape_configs:
    - job_name: 'prometheus'
      static_configs:
      - targets: ['localhost:9090']
    ... # middle part omitted
  alert_rules.yml: |
    groups:
    - name: node_metrics
      rules:
      - alert: NodeLoad
        expr: node_load15 < 1
        for: 2m
        annotations:
          summary: "{{$labels.instance}}: Low node load detected"
          description: "{{$labels.instance}}: node load is below 1 (current value is: {{ $value }})"

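The main elements of an alerting rule:

group.name: the name of the rule group. A group holds one category of alerting rules, for example all alerts related to physical nodes.

alert: the name of the alerting rule.

expr: the PromQL expression that defines the alert condition. It is usually a boolean expression, so Prometheus can make a true-or-false decision from the computed value.

for: the pending duration. The alert is sent only after the condition has held for this long; until then a newly triggered alert is in the pending state. This filters out transient problems and keeps the focus on issues with a lasting impact.

labels: custom labels. An extra set of labels attached to the alert, which can later be used for routing to different endpoints; typically used to add a severity label.

annotations: another set of labels that are not part of the alert's identity. They usually carry extra information, such as text to display in the notification.

Rule definitions support templating:

{{$labels}} gives access to all labels of the current series, for example {{$labels.instance}} or {{$labels.job}}

{{ $value }} gives the computed value of the expression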

Update the configuration, hot-reload Prometheus, and check the alerting rules in the Prometheus UI.

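An alert is in one of three states during its life cycle:

inactive: the alert condition is not met.

pending: the alert condition is met, but the alert is still within the observation period set by for.

firing: the alert condition has been met for longer than the observation period, and the alert is sent to Alertmanager.

Prometheus also stores alerts that are pending or firing in the ALERTS{} time series, so alert instances can be queried with an expression:

ALERTS{}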
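The three states can be sketched as a small state machine. This is a simplified model written for this article, not Prometheus code: the function name `alert_states` is hypothetical, and it assumes the rule is evaluated at a fixed interval and that an alert fires once the condition has held for at least the `for` duration.

```python
def alert_states(condition_history, eval_interval_s, for_s):
    """Return the alert state after each rule evaluation.

    condition_history: list of booleans, one per evaluation, telling
    whether the alert expression returned a result at that evaluation.
    """
    states = []
    active_since = None  # time at which the condition first became true
    for index, condition_met in enumerate(condition_history):
        now = index * eval_interval_s
        if not condition_met:
            active_since = None
            states.append("inactive")
            continue
        if active_since is None:
            active_since = now
        held_for = now - active_since
        states.append("firing" if held_for >= for_s else "pending")
    return states


# evaluation_interval: 30s and for: 2m, as in the configuration above
print(alert_states([False, True, True, True, True, True, False], 30, 120))
```

With a 30s evaluation interval and `for: 2m`, the condition has to be true on five consecutive evaluations before the alert moves from pending to firing, and a single evaluation where it is false resets it to inactive.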

Check the Alertmanager logs:

level=warn ts=2020-07-28T13:43:59.430Z caller=notify.go:674 component=dispatcher receiver=email integration=email[0] msg="Notify attempt failed, will retry later" attempts=1 err="*email.loginAuth auth: 550 User has no permission"

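This shows that the alert has reached Alertmanager, but the SMTP login failed because the mailbox does not allow third-party client logins by default. Log in to the 163 mailbox and enable the SMTP service for client logins.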

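The notification integrations currently built in include email, instant messaging (such as Slack and HipChat), mobile push (such as Pushover), and operations tools (such as PagerDuty, OpsGenie, and VictorOps). They can be seen in the Alertmanager web UI.

Each receiver has a globally unique name and one or more notification methods: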

name: <string>

email_configs:

[ - <email_config>, ... ]

hipchat_configs:

[ - <hipchat_config>, ... ]

slack_configs:

[ - <slack_config>, ... ]

opsgenie_configs:

[ - <opsgenie_config>, ... ]

webhook_configs:

[ - <webhook_config>, ... ]

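How can alerts be pushed to the chat tools commonly used in companies, such as DingTalk or WeCom (Enterprise WeChat)?

Alertmanager also supports webhooks as a notification method, which lets developers build their own integrations.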

# Alert receivers
receivers:
# ops
- name: 'demo-webhook'
  webhook_configs:
  - send_resolved: true
    url: http://demo-webhook/alert/send

With this webhook configured, whenever an alert is routed to demo-webhook, Alertmanager sends a POST request to the webhook URL:

$ curl -X POST -d"$demoAlerts" http://demo-webhook/alert/send

$ echo $demoAlerts

{
  "version": "4",
  "groupKey": <string>, // key identifying the group of alerts (e.g. to deduplicate)
  "status": "<resolved|firing>",
  "receiver": <string>,
  "groupLabels": <object>,
  "commonLabels": <object>,
  "commonAnnotations": <object>,
  "externalURL": <string>, // backlink to the Alertmanager.
  "alerts": [
    {
      "labels": <object>,
      "annotations": <object>,
      "startsAt": "<rfc3339>",
      "endsAt": "<rfc3339>"
    }
  ]
}
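A webhook receiver essentially turns this payload into whatever the chat tool expects. The sketch below converts a payload of the shape above into the DingTalk text-message body used later in this article (`msgtype` / `text.content`). The function name `to_dingtalk_text` and the sample payload are made up for illustration; a real receiver would also need an HTTP server and error handling.

```python
import json


def to_dingtalk_text(payload):
    """Build a DingTalk text message from an Alertmanager webhook payload."""
    lines = ["[{}] receiver={}".format(payload["status"], payload["receiver"])]
    for alert in payload["alerts"]:
        name = alert["labels"].get("alertname", "unknown")
        summary = alert["annotations"].get("summary", "")
        lines.append("- {}: {}".format(name, summary))
    return {"msgtype": "text", "text": {"content": "\n".join(lines)}}


# Hypothetical payload, shaped like the structure shown above
sample = {
    "version": "4",
    "status": "firing",
    "receiver": "demo-webhook",
    "alerts": [
        {
            "labels": {"alertname": "NodeLoad", "instance": "node-1"},
            "annotations": {"summary": "node-1: Low node load detected"},
            "startsAt": "2020-07-28T13:43:59Z",
            "endsAt": "0001-01-01T00:00:00Z",
        }
    ],
}
print(json.dumps(to_dingtalk_text(sample), ensure_ascii=False))
```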

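So, to push alert messages to a DingTalk group chat automatically, all we need is a webhook service that receives this POST request and forwards its content to DingTalk.

How do we send a message to a DingTalk group chat? With a DingTalk robot.

Setting up a DingTalk group robot:

Every group robot gets a unique access URL when it is created: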

https://oapi.dingtalk.com/robot/send?access_token=e54f616718798e32d1e2ff1af5b095c37501878f816bdab2daf66d390633843a

We can then simulate a request to the group robot like this to push a message:

curl 'https://oapi.dingtalk.com/robot/send?access_token=e54f616718798e32d1e2ff1af5b095c37501878f816bdab2daf66d390633843a' -H 'Content-Type: application/json' -d '{"msgtype": "text","text": {"content": "我就是我, 是不一样的烟火"}}'

https://gitee.com/agagin/prometheus-webhook-dingtalk

Image: timonwong/prometheus-webhook-dingtalk:master

Running the binary:

$ ./prometheus-webhook-dingtalk --config.file=config.yml

Suppose the following configuration is used:

targets:
  webhook_dev:
    url: https://oapi.dingtalk.com/robot/send?access_token=e54f616718798e32d1e2ff1af5b095c37501878f816bdab2daf66d390633843a
  webhook_ops:
    url: https://oapi.dingtalk.com/robot/send?access_token=d4e7b72eab6d1b2245bc0869d674f627dc187577a3ad485d9c1d131b7d67b15b

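Once started, prometheus-webhook-dingtalk then accepts POST requests on the following endpoints:

http://localhost:8060/dingtalk/webhook_dev/send

http://localhost:8060/dingtalk/webhook_ops/send

This way a single prometheus-webhook-dingtalk instance can serve the webhooks of several DingTalk groups.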

To deploy prometheus-webhook-dingtalk, note the following from its Dockerfile:

The configuration file is read from /etc/prometheus-webhook-dingtalk/config.yml, which can be supplied by mounting a ConfigMap:

$ cat webhook-dingtalk-configmap.yaml

apiVersion: v1

data:

config.yml: |

targets:

webhook_dev:

url: https://oapi.dingtalk.com/robot/send?access_token=e54f616718798e32d1e2ff1af5b095c37501878f816bdab2daf66d390633843a

kind: ConfigMap

metadata:

name: webhook-dingtalk-config

namespace: monitor

Deployment and Service:

$ cat webhook-dingtalk-deploy.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: webhook-dingtalk

namespace: monitor

spec:

selector:

matchLabels:

app: webhook-dingtalk

template:

metadata:

labels:

app: webhook-dingtalk

spec:

containers:

- name: webhook-dingtalk

image: timonwong/prometheus-webhook-dingtalk:master

imagePullPolicy: IfNotPresent

volumeMounts:

- mountPath: "/etc/prometheus-webhook-dingtalk/config.yml"

name: config

subPath: config.yml

ports:

- containerPort: 8060

name: http

resources:

requests:

cpu: 50m

memory: 100Mi

limits:

cpu: 50m

memory: 100Mi

volumes:

- name: config

configMap:

name: webhook-dingtalk-config

items:

- key: config.yml

path: config.yml

---

apiVersion: v1

kind: Service

metadata:

name: webhook-dingtalk

namespace: monitor

spec:

selector:

app: webhook-dingtalk

ports:

- name: hook

port: 8060

targetPort: http

Create them:

$ kubectl create -f webhook-dingtalk-configmap.yaml

$ kubectl create -f webhook-dingtalk-deploy.yaml

# Check the logs to see which webhook URLs are currently available

$ kubectl -n monitor logs -f webhook-dingtalk-f7f5589c9-qglkd

...

file=/etc/prometheus-webhook-dingtalk/config.yml msg="Completed loading of configuration file"

level=info ts=2020-07-30T14:05:40.963Z caller=main.go:117 component=configuration msg="Loading templates" templates=

ts=2020-07-30T14:05:40.963Z caller=main.go:133 component=configuration msg="Webhook urls for prometheus alertmanager" urls="http://localhost:8060/dingtalk/webhook_dev/send http://localhost:8060/dingtalk/webhook_ops/send"

level=info ts=2020-07-30T14:05:40.963Z caller=web.go:210 component=web msg="Start listening for connections" address=:8060

Update the Alertmanager routes and webhook configuration:

$ cat alertmanager-configmap.yaml

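apiVersion: v1
kind: ConfigMap
metadata:
  name: alertmanager
  namespace: monitor
data:
  config.yml: |-
    global:
      # How long Alertmanager waits without receiving an alert again before marking it as resolved
      resolve_timeout: 5m
      # SMTP settings for sending email
      smtp_smarthost: 'smtp.163.com:25'
      smtp_from: 'earlene163@163.com'
      smtp_auth_username: 'earlene163@163.com'
      # Note: this is not the mailbox password but the authorization code issued after enabling third-party client login
      smtp_auth_password: 'GXIWNXKMMEVMNHAJ'
      smtp_require_tls: false
    # Root route that every incoming alert enters; it defines the dispatch policy
    route:
      # Group by alert name
      group_by: ['alertname']
      # After a new alert group is created, wait at least group_wait before sending the first notification, so that several alerts for the same group can be collected and sent together
      group_wait: 30s
      # Interval between notifications for the same group
      group_interval: 30s
      # Once a notification has been sent successfully, wait repeat_interval before sending it again; tune this per alert type
      repeat_interval: 10m
      # Default receiver: an alert that is not matched by any child route is sent here
      receiver: default
      # Route tree: child routes inherit the settings of their parent route and can override them
      routes:
      - {}
    receivers:
    - name: 'default'
      email_configs:
      - to: '654147123@qq.com'
        send_resolved: true # also send a notification when the alert is resolved
      webhook_configs:
      - send_resolved: true
        url: http://webhook-dingtalk:8060/dingtalk/webhook_dev/send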

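Verify that the DingTalk message arrives.

In real deployments we usually want to assign severity levels to alerts and have different receivers handle different levels.

In Alertmanager, routes group and match alerts and send notifications to receivers. Routes and receivers are configured in the following form: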

routes:
- receiver: ops
  group_wait: 10s
  match:
    severity: critical
- receiver: dev
  group_wait: 10s
  match_re:
    severity: normal|middle
receivers:
- name: ops
  ...
- name: dev
  ...
- <receiver> ...
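The matching logic of such a route list can be sketched as follows. This is a simplified model, not Alertmanager's implementation: `pick_receiver` is a hypothetical name, only one level of child routes is handled, and the first matching route wins. Regular expressions are matched against the whole label value, which is how Alertmanager treats match_re.

```python
import re


def pick_receiver(labels, routes, default_receiver):
    """Return the receiver of the first child route whose matchers all match."""
    for route in routes:
        exact = route.get("match", {})
        patterns = route.get("match_re", {})
        exact_ok = all(labels.get(name) == value for name, value in exact.items())
        # A missing label is treated as an empty string for regex matching.
        regex_ok = all(
            re.fullmatch(pattern, labels.get(name, "")) is not None
            for name, pattern in patterns.items()
        )
        if exact_ok and regex_ok:
            return route["receiver"]
    return default_receiver


routes = [
    {"receiver": "ops", "match": {"severity": "critical"}},
    {"receiver": "dev", "match_re": {"severity": "normal|middle"}},
]
print(pick_receiver({"alertname": "TargetStatus", "severity": "critical"}, routes, "default"))
print(pick_receiver({"alertname": "NodeLoad", "severity": "normal"}, routes, "default"))
print(pick_receiver({"alertname": "Other"}, routes, "default"))
```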

To get a fuller picture of the alerting logic, we add two more alerting rules:

alert_rules.yml: |
  groups:
  - name: node_metrics
    rules:
    - alert: NodeLoad
      expr: node_load15 < 1
      for: 2m
      labels:
        severity: normal
      annotations:
        summary: "{{$labels.instance}}: Low node load detected"
        description: "{{$labels.instance}}: node load is below 1 (current value is: {{ $value }})"
    - alert: NodeMemoryUsage
      expr: (node_memory_MemTotal_bytes - (node_memory_MemFree_bytes + node_memory_Buffers_bytes + node_memory_Cached_bytes)) / node_memory_MemTotal_bytes * 100 > 40
      for: 2m
      labels:
        severity: critical
      annotations:
        summary: "{{$labels.instance}}: High Memory usage detected"
        description: "{{$labels.instance}}: Memory usage is above 40% (current value is: {{ $value }})"
  - name: targets_status
    rules:
    - alert: TargetStatus
      expr: up == 0
      for: 1m
      labels:
        severity: critical
      annotations:
        summary: "{{$labels.instance}}: prometheus target down"
        description: "{{$labels.instance}}: prometheus target down, job is {{$labels.job}}"

We gave the alerting rules different labels, such as severity: critical. Alertmanager routes can match on these labels to forward alerts to different receivers.

$ cat alertmanager-configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: alertmanager
  namespace: monitor
data:
  config.yml: |-
    global:
      # How long Alertmanager waits without receiving an alert again before marking it as resolved
      resolve_timeout: 5m
      # SMTP settings for sending email
      smtp_smarthost: 'smtp.163.com:25'
      smtp_from: 'earlene163@163.com'
      smtp_auth_username: 'earlene163@163.com'
      # Note: this is not the mailbox password but the authorization code issued after enabling third-party client login
      smtp_auth_password: 'GXIWNXKMMEVMNHAJ'
      smtp_require_tls: false
    # Root route that every incoming alert enters; it defines the dispatch policy
    route:
      # Group by alert name
      group_by: ['alertname']
      # After a new alert group is created, wait at least group_wait before sending the first notification, so that several alerts for the same group can be collected and sent together
      group_wait: 30s
      # Interval between notifications for the same group
      group_interval: 30s
      # Once a notification has been sent successfully, wait repeat_interval before sending it again; tune this per alert type
      repeat_interval: 10m
      # Default receiver: an alert that is not matched by any child route is sent here
      receiver: default
      # Route tree: child routes inherit the settings of their parent route and can override them
      routes:
      - receiver: critical_alerts
        group_wait: 10s
        match:
          severity: critical
      - receiver: normal_alerts
        group_wait: 10s
        match_re:
          severity: normal|middle
    receivers:
    - name: 'default'
      email_configs:
      - to: '654147123@qq.com'
        send_resolved: true # also send a notification when the alert is resolved
    - name: 'critical_alerts'
      webhook_configs:
      - send_resolved: true
        url: http://webhook-dingtalk:8060/dingtalk/webhook_ops/send
    - name: 'normal_alerts'
      webhook_configs:
      - send_resolved: true
        url: http://webhook-dingtalk:8060/dingtalk/webhook_dev/send

Configure one more DingTalk robot, then update the webhook-dingtalk configuration to add webhook_ops:

$ cat webhook-dingtalk-configmap.yaml

apiVersion: v1

data:

config.yml: |

targets:

webhook_dev:

url: https://oapi.dingtalk.com/robot/send?access_token=e54f616718798e32d1e2ff1af5b095c37501878f816bdab2daf66d390633843a

webhook_ops:

url: https://oapi.dingtalk.com/robot/send?access_token=5a68888fbecde75b1832ff024d7374e51f2babd33f1078e5311cdbb8e2c00c3a

kind: ConfigMap

metadata:

name: webhook-dingtalk-config

namespace: monitor

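Enable lifecycle on webhook-dingtalk so that its configuration can be reloaded.

Update the Prometheus and Alertmanager configurations, then check how the alerts are delivered.

As we saw earlier, grouping aggregates multiple alerts and so reduces how often notifications are sent. Alertmanager additionally supports Inhibition and Silences, which help suppress or mute alerts.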

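Inhibition

Inhibition suppresses notifications for some alerts while certain other alerts are firing, which helps prevent alert storms.

For example, when a data center has a network failure, every service becomes unavailable and produces a flood of service-unavailable alerts. These alerts do not point to the real problem; the alert that should go out is the network failure one. While the network failure alert is firing, notifications for the service-unavailable alerts should be inhibited.

In the Alertmanager configuration file, inhibit_rules defines a set of inhibition rules: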

inhibit_rules:

[ - <inhibit_rule> ... ]

The full form of each inhibition rule is:

target_match:

[ <labelname>: <labelvalue>, ... ]

target_match_re:

[ <labelname>: <regex>, ... ]

source_match:

[ <labelname>: <labelvalue>, ... ]

source_match_re:

[ <labelname>: <regex>, ... ]

[ equal: '[' <labelname>, ... ']' ]

While an alert matching source_match or source_match_re is firing, any alert that matches target_match or target_match_re and has the same values for all labels listed in equal is inhibited: no notification is sent for it.

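For example, define the following inhibition rule:

- source_match:
    alertname: NodeDown
    severity: critical
  target_match:
    severity: critical
  equal:
  - node

Suppose a host in the cluster goes down unexpectedly and triggers the NodeDown alert, whose rule sets severity=critical. Because the host is down, every service and middleware component deployed on it becomes unavailable and triggers alerts too. Under this inhibition rule, any other alert with severity=critical whose node label has the same value as the NodeDown alert is assumed to be caused by NodeDown, so it is inhibited and no notification is sent to the receiver.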

Demo: when the NodeMemoryUsage alert fires, inhibit alerts raised by the NodeLoad rule.

inhibit_rules:
- source_match:
    alertname: NodeMemoryUsage
    severity: critical
  target_match:
    severity: normal
  equal:
  - instance
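The effect of this rule can be checked with a small model. The sketch below is an assumption-laden simplification (alerts are plain label dictionaries, only exact matchers are supported, and `is_inhibited` is a hypothetical name), not Alertmanager's code.

```python
def is_inhibited(target, firing_alerts, rule):
    """Return True if `target` is muted by `rule` given the firing alerts."""
    target_matches = all(
        target.get(name) == value for name, value in rule["target_match"].items()
    )
    if not target_matches:
        return False
    for source in firing_alerts:
        if source is target:
            continue
        source_matches = all(
            source.get(name) == value for name, value in rule["source_match"].items()
        )
        same_labels = all(source.get(name) == target.get(name) for name in rule["equal"])
        if source_matches and same_labels:
            return True
    return False


rule = {
    "source_match": {"alertname": "NodeMemoryUsage", "severity": "critical"},
    "target_match": {"severity": "normal"},
    "equal": ["instance"],
}
memory = {"alertname": "NodeMemoryUsage", "severity": "critical", "instance": "node-1"}
load_same_node = {"alertname": "NodeLoad", "severity": "normal", "instance": "node-1"}
load_other_node = {"alertname": "NodeLoad", "severity": "normal", "instance": "node-2"}
firing = [memory, load_same_node, load_other_node]

print(is_inhibited(load_same_node, firing, rule))   # same instance as the source alert
print(is_inhibited(load_other_node, firing, rule))  # different instance
```

Only the NodeLoad alert on the same instance as the firing NodeMemoryUsage alert is muted; the one on another instance is still delivered.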

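Silences

A silence is a simple, direct way to mute alerts for a given period. Silences are configured with matchers, much like the routing tree. Each incoming alert is checked against the active silences, and if it matches one, no notification is sent for it. Silences are configured in the Alertmanager web UI.

After an alert is raised, it still passes through grouping, inhibition, silencing, deduplication, and noise reduction in Alertmanager before it is sent to a receiver. For various reasons an alert may be raised yet never result in a notification; the diagram below shows the whole life cycle of an alert: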

https://github.com/liyongxin/prometheus-webhook-wechat

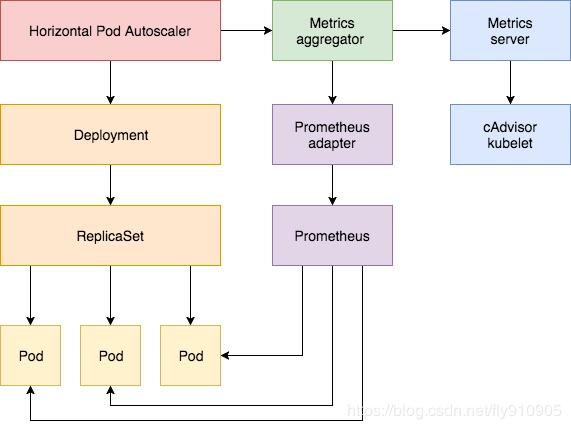

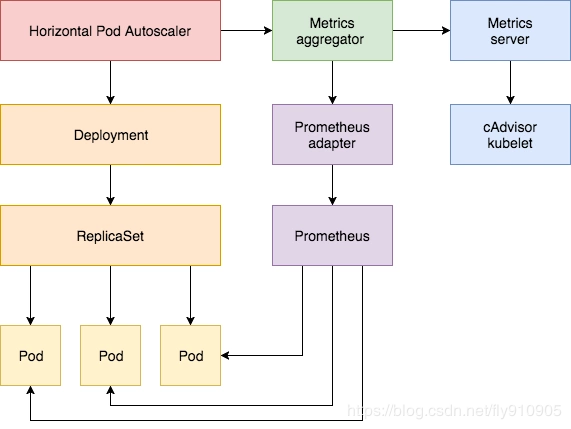

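Earlier we covered HPA based on CPU and memory: with metrics-server and HPA, a service can scale elastically according to the CPU and memory usage of its pods.

Kubernetes standardizes its monitoring interfaces:

Resource Metrics

The corresponding API is metrics.k8s.io, and its main implementation is metrics-server.

Custom Metrics

The corresponding API is custom.metrics.k8s.io, and its main implementation is Prometheus. It provides both resource monitoring and custom monitoring.

After metrics-server is installed, the metrics API is registered through kube-aggregator. This can be checked with the following command: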

$ kubectl api-versions

...

metrics.k8s.io/v1beta1

HPA uses this API to obtain CPU and memory usage:

# Query CPU and memory data for nodes and pods

$ kubectl get --raw="/apis/metrics.k8s.io/v1beta1/nodes"|jq

$ kubectl get --raw="/apis/metrics.k8s.io/v1beta1/pods"|jq

# If jq is not installed on this machine, it can be installed as follows

$ wget http://dl.fedoraproject.org/pub/epel/epel-release-latest-7.noarch.rpm

$ rpm -ivh epel-release-latest-7.noarch.rpm

$ yum install -y jq

Similarly, to collect general-purpose metrics we need to deploy the Prometheus Adapter, which serves custom.metrics.k8s.io and is the entry point through which HPA obtains those metrics.

Project: https://github.com/DirectXMan12/k8s-prometheus-adapter

$ git clone https://github.com/DirectXMan12/k8s-prometheus-adapter.git

# The latest release at the time of writing is v0.7.0; check out v0.7.0

$ git checkout v0.7.0

Deployment notes: https://github.com/DirectXMan12/k8s-prometheus-adapter/tree/v0.7.0/deploy

The image is the official v0.7.0 release: https://hub.docker.com/r/directxman12/k8s-prometheus-adapter/tags

Prepare the certificate

$ export PURPOSE=serving

$ openssl req -x509 -sha256 -new -nodes -days 365 -newkey rsa:2048 -keyout ${PURPOSE}.key -out ${PURPOSE}.crt -subj "/CN=ca"

$ kubectl -n monitor create secret generic cm-adapter-serving-certs --from-file=./serving.crt --from-file=./serving.key

# Inspect the certificate secret

$ kubectl -n monitor describe secret cm-adapter-serving-certs

Prepare the resource manifests

$ mkdir yamls

$ cp manifests/custom-metrics-apiserver-deployment.yaml yamls/

$ cp manifests/custom-metrics-apiserver-service.yaml yamls/

$ cp manifests/custom-metrics-apiservice.yaml yamls/

$ cd yamls

# Create the RBAC file

$ vi custom-metrics-apiserver-rbac.yaml

kind: ServiceAccount

apiVersion: v1

metadata:

name: custom-metrics-apiserver

namespace: monitor

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: custom-metrics-resource-cluster-admin

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: cluster-admin

subjects:

- kind: ServiceAccount

name: custom-metrics-apiserver

namespace: monitor

# Create the configuration file

$ vi custom-metrics-configmap.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: adapter-config

namespace: monitor

data:

config.yaml: |

rules:

- {}

Replace the namespace

# The manifests use the custom-metrics namespace by default; replace it with monitor, the namespace used in this example

$ sed -i 's/namespace: custom-metrics/namespace: monitor/g' yamls/*

Configure the Prometheus address used by the adapter

# The adapter queries Prometheus, so it needs the address of the Prometheus service

# Replace --prometheus-url on line 28 of manifests/custom-metrics-apiserver-deployment.yaml

$ vim manifests/custom-metrics-apiserver-deployment.yaml

...

18 spec:

19 serviceAccountName: custom-metrics-apiserver

20 containers:

21 - name: custom-metrics-apiserver

22 image: directxman12/k8s-prometheus-adapter-amd64

23 args:

24 - --secure-port=6443

25 - --tls-cert-file=/var/run/serving-cert/serving.crt

26 - --tls-private-key-file=/var/run/serving-cert/serving.key

27 - --logtostderr=true

28 - --prometheus-url=http://prometheus:9090/

29 - --metrics-relist-interval=1m

30 - --v=10

31 - --config=/etc/adapter/config.yaml

...

Deploy the service

$ kubectl create -f yamls/

Verify:

$ kubectl api-versions

custom.metrics.k8s.io/v1beta1

$ kubectl get --raw /apis/custom.metrics.k8s.io/v1beta1 |jq

{

"kind": "APIResourceList",

"apiVersion": "v1",

"groupVersion": "custom.metrics.k8s.io/v1beta1",

"resources": []

}

For the demonstration, we create a new deployment that simulates a business application.

$ cat custom-metrics-demo.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: custom-metrics-demo

spec:

selector:

matchLabels:

app: custom-metrics-demo

template:

metadata:

labels:

app: custom-metrics-demo

spec:

containers:

- name: custom-metrics-demo

image: cnych/nginx-vts:v1.0

resources:

limits:

cpu: 50m

requests:

cpu: 50m

ports:

- containerPort: 80

name: http

Deploy it:

$ kubectl create -f custom-metrics-demo.yaml

$ kubectl get po -o wide

custom-metrics-demo-95b5bc949-xpppl 1/1 Running 0 65s 10.244.1.194

$ curl 10.244.1.194/status/format/prometheus

...

nginx_vts_server_requests_total{host="*",code="1xx"} 0

nginx_vts_server_requests_total{host="*",code="2xx"} 8

nginx_vts_server_requests_total{host="*",code="3xx"} 0

nginx_vts_server_requests_total{host="*",code="4xx"} 0

nginx_vts_server_requests_total{host="*",code="5xx"} 0

nginx_vts_server_requests_total{host="*",code="total"} 8

...

Register it as a Prometheus target:

$ cat custom-metrics-demo-svc.yaml

apiVersion: v1

kind: Service

metadata:

name: custom-metrics-demo

annotations:

prometheus.io/scrape: "true"

prometheus.io/port: "80"

prometheus.io/path: "/status/format/prometheus"

spec:

ports:

- port: 80

targetPort: 80

name: http

selector:

app: custom-metrics-demo

type: ClusterIP

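The Service is then discovered automatically as a Prometheus scrape target.

For web applications, requests per second is commonly used as the scaling metric.

Exercise:

Using the sample application custom-metrics-demo: if it receives more than 10 requests per second over the last 2 minutes, scale out its replicas automatically.

Configure the custom metric

Tell the Adapter which metrics to collect and convert; only metrics the Adapter can convert can be used by HPA.

Configure the HPA rule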

apiVersion: autoscaling/v2beta1
kind: HorizontalPodAutoscaler
metadata:
  name: nginx-custom-hpa
  namespace: default
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: custom-metrics-demo
  minReplicas: 1
  maxReplicas: 3
  metrics:
  - type: Pods
    pods:
      metricName: nginx_vts_server_requests_per_second
      targetAverageValue: 10

The average CPU usage collected earlier is in fact computed from the node_cpu_seconds_total metric.

^

│ . . . . . . . . . . . . . . . . . . . node_cpu{cpu="cpu0",mode="idle"}

│ . . . . . . . . . . . . . . . . . . . node_cpu{cpu="cpu0",mode="system"}

│ . . . . . . . . . . . . . . . . . . node_load1{}

│ . . . . . . . . . . . . . . . . . . node_cpu_seconds_total{...}

v

<------------------ time ---------------->

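Likewise, the number of requests per second for each application over the last 2 minutes is computed from a running total. We therefore take the application's custom metric nginx_vts_server_requests_total and apply the rate function to get requests per second.

rate(nginx_vts_server_requests_total[2m])

# If the query returns multiple series, aggregate them with sum

sum(rate(nginx_vts_server_requests_total[2m])) by(kubernetes_pod_name)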
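To make the idea behind rate concrete, the sketch below computes a per-second increase from counter samples inside a time window. It is a simplified approximation written for this article: `simple_rate` is a hypothetical helper, it handles counter resets in the obvious way, and it does not reproduce the extrapolation to the window boundaries that PromQL's rate performs.

```python
def simple_rate(samples):
    """Per-second increase of a counter.

    samples: list of (timestamp_seconds, counter_value), oldest first,
    containing at least two samples.
    """
    increase = 0.0
    for (_, previous), (_, current) in zip(samples, samples[1:]):
        if current >= previous:
            increase += current - previous
        else:
            # The counter was reset (for example the process restarted),
            # so everything counted since the reset is new.
            increase += current
    duration = samples[-1][0] - samples[0][0]
    return increase / duration


# Five scrapes, 30s apart, covering a 2 minute window
window = [(0, 0), (30, 300), (60, 600), (90, 900), (120, 1200)]
print(simple_rate(window))
```

Because the result depends only on the increase over the window, a counter that keeps growing forever still yields a stable requests-per-second value, which is what HPA needs as a scaling signal.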

Several custom metrics can be configured, so the rules are written as an array:

rules:

- {}

Tell the Adapter which custom metrics are available

rules:
- seriesQuery: 'nginx_vts_server_requests_total{host="*",code="total"}'

seriesQuery is a Prometheus series selector. It finds the same series as querying nginx_vts_server_requests_total with those label matchers directly, and any series that seriesQuery finds can be used as a custom metric.

Tell the Adapter how the metric's labels map to Kubernetes resources

rules:
- seriesQuery: 'nginx_vts_server_requests_total{host="*",code="total"}'
  resources:
    overrides:
      kubernetes_namespace: {resource: "namespace"}
      kubernetes_pod_name: {resource: "pod"}

The available series we found look like this:

nginx_vts_server_requests_total{code="1xx",host="*",instance="10.244.1.194:80",job="kubernetes-sd-endpoints",kubernetes_name="custom-metrics-demo",kubernetes_namespace="default",kubernetes_pod_name="custom-metrics-demo-95b5bc949-xpppl"}

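When HPA calls the Adapter API, it asks for the metric of a particular pod in a particular namespace. The Adapter therefore has to translate those resources into label names when it queries Prometheus, because not every series in Prometheus uses the labels kubernetes_namespace and kubernetes_pod_name.

Specify the name of the custom metric, for use in the HPA configuration

rules:
- seriesQuery: 'nginx_vts_server_requests_total{host="*",code="total"}'
  resources:
    overrides:
      kubernetes_namespace: {resource: "namespace"}
      kubernetes_pod_name: {resource: "pod"}
  name:
    matches: "^(.*)_total"
    as: "${1}_per_second"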

After conversion by the Adapter the metric means requests per second, so it is given a matching name. This setting rewrites the name with a regular expression; the resulting metric name is nginx_vts_server_requests_per_second, which can be used directly in the HPA rule.

Tell the Adapter how to compute the final value of the custom metric

rules:
- seriesQuery: 'nginx_vts_server_requests_total{host="*",code="total"}'
  resources:
    overrides:
      kubernetes_namespace: {resource: "namespace"}
      kubernetes_pod_name: {resource: "pod"}
  name:
    matches: "^(.*)_total"
    as: "${1}_per_second"
  metricsQuery: 'sum(rate(<<.Series>>{<<.LabelMatchers>>}[2m])) by (<<.GroupBy>>)'

The query we ultimately want probably looks like this:

sum(rate(nginx_vts_server_requests_total{host="*",code="total",kubernetes_namespace="default"}[2m])) by (kubernetes_pod_name)

The Adapter provides a simpler, templated form:

sum(rate(<<.Series>>{<<.LabelMatchers>>}[2m])) by (<<.GroupBy>>)

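Series: the metric name

LabelMatchers: the label matchers used in the query

GroupBy: the label to group results by; for queries coming from HPA this becomes kubernetes_pod_name

Update the Adapter's configuration: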

$ vi custom-metrics-configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: adapter-config
  namespace: monitor
data:
  config.yaml: |
    rules:
    - seriesQuery: 'nginx_vts_server_requests_total{host="*",code="total"}'
      seriesFilters: []
      resources:
        overrides:
          kubernetes_namespace: {resource: "namespace"}
          kubernetes_pod_name: {resource: "pod"}
      name:
        matches: "^(.*)_total"
        as: "${1}_per_second"
      metricsQuery: (sum(rate(<<.Series>>{<<.LabelMatchers>>}[1m])) by (<<.GroupBy>>))
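The two transformations in this rule, renaming the metric and filling in the query template, can be imitated in a few lines. This is only an illustration: the Adapter itself is written in Go and uses Go templates with << >> delimiters, whereas the helpers `rewrite_name` and `expand_query` below are hypothetical and use plain string replacement. The label matcher string passed in is an assumed example of what the Adapter would generate for one pod.

```python
import re


def rewrite_name(series, matches, as_template):
    """Apply the rule's name.matches / name.as pair to a series name."""
    # The rule writes the capture group as ${1}; Python's re uses \1.
    replacement = as_template.replace("${1}", r"\1")
    return re.sub(matches, replacement, series)


def expand_query(template, series, label_matchers, group_by):
    """Fill the three placeholders of a metricsQuery template."""
    return (
        template.replace("<<.Series>>", series)
        .replace("<<.LabelMatchers>>", label_matchers)
        .replace("<<.GroupBy>>", group_by)
    )


print(rewrite_name("nginx_vts_server_requests_total", "^(.*)_total", "${1}_per_second"))
print(
    expand_query(
        "sum(rate(<<.Series>>{<<.LabelMatchers>>}[2m])) by (<<.GroupBy>>)",
        "nginx_vts_server_requests_total",
        'kubernetes_namespace="default",kubernetes_pod_name="custom-metrics-demo-95b5bc949-xpppl"',
        "kubernetes_pod_name",
    )
)
```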

Update the ConfigMap and restart the adapter:

$ kubectl apply -f custom-metrics-configmap.yaml

$ kubectl -n monitor delete po custom-metrics-apiserver-c689ff947-zp8gq

List the available metrics again:

$ kubectl get --raw /apis/custom.metrics.k8s.io/v1beta1 |jq

{

"kind": "APIResourceList",

"apiVersion": "v1",

"groupVersion": "custom.metrics.k8s.io/v1beta1",

"resources": [

{

"name": "namespaces/nginx_vts_server_requests_per_second",

"singularName": "",

"namespaced": false,

"kind": "MetricValueList",

"verbs": [

"get"

]

},

{

"name": "pods/nginx_vts_server_requests_per_second",

"singularName": "",

"namespaced": true,

"kind": "MetricValueList",

"verbs": [

"get"

]

}

]

}

There are now two available resources. The official documentation explains:

Notice that we get an entry for both "pods" and "namespaces" -- the adapter exposes the metric on each resource that we've associated the metric with (and all namespaced resources must be associated with a namespace), and will fill in the <<.GroupBy>> section with the appropriate label depending on which we ask for.

We can now connect to $KUBERNETES/apis/custom.metrics.k8s.io/v1beta1/namespaces/default/pods/*/nginx_vts_server_requests_per_second, and we should see

$ kubectl get --raw "/apis/custom.metrics.k8s.io/v1beta1/namespaces/default/pods/*/nginx_vts_server_requests_per_second" | jq

{

"kind": "MetricValueList",

"apiVersion": "custom.metrics.k8s.io/v1beta1",

"metadata": {

"selfLink": "/apis/custom.metrics.k8s.io/v1beta1/namespaces/default/pods/%2A/nginx_vts_server_requests_per_second"

},

"items": [

{

"describedObject": {

"kind": "Pod",

"namespace": "default",

"name": "custom-metrics-demo-95b5bc949-xpppl",

"apiVersion": "/v1"

},

"metricName": "nginx_vts_server_requests_per_second",

"timestamp": "2020-08-02T04:07:06Z",

"value": "133m",

"selector": null

}

]

}

Here 133m means 0.133, so the metric currently reports 0.133 requests per second.
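The m suffix is the Kubernetes quantity notation for thousandths. The conversion can be sketched as below; `parse_quantity` is a hypothetical helper that covers only a few decimal suffixes for illustration, not the full Kubernetes quantity grammar.

```python
from decimal import Decimal

# Only a small subset of the suffixes Kubernetes accepts
_SUFFIXES = {
    "m": Decimal("0.001"),
    "k": Decimal(1000),
    "M": Decimal(1000) ** 2,
}


def parse_quantity(text):
    """Convert a quantity string such as '133m' or '2k' to a Decimal."""
    suffix = text[-1]
    if suffix in _SUFFIXES:
        return Decimal(text[:-1]) * _SUFFIXES[suffix]
    return Decimal(text)


print(parse_quantity("133m"))
```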

https://github.com/DirectXMan12/k8s-prometheus-adapter/blob/master/docs/config-walkthrough.md

https://github.com/DirectXMan12/k8s-prometheus-adapter/blob/master/docs/config.md

$ cat hpa-custom-metrics.yaml

apiVersion: autoscaling/v2beta1
kind: HorizontalPodAutoscaler
metadata:
  name: nginx-custom-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: custom-metrics-demo
  minReplicas: 1
  maxReplicas: 3
  metrics:
  - type: Pods
    pods:
      metricName: nginx_vts_server_requests_per_second
      targetAverageValue: 10

$ kubectl create -f hpa-custom-metrics.yaml

$ kubectl get hpa

Note that metricName is the name of the custom metric.
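How HPA turns targetAverageValue into a replica count can be sketched with the scaling formula from the Kubernetes documentation, desiredReplicas = ceil(currentReplicas * currentValue / targetValue), clamped to minReplicas and maxReplicas. The function `desired_replicas` is a simplified illustration: the real controller also applies a tolerance band and stabilization behaviour that are left out here.

```python
import math


def desired_replicas(current_replicas, current_average, target_average,
                     min_replicas, max_replicas):
    """Replica count suggested by the basic HPA formula, without tolerance."""
    desired = math.ceil(current_replicas * current_average / target_average)
    return max(min_replicas, min(max_replicas, desired))


# One pod serving 25 requests/s on average, target 10 requests/s per pod
print(desired_replicas(1, 25, 10, min_replicas=1, max_replicas=3))
# Three pods serving 2 requests/s each: scale back down
print(desired_replicas(3, 2, 10, min_replicas=1, max_replicas=3))
```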

Load-test the custom-metrics-demo service with the ab command and watch the HPA:

$ kubectl get svc -o wide

custom-metrics-demo ClusterIP 10.104.110.245 <none> 80/TCP 16h

$ ab -n1000 -c 5 http://10.104.110.245:80/

Watch the HPA change:

$ kubectl describe hpa nginx-custom-hpa

Check the adapter logs:

$ kubectl -n monitor logs --tail=100 -f custom-metrics-apiserver-c689ff947-m5vlr

...

I0802 04:43:58.404559 1 httplog.go:90] GET /apis/custom.metrics.k8s.io/v1beta1/namespaces/default/pods/%2A/nginx_vts_server_requests_per_second?labelSelector=app%3Dcustom-metrics-demo: (20.713209ms) 200 [kube-controller-manager/v1.16.0 (linux/amd64) kubernetes/2bd9643/system:serviceaccount:kube-system:horizontal-pod-autoscaler 192.168.136.10:60626]

prometheus-operator

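Addendum: the value returned by the Adapter did not match a direct Prometheus query (it was 4 times larger). The label matchers from seriesQuery are not carried into metricsQuery, so the sum covered every host and code series; the fix is to add them to metricsQuery explicitly: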

$ vi custom-metrics-configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: adapter-config
  namespace: monitor
data:
  config.yaml: |
    rules:
    - seriesQuery: 'nginx_vts_server_requests_total{host="*",code="total"}'
      seriesFilters: []
      resources:
        overrides:
          kubernetes_namespace: {resource: "namespace"}
          kubernetes_pod_name: {resource: "pod"}
      name:
        matches: "^(.*)_total"
        as: "${1}_per_second"
      metricsQuery: (sum(rate(<<.Series>>{<<.LabelMatchers>>,host="*",code="total"}[1m])) by (<<.GroupBy>>))

Query again to verify:

$ kubectl get --raw "/apis/custom.metrics.k8s.io/v1beta1/namespaces/default/pods/*/nginx_vts_server_requests_per_second" | jq

{

"kind": "MetricValueList",

"apiVersion": "custom.metrics.k8s.io/v1beta1",

"metadata": {

"selfLink": "/apis/custom.metrics.k8s.io/v1beta1/namespaces/default/pods/%2A/nginx_vts_server_requests_per_second"

},

"items": [

{

"describedObject": {

"kind": "Pod",

"namespace": "default",

"name": "custom-metrics-demo-95b5bc949-xpppl",

"apiVersion": "/v1"

},

"metricName": "nginx_vts_server_requests_per_second",

"timestamp": "2020-08-02T04:07:06Z",

"value": "133m",

"selector": null

}

]

}

Original article: https://www.cnblogs.com/Mr-Axin/p/14756642.html