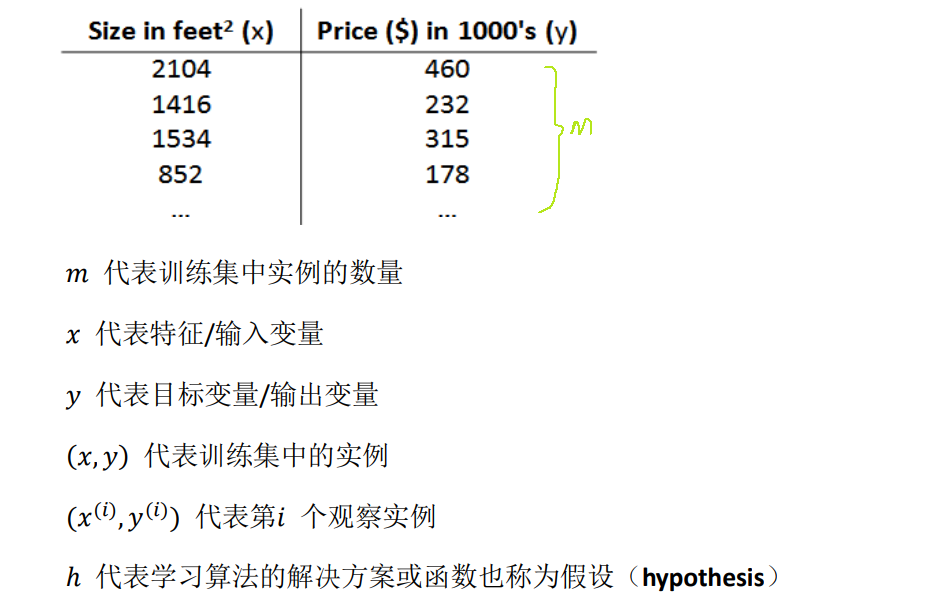

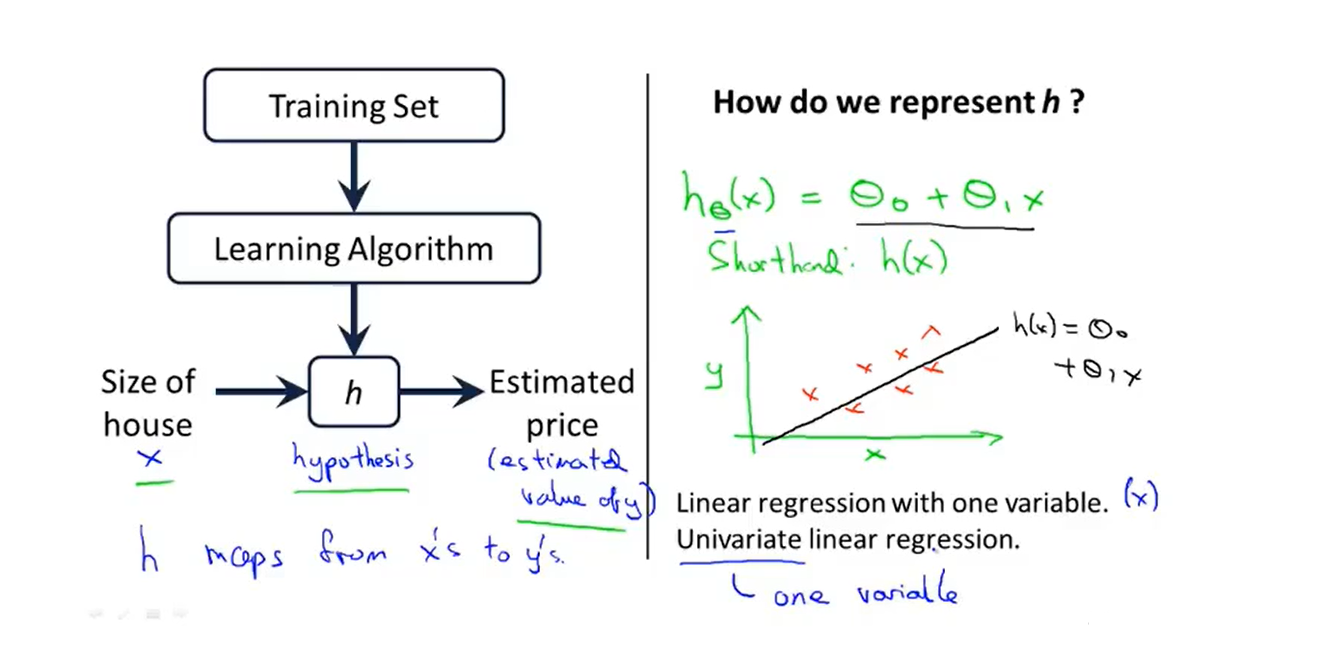

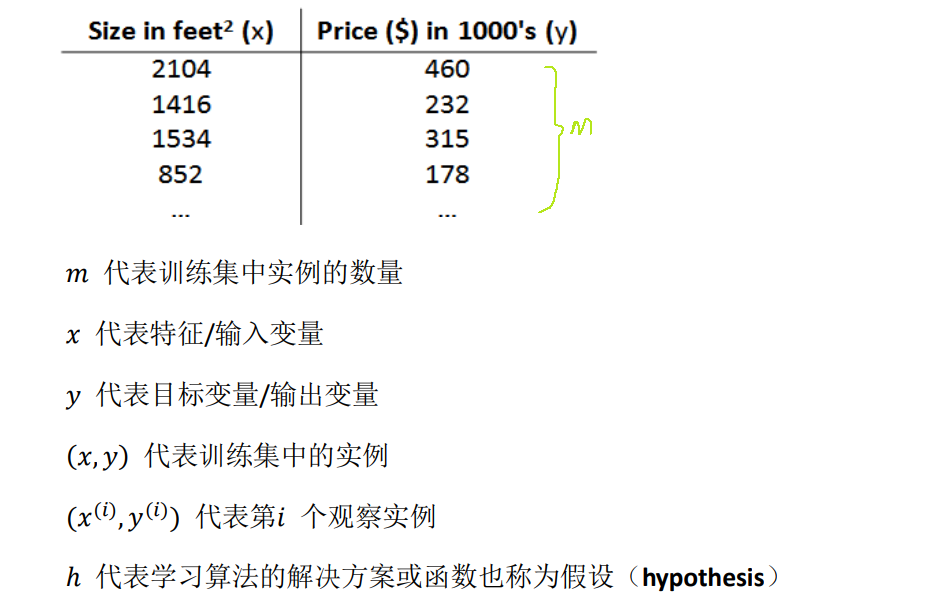

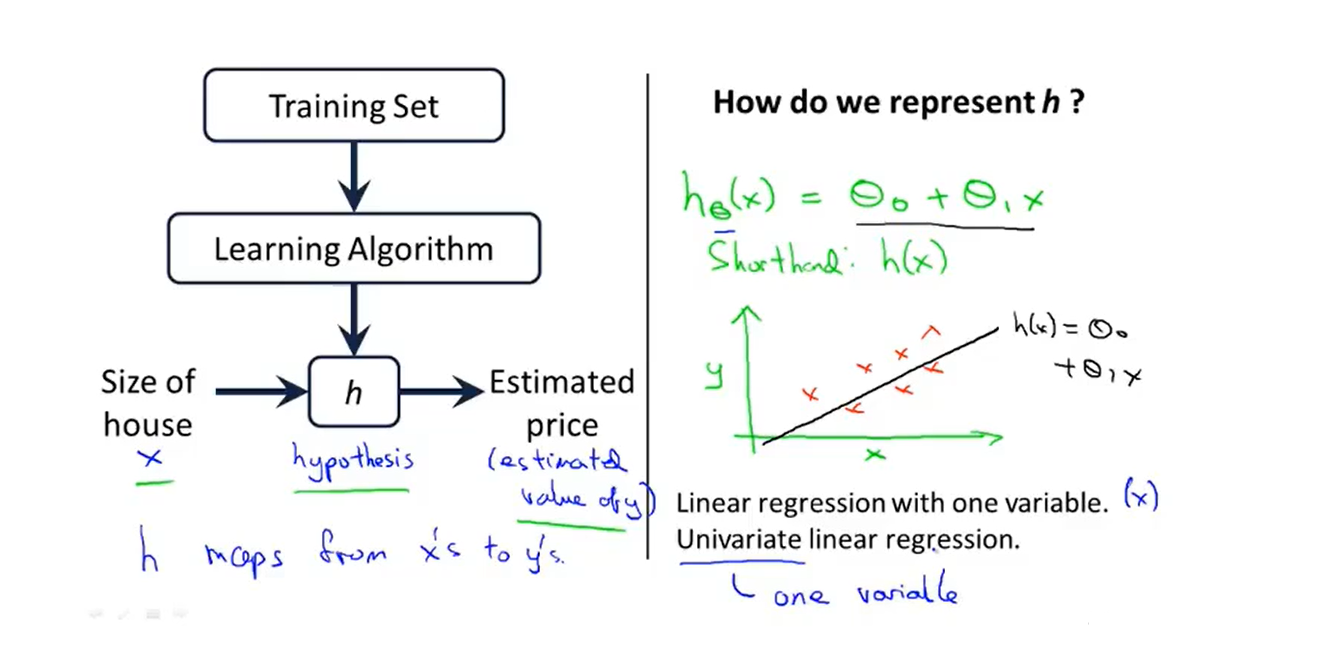

1、Model representation

- We start with a "housing price prediction" example of fitting a linear function, and build on it later to reach more complex models.
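A minimal sketch of the model, assuming the usual single-variable form h(x) = θ0 + θ1·x. The function name `hypothesis` and the parameter values are hypothetical and chosen only for illustration; they do not come from the figures.

```python
def hypothesis(theta0, theta1, x):
    """Predict a price from a single feature x using a straight line."""
    return theta0 + theta1 * x

# Hypothetical parameters: a base price of 50 plus 0.25 per unit of size.
predicted_price = hypothesis(50.0, 0.25, 2000.0)
print(predicted_price)
```

Fitting the model means choosing θ0 and θ1 so that predictions like this one land close to the prices in the training set.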

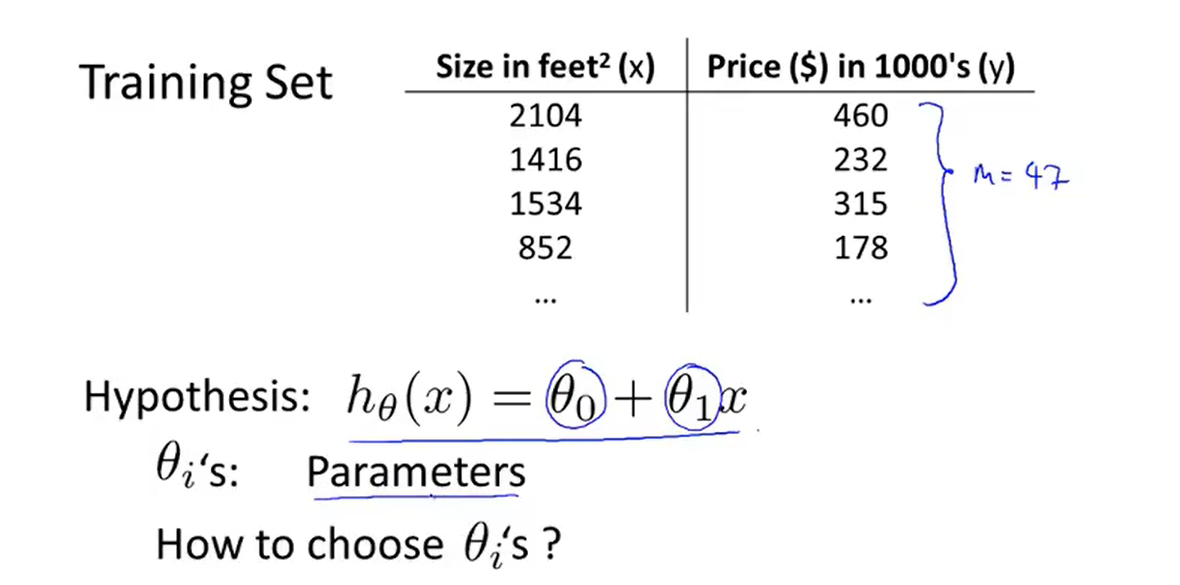

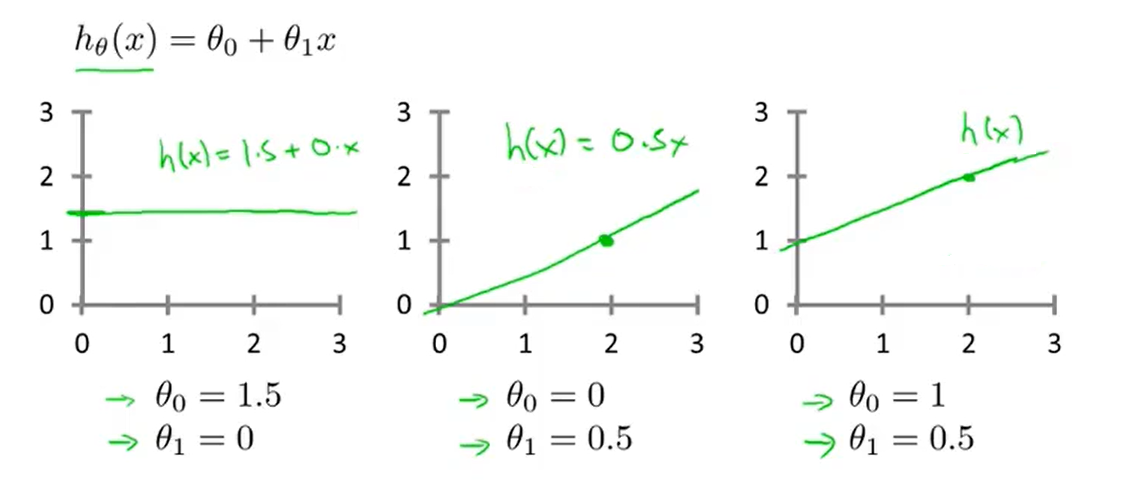

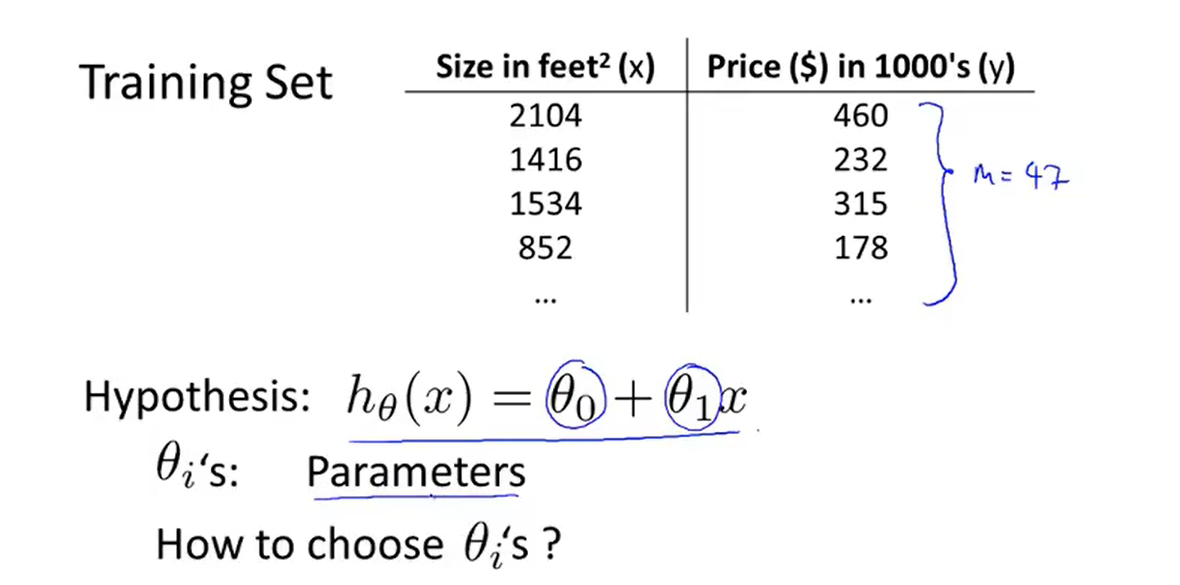

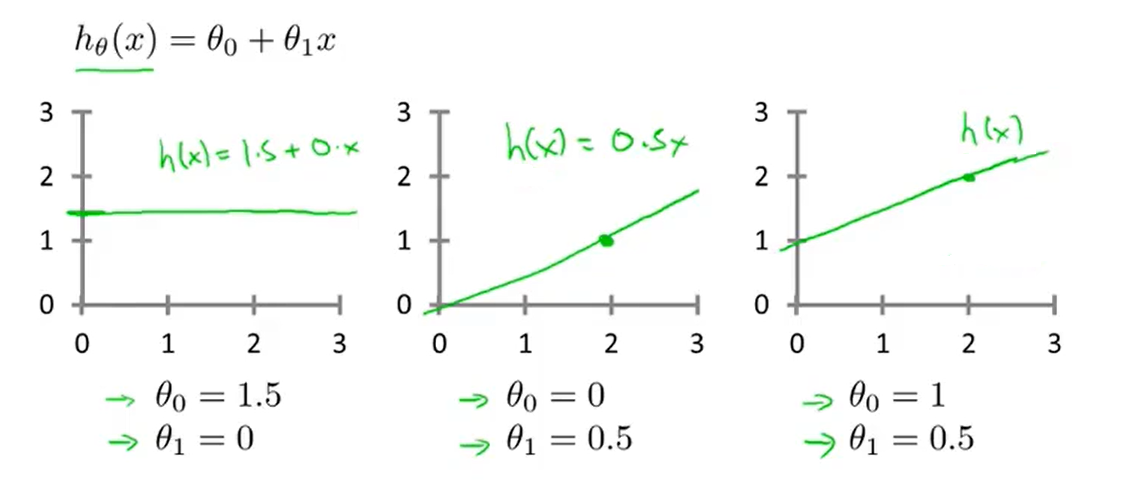

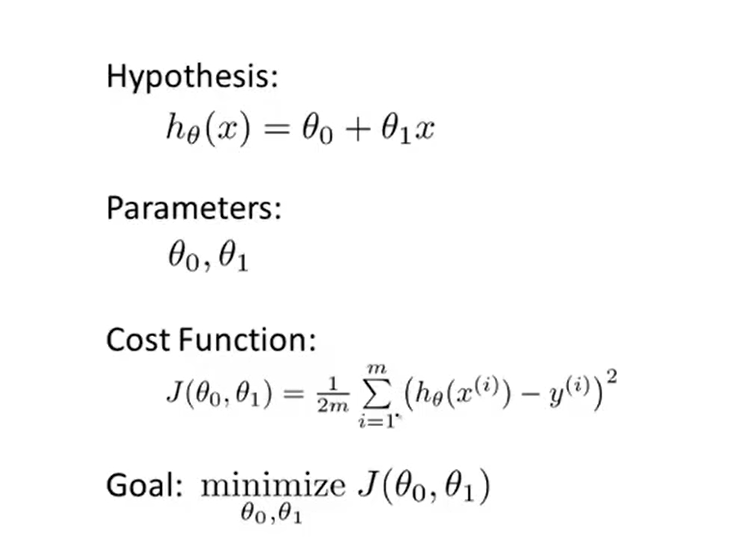

2、Cost function

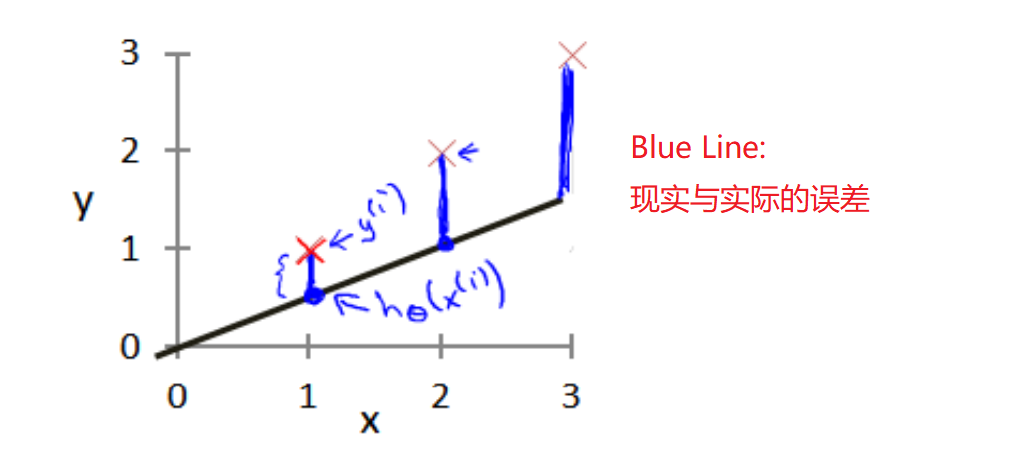

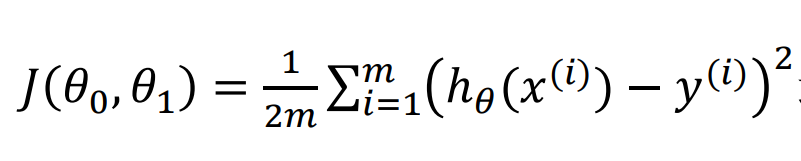

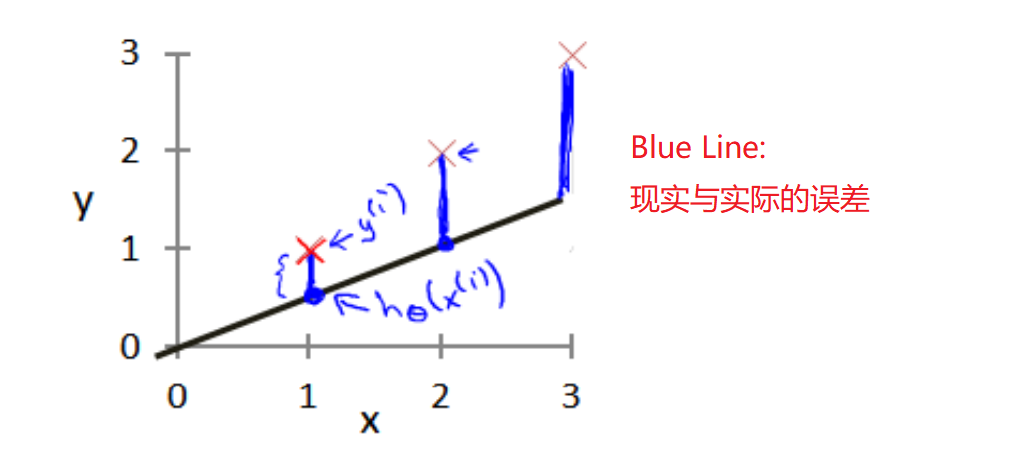

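- The cost function (squared error function) lets us figure out how to fit the best possible straight line to our data.

- So how do we choose the parameters θi?

- The parameters we choose determine how accurately the resulting straight line fits our training set.

- The gap between the model's predicted values and the actual values in the training set is called the modeling error.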
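The squared error cost can be sketched as follows, assuming the standard definition J(θ0, θ1) = 1/(2m) · Σ (h(x) − y)². The name `compute_cost` and the three-point dataset are hypothetical.

```python
def compute_cost(theta0, theta1, xs, ys):
    """Squared error cost: J = 1/(2m) * sum((h(x) - y)^2)."""
    m = len(xs)
    total = 0.0
    for x, y in zip(xs, ys):
        error = (theta0 + theta1 * x) - y  # modeling error for one example
        total += error ** 2
    return total / (2 * m)

# Hypothetical training set lying exactly on the line y = x.
xs = [1.0, 2.0, 3.0]
ys = [1.0, 2.0, 3.0]

perfect_fit = compute_cost(0.0, 1.0, xs, ys)  # line passes through every point
worse_fit = compute_cost(0.0, 0.5, xs, ys)    # line is too flat
print(perfect_fit, worse_fit)
```

A line that passes through every training point has zero cost; any other choice of parameters gives a larger value.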

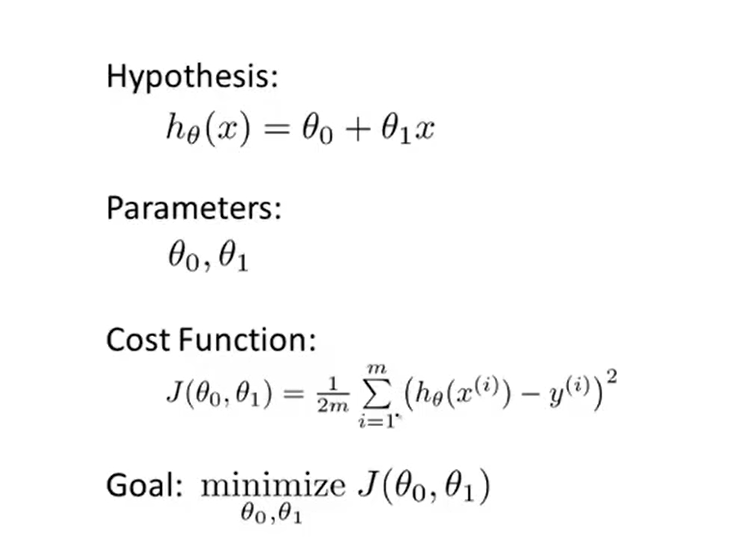

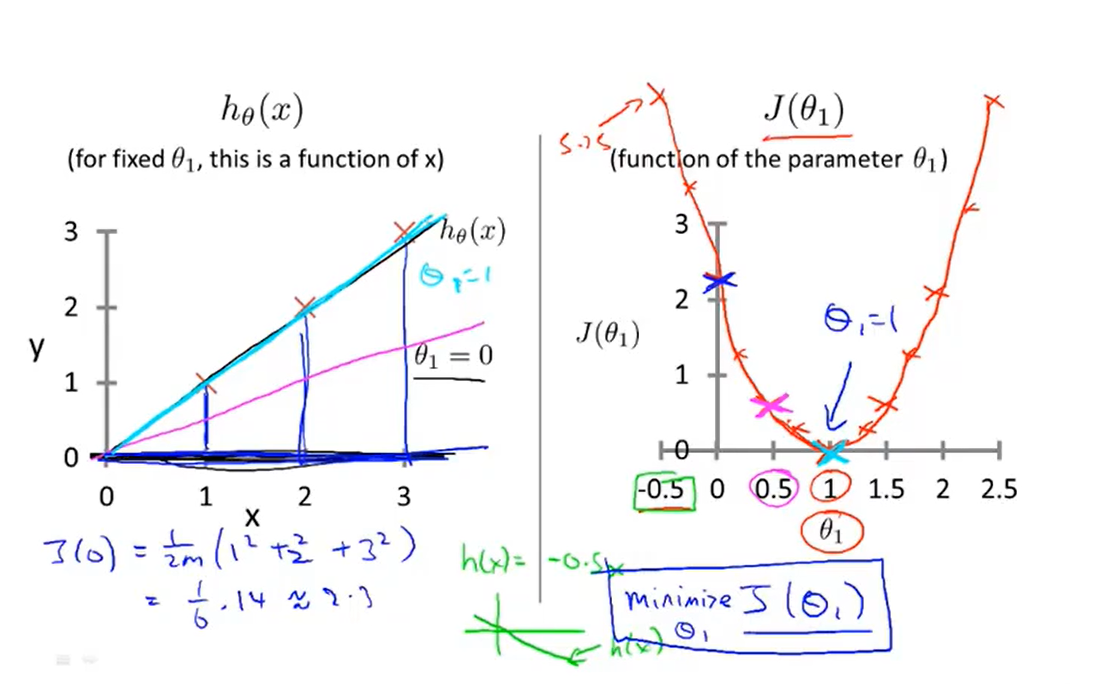

2-1、Cost function introduction I

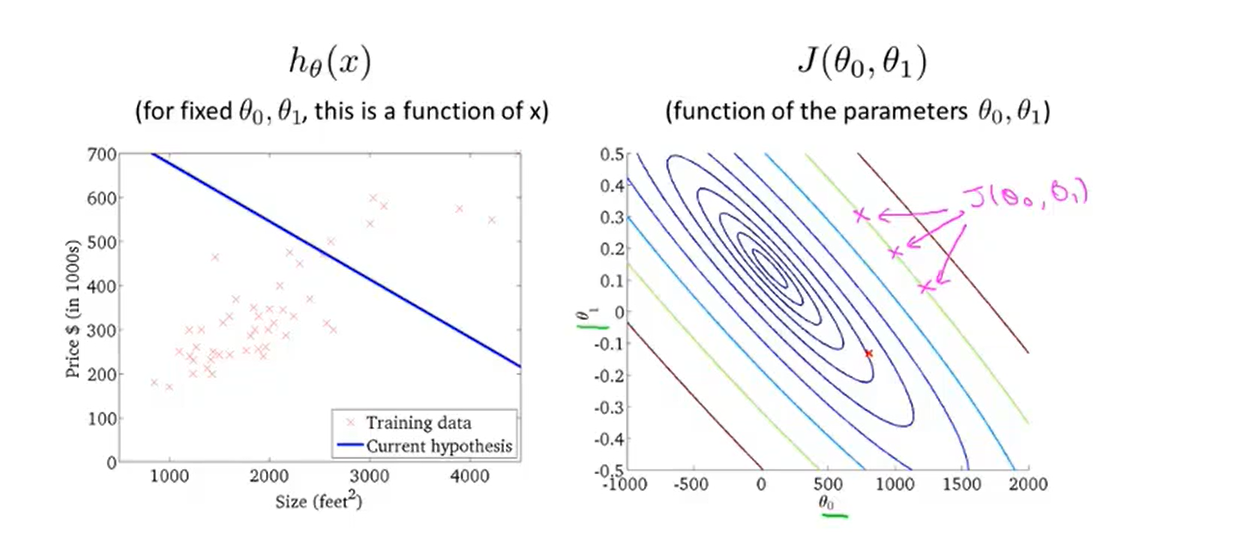

- We look at some plots to understand the cost function.
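To see the shape such a plot takes, here is a small sketch that fixes θ0 = 0 and evaluates J(θ1) at a few values. The dataset (points on the line y = x) and the name `cost_theta1` are assumptions made for illustration.

```python
# Hypothetical training set lying exactly on the line y = x.
xs = [1.0, 2.0, 3.0]
ys = [1.0, 2.0, 3.0]

def cost_theta1(theta1):
    """J(theta1) with theta0 fixed at 0, so h(x) = theta1 * x."""
    m = len(xs)
    return sum((theta1 * x - y) ** 2 for x, y in zip(xs, ys)) / (2 * m)

thetas = [0.0, 0.5, 1.0, 1.5, 2.0]
costs = [cost_theta1(t) for t in thetas]
for t, c in zip(thetas, costs):
    print(f"theta1={t:.1f}  J={c:.3f}")
```

The cost falls to zero at θ1 = 1 and rises symmetrically on either side, which is the bowl shape one would expect the plot of J(θ1) to have.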

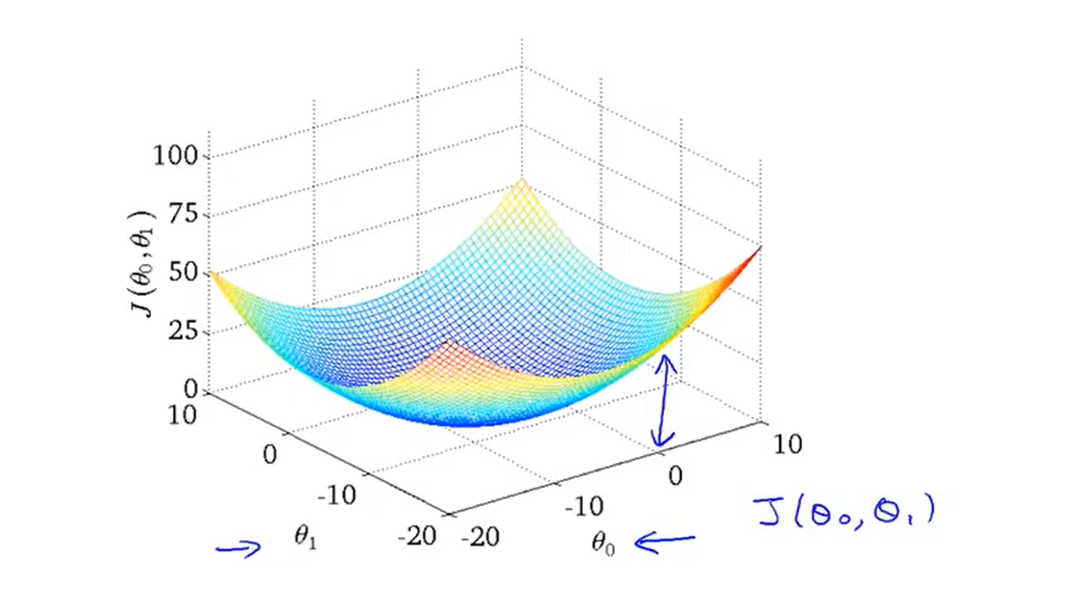

2-2、Cost function introduction II

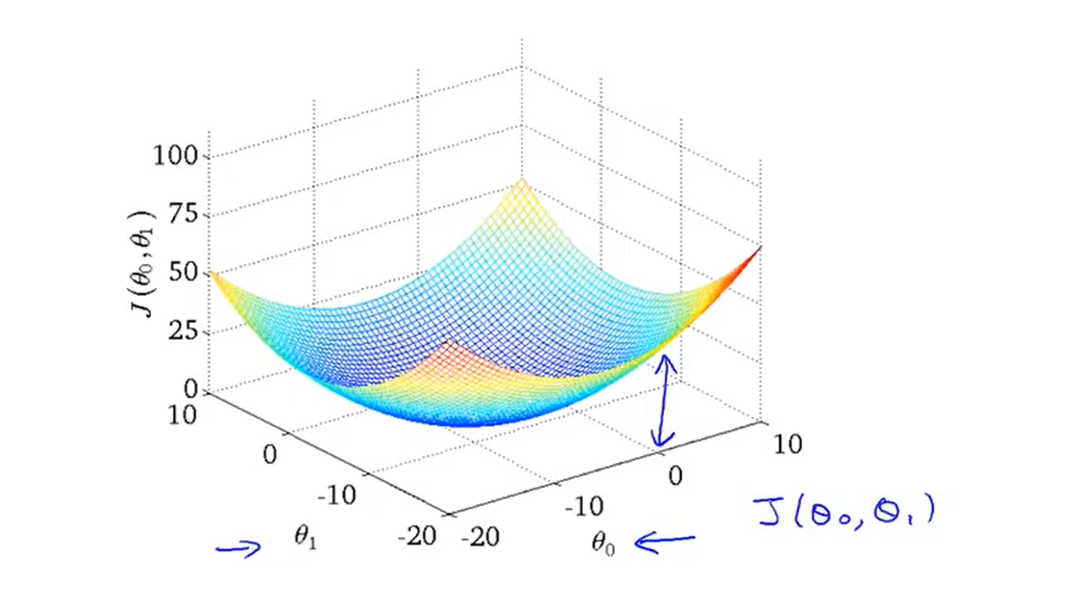

- Let's look at a three-dimensional surface plot of the cost function. For linear regression the cost function is a convex (bowl-shaped) function.

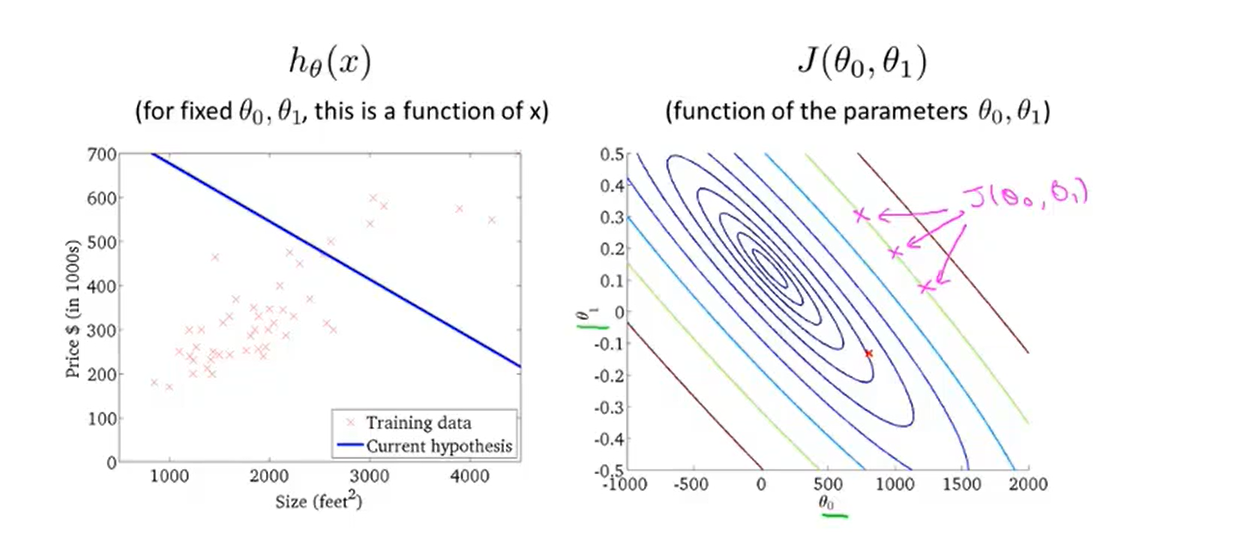

- Here is an example of a contour plot:

- A contour plot is a more convenient way to visualize the cost function.

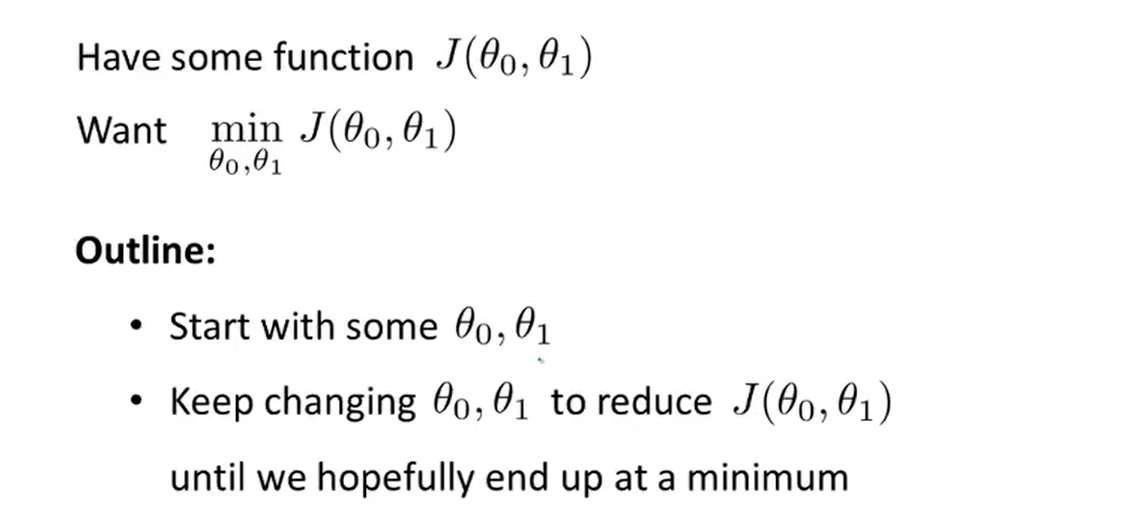

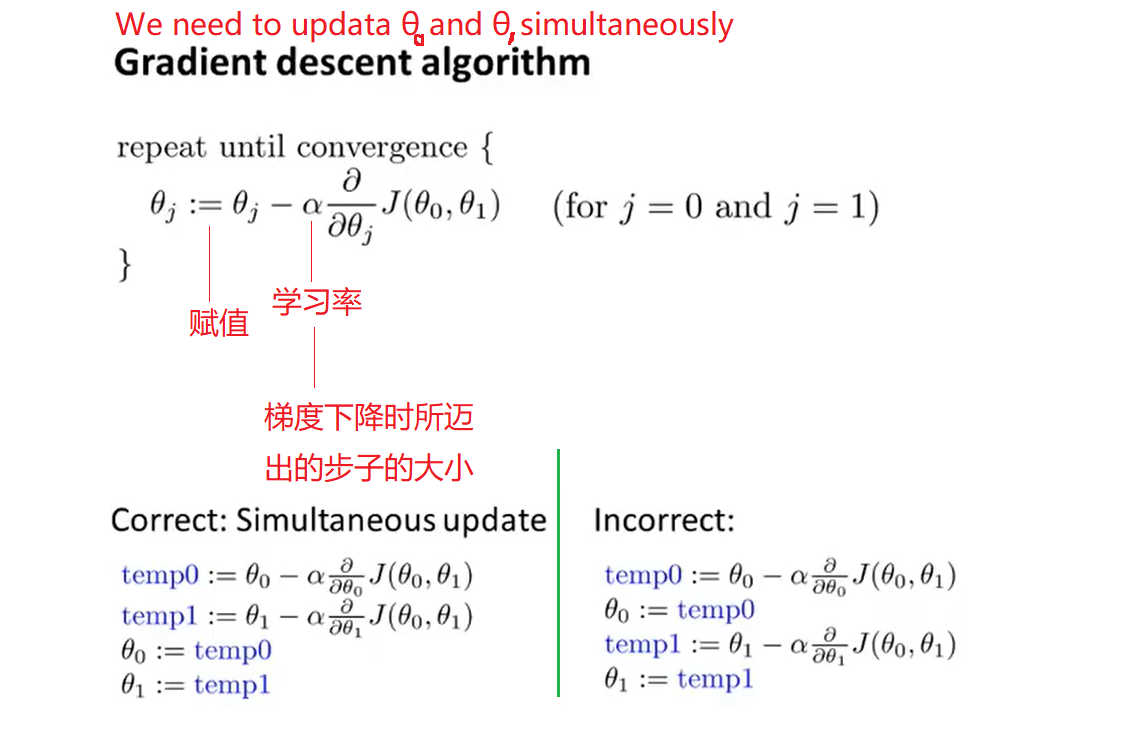

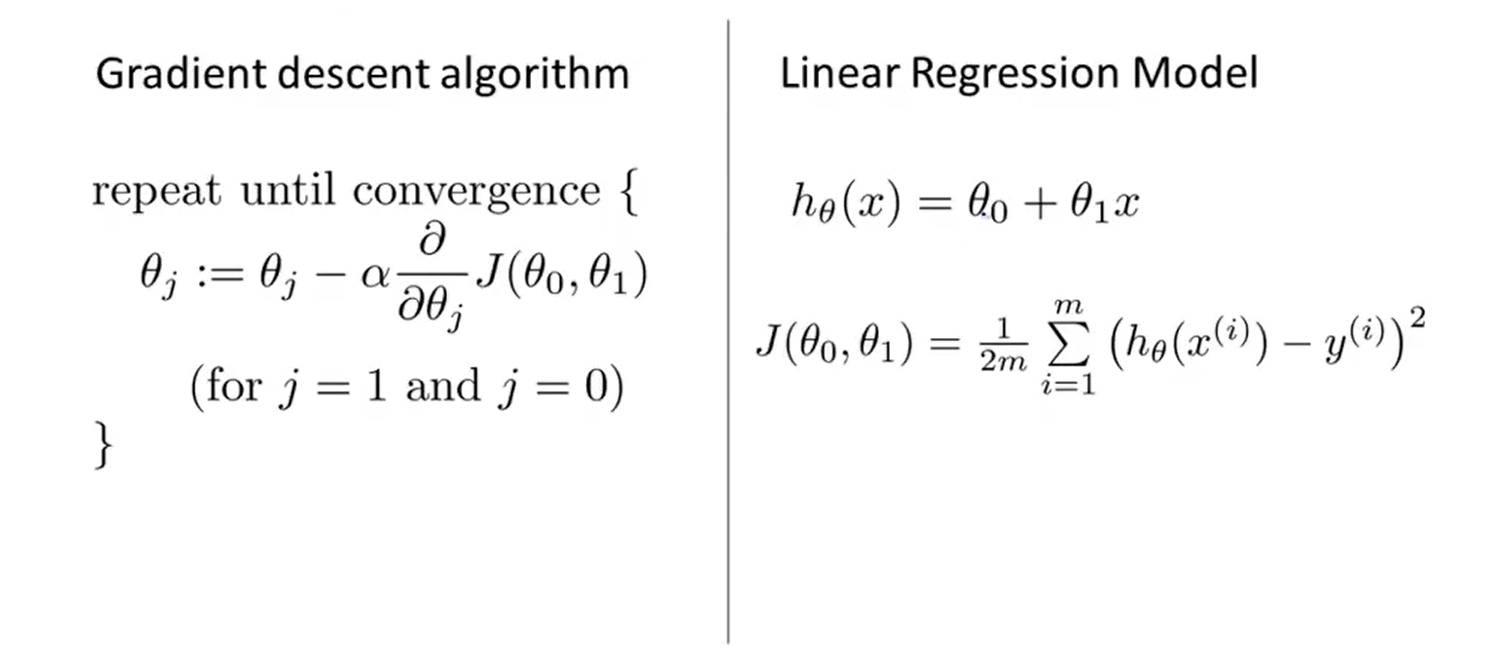

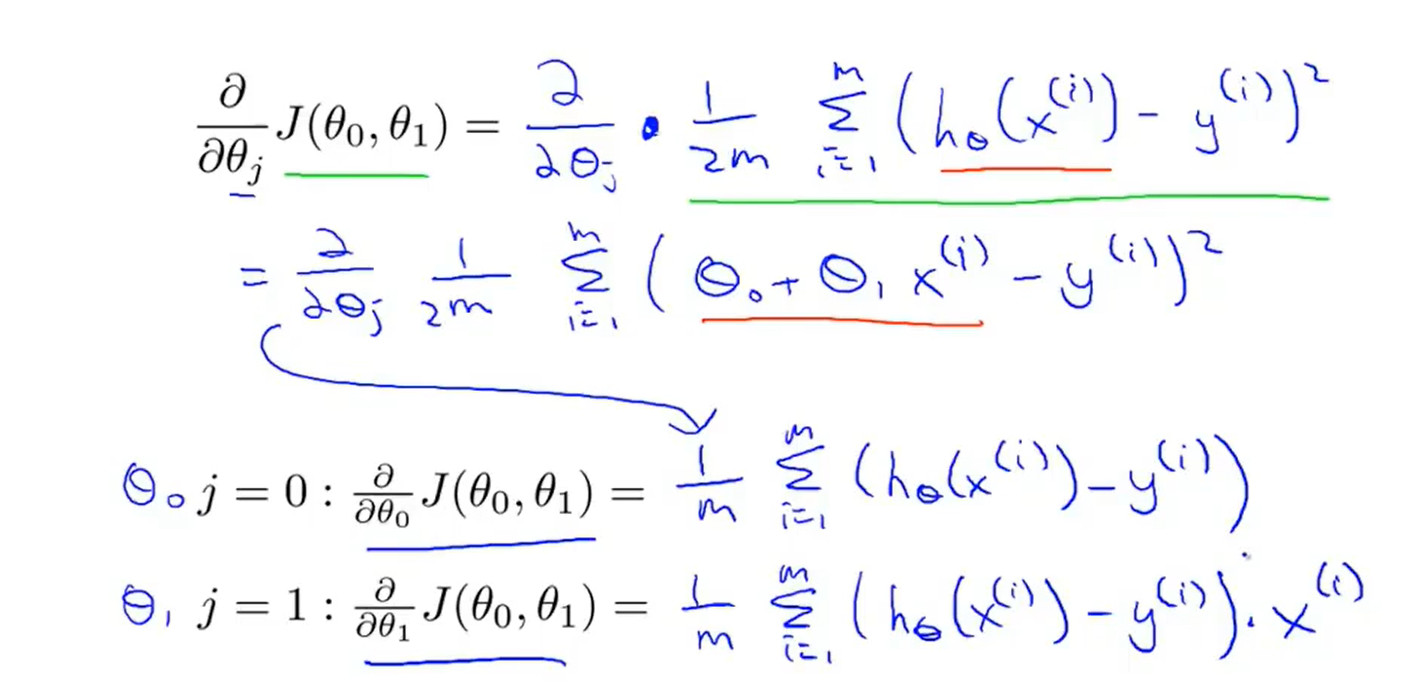

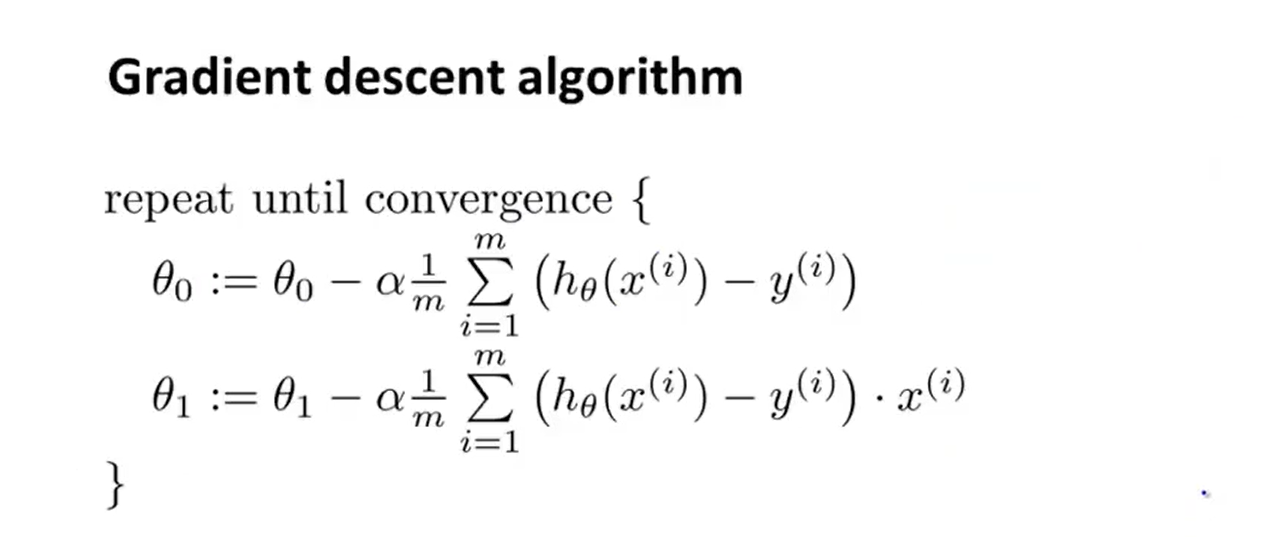

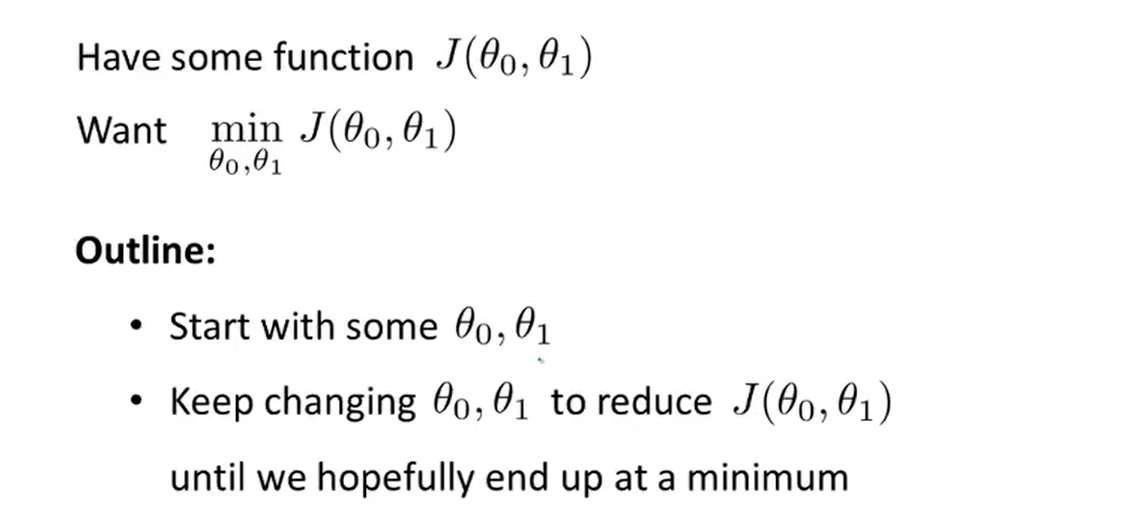

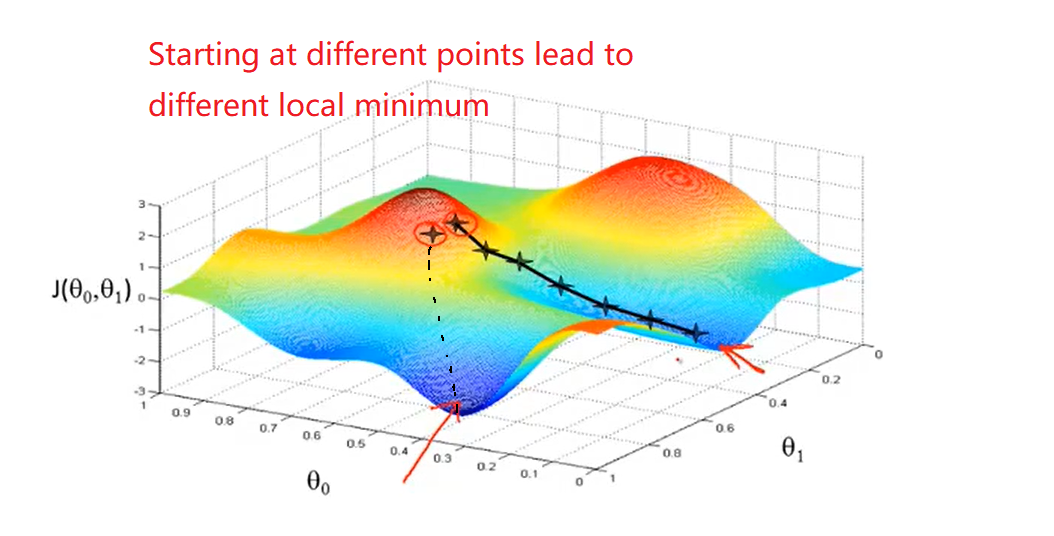

3、Gradient descent

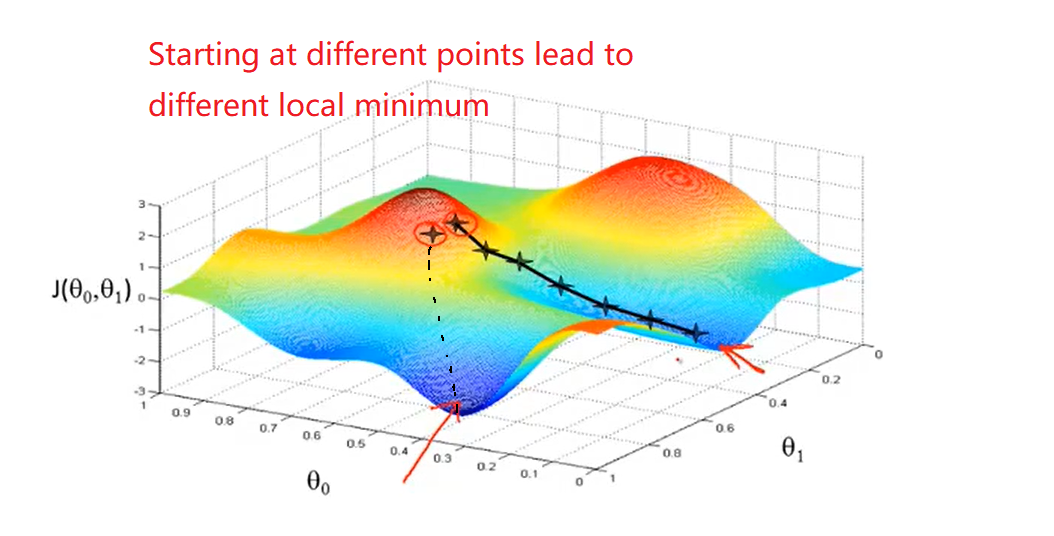

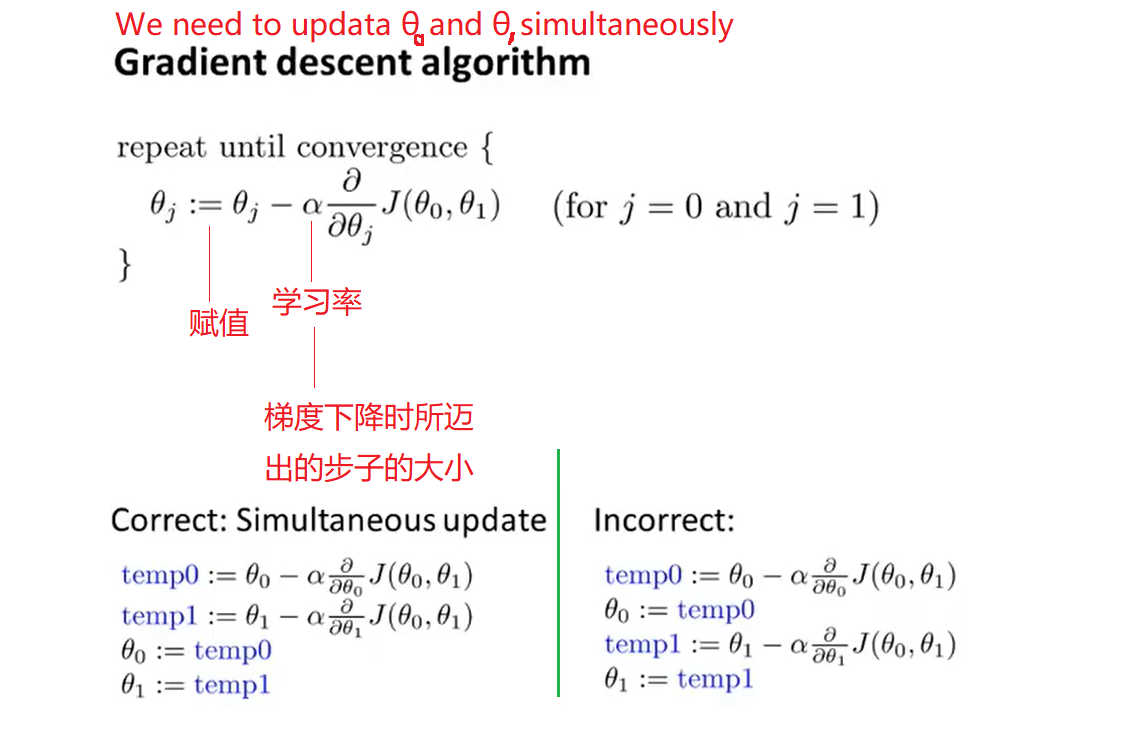

- Gradient descent is a general algorithm that is used well beyond linear regression. Here I introduce how to use it to minimize an arbitrary function J.

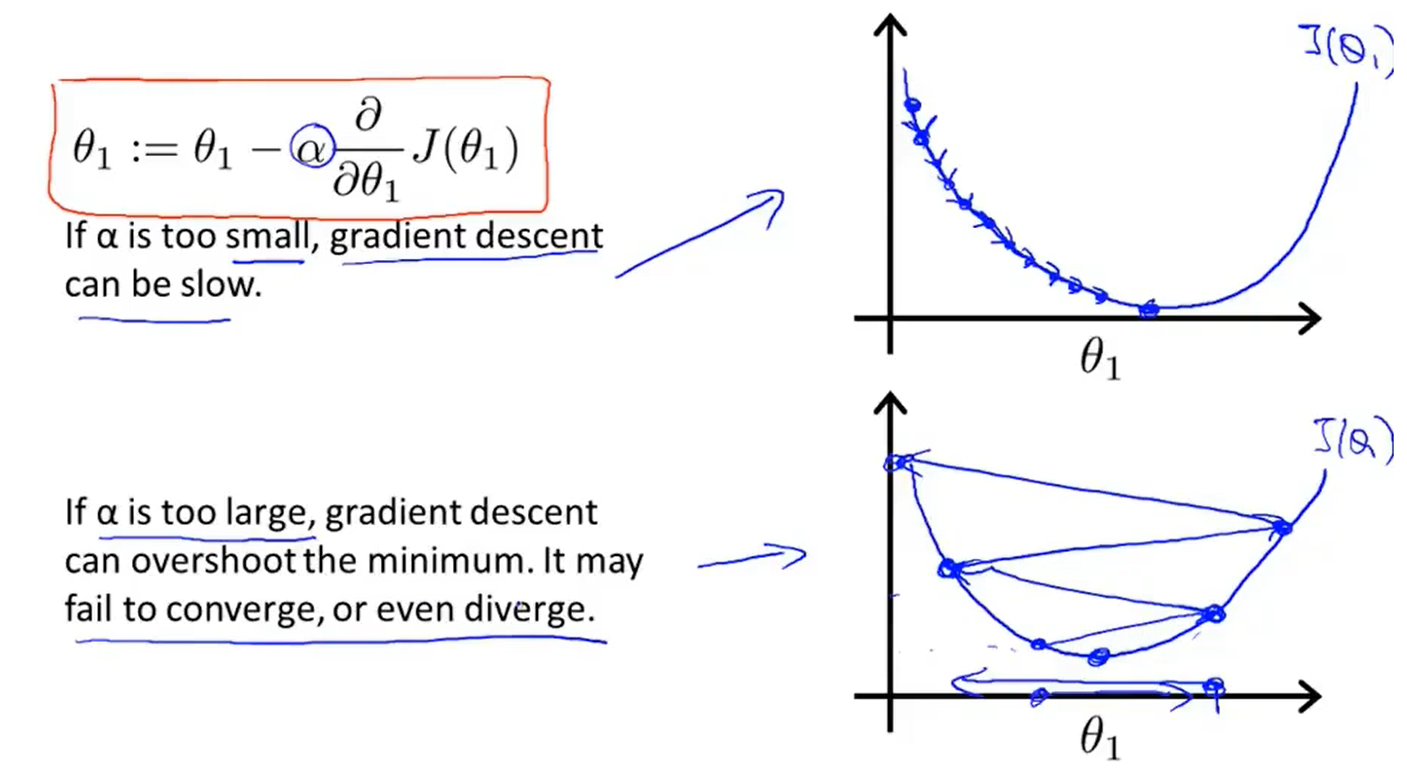

- The formula of the batch gradient descent algorithm:

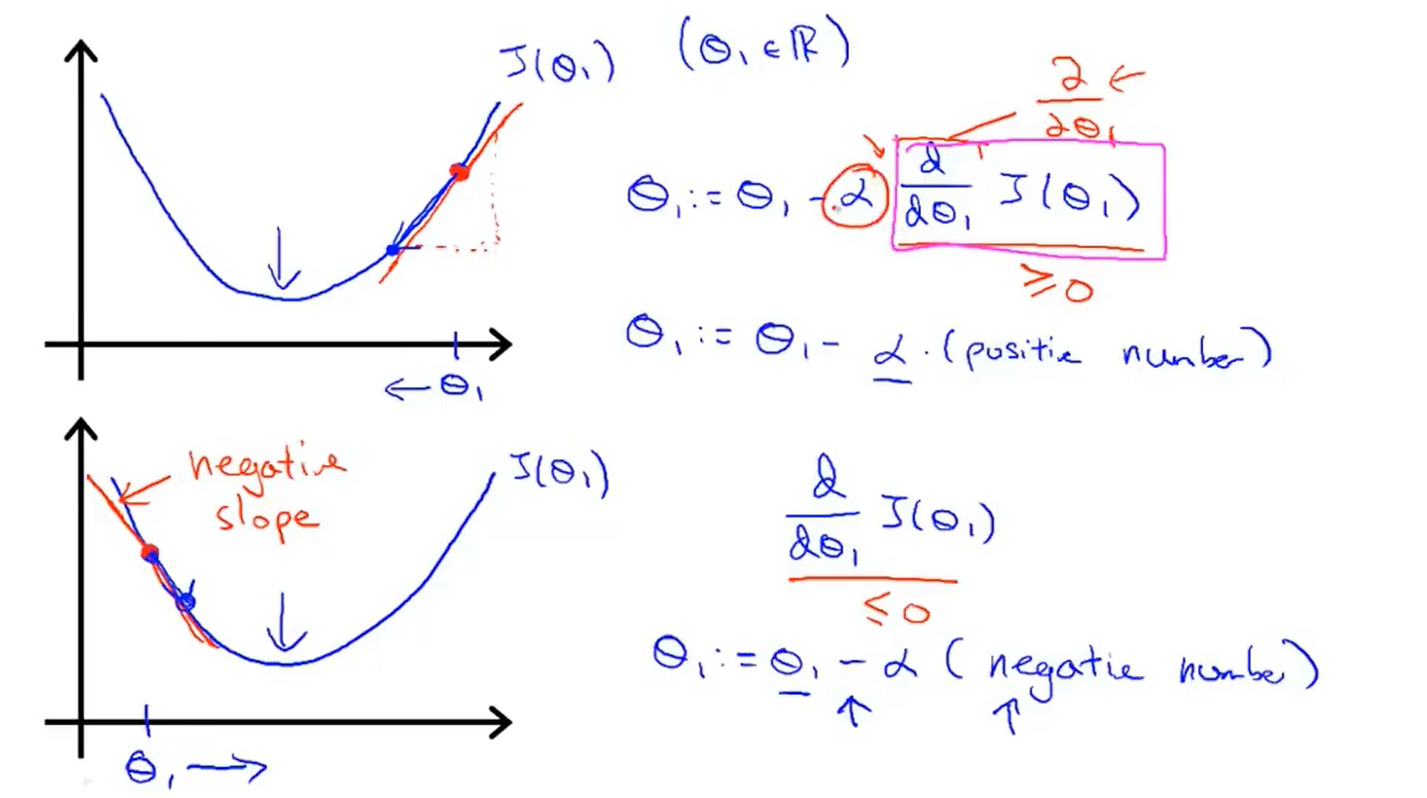
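For reference, the standard batch gradient descent update for two parameters, which is presumably what the figures in this section show, can be written as follows. Here α is the learning rate, and both parameters are updated simultaneously in each iteration.

$$
\text{repeat until convergence:} \quad
\theta_j := \theta_j - \alpha \, \frac{\partial}{\partial \theta_j} J(\theta_0, \theta_1)
\qquad (j = 0 \text{ and } j = 1)
$$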

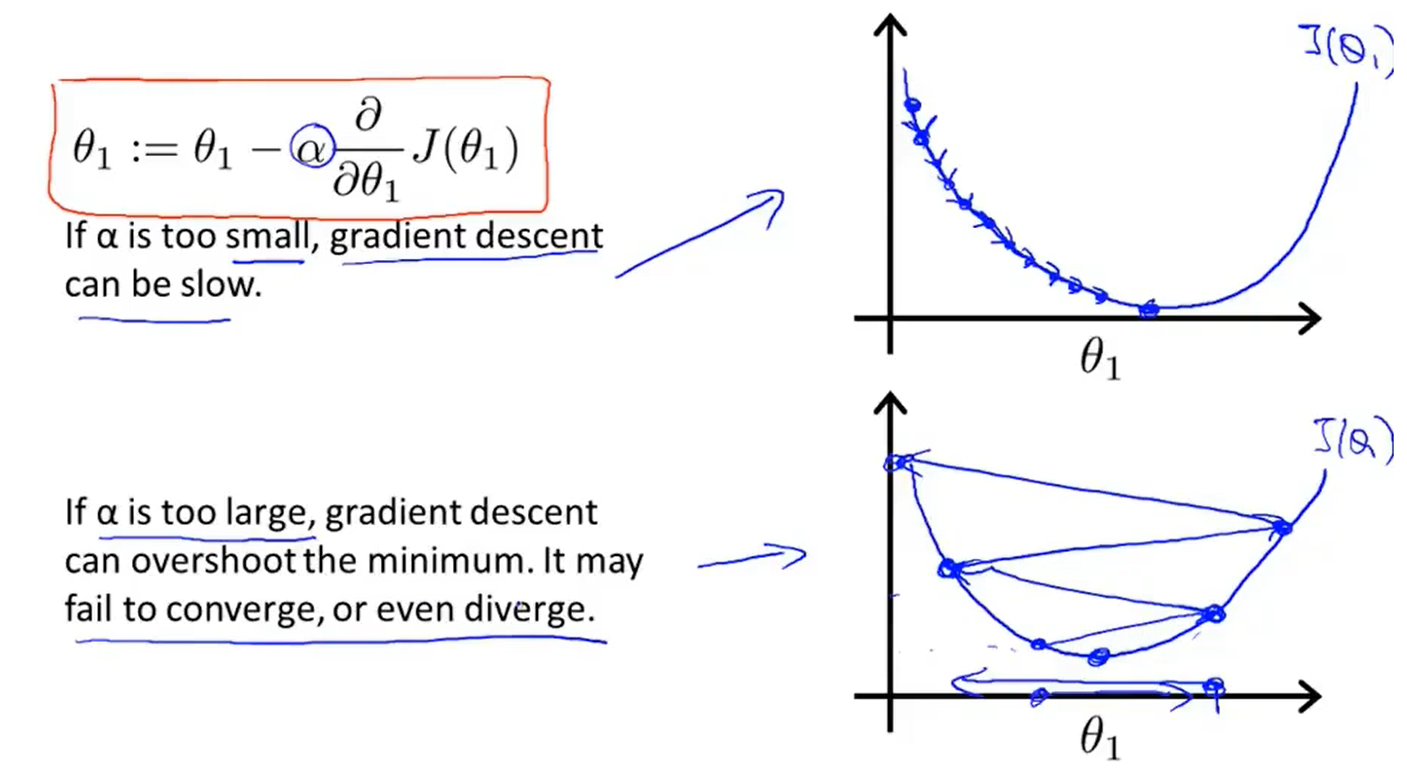

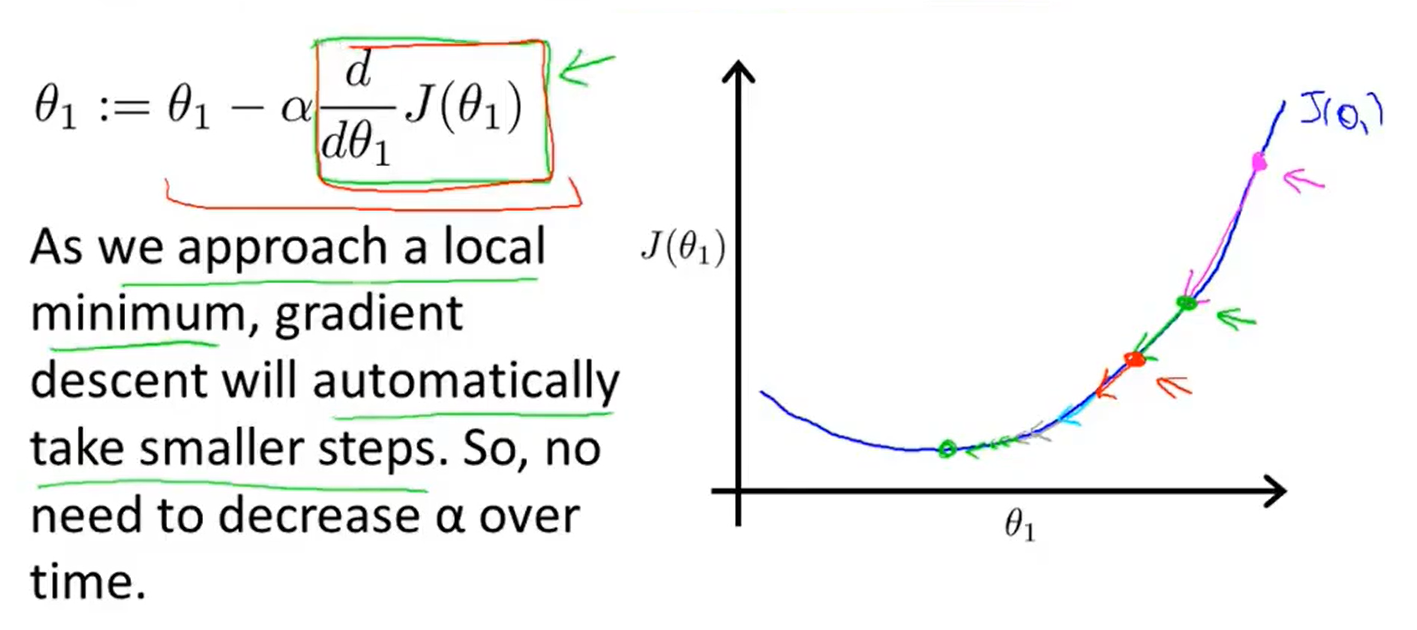

4、Gradient descent intuition

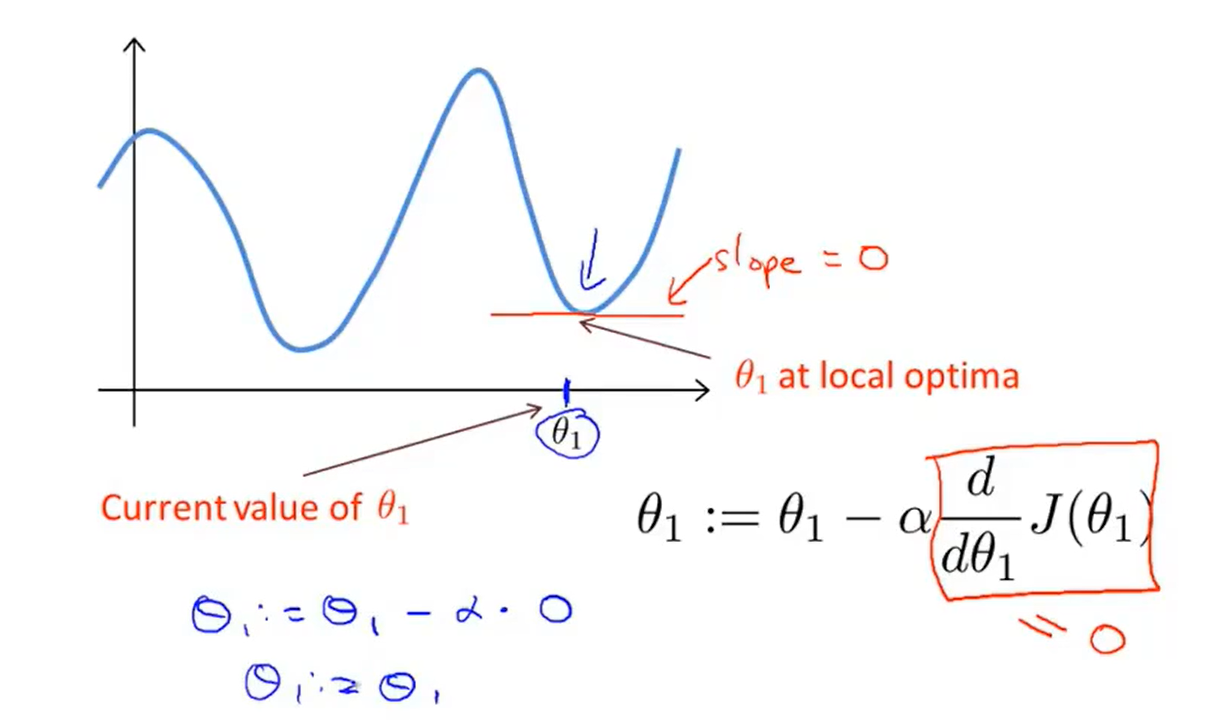

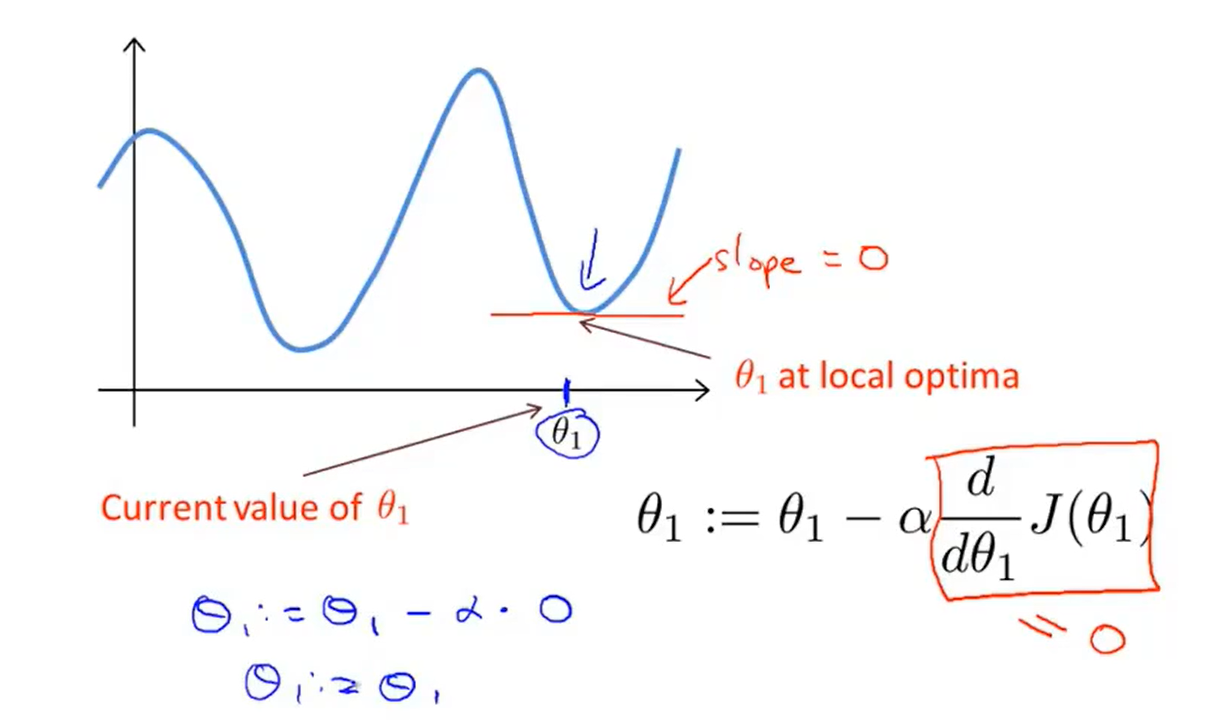

- But what if the parameter θ1 is already at a local minimum? The derivative there is zero, so the update leaves θ1 unchanged.

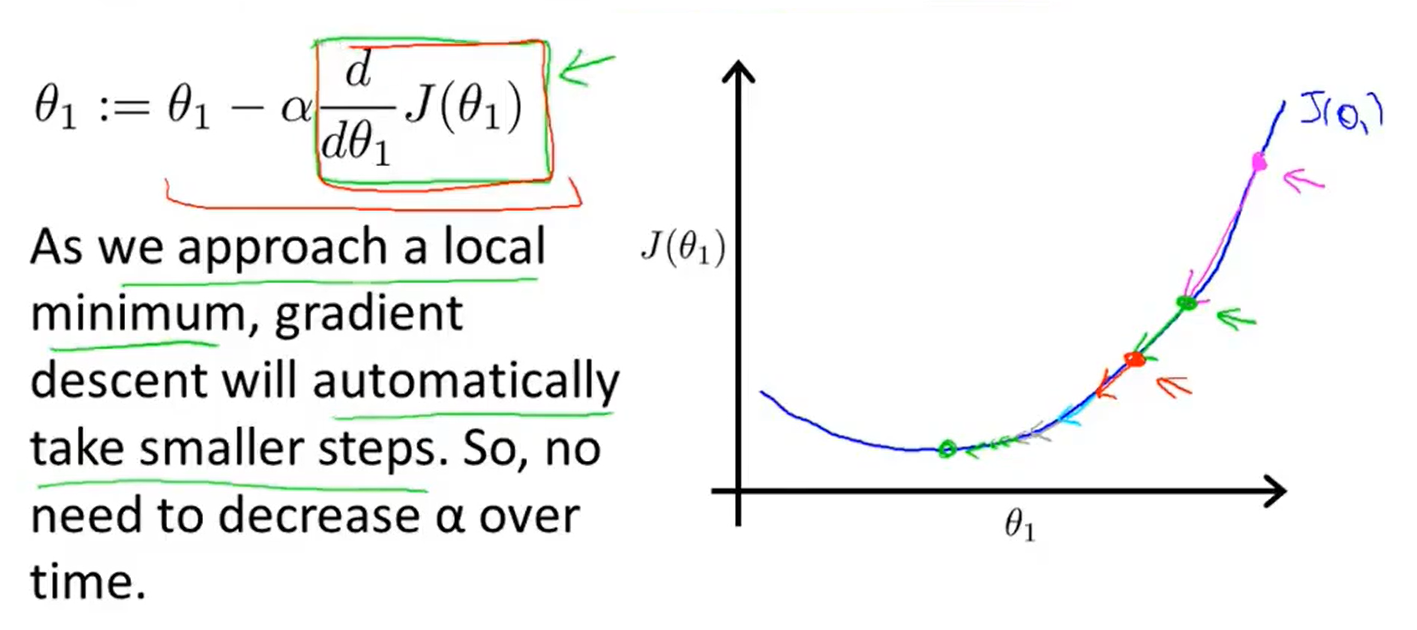

- Gradient descent can converge to a local minimum even with a fixed learning rate α: as we approach the minimum the derivative shrinks, so the steps automatically become smaller.
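A small sketch of this behavior, assuming a toy cost J(θ) = θ² whose derivative is 2θ. The cost function, starting point, and learning rate are hypothetical choices for illustration.

```python
def derivative(theta):
    """dJ/dtheta for the toy cost J(theta) = theta ** 2."""
    return 2.0 * theta

alpha = 0.1   # fixed learning rate, never changed inside the loop
theta = 1.0   # hypothetical starting point
step_sizes = []
for _ in range(5):
    step = alpha * derivative(theta)
    theta -= step
    step_sizes.append(abs(step))

print(step_sizes)
print(theta)
```

Each step is smaller than the one before it even though α never changes, because the derivative shrinks as θ approaches the minimum at 0.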

5、Gradient descent for linear regression
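Putting the pieces together, here is a minimal sketch of batch gradient descent applied to single-variable linear regression. It assumes the squared error cost, whose partial derivatives are (1/m)·Σ(h(x) − y) for θ0 and (1/m)·Σ(h(x) − y)·x for θ1. The function name, learning rate, iteration count, and dataset are hypothetical.

```python
def gradient_descent(xs, ys, alpha=0.05, iterations=5000):
    """Fit h(x) = theta0 + theta1 * x by batch gradient descent."""
    m = len(xs)
    theta0, theta1 = 0.0, 0.0
    for _ in range(iterations):
        # "Batch": every step uses all m training examples.
        errors = [(theta0 + theta1 * x) - y for x, y in zip(xs, ys)]
        grad0 = sum(errors) / m
        grad1 = sum(e * x for e, x in zip(errors, xs)) / m
        # Simultaneous update: both gradients were computed from the old values.
        theta0, theta1 = theta0 - alpha * grad0, theta1 - alpha * grad1
    return theta0, theta1

# Hypothetical training set generated from y = 1 + 2x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]
theta0, theta1 = gradient_descent(xs, ys)
print(theta0, theta1)
```

Because the linear regression cost is convex, the parameters approach the single global minimum (here close to θ0 = 1 and θ1 = 2) as long as α is small enough for the updates not to diverge.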

Machine learning (2 - Linear regression with one variable)

Original post: https://www.cnblogs.com/wangzheming35/p/14861404.html